## Line Chart: ARC-C Accuracy vs. Training Data Percentage

### Overview

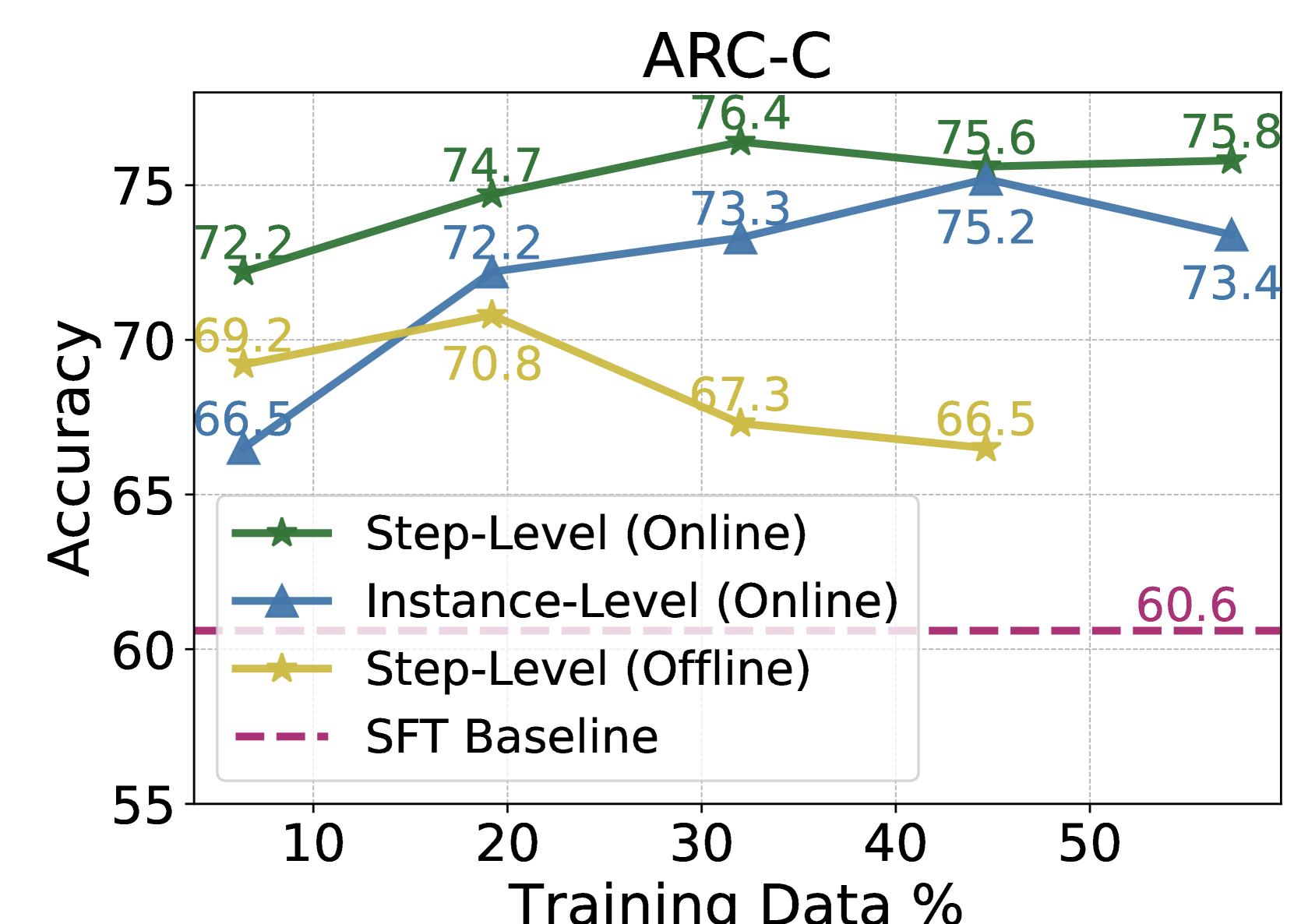

This line chart displays the accuracy of different training methods (Step-Level Online, Instance-Level Online, Step-Level Offline, and SFT Baseline) on the ARC-C dataset as a function of the percentage of training data used. The chart aims to demonstrate how performance scales with increasing data availability for each method.

### Components/Axes

* **Title:** ARC-C (positioned at the top-center)

* **X-axis:** Training Data % (ranging from approximately 5% to 55%, with markers at 10, 20, 30, 40, and 50)

* **Y-axis:** Accuracy (ranging from approximately 55 to 80, with markers at 60, 65, 70, 75)

* **Legend:** Located at the bottom-left corner, with the following labels and colors:

    * Step-Level (Online): Green

    * Instance-Level (Online): Blue

    * Step-Level (Offline): Yellow/Orange

    * SFT Baseline: Magenta/Purple (dashed line)

### Detailed Analysis

The chart contains four lines, one per training method. All values are approximate readings from the chart.

* **Step-Level (Online) - Green:** This line rises steeply from approximately 69.2 at 10% training data to a peak of approximately 76.4 at 30%, then levels off at approximately 75.6 (40%) and 75.8 (50%). Specific data points are:

    * 10%: 69.2

    * 20%: 74.7

    * 30%: 76.4

    * 40%: 75.6

    * 50%: 75.8

* **Instance-Level (Online) - Blue:** This line is flat at approximately 72.2 for 10% and 20% training data, rises to a peak of approximately 75.2 at 40%, and then drops to approximately 73.4 at 50%. Specific data points are:

    * 10%: 72.2

    * 20%: 72.2

    * 30%: 73.3

    * 40%: 75.2

    * 50%: 73.4

* **Step-Level (Offline) - Yellow/Orange:** This line rises and then falls. It starts at approximately 66.5 at 10% training data, peaks at approximately 70.8 at 20%, drops to approximately 67.3 at 30%, and returns to approximately 66.5 at 40% and 50%. Specific data points are:

    * 10%: 66.5

    * 20%: 70.8

    * 30%: 67.3

    * 40%: 66.5

    * 50%: 66.5

* **SFT Baseline - Magenta/Purple (dashed line):** This line is horizontal, indicating a constant accuracy of approximately 60.6 across all training data percentages.
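The trends described above can be checked with a short script. The following is a minimal sketch that assumes the approximate values read from the chart; the names `DATA_PCT`, `ACCURACY`, `SFT_BASELINE`, and `summarize` are illustrative and do not come from the original source.

```python
# Approximate values transcribed from the data points listed above.
DATA_PCT = [10, 20, 30, 40, 50]
ACCURACY = {
    "Step-Level (Online)": [69.2, 74.7, 76.4, 75.6, 75.8],
    "Instance-Level (Online)": [72.2, 72.2, 73.3, 75.2, 73.4],
    "Step-Level (Offline)": [66.5, 70.8, 67.3, 66.5, 66.5],
}
SFT_BASELINE = 60.6  # horizontal dashed reference line


def summarize(values, pcts=DATA_PCT):
    """Return the peak accuracy, where it occurs, and the net change from 10% to 50%."""
    peak = max(values)
    return {
        "peak": peak,
        "peak_at": pcts[values.index(peak)],
        "net_change": round(values[-1] - values[0], 1),
    }


if __name__ == "__main__":
    for method, values in ACCURACY.items():
        s = summarize(values)
        print(f"{method}: peak {s['peak']} at {s['peak_at']}%, "
              f"net change {s['net_change']:+.1f}")
```

Under these readings, none of the three curves peaks at 50% training data, and the Step-Level (Offline) curve ends where it started.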

### Key Observations

* The "Step-Level (Online)" and "Instance-Level (Online)" methods consistently outperform the "Step-Level (Offline)" method and the "SFT Baseline."

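* The "Step-Level (Offline)" method improves by about 4.3 points from 10% to 20% training data, then loses most of that gain at 30% and returns to its starting accuracy of approximately 66.5 at 40% and 50%.

* The "SFT Baseline" is drawn as a horizontal dashed line at approximately 60.6, which suggests it serves as a fixed reference rather than a method evaluated at each training data percentage.

* The "Instance-Level (Online)" method shows a relatively flat curve: its values stay within a range of about 3 points (72.2 to 75.2), and its net gain from 10% to 50% is only about 1.2 points.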
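The ordering claims in these observations can be verified point by point. This is a minimal sketch under the assumption that the approximate chart readings are accurate; the helper names `dominates` and `best_per_level` are hypothetical and introduced only for illustration.

```python
# Approximate values transcribed from the chart (accuracy at 10%..50% training data).
DATA_PCT = [10, 20, 30, 40, 50]
STEP_ONLINE = [69.2, 74.7, 76.4, 75.6, 75.8]
INSTANCE_ONLINE = [72.2, 72.2, 73.3, 75.2, 73.4]
STEP_OFFLINE = [66.5, 70.8, 67.3, 66.5, 66.5]
SFT_BASELINE = 60.6

CURVES = {
    "Step-Level (Online)": STEP_ONLINE,
    "Instance-Level (Online)": INSTANCE_ONLINE,
    "Step-Level (Offline)": STEP_OFFLINE,
}


def dominates(upper, lower):
    """True if `upper` is strictly higher than `lower` at every data level."""
    return all(u > l for u, l in zip(upper, lower))


def best_per_level(curves, pcts):
    """Map each training data percentage to the method with the highest accuracy."""
    return {
        pct: max(curves, key=lambda name: curves[name][i])
        for i, pct in enumerate(pcts)
    }


if __name__ == "__main__":
    print(dominates(STEP_ONLINE, STEP_OFFLINE))       # online vs. offline
    print(dominates(INSTANCE_ONLINE, STEP_OFFLINE))   # online vs. offline
    print(dominates(STEP_ONLINE, INSTANCE_ONLINE))    # between the two online methods
    print(best_per_level(CURVES, DATA_PCT))
```

With these readings, both online methods sit above the offline method at every data level, but neither online method dominates the other: Instance-Level (Online) leads at 10%, and Step-Level (Online) leads from 20% onward.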

### Interpretation

The data suggests that online training methods ("Step-Level (Online)" and "Instance-Level (Online)") are more effective than offline training ("Step-Level (Offline)") and the "SFT Baseline" on the ARC-C dataset: both online curves lie above the offline curve at every training data percentage shown, and all three lie above the baseline of approximately 60.6. The "Step-Level (Online)" method achieves the highest accuracy from 20% training data onward, peaking at approximately 76.4 at 30%; at 10%, however, "Instance-Level (Online)" leads (72.2 vs. 69.2). The "Instance-Level (Online)" method improves more modestly, with a net gain of about 1.2 points between 10% and 50%, while the "Step-Level (Offline)" method peaks at 20% and then falls back to its starting accuracy. Because the "SFT Baseline" appears only as a constant reference line, the chart alone does not show how it would respond to more training data. Both online curves flatten or dip after their peaks (30% for Step-Level, 40% for Instance-Level), which suggests diminishing returns from additional training data; this could be due to the model reaching its capacity or the added data becoming redundant. The rise and fall of the "Step-Level (Offline)" curve could indicate overfitting, although the chart does not provide enough information to confirm the cause.