## Screenshot: Example of a Confidently Wrong Language Model Answer

### Overview

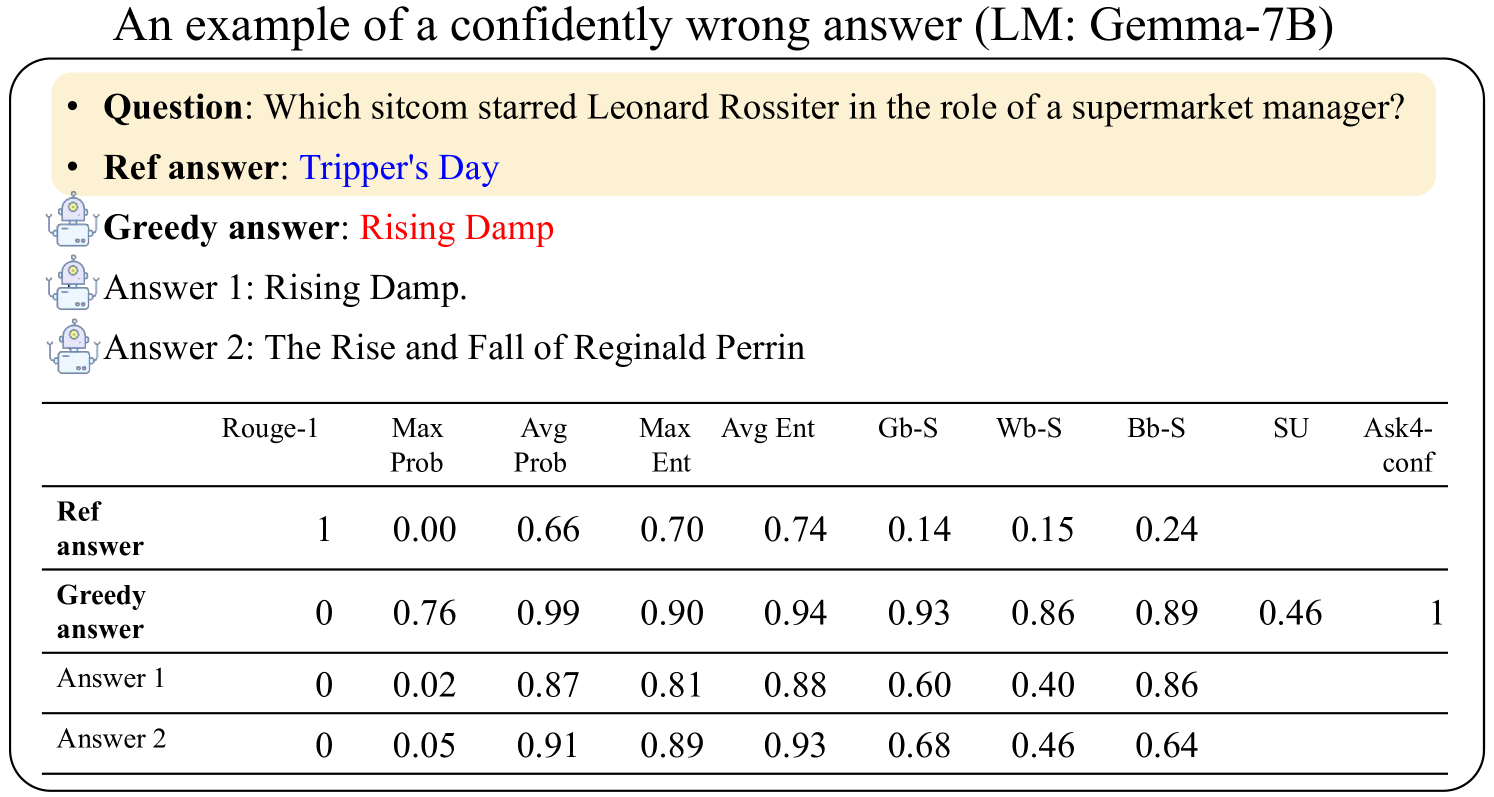

This image is a figure, likely from a research paper or technical report, illustrating an example where a language model (LM: Gemma-7B) provides an incorrect answer with high confidence. It presents a question, a reference answer, several model-generated answers, and a table of associated confidence and similarity metrics.

### Components/Axes

The image is structured into three main regions:

1. **Header/Title:** "An example of a confidently wrong answer (LM: Gemma-7B)"

2. **Question & Answer Block:** A beige-colored box containing the question and reference answer, followed by three model-generated answers.

3. **Metrics Table:** A data table comparing various metrics across the reference answer and the model-generated answers.

**Textual Content (Transcribed):**

* **Title:** An example of a confidently wrong answer (LM: Gemma-7B)

* **Question:** Which sitcom starred Leonard Rossiter in the role of a supermarket manager?

* **Ref answer:** Tripper's Day

* **Greedy answer:** Rising Damp

* **Answer 1:** Rising Damp.

* **Answer 2:** The Rise and Fall of Reginald Perrin

**Table Structure:**

The table has 10 metric columns plus a leading row-label column, and 5 rows (including the header row).

* **Column Headers (Metrics):** Rouge-1, Max Prob, Avg Prob, Max Ent, Avg Ent, Gb-S, Wb-S, Bb-S, SU, Ask4-conf

* **Row Headers (Answer Types):** Ref answer, Greedy answer, Answer 1, Answer 2

### Detailed Analysis

**Table Data Reconstruction:**

| Answer Type | Rouge-1 | Max Prob | Avg Prob | Max Ent | Avg Ent | Gb-S | Wb-S | Bb-S | SU | Ask4-conf |
|---------------|---------|----------|----------|---------|---------|------|------|------|------|-----------|
| **Ref answer** | 1 | 0.00 | 0.66 | 0.70 | 0.74 | 0.14 | 0.15 | 0.24 | | |
| **Greedy answer** | 0 | 0.76 | 0.99 | 0.90 | 0.94 | 0.93 | 0.86 | 0.89 | 0.46 | 1 |
| **Answer 1** | 0 | 0.02 | 0.87 | 0.81 | 0.88 | 0.60 | 0.40 | 0.86 | | |
| **Answer 2** | 0 | 0.05 | 0.91 | 0.89 | 0.93 | 0.68 | 0.46 | 0.64 | | |

*Note: Empty cells in the original table are represented as blank.*

### Key Observations

1. **Confidence vs. Accuracy Discrepancy:** The "Greedy answer" (Rising Damp) is incorrect (Rouge-1 = 0) but exhibits extremely high confidence scores: Max Prob (0.76), Avg Prob (0.99), and a perfect Ask4-conf score of 1.

2. **Reference Answer Profile:** The correct "Ref answer" (Tripper's Day) has a perfect Rouge-1 score of 1 but the lowest value in every other reported column: Max Prob = 0.00, Avg Prob = 0.66, Max Ent = 0.70, Avg Ent = 0.74, and similarity scores of 0.14 (Gb-S), 0.15 (Wb-S), and 0.24 (Bb-S).

3. **Alternative Answers:** "Answer 1" and "Answer 2" are also incorrect (Rouge-1 = 0). They show high average probabilities (0.87, 0.91) and entropy scores, but lower maximum probabilities compared to the greedy answer.

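4. **Metric Patterns:** Among the three incorrect answers, Gb-S and Wb-S follow the same ordering as Avg Prob and Avg Ent (Greedy answer, then Answer 2, then Answer 1). Bb-S breaks this pattern: Answer 1 (0.86) scores higher than Answer 2 (0.64). The "Greedy answer" has the highest value in every column from Max Prob through Bb-S, and it is the only row with SU and Ask4-conf values.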
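The observations above can be checked mechanically against the transcribed values. The following is a minimal sketch, not part of the original figure: the names `METRICS`, `TABLE`, `top_scorer`, and `leaders` are hypothetical, and blank cells are encoded as `None`.

```python
# Values transcribed from the reconstructed table; None marks a blank cell.
METRICS = ["Rouge-1", "Max Prob", "Avg Prob", "Max Ent", "Avg Ent",
           "Gb-S", "Wb-S", "Bb-S", "SU", "Ask4-conf"]

TABLE = {
    "Ref answer":    [1, 0.00, 0.66, 0.70, 0.74, 0.14, 0.15, 0.24, None, None],
    "Greedy answer": [0, 0.76, 0.99, 0.90, 0.94, 0.93, 0.86, 0.89, 0.46, 1],
    "Answer 1":      [0, 0.02, 0.87, 0.81, 0.88, 0.60, 0.40, 0.86, None, None],
    "Answer 2":      [0, 0.05, 0.91, 0.89, 0.93, 0.68, 0.46, 0.64, None, None],
}

# Columns reported for all four rows, excluding the correctness metric.
SHARED_METRICS = [m for m in METRICS if m not in ("Rouge-1", "SU", "Ask4-conf")]


def top_scorer(metric):
    """Return the row label with the highest reported value for `metric`."""
    col = METRICS.index(metric)
    reported = {row: vals[col] for row, vals in TABLE.items()
                if vals[col] is not None}
    return max(reported, key=reported.get)


def leaders(metrics):
    """Map each metric name to the row label that scores highest on it."""
    return {metric: top_scorer(metric) for metric in metrics}


if __name__ == "__main__":
    for metric, row in leaders(SHARED_METRICS).items():
        print(f"{metric:>9}: {row}")
```

Under these transcribed values, the greedy answer is the top scorer on every shared column, while the reference answer is the top scorer only on Rouge-1.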

### Interpretation

This figure serves as a clear case study of a failure mode in language models: generating plausible but factually incorrect outputs with high internal confidence.

* **What the data demonstrates:** The model (Gemma-7B) assigns very high probability to the token sequence "Rising Damp," despite it being the wrong answer to the factual question. The reference answer, while correct, receives low probability from the model, suggesting the model's internal knowledge or scoring is misaligned with factual truth for this instance.

* **Relationship between elements:** The table quantifies the model's misplaced confidence. Metrics like `Max Prob`, `Avg Prob`, and `Ask4-conf` are high for the wrong answer, while the correct answer has the lowest `Max Prob` (0.00) and `Avg Prob` (0.66) in the table and no reported `Ask4-conf` value. The `Rouge-1` metric, which measures unigram (single-word) overlap with the reference, scores the greedy answer 0 and the reference 1.

* **Notable anomaly:** The most striking anomaly is the `Ask4-conf` value of **1** for the "Greedy answer." This suggests that when asked to express confidence, the model was maximally confident in its incorrect response. This highlights a critical challenge in AI safety and reliability: a model can be both wrong and certain about it.

* **Underlying implication:** The figure argues that probability and self-reported confidence scores alone are not enough to evaluate model outputs, especially for factual queries. They need to be complemented by checks against ground truth, such as reference-based metrics like `Rouge-1` or external fact-checking. It visually underscores the problem of "hallucination" or confident confabulation in LLMs.
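To make the `Rouge-1` column concrete, here is a minimal sketch of a unigram-overlap F1 score. It assumes lowercase tokenization on letters, digits, and apostrophes, which may differ from the tokenizer and ROUGE variant used to produce the figure. The function name `rouge1_f1` is hypothetical.

```python
import re
from collections import Counter


def rouge1_f1(candidate, reference):
    """Unigram-overlap F1 between two strings (simplified ROUGE-1).

    Assumption: tokens are lowercase runs of letters, digits, and
    apostrophes; official ROUGE implementations may tokenize differently.
    """
    cand = Counter(re.findall(r"[a-z0-9']+", candidate.lower()))
    ref = Counter(re.findall(r"[a-z0-9']+", reference.lower()))
    # Multiset intersection counts each shared token at most min(count) times.
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    reference = "Tripper's Day"
    for answer in ["Tripper's Day", "Rising Damp", "Rising Damp.",
                   "The Rise and Fall of Reginald Perrin"]:
        print(f"{answer!r}: {rouge1_f1(answer, reference)}")
```

None of the three generated answers shares a word with "Tripper's Day", so each scores 0 under this sketch, matching the Rouge-1 column in the table. This also shows the metric's limits: it detects word overlap with a reference, not factual correctness as such.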