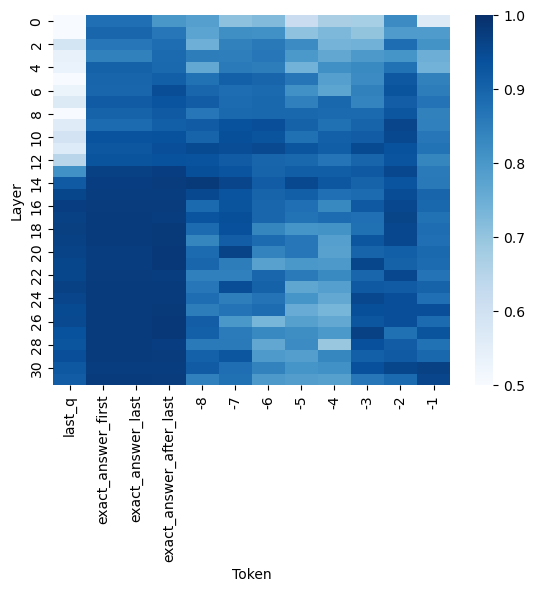

## Heatmap: Layer-Token Correlation Matrix

### Overview

This image is a heatmap showing a correlation value for each combination of "Token" (X-axis) and neural network "Layer" (Y-axis). Color intensity encodes the value of each cell, with a scale provided on the right. The chart appears to analyze the internal representations of specific tokens within a language model's processing layers.

### Components/Axes

* **Chart Type:** Heatmap (2D grid of colored cells).

* **Y-Axis (Vertical):** Labeled **"Layer"**. It has numerical markers from **0 to 30** in increments of 2 (0, 2, 4, ..., 30). This represents the depth or layer number within a neural network. Layer 0 is at the top of the chart and Layer 30 at the bottom, so depth increases downward.

* **X-Axis (Horizontal):** Labeled **"Token"**. It contains 12 categorical labels. From left to right:

    1. `last_q`

    2. `exact_answer_first`

    3. `exact_answer_last`

    4. `exact_answer_after_last`

    5. `-8`

    6. `-7`

    7. `-6`

    8. `-5`

    9. `-4`

    10. `-3`

    11. `-2`

    12. `-1`

* **Legend/Color Scale:** Positioned vertically on the **right side** of the chart. It is a gradient bar labeled from **0.5** (bottom, lightest blue/white) to **1.0** (top, darkest blue). The scale indicates the correlation value, where darker blue signifies a higher correlation (closer to 1.0) and lighter blue/white signifies a lower correlation (closer to 0.5).
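
The axes and color scale described above can be written down as a small sketch. The names `TOKEN_LABELS`, `LAYER_TICKS`, and `color_intensity` are hypothetical, and the linear mapping from value to shade is an assumption: the description does not say which colormap or normalization produced the figure.

```python
# Axis labels as listed in the description (left to right on the X-axis).
TOKEN_LABELS = [
    "last_q",
    "exact_answer_first",
    "exact_answer_last",
    "exact_answer_after_last",
] + [str(i) for i in range(-8, 0)]  # "-8" ... "-1"

# Y-axis tick marks: 0, 2, 4, ..., 30.
LAYER_TICKS = list(range(0, 31, 2))

# Ends of the color scale as read from the legend.
VMIN, VMAX = 0.5, 1.0


def color_intensity(value, vmin=VMIN, vmax=VMAX):
    """Map a cell value to [0, 1]: 0 is the lightest shade, 1 the darkest blue.

    Assumes a linear scale; values outside [vmin, vmax] are clipped.
    """
    clipped = min(max(value, vmin), vmax)
    return (clipped - vmin) / (vmax - vmin)
```

Under this assumed linear scale, a cell at 0.75 sits halfway between the lightest and darkest shade, which is one reason values near the 0.5 floor are hard to tell apart by eye.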

### Detailed Analysis

The heatmap displays a grid of 31 rows (Layers 0-30) by 12 columns (Tokens). Each cell's color corresponds to a correlation value based on the legend.

**Trend Verification & Data Point Analysis:**

1. **First Four Tokens (`last_q`, `exact_answer_first`, `exact_answer_last`, `exact_answer_after_last`):**

    * **Visual Trend:** These columns form a distinct, dark blue block, especially from Layer 12 through Layer 30 (the lower part of the chart). The correlation is consistently high.

    * **Data Points:** The cells for these tokens are predominantly dark blue, indicating correlation values **approximately between 0.85 and 1.0** across most layers. The highest correlations (darkest blue, ~0.95-1.0) are concentrated in the deeper layers (Layers ~12-30), which occupy the lower half of the chart. Layers 0-10 show slightly lighter shades, suggesting correlations in the **~0.75-0.90** range for these tokens.

2. **Numbered Tokens (`-8` to `-1`):**

    * **Visual Trend:** These columns show more variability. Correlation is generally higher in the middle layers (approx. Layers 8-20) and lower in the very early (0-6) and very late (24-30) layers. The columns for `-4`, `-3`, `-2`, and `-1` appear slightly darker on average than `-8` through `-5`.

    * **Data Points:**

        * **Mid-Layer Peak:** For tokens `-8` to `-1`, the darkest cells (highest correlation, **~0.80-0.90**) are found roughly between **Layers 8 and 20**.

        * **Early/Late Layer Troughs:** The lightest cells (lowest correlation, **~0.50-0.70**) for these tokens are in **Layers 0-6** and **Layers 24-30**.

        * **Token Variation:** Tokens `-4`, `-3`, `-2`, `-1` maintain slightly higher correlations in the later layers (20-30) compared to tokens `-8`, `-7`, `-6`, `-5`.
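
If the underlying values were available as a 31 × 12 grid, the band-level readings above could be checked numerically instead of by eye. The following is a minimal sketch under that assumption. `band_mean`, `ANSWER_COLS`, `NUMBERED_COLS`, and `toy_value` are hypothetical names, and the toy grid holds made-up placeholder values, not measurements taken from the figure.

```python
from statistics import mean

ANSWER_COLS = range(0, 4)     # last_q, exact_answer_first/last/after_last
NUMBERED_COLS = range(4, 12)  # -8 ... -1


def band_mean(grid, layers, cols):
    """Average grid[layer][col] over the given layers and token columns."""
    return mean(grid[layer][col] for layer in layers for col in cols)


def toy_value(layer, col):
    """Placeholder values only, chosen to mimic the described pattern."""
    if col < 4:
        return 0.9
    return 0.85 if 8 <= layer <= 20 else 0.6


# 31 rows (Layers 0-30) by 12 columns (tokens), filled with placeholders.
toy_grid = [[toy_value(layer, col) for col in range(12)] for layer in range(31)]

mid_numbered = band_mean(toy_grid, range(8, 21), NUMBERED_COLS)
late_numbered = band_mean(toy_grid, range(24, 31), NUMBERED_COLS)
all_answer = band_mean(toy_grid, range(31), ANSWER_COLS)
```

With real data in place of `toy_grid`, comparing `mid_numbered` against `late_numbered` would confirm or refute the mid-layer peak described for the numbered tokens.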

### Key Observations

1. **Two Token Groups:** The heatmap reveals two distinct behavioral groups: the "answer-related" tokens (`last_q`, `exact_answer_*`) and the "positional" tokens (`-8` to `-1`).

2. **Layer-Dependent Correlation:** Correlation strength is not uniform across the network. For the positional tokens, it follows an inverted-U shape, peaking in the middle layers. For the answer tokens, it generally increases with depth.

3. **High Answer Token Consistency:** The first four tokens maintain very high correlation values throughout the network, most of all in deeper layers. Each cell is a single per-token, per-layer value, so the chart by itself does not show how strongly these four tokens correlate with one another.

4. **Spatial Grounding:** The legend is on the right. The darkest region of the entire chart is the lower-left quadrant (Layers 12-30, the first four token columns). The lightest regions are the top rows (Layers 0-4), where every column is lighter than in its deeper layers, and the bottom rows (Layers 24-30) of the numbered-token columns.
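
The "inverted-U" versus "increases with depth" distinction can be made concrete with a simple heuristic on one token column at a time. This is an illustrative sketch only: `peak_layer` and `describe_profile` are hypothetical helpers, and the `edge` threshold is an arbitrary assumption, not something derived from the figure.

```python
def peak_layer(column):
    """Return the layer index with the highest value (first one on ties)."""
    return max(range(len(column)), key=lambda layer: column[layer])


def describe_profile(column, edge=4):
    """Classify a column by where its peak sits along the depth axis.

    'rising'     : peak within `edge` layers of the last layer
    'falling'    : peak within `edge` layers of the first layer
    'inverted-U' : peak somewhere in between
    """
    peak = peak_layer(column)
    last = len(column) - 1
    if peak >= last - edge:
        return "rising"
    if peak <= edge:
        return "falling"
    return "inverted-U"


# Made-up columns for illustration, not values read from the figure.
hump = [0.6, 0.7, 0.8, 0.9, 0.8, 0.7, 0.6]
climb = [0.5, 0.6, 0.7, 0.8, 0.9]

hump_label = describe_profile(hump, edge=1)
climb_label = describe_profile(climb, edge=1)
```

A peak-position rule like this ignores how flat or noisy a column is, so on real data it would be worth smoothing each column or comparing band means before trusting the label.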

### Interpretation

This heatmap likely visualizes the **cosine similarity or correlation of token embeddings** across the layers of a transformer-based language model. The "Token" labels suggest an analysis of how the model processes a question (`last_q`) and its answer (`exact_answer_*`), alongside relative positional markers (`-8` to `-1`, likely representing tokens preceding the answer).
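
Since the metric is only guessed at here, it helps to see how the two candidates differ. The sketch below implements cosine similarity and Pearson correlation for two plain Python vectors. The function names are illustrative, and nothing in the figure confirms which metric, if either, was used.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between u and v.

    Raises ZeroDivisionError if either vector has zero norm.
    """
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def pearson_correlation(u, v):
    """Pearson correlation, computed as cosine similarity of mean-centered vectors."""
    mean_u = sum(u) / len(u)
    mean_v = sum(v) / len(v)
    centered_u = [a - mean_u for a in u]
    centered_v = [b - mean_v for b in v]
    return cosine_similarity(centered_u, centered_v)
```

Both metrics range over [-1, 1], so neither explains by itself why the color scale starts at 0.5. The scale may simply have been cropped to the observed range, or the plotted quantity may be a different metric altogether.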

* **What the data suggests:** The strong, deep-layer correlation for the answer-related tokens suggests that the model's internal representation of the question and the precise answer span becomes more unified and stable as information is processed through the network. This may matter for accurate answer extraction.

* **How elements relate:** The middle-layer peak for positional tokens is consistent with the commonly reported role of middle transformer layers in building contextual representations. The drop in correlation for these tokens in the final layers might indicate they are being "used up" or transformed into the final answer representation.

* **Notable Insight:** The stark contrast between the two token groups is the key finding. It suggests that the model treats "semantic" tokens (question/answer) differently from "structural" or positional tokens, maintaining a stronger and more consistent signal for the former throughout its depth. This could be a signature of effective information flow for question-answering tasks.