## Diagram: Comparison of Deep Reasoning Training Methodologies

### Overview

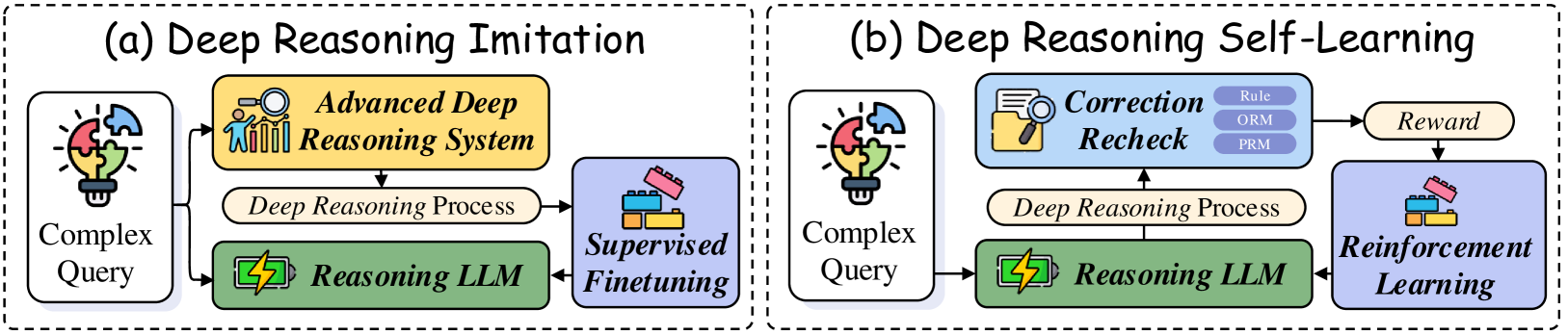

The image is a technical diagram comparing two distinct methodologies for training Large Language Models (LLMs) to perform complex reasoning tasks. It is divided into two side-by-side panels, labeled (a) and (b), each enclosed in a dashed-line box. The diagram uses a combination of text labels, icons, and directional arrows to illustrate the workflow and components of each approach.

### Components/Axes

The diagram is structured into two primary panels:

**Panel (a): Deep Reasoning Imitation**

* **Title:** "(a) Deep Reasoning Imitation" (top center of the left panel).

* **Components (from left to right):**

1. **Complex Query:** Represented by a lightbulb icon with puzzle pieces. This is the input.

2. **Advanced Deep Reasoning System:** A yellow box with an icon of a person at a whiteboard. This is the source of the "teacher" signal.

3. **Deep Reasoning Process:** A beige, rounded rectangle representing the intermediate reasoning steps.

4. **Reasoning LLM:** A green box with a lightning bolt icon. This is the model being trained.

5. **Supervised Finetuning:** A purple box with a building blocks icon. This is the training mechanism.

* **Flow:** Arrows indicate the process flow: `Complex Query` → `Advanced Deep Reasoning System` → `Deep Reasoning Process` → `Supervised Finetuning`. A separate arrow shows `Complex Query` also feeding directly into the `Reasoning LLM`. The `Supervised Finetuning` block then updates the `Reasoning LLM`.
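The flow in panel (a) can be read as a data-preparation step followed by ordinary finetuning. The following is a minimal sketch of the data-preparation half only, assuming the teacher is available as a plain function from query to reasoning trace. The names `SFTExample`, `build_sft_dataset`, and `toy_teacher` are hypothetical and do not come from the diagram.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SFTExample:
    prompt: str  # the complex query shown to the student model
    target: str  # the teacher's reasoning trace the student should reproduce


def build_sft_dataset(
    queries: List[str],
    teacher: Callable[[str], str],
) -> List[SFTExample]:
    """Run each query through the teacher and pair it with the resulting trace."""
    return [SFTExample(prompt=q, target=teacher(q)) for q in queries]


def toy_teacher(query: str) -> str:
    # Hypothetical stand-in for the "Advanced Deep Reasoning System".
    return f"Step 1: restate '{query}'. Step 2: solve. Answer: 42"


dataset = build_sft_dataset(["What is 6 * 7?"], toy_teacher)
```

Each `(prompt, target)` pair would then be passed to a supervised finetuning routine, which the sketch deliberately leaves out.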

**Panel (b): Deep Reasoning Self-Learning**

* **Title:** "(b) Deep Reasoning Self-Learning" (top center of the right panel).

* **Components (from left to right):**

1. **Complex Query:** Same icon as in panel (a).

2. **Reasoning LLM:** Same green box and icon as in panel (a).

3. **Deep Reasoning Process:** Same beige, rounded rectangle.

4. **Correction Recheck:** A blue box with a magnifying glass over a document icon. It contains three sub-labels stacked vertically: "Rule", "ORM", "PRM".

5. **Reward:** A beige, rounded rectangle.

6. **Reinforcement Learning:** A purple box with a building blocks icon (same icon as "Supervised Finetuning" in panel (a)).

* **Flow:** Arrows indicate: `Complex Query` → `Reasoning LLM` → `Deep Reasoning Process` → `Correction Recheck`. The `Correction Recheck` block outputs to `Reward`, which then feeds into `Reinforcement Learning`. The `Reinforcement Learning` block updates the `Reasoning LLM`.
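The loop in panel (b) can be illustrated with a toy policy. The sketch below is an assumption-laden miniature, not the method the diagram depicts: the "Reasoning LLM" is reduced to a softmax over four candidate answers, the `recheck` function is a hypothetical rule-based stand-in for `Correction Recheck`, and the update is a plain REINFORCE step chosen for brevity.

```python
import math
import random


def softmax(logits):
    """Convert logits to probabilities in a numerically stable way."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def recheck(answer: int, expected: int) -> float:
    # Hypothetical rule-based check: reward 1.0 for a correct answer, else 0.0.
    return 1.0 if answer == expected else 0.0


def train(candidates, expected, steps=500, lr=0.5, seed=0):
    rng = random.Random(seed)
    logits = [0.0] * len(candidates)  # the toy "policy" parameters
    for _ in range(steps):
        probs = softmax(logits)
        # The policy samples its own output (stand-in for a reasoning process).
        idx = rng.choices(range(len(candidates)), weights=probs)[0]
        reward = recheck(candidates[idx], expected)
        # REINFORCE: d log pi(idx) / d logit_j = 1[j == idx] - probs[j]
        for j in range(len(logits)):
            grad = (1.0 if j == idx else 0.0) - probs[j]
            logits[j] += lr * reward * grad
    return softmax(logits)


final_probs = train(candidates=[40, 41, 42, 43], expected=42)
```

After training, most of the probability mass sits on the rewarded answer, which is the closed loop the arrows describe: sample, check, reward, update.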

### Detailed Analysis

The diagram explicitly contrasts two training paradigms:

1. **Deep Reasoning Imitation (Panel a):**

* This is a **supervised learning** approach.

* The `Reasoning LLM` learns by imitating the outputs of a more capable `Advanced Deep Reasoning System`.

* The process is unidirectional: the advanced system generates a reasoning process, which is used to directly finetune the target LLM via supervised methods.

2. **Deep Reasoning Self-Learning (Panel b):**

* This is a **reinforcement learning** approach.

* The `Reasoning LLM` generates its own reasoning process.

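* This process is evaluated by a `Correction Recheck` module, which assesses it using three kinds of checker: "Rule" (likely rule-based verification, such as exact-match answer checks or format checks), "ORM" (likely an Outcome Reward Model, which scores the final answer), and "PRM" (likely a Process Reward Model, which scores individual reasoning steps).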

* The evaluation produces a `Reward` signal.

* This reward is used to update the LLM via `Reinforcement Learning`, creating a closed-loop, self-improving system.
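The three sub-labels in `Correction Recheck` correspond to three different ways of producing a reward. The sketch below shows one plausible shape for each, under stated assumptions: `toy_orm` and `toy_prm` are hypothetical stand-ins for trained reward models, a real ORM would usually also see the query, and taking the minimum step score in `process_reward` is one common aggregation choice rather than something the diagram specifies.

```python
from typing import Callable, List


def rule_reward(final_answer: str, reference: str) -> float:
    """Rule: deterministic check, here an exact match after trimming whitespace."""
    return 1.0 if final_answer.strip() == reference.strip() else 0.0


def outcome_reward(final_answer: str, orm: Callable[[str], float]) -> float:
    """ORM: a learned scorer looks only at the final answer."""
    return orm(final_answer)


def process_reward(steps: List[str], prm: Callable[[str], float]) -> float:
    """PRM: a learned scorer rates every step; the weakest step sets the reward."""
    if not steps:
        return 0.0
    return min(prm(step) for step in steps)


# Hypothetical stand-ins for trained reward models.
def toy_orm(answer: str) -> float:
    return 0.9 if answer.strip().isdigit() else 0.1


def toy_prm(step: str) -> float:
    return 1.0 if "=" in step else 0.5


steps = ["6 * 7 = 42", "so the answer is 42"]
rewards = {
    "rule": rule_reward("42", "42"),
    "orm": outcome_reward("42", toy_orm),
    "prm": process_reward(steps, toy_prm),
}
```

The diagram does not say whether the three signals are used separately or combined, so the sketch keeps them as independent entries.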

### Key Observations

* **Shared Components:** Both panels start with a "Complex Query" and involve a "Reasoning LLM" and a "Deep Reasoning Process." The final training block in both is represented by the same purple building-blocks icon, though labeled differently.

* **Core Difference:** The fundamental difference is the source of the training signal. Panel (a) relies on reasoning traces from an external, pre-existing "Advanced Deep Reasoning System" (imitation). Panel (b) relies on the model's own generated reasoning, scored by an evaluation and reward mechanism (self-learning).

* **Complexity:** The self-learning pathway (b) is more complex, introducing additional components (`Correction Recheck`, `Reward`) and a feedback loop for iterative improvement.

* **Sub-labels in Correction Recheck:** The terms "Rule," "ORM," and "PRM" are critical technical details within the self-learning framework, specifying the types of evaluative models or criteria used.
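The core difference can also be written as two training objectives. These are the standard textbook forms, given here as an assumption about what the two purple blocks compute; the diagram itself shows no formulas. Here $x$ is a complex query, $y$ is a reasoning process with tokens $y_t$, $\pi_\theta$ is the `Reasoning LLM`, $\mathcal{D}_{\text{teacher}}$ is the set of query and teacher-trace pairs, $\mathcal{D}$ is the set of queries, and $r(x, y)$ is the reward produced by `Correction Recheck`.

$$
\mathcal{L}_{\text{SFT}}(\theta) = -\,\mathbb{E}_{(x,\,y) \sim \mathcal{D}_{\text{teacher}}} \left[ \sum_{t} \log \pi_\theta\left(y_t \mid x,\, y_{<t}\right) \right]
$$

$$
J_{\text{RL}}(\theta) = \mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\theta(\cdot \mid x)} \left[ r(x, y) \right]
$$

In the first objective the target $y$ comes from the teacher and is minimized as a loss; in the second, $y$ is sampled from the model being trained and the expected reward is maximized.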

### Interpretation

This diagram illustrates a conceptual shift in how models are trained for complex reasoning tasks. The "Imitation" approach (a) is simpler and more direct, akin to a student learning by copying a master's work. Its ceiling is set by the quality and availability of the "teacher" system, since the student learns only from the traces that system produces.

The "Self-Learning" approach (b) represents a more autonomous paradigm. Here, the model learns by trial and error, guided by a reward system that critiques both its final outcomes (ORM) and its step-by-step reasoning process (PRM), alongside rule-based checks. This resembles learning through practice and self-correction rather than through copying.

The presence of "ORM" and "PRM" suggests the methodology is concerned not just with getting the right answer, but with the validity and soundness of the reasoning path itself. Read as a progression from (a) to (b), the diagram suggests that building robust reasoning capabilities may require moving from pure imitation to structured self-evaluation and reinforcement. The visual contrast emphasizes the additional architectural complexity this learning loop requires.