## Diagram: Proof Search and Tactic Generation System

### Overview

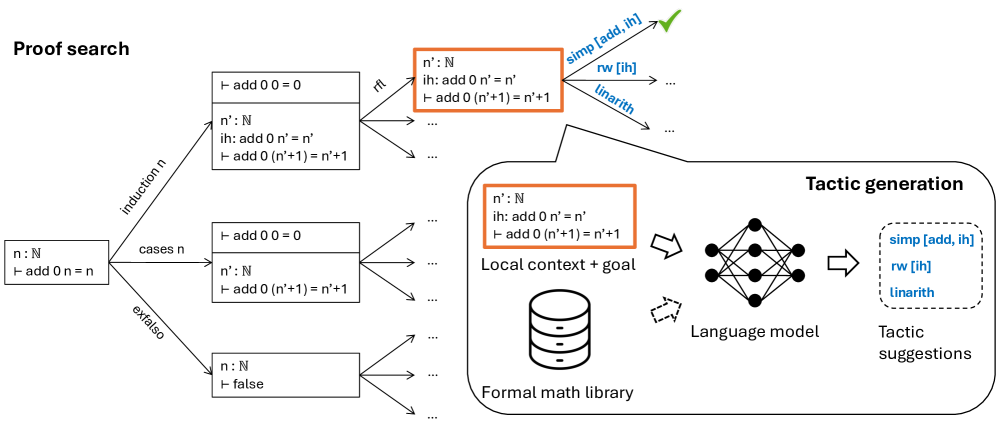

The image is a technical diagram illustrating a hybrid system for automated theorem proving. It depicts two interconnected processes: a symbolic **Proof search** tree on the left and a neural **Tactic generation** module on the right. The system appears to use a language model to suggest proof tactics (like `simp`, `rw`, `linarith`) based on the current proof state (local context and goal), which are then fed back into the proof search process.

### Components/Axes

The diagram is divided into two primary regions:

1. **Left Region: Proof Search**

* **Structure:** A tree graph originating from a single root node.

* **Root Node (Far Left):** Contains the text `n : ℕ` and `⊢ add 0 n = n`.

* **Edges (Branches):** Labeled with proof tactics: `induction n`, `cases n`, and `exfalso`.

* **Child Nodes:** Each branch leads to one or more rectangular boxes representing subgoals or proof states. These boxes contain formal mathematical statements.

* **Highlighted Node:** One specific node in the `induction n` branch is outlined with an orange border. It contains:

* `n' : ℕ`

* `ih: add 0 n' = n'`

* `⊢ add 0 (n'+1) = n'+1`

* **Tactic Suggestions:** From the highlighted node, three blue arrows point to the right, labeled with tactic names: `simp [add, ih]`, `rw [ih]`, and `linarith`. A green checkmark is next to `simp [add, ih]`, indicating it is the selected or successful tactic.

2. **Right Region: Tactic Generation**

* **Container:** A large rounded rectangle labeled **Tactic generation**.

* **Inputs:**

* **Local context + goal:** An orange-bordered box identical to the highlighted node from the proof search tree (`n' : ℕ`, `ih: add 0 n' = n'`, `⊢ add 0 (n'+1) = n'+1`).

* **Formal math library:** Represented by a database/cylinder icon.

* **Processing Unit:** A neural network icon labeled **Language model**. Arrows show it receives input from the "Local context + goal" and has a bidirectional connection (dashed arrow) with the "Formal math library".

* **Output:** An arrow points from the Language model to a dashed box labeled **Tactic suggestions**. This box lists three tactics in blue text: `simp [add, ih]`, `rw [ih]`, and `linarith`.

### Detailed Analysis

**Proof Search Tree Flow:**

1. The process starts with the goal `⊢ add 0 n = n` for a natural number `n`.

2. Three primary tactics are applied to this goal:

* `induction n`: This generates at least two subgoals (base case and inductive step). The diagram shows the inductive step node highlighted.

* `cases n`: This generates subgoals for each constructor of the natural number type (likely zero and successor).

* `exfalso`: This tactic applies the principle of explosion, leading to a subgoal of `⊢ false`.

3. The highlighted node represents the **inductive step** after applying `induction n`. The context includes the inductive hypothesis (`ih`), and the goal is to prove the statement for `n'+1`.

4. From this state, three candidate tactics are generated (by the Tactic Generation module): `simp [add, ih]`, `rw [ih]`, and `linarith`. The green checkmark suggests `simp [add, ih]` is the chosen or correct one to close this proof branch.

**Tactic Generation Module Flow:**

1. The current proof state ("Local context + goal") is fed as input.

2. A **Language model** (neural network) processes this input.

3. The model interacts with a **Formal math library** (likely for retrieving definitions, theorems, or examples).

4. The model outputs a list of suggested tactics (`simp [add, ih]`, `rw [ih]`, `linarith`) that are applicable to the given proof state.

### Key Observations

* **Hybrid Architecture:** The diagram explicitly combines symbolic AI (the proof search tree) with connectionist AI (the language model for tactic suggestion).

* **Context-Aware Suggestions:** The tactic suggestions are not generic; they are specific to the local context (e.g., `simp [add, ih]` uses the inductive hypothesis `ih`).

* **Visual Highlighting:** The use of orange borders creates a clear visual link between the proof state in the search tree and the input to the tactic generator.

* **Tactic Specificity:** The suggested tactics (`simp`, `rw`, `linarith`) are common in interactive theorem provers like Lean or Coq. `simp` is a simplifier, `rw` is for rewriting, and `linarith` handles linear arithmetic.

* **Non-Determinism:** The proof search tree shows multiple branches, indicating the system explores different proof paths. The tactic generator provides multiple suggestions for a single state.

### Interpretation

This diagram represents a **neuro-symbolic approach to automated theorem proving**. The core idea is to use a powerful language model to guide a traditional, exhaustive proof search.

* **What it demonstrates:** The system aims to overcome the combinatorial explosion of proof search by using a neural network to predict the most promising tactics at each step. The language model acts as a "heuristic function" or "policy network," learning from a formal math library which tactics are likely to succeed given a specific goal and context.

* **Relationship between elements:** The proof search provides the structure and formal verification, while the tactic generation provides the intuition and guidance. They form a feedback loop: the search presents a problem state, the generator suggests actions, the search applies them and presents a new state.

* **Notable implications:** This architecture could significantly improve the efficiency and success rate of automated provers. The green checkmark on `simp [add, ih]` implies the model's suggestion was correct, showcasing its potential utility. The reliance on a "Formal math library" also suggests the model's effectiveness is tied to the quality and structure of its training data from formalized mathematics.