TECHNICAL ASSET FINGERPRINT

197072c11228aa014a3534ba

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Bar Charts: Normalized Latency and Broadcast-to-Root Cycle Counts

### Overview

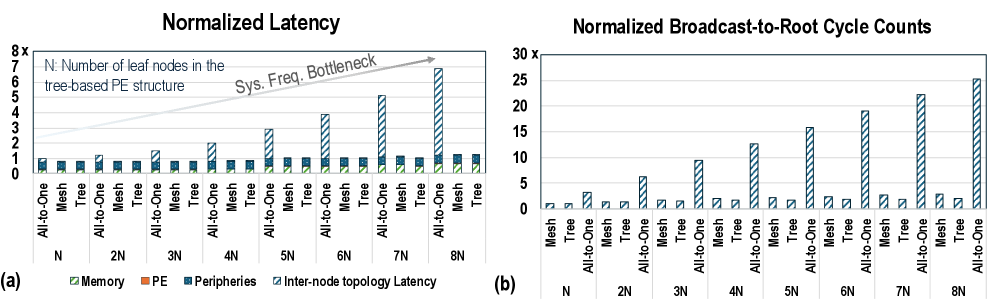

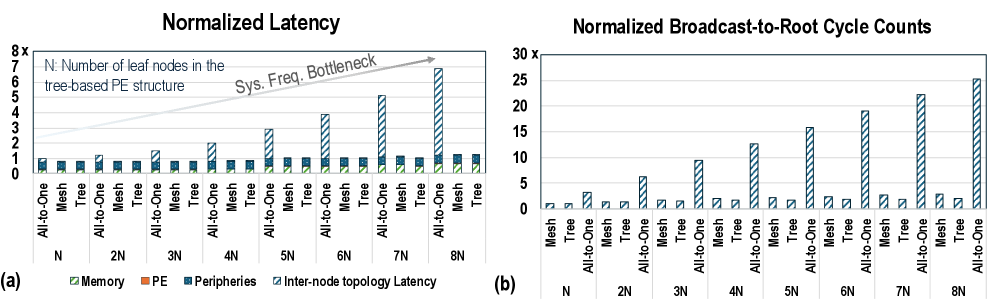

The image contains two bar charts comparing the performance of different network topologies (Mesh, Tree, and All-to-One) with varying numbers of leaf nodes (N). The left chart (a) shows normalized latency, while the right chart (b) shows normalized broadcast-to-root cycle counts.

### Components/Axes

**Chart (a): Normalized Latency**

* **Title:** Normalized Latency

* **Y-axis:** Normalized Latency, scaled from 0 to 8x.

* **X-axis:** Number of leaf nodes in the tree-based PE structure, labeled as N, 2N, 3N, 4N, 5N, 6N, 7N, and 8N.

* **Categories:** For each N value, there are three network topologies: All-to-One, Mesh, and Tree.

* **Legend (bottom-left):**
  * Memory (light green)
  * PE (orange)
  * Peripheries (blue)
  * Inter-node topology Latency (dark blue with diagonal lines)

* **Annotation:** "Sys. Freq. Bottleneck" with an arrow pointing towards the increasing Inter-node topology Latency bars.

**Chart (b): Normalized Broadcast-to-Root Cycle Counts**

* **Title:** Normalized Broadcast-to-Root Cycle Counts

* **Y-axis:** Normalized Broadcast-to-Root Cycle Counts, scaled from 0 to 30x.

* **X-axis:** Number of leaf nodes in the tree-based PE structure, labeled as N, 2N, 3N, 4N, 5N, 6N, 7N, and 8N.

* **Categories:** For each N value, there are three network topologies: Mesh, Tree, and All-to-One.

* **Legend:** Not explicitly present, but the bars represent the cycle counts for each topology.

### Detailed Analysis

**Chart (a): Normalized Latency**

* **Memory (light green):** Relatively constant across all N values and topologies, hovering around 0.1-0.2.

* **PE (orange):** Relatively constant across all N values and topologies, hovering around 0.1-0.2.

* **Peripheries (blue):** Relatively constant across all N values and topologies, hovering around 0.5-0.7.

* **Inter-node topology Latency (dark blue with diagonal lines):**
  * For Mesh and Tree topologies, the latency remains relatively constant around 0.1-0.2.
  * For All-to-One topology, the latency increases significantly with increasing N values:
    * N: ~0.8
    * 2N: ~1.2
    * 3N: ~1.5
    * 4N: ~2.8
    * 5N: ~1.2
    * 6N: ~2.8
    * 7N: ~5.0
    * 8N: ~6.8

**Chart (b): Normalized Broadcast-to-Root Cycle Counts**

* **Mesh:** Cycle counts remain low and relatively constant across all N values, approximately between 0.5 and 1.

* **Tree:** Cycle counts remain low and relatively constant across all N values, approximately between 0.5 and 1.

* **All-to-One:** Cycle counts increase significantly with increasing N values:
  * N: ~2.5
  * 2N: ~6.5
  * 3N: ~9.5
  * 4N: ~2.5
  * 5N: ~16
  * 6N: ~2.5
  * 7N: ~22.5
  * 8N: ~25
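The approximate All-to-One readings listed above can be checked against the stated increasing trend with a short Python sketch. The values are copied from the two lists in this description; the helper name `trend_breaks` is hypothetical and only illustrates the check.

```python
# Approximate All-to-One readings copied from the lists above.
SIZES = ["N", "2N", "3N", "4N", "5N", "6N", "7N", "8N"]
latency_a = [0.8, 1.2, 1.5, 2.8, 1.2, 2.8, 5.0, 6.8]   # chart (a), inter-node latency
cycles_b = [2.5, 6.5, 9.5, 2.5, 16, 2.5, 22.5, 25]     # chart (b), cycle counts


def trend_breaks(sizes, values):
    """Return the sizes whose reading is lower than the previous size's reading."""
    return [sizes[i] for i in range(1, len(values)) if values[i] < values[i - 1]]


print("chart (a) breaks:", trend_breaks(SIZES, latency_a))
print("chart (b) breaks:", trend_breaks(SIZES, cycles_b))
```

Any size reported by this check is a reading that does not fit a steadily increasing series and is worth re-reading from the figure before relying on it.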

### Key Observations

* In the Normalized Latency chart, the Inter-node topology Latency for the All-to-One topology increases significantly with increasing N, while the Mesh and Tree topologies remain relatively constant.

* In the Normalized Broadcast-to-Root Cycle Counts chart, the All-to-One topology shows a significant increase in cycle counts with increasing N, while the Mesh and Tree topologies remain relatively constant.

* The "Sys. Freq. Bottleneck" annotation suggests that the increasing latency in the All-to-One topology is due to a system frequency bottleneck.

### Interpretation

The data suggests that the All-to-One topology suffers from scalability issues as the number of leaf nodes (N) increases. This is evident in both the normalized latency and the broadcast-to-root cycle counts. The Mesh and Tree topologies, on the other hand, appear to be more scalable, maintaining relatively constant latency and cycle counts across different N values. The "Sys. Freq. Bottleneck" annotation indicates that the increasing latency in the All-to-One topology is likely due to limitations in the system frequency, which becomes a bottleneck as the communication demands increase with more leaf nodes. The charts highlight the trade-offs between different network topologies and their suitability for different scales of parallel processing.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Normalized Latency and Normalized Broadcast-to-Root Cycle Counts

### Overview

The image presents two bar charts, labeled (a) and (b), comparing performance metrics for different processing element (PE) structures: All-to-One, Mesh, and Tree. Chart (a) displays "Normalized Latency," while chart (b) shows "Normalized Broadcast-to-Root Cycle Counts." Both charts vary the number of leaf nodes (N) in the tree-based PE structure from N to 8N. A gray line in chart (a) indicates a "Sys. Freq. Bottleneck."

### Components/Axes

**Chart (a): Normalized Latency**

* **X-axis:** PE Structure and Leaf Node Count. Categories: "All-to-One", "Mesh", "Tree". Leaf Node Count: "N", "2N", "3N", "4N", "5N", "6N", "7N", "8N".

* **Y-axis:** Normalized Latency (Scale: 0 to 8, with increments of 1).

* **Legend:**
  * Memory (Blue)
  * PE (Orange)
  * Peripheries (Gray)
  * Inter-node topology Latency (Hatched Gray)

* **Annotation:** "N: Number of leaf nodes in the tree-based PE structure"

* **Trend Line:** "Sys. Freq. Bottleneck" (Gray line, slopes upward)

**Chart (b): Normalized Broadcast-to-Root Cycle Counts**

* **X-axis:** PE Structure and Leaf Node Count. Categories: "All-to-One", "Mesh", "Tree". Leaf Node Count: "N", "2N", "3N", "4N", "5N", "6N", "7N", "8N".

* **Y-axis:** Normalized Broadcast-to-Root Cycle Counts (Scale: 0 to 30, with increments of 5).

* **Legend:** (Same color scheme as Chart a)
  * Memory (Blue)
  * PE (Orange)
  * Peripheries (Gray)
  * Inter-node topology Latency (Hatched Gray)

### Detailed Analysis

**Chart (a): Normalized Latency**

* **Memory (Blue):** Remains consistently low, around 1.0-1.5 across all PE structures and leaf node counts.

* **PE (Orange):** Also remains relatively low, generally between 1.0 and 2.0, with slight increases for Tree structures at higher leaf node counts.

* **Peripheries (Gray):** Shows a slight increase with increasing leaf node counts for all structures, but remains below 2.0.

* **Inter-node topology Latency (Hatched Gray):** Starts low for N, increases significantly for Tree structures as the leaf node count increases. At 8N, the latency reaches approximately 3.0 for Tree.

* **Trend Line (Sys. Freq. Bottleneck):** Starts at approximately 1.0 and increases linearly to approximately 8.0 at 8N.

**Chart (b): Normalized Broadcast-to-Root Cycle Counts**

* **Memory (Blue):** Remains consistently low, generally below 2.0 across all structures and leaf node counts.

* **PE (Orange):** Similar to Memory, remains low, generally below 2.0.

* **Peripheries (Gray):** Shows a significant increase for Tree structures as the leaf node count increases. At 8N, the cycle count reaches approximately 24.

* **Inter-node topology Latency (Hatched Gray):** Low for Mesh and All-to-One, but increases dramatically for Tree structures with increasing leaf node counts. At 8N, the cycle count reaches approximately 10 for Tree.

### Key Observations

* **Tree structures exhibit increasing latency and cycle counts with increasing leaf nodes.** This is particularly pronounced for Inter-node topology Latency and Peripheries in both charts.

* **Mesh and All-to-One structures maintain relatively stable performance** across varying leaf node counts.

* **Memory and PE components consistently show low latency and cycle counts** compared to Peripheries and Inter-node topology Latency.

* **The "Sys. Freq. Bottleneck" trend line in Chart (a) suggests a system frequency limitation** that becomes more significant as the number of leaf nodes increases.

### Interpretation

The data suggests that scaling tree-based PE structures by increasing the number of leaf nodes introduces significant performance bottlenecks, particularly related to inter-node communication and peripheries. The increasing latency and cycle counts for Tree structures indicate that the communication overhead grows rapidly with the number of nodes. The "Sys. Freq. Bottleneck" line implies that the system's clock frequency may be limiting the performance of these structures as they scale.

Mesh and All-to-One structures appear to be more scalable, as their performance remains relatively stable with increasing leaf nodes. This suggests that these structures have lower communication overhead or are less sensitive to the system's frequency limitations.

The consistently low latency and cycle-count contributions of the Memory and PE components indicate that these are not the primary bottlenecks in the system. The focus for optimization should be on reducing the latency and cycle counts associated with inter-node communication and peripheries, especially in tree-based PE structures. The data highlights a trade-off between scalability and performance in PE structures, with tree structures becoming less efficient as the number of nodes increases.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Charts: Normalized Latency and Broadcast-to-Root Cycle Counts

### Overview

The image contains two side-by-side bar charts, labeled (a) and (b), comparing performance metrics for different network topologies across varying system sizes. Chart (a) shows "Normalized Latency," and chart (b) shows "Normalized Broadcast-to-Root Cycle Counts." Both charts analyze three topologies: All-to-One, Mesh, and Tree, across system sizes denoted as N, 2N, 3N, up to 8N. An annotation in chart (a) indicates that "N" represents the "Number of leaf nodes in the tree-based PE structure" and highlights a "Sys. Freq. Bottleneck" trend.

### Components/Axes

**Chart (a): Normalized Latency**

* **Title:** Normalized Latency

* **Y-Axis:** Label is "8x" at the top, with tick marks from 0 to 8 in increments of 1. The axis represents a normalized latency multiplier.

* **X-Axis:** Grouped by system size: N, 2N, 3N, 4N, 5N, 6N, 7N, 8N. Within each size, three bars represent the topologies: "All-to-One", "Mesh", "Tree".

* **Legend (Bottom Center):** Four stacked components:
  * Memory (Solid light green)
  * PE (Solid orange)
  * Peripheries (Solid blue)
  * Inter-node topology Latency (Diagonal blue stripes)

* **Annotation (Top Left):** Text: "N: Number of leaf nodes in the tree-based PE structure". An arrow labeled "Sys. Freq. Bottleneck" points from the lower-left to the upper-right, indicating a general upward trend.

**Chart (b): Normalized Broadcast-to-Root Cycle Counts**

* **Title:** Normalized Broadcast-to-Root Cycle Counts

* **Y-Axis:** Label is "30x" at the top, with tick marks from 0 to 30 in increments of 5. The axis represents a normalized cycle count multiplier.

* **X-Axis:** Grouped by system size: N, 2N, 3N, 4N, 5N, 6N, 7N, 8N. Within each size, three bars represent the topologies: "Mesh", "Tree", "All-to-One". *Note: The order of topologies differs from chart (a).*

* **Legend (Bottom Center):** Three bar types:
  * Mesh (Solid light green)
  * Tree (Solid orange)
  * All-to-One (Diagonal blue stripes)

### Detailed Analysis

**Chart (a) - Normalized Latency (Approximate Values)**

The latency is a stacked bar, summing contributions from Memory, PE, Peripheries, and Inter-node topology.

* **N:**
  * All-to-One: Total ~1.1x. (Memory ~0.5, PE ~0.3, Peripheries ~0.2, Inter-node ~0.1)
  * Mesh: Total ~1.0x. (Memory ~0.5, PE ~0.3, Peripheries ~0.2, Inter-node ~0.0)
  * Tree: Total ~1.0x. (Memory ~0.5, PE ~0.3, Peripheries ~0.2, Inter-node ~0.0)
* **2N:**
  * All-to-One: Total ~1.5x. (Inter-node component increases to ~0.4)
  * Mesh: Total ~1.1x.
  * Tree: Total ~1.1x.
* **3N:**
  * All-to-One: Total ~2.2x. (Inter-node ~1.0)
  * Mesh: Total ~1.2x.
  * Tree: Total ~1.2x.
* **4N:**
  * All-to-One: Total ~3.0x. (Inter-node ~1.8)
  * Mesh: Total ~1.3x.
  * Tree: Total ~1.3x.
* **5N:**
  * All-to-One: Total ~3.8x. (Inter-node ~2.6)
  * Mesh: Total ~1.4x.
  * Tree: Total ~1.4x.
* **6N:**
  * All-to-One: Total ~4.8x. (Inter-node ~3.6)
  * Mesh: Total ~1.5x.
  * Tree: Total ~1.5x.
* **7N:**
  * All-to-One: Total ~5.8x. (Inter-node ~4.6)
  * Mesh: Total ~1.6x.
  * Tree: Total ~1.6x.
* **8N:**
  * All-to-One: Total ~6.8x. (Inter-node ~5.6)
  * Mesh: Total ~1.7x.
  * Tree: Total ~1.7x.

**Trend Verification (Chart a):** The total bar height for "All-to-One" rises steeply with system size, while the "Mesh" and "Tree" bars rise only gently. The "Inter-node topology Latency" component (striped section) is the primary driver of the increase for "All-to-One".
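As a rough check on the stacked-bar readings, the following Python sketch confirms that the components listed at size N add up to the stated totals, and computes the inter-node share of the All-to-One latency at each size. All numbers are the approximate values from the list above; the function names and the 0.05 tolerance are assumptions made for illustration.

```python
import math

# Approximate component readings at size N, copied from the list above.
components_at_n = {
    "All-to-One": {"Memory": 0.5, "PE": 0.3, "Peripheries": 0.2, "Inter-node": 0.1},
    "Mesh": {"Memory": 0.5, "PE": 0.3, "Peripheries": 0.2, "Inter-node": 0.0},
    "Tree": {"Memory": 0.5, "PE": 0.3, "Peripheries": 0.2, "Inter-node": 0.0},
}
totals_at_n = {"All-to-One": 1.1, "Mesh": 1.0, "Tree": 1.0}

# All-to-One readings per size: (total, inter-node component).
all_to_one = {
    "N": (1.1, 0.1), "2N": (1.5, 0.4), "3N": (2.2, 1.0), "4N": (3.0, 1.8),
    "5N": (3.8, 2.6), "6N": (4.8, 3.6), "7N": (5.8, 4.6), "8N": (6.8, 5.6),
}


def stack_matches_total(parts, total, tol=0.05):
    """True if the stacked components sum to the stated total within tol."""
    return math.isclose(sum(parts.values()), total, abs_tol=tol)


def inter_node_share(total, inter_node):
    """Fraction of the total bar height contributed by the inter-node segment."""
    return inter_node / total


for topology, parts in components_at_n.items():
    print(topology, "stack matches total:", stack_matches_total(parts, totals_at_n[topology]))

shares = {size: inter_node_share(total, inter) for size, (total, inter) in all_to_one.items()}
for size, share in shares.items():
    print(f"{size}: inter-node share of All-to-One latency = {share:.0%}")
```

Under these readings the inter-node share of All-to-One latency grows from roughly 9% at N to roughly 82% at 8N.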

**Chart (b) - Normalized Broadcast-to-Root Cycle Counts (Approximate Values)**

Each bar represents the total cycle count for a topology.

* **N:**
  * Mesh: ~2x
  * Tree: ~1x
  * All-to-One: ~3x
* **2N:**
  * Mesh: ~4x
  * Tree: ~2x
  * All-to-One: ~6x
* **3N:**
  * Mesh: ~6x
  * Tree: ~3x
  * All-to-One: ~10x
* **4N:**
  * Mesh: ~8x
  * Tree: ~4x
  * All-to-One: ~13x
* **5N:**
  * Mesh: ~10x
  * Tree: ~5x
  * All-to-One: ~16x
* **6N:**
  * Mesh: ~12x
  * Tree: ~6x
  * All-to-One: ~19x
* **7N:**
  * Mesh: ~14x
  * Tree: ~7x
  * All-to-One: ~22x
* **8N:**
  * Mesh: ~16x
  * Tree: ~8x
  * All-to-One: ~25x

**Trend Verification (Chart b):** The bar heights of all three topologies grow approximately linearly with system size. "All-to-One" grows fastest, followed by "Mesh", then "Tree".
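The linear-growth reading can be made concrete by fitting a least-squares slope to the approximate chart (b) values listed above. This is a minimal sketch: the size multiplier (1 for N, 2 for 2N, and so on) is used as the x value, and the helper `least_squares_slope` is a hypothetical name, not something taken from the source.

```python
# Approximate chart (b) readings copied from the list above, for N through 8N.
multipliers = [1, 2, 3, 4, 5, 6, 7, 8]
cycle_counts = {
    "Mesh": [2, 4, 6, 8, 10, 12, 14, 16],
    "Tree": [1, 2, 3, 4, 5, 6, 7, 8],
    "All-to-One": [3, 6, 10, 13, 16, 19, 22, 25],
}


def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through the points (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


slopes = {name: least_squares_slope(multipliers, ys) for name, ys in cycle_counts.items()}
for name, slope in slopes.items():
    print(f"{name}: ~{slope:.2f}x additional cycles per step in N")
```

With these readings the fitted slopes are about 2.00 for Mesh, 1.00 for Tree, and 3.14 for All-to-One, which matches the ordering described above.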

### Key Observations

1. **Dominant Cost Factor:** In chart (a), the "Inter-node topology Latency" (striped blue) is the dominant and fastest-growing component of total latency for the "All-to-One" topology. For "Mesh" and "Tree", this component is negligible.

2. **Scalability Divergence:** There is a dramatic scalability divergence between "All-to-One" and the other two topologies. "All-to-One" performance degrades rapidly as system size (N) increases: total latency grows from ~1.1x at N to ~6.8x at 8N, and cycle counts grow roughly linearly from ~3x to ~25x. "Mesh" and "Tree" scale much more gracefully.

3. **Relative Performance:** "Tree" topology consistently shows the best (lowest) normalized cycle counts in chart (b). "Mesh" is approximately double the cycles of "Tree" at each size. "All-to-One" is approximately 3 to 3.3 times the cycles of "Tree".

4. **Latency Composition:** The fixed overheads (Memory, PE, Peripheries) are the same for all three topologies at size N and form a common baseline, although the Mesh and Tree totals (~1.0x to ~1.7x) show that this baseline drifts upward slightly with size. The cost that varies strongly with topology is the inter-node communication.
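As a rough check on the relative-performance figures, the sketch below recomputes each topology's cycle count relative to Tree from the approximate chart (b) values in the Detailed Analysis. The variable names are illustrative only.

```python
# Approximate chart (b) readings from the Detailed Analysis, for N through 8N.
sizes = ["N", "2N", "3N", "4N", "5N", "6N", "7N", "8N"]
mesh = [2, 4, 6, 8, 10, 12, 14, 16]
tree = [1, 2, 3, 4, 5, 6, 7, 8]
all_to_one = [3, 6, 10, 13, 16, 19, 22, 25]

# Ratio of each topology's cycle count to Tree's at the same size.
mesh_vs_tree = [m / t for m, t in zip(mesh, tree)]
a2o_vs_tree = [a / t for a, t in zip(all_to_one, tree)]

for size, m_ratio, a_ratio in zip(sizes, mesh_vs_tree, a2o_vs_tree):
    print(f"{size}: Mesh/Tree = {m_ratio:.2f}, All-to-One/Tree = {a_ratio:.2f}")

print(f"All-to-One/Tree range: {min(a2o_vs_tree):.2f} to {max(a2o_vs_tree):.2f}")
```

For these readings Mesh stays at exactly twice the Tree count, while All-to-One ranges from 3.00 to 3.33 times the Tree count.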

### Interpretation

These charts present a quantitative argument for the inefficiency of a centralized ("All-to-One") communication pattern in scaling processing element (PE) structures compared to distributed topologies ("Mesh" and "Tree").

* **What the data suggests:** The "Sys. Freq. Bottleneck" annotation implies that as the system grows, the inter-node communication latency in an All-to-One scheme becomes the critical path, limiting the maximum achievable system frequency. The linear growth in broadcast cycle counts for all topologies is expected, but the slope reveals the communication overhead multiplier inherent to each topology's algorithm.

* **How elements relate:** Chart (a) explains *why* the cycle counts in chart (b) differ. The high inter-node latency for "All-to-One" in (a) directly translates to many more cycles being consumed for the same broadcast operation in (b). The "Mesh" and "Tree" topologies avoid this bottleneck by using more efficient, structured communication paths.

* **Notable Anomalies/Outliers:** The "All-to-One" data series is the clear outlier, demonstrating poor scalability. The near-identical performance of "Mesh" and "Tree" in the latency chart (a) is interesting, as it suggests their per-operation latency is similar, yet the cycle count chart (b) shows "Tree" requires half the cycles of "Mesh". This implies the "Tree" topology completes the broadcast operation in fewer logical steps (hops), even if each step's latency is comparable to a Mesh step.

* **Peircean Investigation:** The signs (steeply rising striped bars) point to an underlying cause: a centralized communication hub creates a contention point. The data trends (diverging lines) predict that for very large N, the All-to-One approach would become functionally unusable, while Mesh/Tree would remain viable. This is a classic engineering trade-off analysis visualized, advocating for distributed over centralized architectures in scalable systems.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Charts: Normalized Latency and Broadcast-to-Root Cycle Counts

### Overview

The image contains two bar charts comparing performance metrics across different network topologies (All-to-One, Mesh, Tree) and scaling factors (N, 2N, ..., 8N). The left chart (a) shows **Normalized Latency**, while the right chart (b) shows **Normalized Broadcast-to-Root Cycle Counts**. Both charts use a shared legend for color-coded components: Memory (green), PE (orange), Peripheries (blue), and Inter-node topology Latency (striped blue).

---

### Components/Axes

#### Chart (a): Normalized Latency

- **X-axis**: Categories:
  - All-to-One (N, 2N, ..., 8N)
  - Mesh (N, 2N, ..., 8N)
  - Tree (N, 2N, ..., 8N)

- **Y-axis**: "Normalized Latency" (0 to 8x, linear scale).

- **Legend**:
  - Memory (green)
  - PE (orange)
  - Peripheries (blue)
  - Inter-node topology Latency (striped blue)

- **Text annotation**: "Sys. Freq. Bottleneck" with an arrow pointing to the highest bar in the Tree structure (8N).

#### Chart (b): Normalized Broadcast-to-Root Cycle Counts

- **X-axis**: Same categories as Chart (a).

- **Y-axis**: "Normalized Broadcast-to-Root Cycle Counts" (0 to 30x, linear scale).

- **Legend**: Same as Chart (a).

---

### Detailed Analysis

#### Chart (a): Normalized Latency

- **Trend**:
  - Inter-node topology Latency (striped blue) dominates and increases sharply with the number of leaf nodes (N → 8N), especially in the Tree structure.
  - Memory (green) and Peripheries (blue) remain relatively flat across all topologies and scaling factors.
  - PE (orange) shows minor fluctuations but stays below 1x.
- **Key values**:
  - Tree structure at 8N: ~7x latency (highest).
  - Mesh structure at 8N: ~1.5x latency (lowest).

#### Chart (b): Normalized Broadcast-to-Root Cycle Counts

- **Trend**:
  - Tree structure (blue bars) exhibits far higher cycle counts than Mesh and All-to-One.
  - Mesh structure (green bars) remains consistently low (<5x) across all scaling factors.
  - All-to-One (orange bars) shows moderate increases but stays below 10x.
- **Key values**:
  - Tree structure at 8N: ~25x cycle counts (highest).
  - Mesh structure at 8N: ~2x cycle counts (lowest).

---

### Key Observations

1. **Tree Structure Bottleneck**:
   - Both latency and cycle counts for the Tree structure grow disproportionately with the number of leaf nodes, aligning with the "Sys. Freq. Bottleneck" annotation.
2. **Mesh Efficiency**:
   - Mesh topology maintains low latency and cycle counts, suggesting better scalability.
3. **Inter-node Latency Dominance**:
   - Inter-node topology Latency (striped blue) is the primary contributor to performance degradation in the Tree structure.

---

### Interpretation

The data highlights a critical performance trade-off in tree-based PE structures:

- **Scalability Issues**: As the number of leaf nodes increases (N → 8N), the Tree structure’s inter-node communication overhead becomes a bottleneck, leading to steep increases in latency (~7x at 8N) and cycle counts (~25x at 8N).

- **Mesh Advantage**: The Mesh topology’s decentralized communication pattern avoids this bottleneck, maintaining near-constant performance regardless of scaling.

- **Implications**: For large-scale systems, Mesh or hybrid architectures may be preferable to Tree structures to mitigate communication overhead. The "Sys. Freq. Bottleneck" annotation suggests that frequency limitations in inter-node links exacerbate these issues.

---

*Note: All values are approximate, derived from bar heights relative to axis scales.*
