TECHNICAL ASSET FINGERPRINT

19a7fad826d2ffc5b353c3c0



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

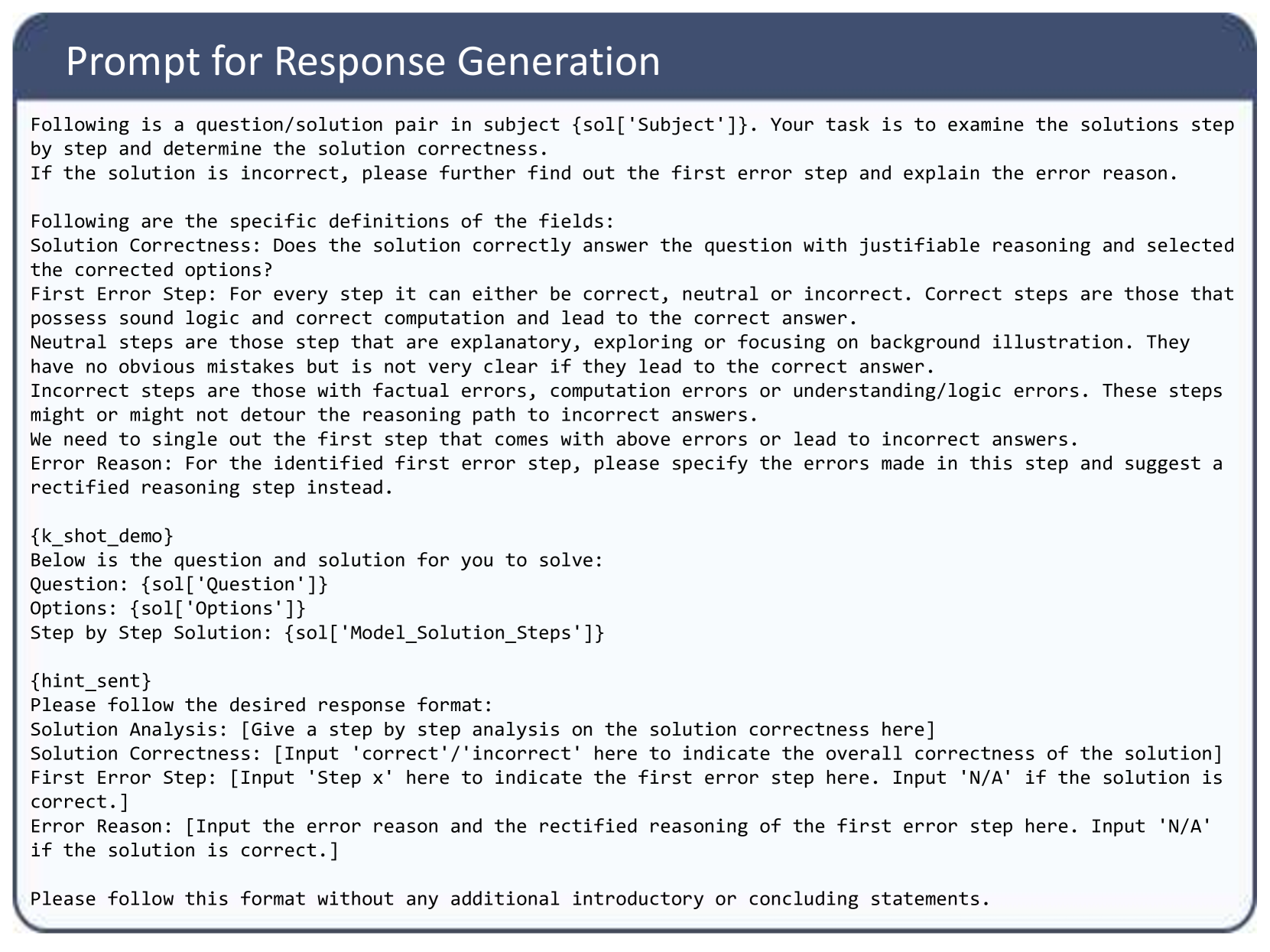

## Prompt: Response Generation

### Overview

The image is a prompt for generating responses to question/solution pairs. It outlines the task, definitions, and desired response format.

### Components/Axes

* **Title:** Prompt for Response Generation

* **Instructions:**

  * Examine solutions step-by-step to determine correctness.

  * Identify the first error step and explain the reason.

* **Definitions:**

  * Solution Correctness: Does the solution correctly answer the question with justifiable reasoning and selected the corrected options?

  * First Error Step: Categorizes steps as correct, neutral, or incorrect based on logic, computation, and potential to lead to the correct answer.

  * Error Reason: Specifies the errors made in the identified first error step and suggests a rectified reasoning step.

* **Input Fields:**

  * Question: `{sol['Question']}`

  * Options: `{sol['Options']}`

  * Step by Step Solution: `{sol['Model_Solution_Steps']}`

* **Response Format:**

  * Solution Analysis: \[Give a step by step analysis on the solution correctness here]

  * Solution Correctness: \[Input 'correct'/'incorrect' here to indicate the overall correctness of the solution]

  * First Error Step: \[Input 'Step x' here to indicate the first error step here. Input 'N/A' if the solution is correct.]

  * Error Reason: \[Input the error reason and the rectified reasoning of the first error step here. Input 'N/A' if the solution is correct.]

### Content Details

The prompt provides a structured approach to evaluating solutions. It defines criteria for correctness, error identification, and provides a template for generating responses. The prompt emphasizes the need to identify the first error step and provide a rectified reasoning.

### Key Observations

The prompt is designed to standardize the evaluation and response generation process for question/solution pairs. It provides clear definitions and a structured format to ensure consistency.

### Interpretation

The prompt aims to facilitate automated or semi-automated evaluation of solutions. By providing clear guidelines and a structured format, it enables consistent and objective assessment of solution correctness and error identification. The prompt is designed to be used in a system where questions, options, and model solutions are provided, and the system generates an analysis, correctness assessment, error identification, and error reason.


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Text Document: Prompt for Response Generation

### Overview

This document outlines a prompt designed for generating responses related to evaluating the correctness of a step-by-step solution to a question. It defines the criteria for assessing solution correctness, categorizes different types of steps (correct, neutral, incorrect), and specifies the required output format for the response.

### Components/Axes

This document does not contain charts or diagrams with axes. It is a textual document.

### Content Details

The document is structured into several sections:

**1. Main Prompt Title:**

"Prompt for Response Generation"

**2. Initial Instructions:**

"Following is a question/solution pair in subject {sol['Subject']}. Your task is to examine the solutions step by step and determine the solution correctness. If the solution is incorrect, please further find out the first error step and explain the error reason."

**3. Definitions of Fields:**

* **Solution Correctness:** "Does the solution correctly answer the question with justifiable reasoning and selected the corrected options?"

* **First Error Step:** "For every step it can either be correct, neutral or incorrect. Correct steps are those that possess sound logic and correct computation and lead to the correct answer. Neutral steps are those steps that are explanatory, exploring or focusing on background illustration. They have no obvious mistakes but is not very clear if they lead to the correct answer. Incorrect steps are those with factual errors, computation errors or understanding/logic errors. These steps might or might not detour the reasoning path to incorrect answers. We need to single out the first step that comes with above errors or lead to incorrect answers."

* **Error Reason:** "For the identified first error step, please specify the errors made in this step and suggest a rectified reasoning step instead."

**4. K-Shot Demo Placeholder:**

"{k_shot_demo}"

**5. Question and Solution Structure:**

"Below is the question and solution for you to solve:

Question: {sol['Question']}

Options: {sol['Options']}

Step by Step Solution: {sol['Model_Solution_Steps']}"

**6. Hint Sent Placeholder:**

"{hint_sent}"

**7. Desired Response Format:**

"Please follow the desired response format:

Solution Analysis: [Give a step by step analysis on the solution correctness here]

Solution Correctness: [Input 'correct'/'incorrect' here to indicate the overall correctness of the solution]

First Error Step: [Input 'Step x' here to indicate the first error step here. Input 'N/A' if the solution is correct.]

Error Reason: [Input the error reason and the rectified reasoning of the first error step here. Input 'N/A' if the solution is correct.]"

**8. Final Instruction:**

"Please follow this format without any additional introductory or concluding statements."

### Key Observations

* The prompt is designed for a system that evaluates the correctness of a provided solution to a question.

* It emphasizes a step-by-step analysis of the solution.

* Clear definitions are provided for "Solution Correctness," "First Error Step," and "Error Reason" to guide the evaluation.

* The prompt uses placeholders like `{sol['Subject']}`, `{sol['Question']}`, `{sol['Options']}`, and `{sol['Model_Solution_Steps']}` which indicate that this is a template for dynamic content insertion.

* A specific output format is mandated, requiring structured fields for analysis, overall correctness, the first error step, and the reason for that error.

* The prompt explicitly states to avoid introductory or concluding remarks in the generated response.

### Interpretation

This document serves as a detailed instruction set for an AI or human evaluator tasked with assessing the quality of a problem-solving process. The prompt aims to elicit a structured and informative critique of a given solution. By defining specific criteria and requiring the identification of the *first* error, it encourages a focused and systematic approach to error detection. The inclusion of placeholders suggests that this prompt is part of a larger system, likely for automated grading, feedback generation, or training data creation for AI models. The emphasis on "justifiable reasoning" and "rectified reasoning" indicates a focus on not just identifying errors but also on providing constructive feedback for improvement. The strict formatting requirement ensures consistency and ease of parsing for downstream processing.
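The point about parsing can be made concrete with a minimal sketch. It assumes the evaluator's reply follows the four-field format exactly, with each label at the start of a line; the function name `parse_response` and the sample reply are hypothetical and do not come from the image.

```python
import re

# The four field labels mandated by the response format.
FIELDS = (
    "Solution Analysis",
    "Solution Correctness",
    "First Error Step",
    "Error Reason",
)


def parse_response(text: str) -> dict:
    """Split a reply into its labeled fields (hypothetical helper)."""
    labels = "|".join(re.escape(name) for name in FIELDS)
    # A field runs from its label to the next label at a line start,
    # or to the end of the text.
    pattern = re.compile(
        rf"^({labels}):[ \t]*(.*?)(?=^(?:{labels}):|\Z)",
        re.S | re.M,
    )
    return {name: body.strip() for name, body in pattern.findall(text)}


# Made-up reply, used only to exercise the parser.
sample = """Solution Analysis: Step 1 sets up the sum correctly.
Step 2 adds 3 and 4 and gets 8, which is wrong.
Solution Correctness: incorrect
First Error Step: Step 2
Error Reason: 3 + 4 = 7, not 8. The step should read 3 + 4 = 7."""

parsed = parse_response(sample)
print(parsed["Solution Correctness"], "/", parsed["First Error Step"])
```

A reply that adds introductory or concluding text would leave stray content before the first label or inside the last field, which is one reason a template like this forbids it.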


EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: nugit/gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Text Block: Prompt for Response Generation

### Overview

The image displays a screenshot of a structured text prompt designed for an Artificial Intelligence system, specifically a Large Language Model (LLM). The prompt instructs the AI to act as an evaluator. Its task is to analyze a provided multiple-choice question and a step-by-step solution, determine the overall correctness, identify the specific step where the first error occurs (if any), and provide a reason and correction for that error.

### Components and Layout

The image is composed of two main visual regions:

* **Header (Top):** A dark blue rectangular banner spanning the width of the image. It contains the title text aligned to the left in a white, sans-serif font.

* **Main Body (Center to Bottom):** A light gray/white rectangular area enclosed by a thin dark border. It contains the main instructional text in a black, monospaced or simple sans-serif font.

The text itself is structured into four logical sections:

1. **Task Introduction:** Defines the role and primary objective.

2. **Definitions:** Provides strict criteria for evaluating steps as "correct," "neutral," or "incorrect."

3. **Data Injection Points:** Placeholders where the specific question, options, and solution will be inserted programmatically.

4. **Output Formatting:** Strict instructions on how the AI must format its response.

### Content Details (Full Transcription)

Below is the exact transcription of the text contained within the image. Note the presence of programmatic variables enclosed in curly braces `{}`.

**[Header Text]**

Prompt for Response Generation

**[Main Body Text]**

Following is a question/solution pair in subject {sol['Subject']}. Your task is to examine the solutions step by step and determine the solution correctness.

If the solution is incorrect, please further find out the first error step and explain the error reason.

Following are the specific definitions of the fields:

Solution Correctness: Does the solution correctly answer the question with justifiable reasoning and selected the corrected options?

First Error Step: For every step it can either be correct, neutral or incorrect. Correct steps are those that possess sound logic and correct computation and lead to the correct answer.

Neutral steps are those step that are explanatory, exploring or focusing on background illustration. They have no obvious mistakes but is not very clear if they lead to the correct answer.

Incorrect steps are those with factual errors, computation errors or understanding/logic errors. These steps might or might not detour the reasoning path to incorrect answers.

We need to single out the first step that comes with above errors or lead to incorrect answers.

Error Reason: For the identified first error step, please specify the errors made in this step and suggest a rectified reasoning step instead.

{k_shot_demo}

Below is the question and solution for you to solve:

Question: {sol['Question']}

Options: {sol['Options']}

Step by Step Solution: {sol['Model_Solution_Steps']}

{hint_sent}

Please follow the desired response format:

Solution Analysis: [Give a step by step analysis on the solution correctness here]

Solution Correctness: [Input 'correct'/'incorrect' here to indicate the overall correctness of the solution]

First Error Step: [Input 'Step x' here to indicate the first error step here. Input 'N/A' if the solution is correct.]

Error Reason: [Input the error reason and the rectified reasoning of the first error step here. Input 'N/A' if the solution is correct.]

Please follow this format without any additional introductory or concluding statements.

### Key Observations

* **Programmatic Placeholders:** The text utilizes Python-style dictionary formatting (e.g., `{sol['Subject']}`, `{sol['Question']}`). This indicates that this text is a template used in a software pipeline. A script will replace these variables with actual data before sending the prompt to the AI model.

* **Few-Shot Capability:** The inclusion of the `{k_shot_demo}` variable suggests the system is designed to optionally provide the AI with examples of correctly completed evaluations before asking it to perform the task, a technique known to improve accuracy.

* **Nuanced Evaluation Criteria:** The prompt does not treat reasoning as strictly binary. It introduces the concept of "Neutral steps" (explanatory or background information), which prevents the AI from falsely flagging non-mathematical/non-logical setup text as an error.

* **Strict Output Constraints:** The final sentence explicitly forbids "additional introductory or concluding statements." This is a standard prompt engineering technique to prevent LLMs from generating conversational filler (e.g., "Sure, I can help with that! Here is the analysis..."), ensuring the output can be easily parsed by a computer script.
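The placeholder substitution described above can be sketched with a Python f-string. This is an illustration under assumptions, not the pipeline's actual code: the function name `build_prompt`, its default arguments, and the sample record are hypothetical, and the field definitions are omitted for brevity. The dictionary keys and placeholder names are the ones visible in the transcription.

```python
def build_prompt(sol: dict, k_shot_demo: str = "", hint_sent: str = "") -> str:
    """Fill an abridged copy of the template with one record (hypothetical helper)."""
    return (
        f"Following is a question/solution pair in subject {sol['Subject']}. "
        "Your task is to examine the solutions step by step and determine "
        "the solution correctness.\n"
        # Field definitions from the template are omitted in this sketch.
        f"{k_shot_demo}\n"
        "Below is the question and solution for you to solve:\n"
        f"Question: {sol['Question']}\n"
        f"Options: {sol['Options']}\n"
        f"Step by Step Solution: {sol['Model_Solution_Steps']}\n"
        f"{hint_sent}\n"
        "Please follow the desired response format:\n"
        "Solution Analysis: [Give a step by step analysis on the solution "
        "correctness here]\n"
        "Solution Correctness: [Input 'correct'/'incorrect' here to indicate "
        "the overall correctness of the solution]\n"
        "Please follow this format without any additional introductory or "
        "concluding statements."
    )


# Made-up record, used only to show the substitution.
record = {
    "Subject": "arithmetic",
    "Question": "What is 2 + 2?",
    "Options": "(A) 3 (B) 4 (C) 5",
    "Model_Solution_Steps": "Step 1: 2 + 2 = 4. Step 2: The answer is (B).",
}
prompt = build_prompt(record)
print(prompt)
```

Passing an empty string for `k_shot_demo` or `hint_sent` leaves a blank line in the prompt, which matches the reading that both are optional.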

### Interpretation

This image represents a "meta-evaluation" prompt. It is written primarily for a model rather than a human reader: it is the instruction set for an AI model that is being used to grade the output of *another* AI model (or potentially human-written solutions).

**What the data demonstrates:**

This prompt is likely part of an automated benchmarking system or a feedback-based training pipeline. By asking a stronger model (for example, GPT-4) to evaluate the step-by-step reasoning of a weaker model, developers can automatically score the weaker model's performance on complex reasoning tasks (like math or logic).

**Reading between the lines:**

The structure of the requested response format appears intentional. By requiring the AI to output the `Solution Analysis` *before* it outputs the `Solution Correctness`, the prompt employs a "Chain-of-Thought" (CoT) strategy: the evaluating AI must work through the logic step by step before rendering a final verdict. If the prompt asked for `Solution Correctness` first, the AI would have to commit to a verdict before doing the analysis, which would likely increase error rates. The careful definition of "Neutral steps" may indicate that earlier iterations of this prompt were too aggressive in marking explanatory text as incorrect, although the image itself does not confirm this.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Document: Prompt for Response Generation

### Overview

The image presents a document outlining a process for evaluating the correctness of solutions to questions, specifically within a question/solution pair context. It details the criteria for assessing solution steps as correct, neutral, or incorrect, and provides a structured format for reporting the analysis.

### Components/Axes

The document is structured with the following key components:

* **Header:** "Prompt for Response Generation"

* **Introduction:** Explains the task of examining solutions and determining correctness.

* **Definitions:** Defines "Solution Correctness", "First Error Step", and "Error Reason".

* **Input Section:** Contains placeholders for the question, options, and the model's solution steps, denoted by `{sol['Question']}`, `{sol['Options']}`, and `{sol['Model_Solution_Steps']}` respectively.

* **Hint Placeholder:** `{hint_sent}`, a placeholder whose content is not shown in the image.

* **Output Format:** Specifies the required structure for the solution analysis, including "Solution Analysis", "Solution Correctness", "First Error Step", and "Error Reason".

### Content Details

The document's content can be transcribed as follows:

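"Following is a question/solution pair in subject {sol['Subject']}. Your task is to examine the solutions step by step and determine the solution correctness.

If the solution is incorrect, please further find out the first error step and explain the error reason.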

Following are the specific definitions of the fields:

Solution Correctness: Does the solution correctly answer the question with justifiable reasoning and selected the corrected options?

First Error Step: For every step it can either be correct, neutral or incorrect. Correct steps are those that possess sound logic and correct computation and lead to the correct answer.

Neutral steps are those step that are explanatory, exploring or focusing on background illustration. They have no obvious mistakes but is not very clear if they lead to the correct answer.

Incorrect steps are those with factual errors, computation errors or understanding/logic errors. These steps might or might not detour the reasoning path to incorrect answers.

We need to single out the first step that comes with above errors or lead to incorrect answers.

Error Reason: For the identified first error step, please specify the errors made in this step and suggest a rectified reasoning step instead.

{k_shot_demo}

Below is the question and solution for you to solve:

Question: {sol['Question']}

Options: {sol['Options']}

Step by Step Solution: {sol['Model_Solution_Steps']}

{hint_sent}

Please follow the desired response format:

Solution Analysis: [Give a step by step analysis on the solution correctness here]

Solution Correctness: [Input 'correct'/'incorrect' here to indicate the overall correctness of the solution]

First Error Step: [Input 'Step x' here to indicate the first error step here. Input 'N/A' if the solution is correct.]

Error Reason: [Input the error reason and the rectified reasoning of the first error step here. Input 'N/A' if the solution is correct.]

Please follow this format without any additional introductory or concluding statements."

### Key Observations

The document is a procedural guide. It emphasizes a step-by-step evaluation process and provides clear definitions for assessing the quality of each step. The use of placeholders suggests it's intended to be used with a specific question/solution dataset. The strict formatting requirements indicate a need for consistent and structured feedback.

### Interpretation

This document outlines a rigorous methodology for evaluating the correctness of solutions, likely within an automated or semi-automated system. The detailed definitions and structured output format suggest a focus on identifying specific errors and providing constructive feedback. The placeholders for the question, options, and solution steps indicate that this document is part of a larger system designed to assess and improve the performance of a model or algorithm in answering questions. The emphasis on "justifiable reasoning" suggests that the evaluation process is not solely based on the correctness of the answer, but also on the quality of the explanation provided. The document is a set of instructions, not a presentation of data or findings.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Document: Prompt for Response Generation

### Overview

The image displays a structured text document titled "Prompt for Response Generation." It is a template or instruction set designed to guide an AI or evaluator in analyzing a provided question/solution pair. The document defines specific fields for evaluation, provides a template with placeholders for dynamic content, and mandates a strict output format. The text is entirely in English.

### Content Structure

The document is organized into several distinct sections within a bordered frame with a dark blue header.

1. **Header:** "Prompt for Response Generation" in white text on a dark blue background.

2. **Primary Task Description:** A paragraph outlining the core task: to examine a question/solution pair, determine solution correctness, and identify the first error step if the solution is incorrect.

3. **Field Definitions:** A detailed section defining the evaluation criteria:

   * **Solution Correctness:** Asks whether the solution correctly answers the question with justifiable reasoning and selects the correct options.

   * **First Error Step:** Defines three categories for each step:

     * *Correct:* Sound logic, correct computation, leads to correct answer.

     * *Neutral:* Explanatory or background-focused, no obvious mistakes, but unclear if it leads to the correct answer.

     * *Incorrect:* Contains factual, computational, or logic errors that may or may not derail the reasoning.

   * **Error Reason:** Requires specifying the errors in the identified first error step and suggesting a rectified reasoning step.

4. **Template Section with Placeholders:** This section contains the dynamic parts of the prompt, marked by curly braces `{}`.

   * `{k_shot_demo}`: A placeholder, likely for inserting few-shot demonstration examples.

   * "Below is the question and solution for you to solve:"

   * `Question: {sol['Question']}`

   * `Options: {sol['Options']}`

   * `Step by Step Solution: {sol['Model_Solution_Steps']}`

   * `{hint_sent}`: Another placeholder, likely for optional hints.

5. **Mandated Response Format:** A final section instructing the responder to follow a specific format without any additional introductory or concluding statements. The required format is:

   * `Solution Analysis: [Give a step by step analysis on the solution correctness here]`

   * `Solution Correctness: [Input 'correct'/'incorrect' here to indicate the overall correctness of the solution]`

   * `First Error Step: [Input 'Step x' here to indicate the first error step here. Input 'N/A' if the solution is correct.]`

   * `Error Reason: [Input the error reason and the rectified reasoning of the first error step here. Input 'N/A' if the solution is correct.]`

### Detailed Analysis

The document is a meta-prompt—a prompt designed to generate or structure another AI's response. Its primary function is to enforce a rigorous, step-by-step evaluation of a solution's logical validity.

* **Key Definitions:** The definitions for "Correct," "Neutral," and "Incorrect" steps are precise. A "Neutral" step is particularly interesting; it is not erroneous but is flagged for potentially being non-contributory or insufficiently directed toward the answer.

* **Placeholders:** The use of `{sol['Subject']}`, `{sol['Question']}`, etc., indicates this is a template where specific problem data is inserted programmatically. `{k_shot_demo}` suggests the prompt can be augmented with examples to guide the evaluator's style.

* **Strict Format:** The final instruction ("Please follow this format without any additional introductory or concluding statements") is absolute, aiming to produce standardized, machine-parsable output.
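The three step categories and the "first error step" rule can be illustrated with a short sketch. It assumes each step has already been labeled, which the prompt itself leaves to the evaluator; the names `StepLabel` and `first_error_step` are hypothetical and chosen only for this example.

```python
from enum import Enum
from typing import List, Optional


class StepLabel(Enum):
    """The three per-step categories defined in the prompt."""
    CORRECT = "correct"
    NEUTRAL = "neutral"
    INCORRECT = "incorrect"


def first_error_step(labels: List[StepLabel]) -> Optional[int]:
    """Return the 1-based number of the first incorrect step, or None."""
    for number, label in enumerate(labels, start=1):
        # Neutral steps are not errors, so only INCORRECT stops the scan.
        if label is StepLabel.INCORRECT:
            return number
    return None


# Made-up labels for a four-step solution.
labels = [
    StepLabel.NEUTRAL,
    StepLabel.CORRECT,
    StepLabel.INCORRECT,
    StepLabel.INCORRECT,
]
step = first_error_step(labels)
print("N/A" if step is None else f"Step {step}")
```

Only the earliest incorrect step is reported, so later errors that follow from it are not listed separately.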

### Key Observations

1. **Focus on Process over Outcome:** The evaluation is heavily weighted on the *reasoning process* ("step by step analysis") rather than just the final answer. Identifying the "First Error Step" is a core requirement.

2. **Granular Error Classification:** The system distinguishes between steps that are outright wrong and steps that are merely unhelpful or neutral, allowing for nuanced feedback.

3. **Template-Driven Design:** The document is clearly a reusable component in a larger pipeline, likely for automated grading, model training, or generating critique datasets.

4. **Visual Layout:** The text is presented in a monospaced font (like Courier) within a light gray box, mimicking a code block or terminal output, which reinforces its technical, programmatic nature.

### Interpretation

This document is a **prompt engineering template for automated solution evaluation**. Its purpose is to standardize the critique of step-by-step problem-solving, likely in an educational or AI training context.

* **What it demonstrates:** It reveals a sophisticated approach to assessment that values logical soundness and process transparency. By requiring the identification of the *first* error, it encourages pinpointing the root cause of failure rather than just listing all mistakes.

* **How elements relate:** The field definitions directly inform the mandated response format. The placeholders connect this static instruction set to dynamic problem data. The strict output format ensures consistency for downstream processing.

* **Notable implications:** The inclusion of a "Neutral" step category is insightful. It acknowledges that not all explanatory text is erroneous, but some may be inefficient or off-topic—a subtle distinction important for training models to generate concise, relevant reasoning. The entire structure is designed to minimize ambiguity and subjective judgment in the evaluation process.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Prompt for Response Generation

### Overview

The image displays a structured prompt template for evaluating the correctness of a solution to a question/solution pair. It outlines criteria for analyzing solution steps, identifying errors, and providing rectified reasoning. The template includes placeholders for dynamic content (e.g., `{sol['Subject']}`) and specifies a strict response format.

### Components/Axes

1. **Header**:

   - Title: "Prompt for Response Generation" (dark blue background).

2. **Main Content**:

   - **Task Description**:

     - Examine solutions step-by-step to determine correctness.

     - If incorrect, identify the first error step and explain the error.

   - **Definitions**:

     - **Solution Correctness**: Whether the solution correctly answers the question with justifiable reasoning and selects the correct options.

     - **First Error Step**: Classifies steps as correct (sound logic and correct computation), neutral (explanatory, with no obvious mistakes, but unclear whether they lead to the correct answer), or incorrect (factual, computation, or logic errors).

     - **Error Reason**: Requires specifying errors in the first erroneous step and suggesting a rectified reasoning step.

   - **Example Placeholders**:

     - `{k_shot_demo}`: A placeholder, likely for few-shot demonstration examples; its content is not shown in the image.

     - `{hint_sent}`: A placeholder, likely for an optional hint; its content is not shown in the image.

3. **Response Format**:

   - Structured output sections:

     - **Solution Analysis**: Step-by-step analysis of correctness.

     - **Solution Correctness**: Input `'correct'`/`'incorrect'`.

     - **First Error Step**: Input `'Step x'` or `'N/A'` if correct.

     - **Error Reason**: Input error reason and rectified reasoning or `'N/A'` if correct.

### Detailed Analysis

- **Textual Structure**:

  - The prompt moves through a task description, field definitions, placeholders for the question data, and a response format.

  - Placeholders (e.g., `{sol['Question']}`, `{sol['Options']}`) indicate dynamic content insertion points.

- **Key Instructions**:

  - The evaluator must single out the *first* step that contains an error or leads to an incorrect answer.

  - Neutral steps are explanatory and have no obvious mistakes, but it is unclear whether they lead to the correct answer.

  - Incorrect steps involve factual errors, computation mistakes, or flawed logic.

### Key Observations

- The template enforces a strict format for responses, requiring explicit inputs for correctness, error steps, and reasoning.

- Placeholders suggest integration with a system that dynamically populates questions, solutions, and hints.

- The definitions differentiate between neutral and incorrect steps, highlighting the importance of logical rigor.
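The strict format implies a few consistency rules that a downstream script could check. The sketch below is one possible reading, not something stated in the image: it assumes the three verdict fields have already been extracted as strings, and the function name `validate_verdict` is hypothetical.

```python
import re
from typing import List


def validate_verdict(correctness: str, first_error_step: str,
                     error_reason: str) -> List[str]:
    """Return a list of format problems; an empty list means consistent."""
    problems = []
    if correctness not in ("correct", "incorrect"):
        problems.append("Solution Correctness must be 'correct' or 'incorrect'")
    if correctness == "correct":
        # A correct solution has no error step and no error reason.
        if first_error_step != "N/A":
            problems.append("First Error Step must be 'N/A' when correct")
        if error_reason != "N/A":
            problems.append("Error Reason must be 'N/A' when correct")
    elif correctness == "incorrect":
        # An incorrect solution must name a step and explain the error.
        if not re.fullmatch(r"Step \d+", first_error_step):
            problems.append("First Error Step must look like 'Step x'")
        if error_reason in ("", "N/A"):
            problems.append("Error Reason must be given when incorrect")
    return problems


# Made-up verdicts, used only to exercise the checks.
print(validate_verdict("correct", "N/A", "N/A"))
print(validate_verdict("correct", "Step 2", "N/A"))
```

Replies that fail these checks could be retried or set aside rather than silently scored.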

### Interpretation

This prompt is designed for a technical evaluation system, likely used in AI or automated grading contexts. It ensures systematic analysis of solutions by:

1. **Classifying Steps**: Distinguishing between correct, neutral, and incorrect reasoning to pinpoint errors.

2. **Rectifying Errors**: Providing a framework to suggest corrected reasoning, improving model outputs iteratively.

3. **Dynamic Adaptability**: Placeholders allow customization for different subjects or question types.

The structure prioritizes precision in error detection, which is critical for training or validating AI models in educational or problem-solving domains. The absence of visual elements (e.g., charts) indicates the focus is purely on textual analysis and structured output generation.
