## Text Block: Prompt for Response Generation

### Overview

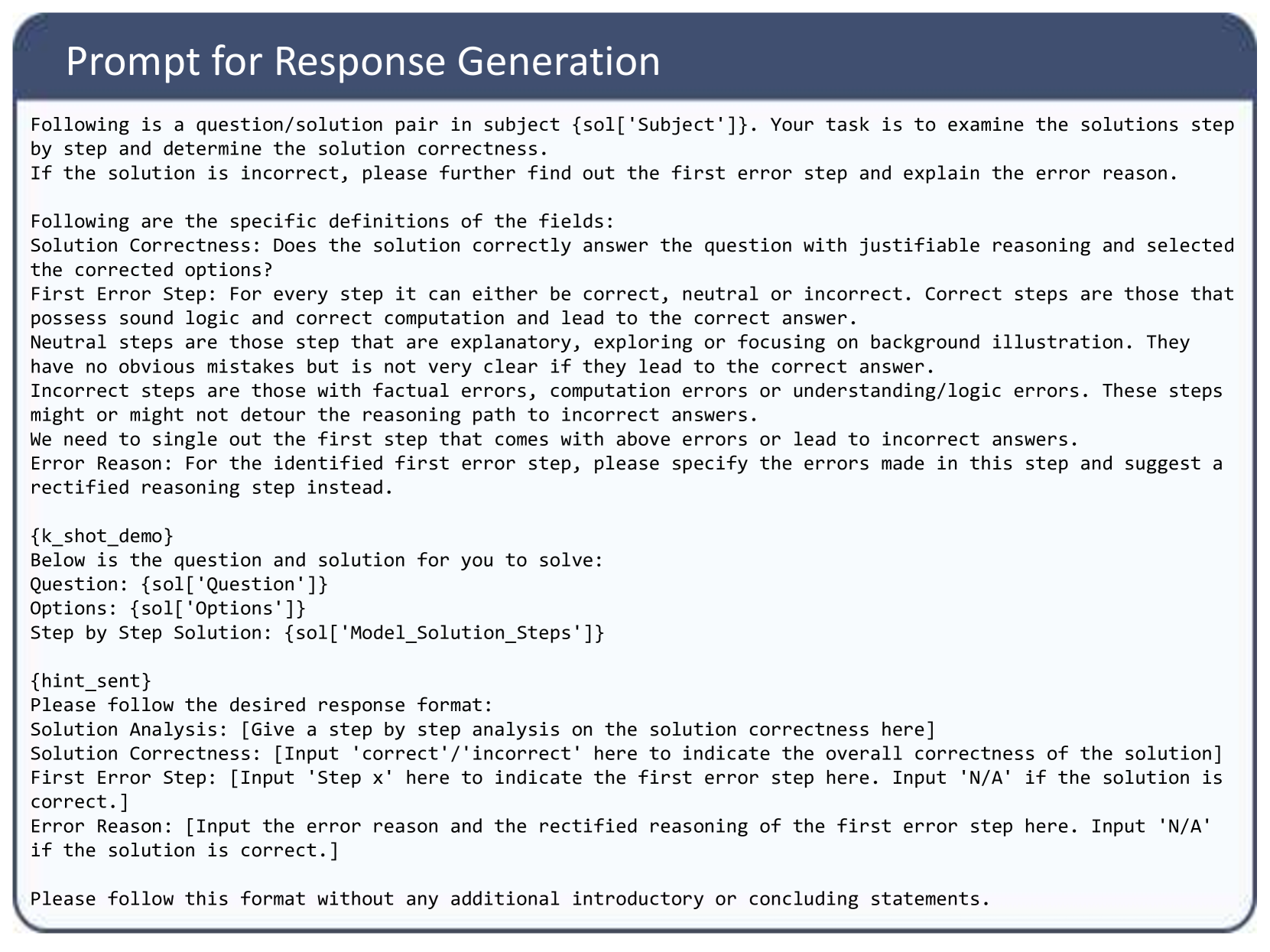

The image displays a screenshot of a structured text prompt designed for an Artificial Intelligence system, specifically a Large Language Model (LLM). The prompt instructs the AI to act as an evaluator. Its task is to analyze a provided multiple-choice question and a step-by-step solution, determine the overall correctness, identify the specific step where the first error occurs (if any), and provide a reason and correction for that error.

### Components and Layout

The image is composed of two main visual regions:

* **Header (Top):** A dark blue rectangular banner spanning the width of the image. It contains the title text aligned to the left in a white, sans-serif font.

* **Main Body (Center to Bottom):** A light gray/white rectangular area enclosed by a thin dark border. It contains the main instructional text in a black, monospaced or simple sans-serif font.

The text itself is structured into four logical sections:

1. **Task Introduction:** Defines the role and primary objective.

2. **Definitions:** Provides strict criteria for evaluating steps as "correct," "neutral," or "incorrect."

3. **Data Injection Points:** Placeholders where the specific question, options, and solution will be inserted programmatically.

4. **Output Formatting:** Strict instructions on how the AI must format its response.

### Content Details (Full Transcription)

Below is the exact transcription of the text contained within the image. Note the presence of programmatic variables enclosed in curly braces `{}`.

**[Header Text]**

Prompt for Response Generation

**[Main Body Text]**

Following is a question/solution pair in subject {sol['Subject']}. Your task is to examine the solutions step by step and determine the solution correctness.

If the solution is incorrect, please further find out the first error step and explain the error reason.

Following are the specific definitions of the fields:

Solution Correctness: Does the solution correctly answer the question with justifiable reasoning and selected the corrected options?

First Error Step: For every step it can either be correct, neutral or incorrect. Correct steps are those that possess sound logic and correct computation and lead to the correct answer.

Neutral steps are those step that are explanatory, exploring or focusing on background illustration. They have no obvious mistakes but is not very clear if they lead to the correct answer.

Incorrect steps are those with factual errors, computation errors or understanding/logic errors. These steps might or might not detour the reasoning path to incorrect answers.

We need to single out the first step that comes with above errors or lead to incorrect answers.

Error Reason: For the identified first error step, please specify the errors made in this step and suggest a rectified reasoning step instead.

{k_shot_demo}

Below is the question and solution for you to solve:

Question: {sol['Question']}

Options: {sol['Options']}

Step by Step Solution: {sol['Model_Solution_Steps']}

{hint_sent}

Please follow the desired response format:

Solution Analysis: [Give a step by step analysis on the solution correctness here]

Solution Correctness: [Input 'correct'/'incorrect' here to indicate the overall correctness of the solution]

First Error Step: [Input 'Step x' here to indicate the first error step here. Input 'N/A' if the solution is correct.]

Error Reason: [Input the error reason and the rectified reasoning of the first error step here. Input 'N/A' if the solution is correct.]

Please follow this format without any additional introductory or concluding statements.

### Key Observations

* **Programmatic Placeholders:** The text utilizes Python-style dictionary formatting (e.g., `{sol['Subject']}`, `{sol['Question']}`). This indicates that this text is a template used in a software pipeline. A script will replace these variables with actual data before sending the prompt to the AI model.

* **Few-Shot Capability:** The inclusion of the `{k_shot_demo}` variable suggests the system is designed to optionally provide the AI with examples of correctly completed evaluations before asking it to perform the task, a technique known to improve accuracy.

* **Nuanced Evaluation Criteria:** The prompt does not treat reasoning as strictly binary. It introduces the concept of "Neutral steps" (explanatory or background information), which prevents the AI from falsely flagging non-mathematical/non-logical setup text as an error.

* **Strict Output Constraints:** The final sentence explicitly forbids "additional introductory or concluding statements." This is a standard prompt engineering technique to prevent LLMs from generating conversational filler (e.g., "Sure, I can help with that! Here is the analysis..."), ensuring the output can be easily parsed by a computer script.

### Interpretation

This image represents a "meta-evaluation" prompt. It is not meant for a human to read; rather, it is the instruction manual for an AI model that is being used to grade the output of *another* AI model (or potentially human-generated data).

**What the data demonstrates:**

This prompt is likely part of an automated benchmarking system or a Reinforcement Learning from Human Feedback (RLHF) pipeline. By asking an advanced model (like GPT-4) to evaluate the step-by-step reasoning of a lesser model, developers can automatically score the lesser model's performance on complex reasoning tasks (like math or logic).

**Reading between the lines:**

The structure of the requested response format is highly intentional. By forcing the AI to output the `Solution Analysis` *before* it outputs the `Solution Correctness`, the prompt employs a "Chain-of-Thought" (CoT) strategy. It forces the evaluating AI to "think out loud" and process the logic step-by-step before rendering a final verdict. If the prompt asked for `Solution Correctness` first, the AI would have to guess the answer before doing the work, leading to higher hallucination and error rates. The meticulous definition of "Neutral steps" indicates that previous iterations of this prompt likely failed because the evaluating AI was too aggressive in marking explanatory text as incorrect.