## Heatmap: Layer-wise Parameter Importance in a Neural Network

### Overview

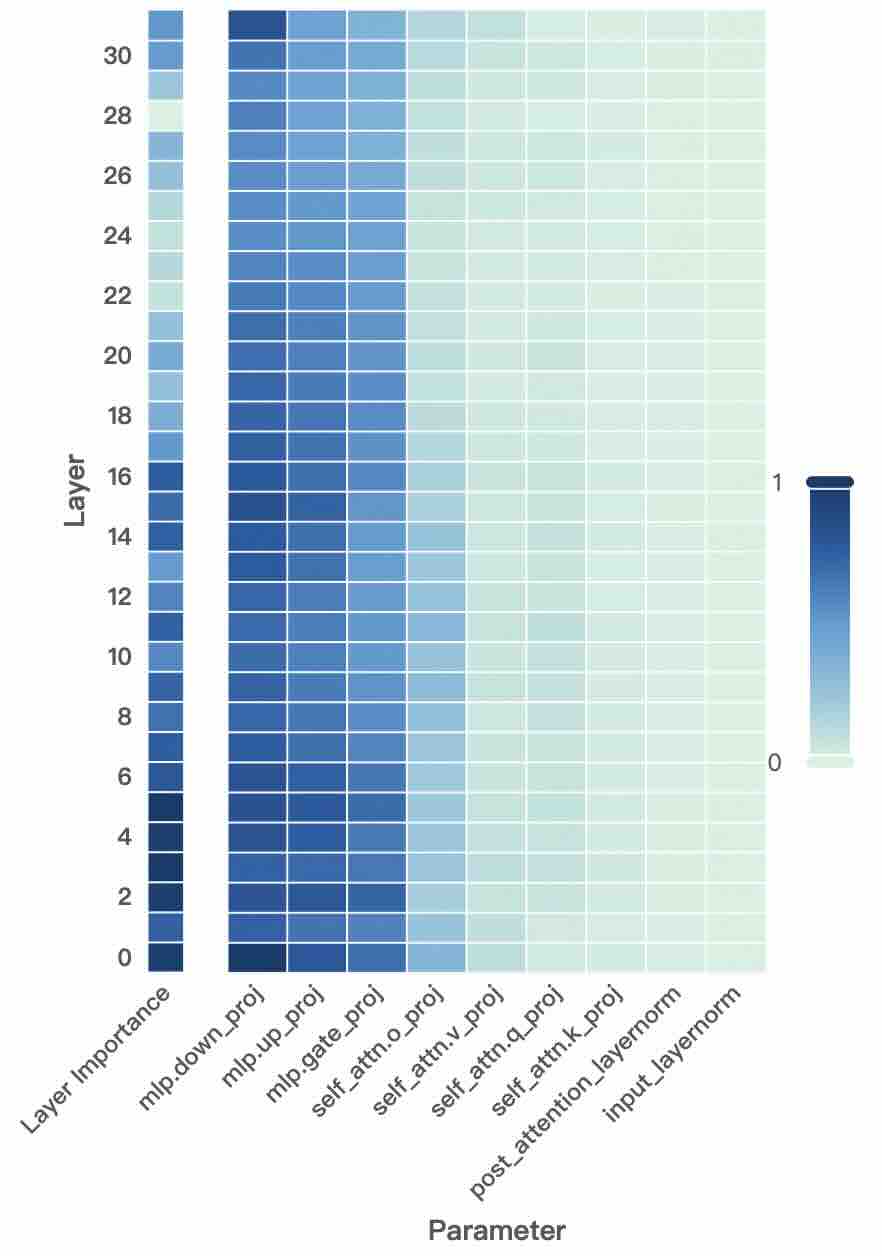

The image is a heatmap of the relative importance of different parameters across the layers of a neural network. A color gradient from light (value 0) to dark blue (value 1) encodes each importance score. The figure appears to summarize which components of the architecture matter most to the model's behavior. The scores were likely produced by an interpretability or compression technique such as gradient-based attribution or pruning sensitivity analysis, although the figure does not say which.
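The figure does not name its attribution method. As an illustration of one gradient-based option, a common first-order Taylor criterion scores a weight tensor by summing |w · ∂L/∂w| over its elements. Each term is a first-order estimate of how much the loss would change if that weight were removed. The sketch below assumes this criterion and uses a hypothetical toy linear model with squared-error loss so the gradient can be written by hand. It is not necessarily the method behind this heatmap.

```python
def taylor_importance(weights, grads):
    """First-order Taylor importance: sum over elements of |w * dL/dw|."""
    return sum(
        abs(w * g)
        for row_w, row_g in zip(weights, grads)
        for w, g in zip(row_w, row_g)
    )


def linear_grad(weights, x, target):
    """Gradient of L = 0.5 * ||W x - target||^2 w.r.t. W (hypothetical toy model)."""
    y = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    residual = [yi - ti for yi, ti in zip(y, target)]
    # dL/dW[i][j] = residual[i] * x[j]
    return [[r * xj for xj in x] for r in residual]


W = [[0.5, -1.0], [2.0, 0.0]]
x = [1.0, 2.0]
target = [0.0, 1.0]
G = linear_grad(W, x, target)
print(taylor_importance(W, G))  # 5.75
```

In a real model, the same score would be computed per named weight matrix (for example each `mlp.up_proj`) and per layer, then rescaled for plotting.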

### Components/Axes

* **Y-Axis (Vertical):** Labeled **"Layer"**. It represents the depth of the network, with layers numbered from **0** (bottom) to **30** (top). The axis has tick marks at every even number (0, 2, 4, ..., 30).

* **X-Axis (Horizontal):** Labeled **"Parameter"**. It lists specific components or weight matrices within each layer. The categories, from left to right, are:

    1. `Layer Importance` (apparently an aggregate or summary column for the entire layer)
    2. `mlp.down_proj`
    3. `mlp.up_proj`
    4. `mlp.gate_proj`
    5. `self_attn.o_proj`
    6. `self_attn.v_proj`
    7. `self_attn.q_proj`
    8. `self_attn.k_proj`
    9. `post_attention_layernorm`
    10. `input_layernorm`

* **Legend/Color Scale:** Located on the right side of the chart. It is a vertical bar showing a gradient from **light greenish-white (labeled "0")** at the bottom to **dark blue (labeled "1")** at the top. This scale maps color intensity to an importance value between 0 and 1.
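The legend's 0 to 1 range suggests that raw scores were rescaled before plotting, but the figure does not say how. The sketch below assumes global min-max normalization over the whole layer × parameter grid. Per-column normalization would be an equally plausible choice, and it would change how the columns compare to one another.

```python
def min_max_normalize(grid):
    """Rescale every cell of a 2-D grid to [0, 1] using the global min and max."""
    flat = [v for row in grid for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:  # constant grid: avoid division by zero
        return [[0.0 for _ in row] for row in grid]
    return [[(v - lo) / (hi - lo) for v in row] for row in grid]


# Hypothetical raw scores: rows are layers, columns are parameter types.
raw = [[4.0, 2.0], [1.0, 0.0]]
print(min_max_normalize(raw))  # [[1.0, 0.5], [0.25, 0.0]]
```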

### Detailed Analysis

The heatmap displays a grid where each cell's color corresponds to the importance value of a specific parameter at a specific layer.

**Per-column trends:**

* **`Layer Importance` Column:** This column shows a clear gradient. Importance is highest (darkest blue, ~0.9-1.0) in the lowest layers (0-6). It gradually becomes lighter (decreasing to ~0.5-0.7) in the middle layers (8-20), and is lightest (lowest importance, ~0.2-0.4) in the highest layers (22-30).

* **MLP Parameters (`mlp.down_proj`, `mlp.up_proj`, `mlp.gate_proj`):** These three columns follow nearly identical patterns. They are consistently the **darkest blue (highest importance, ~0.8-1.0)** across almost all layers, from 0 to 30. They lighten very slightly in the topmost layers (28-30) but remain significantly darker than most other parameters.

* **Self-Attention Output & Value Projections (`self_attn.o_proj`, `self_attn.v_proj`):** These columns show moderate importance. They are a medium blue (~0.5-0.7) in the lower to middle layers (0-18) and become progressively lighter (~0.2-0.4) in the higher layers (20-30).

* **Self-Attention Query & Key Projections (`self_attn.q_proj`, `self_attn.k_proj`):** These are lighter than `o_proj` and `v_proj`. They start as a light-medium blue (~0.4-0.6) in the lower layers and fade to very light (~0.1-0.3) in the higher layers.

* **Layer Normalization Parameters (`post_attention_layernorm`, `input_layernorm`):** These two rightmost columns are the **lightest overall (lowest importance, ~0.0-0.2)**. They show a very faint greenish-white color across all layers, with `input_layernorm` being marginally lighter than `post_attention_layernorm`.
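The trends above are read by eye. If the underlying scores were available, one way to check them would be to fit a least-squares slope of importance against layer index for each column. A negative slope means importance falls with depth. The values below are hypothetical placeholders and were not read from the figure.

```python
def slope(ys):
    """Ordinary least-squares slope of ys against x = 0, 1, ..., len(ys) - 1."""
    n = len(ys)
    x_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(ys))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


# Hypothetical per-layer scores (layer 0 first), for illustration only.
columns = {
    "mlp.down_proj": [0.95, 0.95, 0.94, 0.93],
    "self_attn.q_proj": [0.6, 0.5, 0.3, 0.2],
}
for name, scores in columns.items():
    print(f"{name}: slope = {slope(scores):+.3f}")
```

With these placeholder numbers, the MLP column's slope is close to zero and the query column's slope is clearly negative. This mirrors the qualitative description.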

### Key Observations

1. **Dominance of MLP Layers:** The Multi-Layer Perceptron (MLP) projection matrices (`down_proj`, `up_proj`, `gate_proj`) are consistently the highest-scoring parameters, by a clear margin, at nearly every depth of the network.

2. **Layer Depth vs. Importance:** Importance generally decreases as the layer number increases (that is, deeper into the network). The trend is most pronounced in the `Layer Importance` summary and the attention projections. The MLP columns are nearly flat.

3. **Attention Component Hierarchy:** Within the self-attention mechanism, a clear hierarchy exists: `o_proj` and `v_proj` are more important than `q_proj` and `k_proj`.

4. **Minimal Role of LayerNorm:** The layer normalization parameters (`input_layernorm` and `post_attention_layernorm`) have consistently negligible importance scores according to this metric.
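The hierarchy in observations 1 and 3 amounts to ordering the columns by their average score across layers. The sketch below uses hypothetical placeholder scores that only loosely follow the ranges described above. They are not values extracted from the image.

```python
from statistics import mean

# Hypothetical per-layer scores for a few columns (layer 0 first); placeholders only.
scores = {
    "mlp.up_proj": [0.95, 0.9, 0.85],
    "self_attn.v_proj": [0.65, 0.55, 0.3],
    "self_attn.k_proj": [0.5, 0.35, 0.15],
    "input_layernorm": [0.1, 0.05, 0.05],
}

# Sort column names by their mean score across layers, highest first.
ranking = sorted(scores, key=lambda name: mean(scores[name]), reverse=True)
for name in ranking:
    print(f"{name:<18} {mean(scores[name]):.2f}")
```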

### Interpretation

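This heatmap offers a view into the functional architecture of the analyzed model. The model is likely a Transformer-based LLM, since the parameter names match the Llama-style naming (`q_proj`, `k_proj`, `v_proj`, `o_proj`, `gate_proj`, `up_proj`, `down_proj`, `input_layernorm`, `post_attention_layernorm`) used by several Hugging Face Transformers decoder implementations. The data suggests: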

* **Core Computational Engine:** The MLP blocks appear to be the main drivers of the model's representational power or task-specific processing, because their parameters score highly at every depth. This is consistent with research suggesting that MLP layers store factual knowledge and perform much of the model's nonlinear transformation.

* **Feature Processing in Early Layers:** The higher aggregate importance of the lower layers suggests that early processing of the input is critical to the model's output. A plausible reason is that changes there propagate through every subsequent layer.

* **Attention's Role:** The attention mechanism plays a differentiated role. The value (`v_proj`) and output (`o_proj`) projections score higher than the query (`q_proj`) and key (`k_proj`) projections. The value and output projections determine what content is carried from attended tokens and how the heads' outputs are written back to the residual stream. The query and key projections determine the attention pattern. Under this importance metric, *what* information is moved appears to matter more than the precise matching of queries to keys.

* **Normalization as a Utility:** The very low importance of the LayerNorm parameters suggests that they act as routine utility components. They are essential for stability, but according to this analysis they do not carry significant "information" or "importance" for the model's final output.
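The distinction between query/key projections and value/output projections follows from how scaled dot-product attention is computed. Queries and keys only enter the softmax weights. Values supply the content that is actually mixed, and the output projection maps the result back to the model dimension. The sketch below is a minimal single-head version in plain Python with tiny hand-picked matrices. The names `W_q`, `W_k`, `W_v`, and `W_o` are illustrative stand-ins for the corresponding projections. Real models use many heads, causal masking, and positional encodings, all of which are omitted here.

```python
import math


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(tokens, W_q, W_k, W_v, W_o):
    """Single-head scaled dot-product attention over a short sequence (no mask)."""
    q = [matvec(W_q, t) for t in tokens]
    k = [matvec(W_k, t) for t in tokens]
    v = [matvec(W_v, t) for t in tokens]
    d = len(q[0])
    outputs = []
    for qi in q:
        # Queries and keys only decide the mixing weights...
        weights = softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k])
        # ...while values supply the content that is actually mixed.
        mixed = [sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))]
        outputs.append(matvec(W_o, mixed))  # output projection back to model dimension
    return outputs


I = [[1.0, 0.0], [0.0, 1.0]]
Z = [[0.0, 0.0], [0.0, 0.0]]
tokens = [[1.0, 0.0], [0.0, 1.0]]
# Zero query/key projections: every token attends uniformly, yet content still flows via W_v.
print(attend(tokens, Z, Z, I, I))  # [[0.5, 0.5], [0.5, 0.5]]
```

The toy call shows the structural asymmetry. Zeroing the query and key projections still lets content through (uniformly mixed), whereas zeroing the value projection removes the content entirely. This is a property of the mechanism. It is not evidence for why the scores in this particular figure differ.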

**Notable point:** The `Layer Importance` column is an aggregate. Its gradient from dark to light is consistent with the overall trend that lower layers are more "important" than higher layers under this metric. This is a useful signal for model compression or pruning strategies, because pruning higher layers may be less damaging.
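If the `Layer Importance` scores were used for layer pruning, the simplest policy would be to drop the k layers with the lowest scores. The sketch below uses a hypothetical score list and a hypothetical `layers_to_prune` helper. Low importance under one metric does not guarantee a small accuracy loss, so a pruned model would still need to be re-evaluated.

```python
def layers_to_prune(layer_scores, k):
    """Return the indices of the k lowest-importance layers, in ascending index order."""
    order = sorted(range(len(layer_scores)), key=lambda i: layer_scores[i])
    return sorted(order[:k])


# Hypothetical aggregate scores for an 8-layer model (layer 0 first).
scores = [0.98, 0.95, 0.9, 0.7, 0.6, 0.4, 0.3, 0.25]
print(layers_to_prune(scores, 3))  # [5, 6, 7]
```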