
## Bar Chart: Llama3-8B-Instruct Performance

### Overview

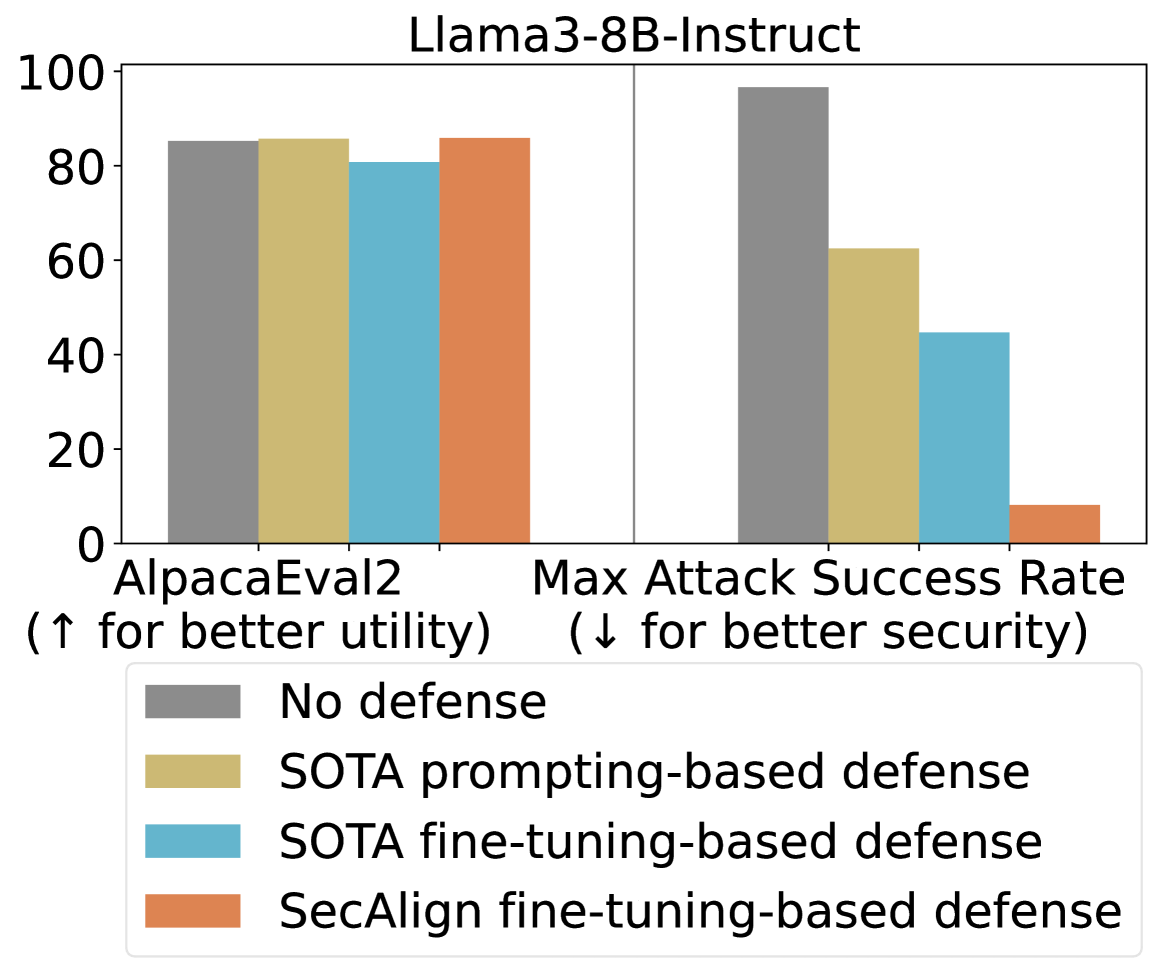

This bar chart compares the performance of the Llama3-8B-Instruct model under different defense strategies against adversarial attacks on two metrics: AlpacaEval2 and Max Attack Success Rate. Bars are grouped by metric, with one bar per defense strategy. Higher values are better for AlpacaEval2 (utility), and lower values are better for Max Attack Success Rate (security).

### Components/Axes

* **Title:** Llama3-8B-Instruct

* **X-axis:** Metric: AlpacaEval2 (↑, higher means better utility) and Max Attack Success Rate (↓, lower means better security)

* **Y-axis:** Score (0 to 100)

* **Legend:** Located at the bottom-center of the chart.

  * No defense (Gray)

  * SOTA prompting-based defense (Yellow)

  * SOTA fine-tuning-based defense (Light Blue)

  * SecAlign fine-tuning-based defense (Orange)

### Detailed Analysis

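The chart consists of two groups of four bars each, representing performance on AlpacaEval2 and Max Attack Success Rate, respectively. All values below are visual estimates read from the chart, each with a reading uncertainty of about ±2.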

**AlpacaEval2 (Utility):**

* **No defense:** approximately 82 (±2).

* **SOTA prompting-based defense:** approximately 80 (±2).

* **SOTA fine-tuning-based defense:** approximately 84 (±2).

* **SecAlign fine-tuning-based defense:** approximately 82 (±2).

**Max Attack Success Rate (Security):**

* **No defense:** approximately 98 (±2).

* **SOTA prompting-based defense:** approximately 60 (±2).

* **SOTA fine-tuning-based defense:** approximately 45 (±2).

* **SecAlign fine-tuning-based defense:** approximately 55 (±2).
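The readings above can be encoded as a small table to check which per-metric leads survive the reading error. This Python sketch uses the approximate values listed above. The dictionary keys, the function name `best_with_margin`, and the rule that two bars are distinguishable only when they differ by more than 4 points (twice the ±2 reading error) are illustrative assumptions, not part of the original analysis.

```python
# Approximate bar heights read off the chart (each roughly ±2). These are
# visual estimates, not values reported by the model's authors.
ESTIMATES = {
    "No defense": {"AlpacaEval2": 82, "MaxASR": 98},
    "SOTA prompting-based": {"AlpacaEval2": 80, "MaxASR": 60},
    "SOTA fine-tuning-based": {"AlpacaEval2": 84, "MaxASR": 45},
    "SecAlign fine-tuning-based": {"AlpacaEval2": 82, "MaxASR": 55},
}
READ_UNCERTAINTY = 2  # assumed half-width of the reading error for each bar


def best_with_margin(metric, higher_is_better):
    """Return (best defense, gap to runner-up, whether the gap exceeds combined reading error)."""
    ranked = sorted(
        ESTIMATES.items(),
        key=lambda item: item[1][metric],
        reverse=higher_is_better,
    )
    (best_name, best), (_, runner_up) = ranked[0], ranked[1]
    gap = abs(best[metric] - runner_up[metric])
    # Two bars read with ±u each can differ by up to 2u from reading error alone.
    return best_name, gap, gap > 2 * READ_UNCERTAINTY


for metric, higher in [("AlpacaEval2", True), ("MaxASR", False)]:
    name, gap, clear = best_with_margin(metric, higher)
    print(f"{metric}: best={name}, gap={gap}, beyond reading error={clear}")
```

Under these assumptions, the SOTA fine-tuning-based defense leads on both metrics, but its AlpacaEval2 lead (about 2 points) is within reading error, while its Max Attack Success Rate lead (about 10 points) is not.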

### Key Observations

* For AlpacaEval2, the SOTA fine-tuning-based defense shows the highest score (about 84), indicating the best utility, although all four scores lie within about 4 points of one another.

* For Max Attack Success Rate, No defense has the highest score, indicating the worst security.

* All three defenses (SOTA prompting-based, SOTA fine-tuning-based, and SecAlign fine-tuning-based) substantially reduce the Max Attack Success Rate compared to no defense, from about 98 to between about 45 and 60.

* SOTA fine-tuning-based defense provides the best security (lowest attack success rate).

* The SOTA prompting-based defense and the SecAlign fine-tuning-based defense have similar Max Attack Success Rates (about 60 and 55, respectively).
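The size of each defense's security improvement can be quantified as the drop in Max Attack Success Rate relative to no defense. The following Python sketch is a minimal illustration using the approximate chart readings; the names `MAX_ASR` and `asr_reductions` are hypothetical, and the results inherit the ±2 reading error of the inputs.

```python
# Approximate Max Attack Success Rate values read off the chart (visual estimates only).
MAX_ASR = {
    "No defense": 98,
    "SOTA prompting-based": 60,
    "SOTA fine-tuning-based": 45,
    "SecAlign fine-tuning-based": 55,
}


def asr_reductions(baseline="No defense"):
    """Absolute (points) and relative (fraction of baseline) reduction of each defense."""
    base = MAX_ASR[baseline]
    return {
        name: (base - asr, round((base - asr) / base, 3))
        for name, asr in MAX_ASR.items()
        if name != baseline
    }


for name, (points, fraction) in asr_reductions().items():
    print(f"{name}: -{points} points ({fraction:.1%} relative)")
```

On these estimates, the SOTA fine-tuning-based defense cuts the maximum attack success rate by about 54% relative to no defense, compared with about 44% for SecAlign and about 39% for the prompting-based defense.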

### Interpretation

The data suggest that applying a defense, particularly a fine-tuning-based one, improves the security of the Llama3-8B-Instruct model against adversarial attacks. The SOTA fine-tuning-based defense achieves both the highest AlpacaEval2 score and the largest reduction in attack success rate. The chart therefore does not show a consistent trade-off between utility and security: only the SOTA prompting-based defense scores slightly below no defense on AlpacaEval2 (about 80 versus 82), while the fine-tuning-based defenses match or exceed the undefended model, and all AlpacaEval2 scores fall within about 4 points of one another. The SOTA prompting-based and SecAlign fine-tuning-based defenses reach comparable attack success rates (about 60 and 55), so both are viable ways to improve security, though neither is as effective as the SOTA fine-tuning-based defense. The near-total attack success rate without any defense (about 98) highlights how vulnerable the undefended model is. Overall, the chart underscores the importance of evaluating both utility and security when deploying large language models in real-world applications.
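Whether the chart shows a utility-security trade-off can be checked with a Pareto comparison: a trade-off exists among configurations only if no single configuration is at least as good on both metrics. The following Python sketch is an assumption-laden check on the approximate readings from the Detailed Analysis; the names `POINTS`, `dominates`, and `pareto_front` are hypothetical, and the check ignores the ±2 reading error.

```python
# (AlpacaEval2, Max Attack Success Rate) pairs read off the chart; approximate estimates.
POINTS = {
    "No defense": (82, 98),
    "SOTA prompting-based": (80, 60),
    "SOTA fine-tuning-based": (84, 45),
    "SecAlign fine-tuning-based": (82, 55),
}


def dominates(a, b):
    """True if a is at least as good as b on both metrics and strictly better on one.

    Index 0 (utility) is higher-is-better; index 1 (attack success rate) is lower-is-better.
    """
    at_least_as_good = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return at_least_as_good and strictly_better


def pareto_front(points):
    """Sorted names of configurations that no other configuration dominates."""
    return sorted(
        name
        for name, p in points.items()
        if not any(dominates(q, p) for other, q in points.items() if other != name)
    )


print(pareto_front(POINTS))
```

On these estimates only the SOTA fine-tuning-based defense lies on the Pareto front, because it matches or beats every other configuration on both metrics. Its utility lead is small, however, so this conclusion is sensitive to reading error.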