TECHNICAL ASSET FINGERPRINT

1a124c9333865153b7156b03

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Heatmap: F1 Score Analysis: Transformer Models

### Overview

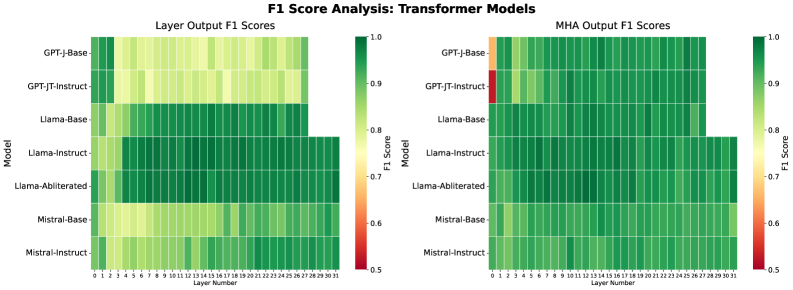

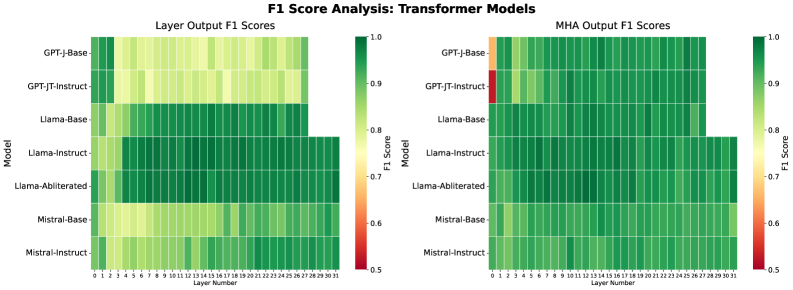

The image presents two heatmaps comparing the F1 scores of various transformer models across different layers. The left heatmap displays "Layer Output F1 Scores," while the right heatmap shows "MHA Output F1 Scores." The models compared are GPT-J-Base, GPT-JT-Instruct, Llama-Base, Llama-Instruct, Llama-Abliterated, Mistral-Base, and Mistral-Instruct. The F1 scores are represented by a color gradient, ranging from red (0.5) to green (1.0).

### Components/Axes

* **Title:** F1 Score Analysis: Transformer Models

* **Left Heatmap Title:** Layer Output F1 Scores

* **Right Heatmap Title:** MHA Output F1 Scores

* **Y-axis (Model):** GPT-J-Base, GPT-JT-Instruct, Llama-Base, Llama-Instruct, Llama-Abliterated, Mistral-Base, Mistral-Instruct

* **X-axis (Layer Number):** 0 to 31

* **Color Scale (F1 Score):** Ranges from 0.5 (red) to 1.0 (green), with intermediate values of 0.6, 0.7, 0.8, and 0.9.

### Detailed Analysis

**Left Heatmap: Layer Output F1 Scores**

* **GPT-J-Base:** Shows relatively high F1 scores (green) across all layers, with some layers showing slightly lower scores (yellowish-green).

* **GPT-JT-Instruct:** Similar to GPT-J-Base, generally high F1 scores across all layers, with some variation.

* **Llama-Base:** High F1 scores (green) up to layer 27, after which the data stops.

* **Llama-Instruct:** High F1 scores (green) up to layer 27, after which the data stops.

* **Llama-Abliterated:** Shows a mix of F1 scores, generally in the green range, but with some layers showing lower scores (yellowish-green). Data stops after layer 27.

* **Mistral-Base:** Shows a mix of F1 scores, generally in the yellowish-green range.

* **Mistral-Instruct:** Similar to Mistral-Base, with F1 scores generally in the yellowish-green range.

**Right Heatmap: MHA Output F1 Scores**

* **GPT-J-Base:** Starts with a low F1 score (orange/red) at layer 0, then quickly increases to high F1 scores (green) for the remaining layers.

* **GPT-JT-Instruct:** Starts with a very low F1 score (red) at layer 0, then quickly increases to high F1 scores (green) for the remaining layers.

* **Llama-Base:** High F1 scores (green) across all layers, data stops after layer 27.

* **Llama-Instruct:** High F1 scores (green) across all layers, data stops after layer 27.

* **Llama-Abliterated:** High F1 scores (green) across all layers, data stops after layer 27.

* **Mistral-Base:** High F1 scores (green) across all layers.

* **Mistral-Instruct:** High F1 scores (green) across all layers.

### Key Observations

* The MHA Output F1 Scores for GPT-J-Base and GPT-JT-Instruct are markedly lower at layer 0 than the corresponding Layer Output F1 Scores.

* The Llama models (Base, Instruct, and Abliterated) have data only up to layer 27.

* The Mistral models (Base and Instruct) generally have lower Layer Output F1 Scores compared to the other models.

* The MHA Output F1 Scores are generally higher and more consistent across all models and layers (excluding the initial layer for GPT-J-Base and GPT-JT-Instruct).
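To put a number on "generally higher", one could average the per-layer difference between a model's MHA row and its Layer Output row, optionally skipping layer 0 as the observation above does. The sketch below assumes each row is a plain list of F1 values indexed by layer. The function name `mean_gap` and all the values are hypothetical and were not read from the figure.

```python
from statistics import mean


def mean_gap(mha_row, layer_row, skip_layers=()):
    """Average (MHA F1 - Layer Output F1) over layers, ignoring skip_layers."""
    diffs = [
        m - l
        for layer, (m, l) in enumerate(zip(mha_row, layer_row))
        if layer not in skip_layers
    ]
    return mean(diffs)


# Hypothetical four-layer rows (not values from the heatmaps).
layer_out = [0.90, 0.88, 0.91, 0.93]
mha_out = [0.55, 0.97, 0.98, 0.99]

print(round(mean_gap(mha_out, layer_out), 4))                   # -0.0325
print(round(mean_gap(mha_out, layer_out, skip_layers={0}), 4))  # 0.0733
```

In this toy example, the sign of the average flips when layer 0 is excluded. That is why the observation carries the GPT-J layer-0 exception.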

### Interpretation

The heatmaps provide a visual comparison of the F1 scores of different transformer models at each layer. The Layer Output F1 Scores presumably measure how well the evaluated property can be recovered from each layer's full output. The MHA Output F1 Scores presumably measure the same thing using only the output of that layer's multi-head attention (MHA) sublayer.
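The two readout points are easiest to see in a block's forward pass. The sketch below is a toy illustration that rests on two assumptions. First, "MHA output" means the attention sublayer's output before it is added to the residual stream. Second, "layer output" means the residual stream after the whole block. To our understanding, Llama and Mistral use sequential pre-norm blocks, while GPT-J computes attention and the MLP in parallel from the same normalized input. The helper names and the toy sublayers are hypothetical.

```python
from typing import Callable, List, Tuple

Vec = List[float]
Fn = Callable[[Vec], Vec]


def add(*vecs: Vec) -> Vec:
    """Element-wise sum of equal-length vectors (the residual additions)."""
    return [sum(xs) for xs in zip(*vecs)]


def sequential_block(x: Vec, norm1: Fn, attn: Fn, norm2: Fn, mlp: Fn) -> Tuple[Vec, Vec]:
    """Llama/Mistral-style pre-norm block: attention, then MLP, each with a residual add."""
    mha_out = attn(norm1(x))           # assumed "MHA output" readout
    h = add(x, mha_out)                # residual stream after attention
    layer_out = add(h, mlp(norm2(h)))  # assumed "layer output" readout
    return mha_out, layer_out


def parallel_block(x: Vec, norm: Fn, attn: Fn, mlp: Fn) -> Tuple[Vec, Vec]:
    """GPT-J-style block: attention and MLP both read the same normalized input."""
    n = norm(x)
    mha_out = attn(n)
    layer_out = add(x, mha_out, mlp(n))
    return mha_out, layer_out


# Toy sublayers standing in for real normalization, attention, and MLP.
def identity(v: Vec) -> Vec:
    return v


def half(v: Vec) -> Vec:
    return [0.5 * a for a in v]


def plus_one(v: Vec) -> Vec:
    return [a + 1.0 for a in v]


x = [1.0, 2.0]
print(sequential_block(x, identity, half, identity, plus_one))  # ([0.5, 1.0], [4.0, 7.0])
print(parallel_block(x, identity, half, plus_one))              # ([0.5, 1.0], [3.5, 6.0])
```

In both block types, the layer output also contains the residual input and the MLP contribution. This is one reason the two panels can disagree.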

The low MHA Output F1 Scores for GPT-J-Base and GPT-JT-Instruct at layer 0 suggest that the first attention sublayer's output carries little of the information being measured. In the subsequent layers, the scores quickly recover to the green range.

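The generally higher MHA Output F1 Scores suggest that, for this evaluation, the attention sublayer outputs are at least as informative as the full layer outputs. The heatmaps alone do not show that multi-head attention improves the models' overall performance.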

The lower Layer Output F1 Scores for the Mistral models suggest that the measured information is less cleanly recoverable from Mistral's full layer outputs than from those of the other models, even though the Mistral MHA outputs score highly.

The fact that the Llama models only have data up to layer 27 is consistent with those models having 28 layers (indexed 0 to 27) rather than 32. This would indicate a difference in architecture or configuration compared to the other models.
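The layer-count reasoning is simple index arithmetic. A model with N layers indexed from 0 has its last layer at N - 1, so a row ending at layer 27 implies 28 layers. The minimal sketch below assumes missing cells are stored as `None` so that every row spans the shared 0 to 31 axis. `pad_row`, `layer_count`, and `last_layer` are hypothetical helpers.

```python
from typing import List, Optional

Row = List[Optional[float]]


def pad_row(scores: List[float], width: int = 32) -> Row:
    """Extend a shorter model's row with None so all rows share the 0..width-1 axis."""
    return list(scores) + [None] * (width - len(scores))


def layer_count(row: Row) -> int:
    """Number of layers that actually have a score in this row."""
    return sum(1 for v in row if v is not None)


def last_layer(row: Row) -> int:
    """Index of the last populated cell (layers are numbered from 0)."""
    return max(i for i, v in enumerate(row) if v is not None)


# Hypothetical 28-layer model: scores exist for layers 0..27 only.
row = pad_row([0.9] * 28)
print(len(row), layer_count(row), last_layer(row))  # 32 28 27
```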

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Heatmaps: F1 Score Analysis - Transformer Models

### Overview

The image presents two heatmaps comparing the F1 scores of different Transformer models across various layers. The left heatmap displays "Layer Output F1 Scores", while the right heatmap shows "MHA Output F1 Scores". Both heatmaps use a color scale to represent F1 score values, ranging from 0.5 (red) to 1.0 (green). The models being compared are GPT-J-Base, GPT-J-Instruct, Llama-Base, Llama-Instruct, Llama-Abliterated, Mistral-Base, and Mistral-Instruct. The x-axis of both heatmaps represents the layer number, ranging from 0 to 31.

### Components/Axes

* **Title:** "F1 Score Analysis: Transformer Models" (centered at the top)

* **Left Heatmap Title:** "Layer Output F1 Scores" (top-left)

* **Right Heatmap Title:** "MHA Output F1 Scores" (top-right)

* **Y-axis Label (Both Heatmaps):** "Model" (left side)
  * Categories: GPT-J-Base, GPT-J-Instruct, Llama-Base, Llama-Instruct, Llama-Abliterated, Mistral-Base, Mistral-Instruct

* **X-axis Label (Both Heatmaps):** "Layer Number" (bottom)
  * Markers: every integer from 0 to 31

* **Color Scale (Both Heatmaps):** Located to the right of each heatmap.
  * Minimum: 0.5 (Red)
  * Maximum: 1.0 (Green)
  * Intermediate values are represented by shades of yellow and light green.

### Detailed Analysis

**Left Heatmap: Layer Output F1 Scores**

* **GPT-J-Base:** Generally high F1 scores (approximately 0.95-1.0) across all layers, with a slight dip around layers 1-3 (approximately 0.9).

* **GPT-J-Instruct:** Similar to GPT-J-Base, with high F1 scores (approximately 0.95-1.0) across most layers. A more pronounced dip is observed around layers 1-5 (approximately 0.85-0.9).

* **Llama-Base:** F1 scores start lower (approximately 0.7-0.8) in the initial layers (0-5), then increase to around 0.9 by layer 10 and remain relatively stable.

* **Llama-Instruct:** Similar trend to Llama-Base, but with slightly higher initial F1 scores (approximately 0.8-0.9) and a faster increase to around 0.95 by layer 10.

* **Llama-Abliterated:** F1 scores are consistently lower than other models, ranging from approximately 0.6 to 0.8 across all layers.

* **Mistral-Base:** F1 scores start around 0.7-0.8 in the initial layers, increase to approximately 0.9 by layer 10, and remain relatively stable.

* **Mistral-Instruct:** Similar to Mistral-Base, but with slightly higher F1 scores (approximately 0.8-0.9) in the initial layers and a faster increase to around 0.95 by layer 10.

**Right Heatmap: MHA Output F1 Scores**

* **GPT-J-Base:** Very high and consistent F1 scores (approximately 0.95-1.0) across all layers.

* **GPT-J-Instruct:** Similar to GPT-J-Base, with very high and consistent F1 scores (approximately 0.95-1.0) across all layers.

* **Llama-Base:** F1 scores are generally high (approximately 0.85-0.95) across all layers, with a slight dip around layers 1-3 (approximately 0.8).

* **Llama-Instruct:** Similar to Llama-Base, with high F1 scores (approximately 0.9-1.0) across all layers.

* **Llama-Abliterated:** F1 scores are lower than other models, ranging from approximately 0.7 to 0.9 across all layers.

* **Mistral-Base:** F1 scores are generally high (approximately 0.85-0.95) across all layers.

* **Mistral-Instruct:** Similar to Mistral-Base, with high F1 scores (approximately 0.9-1.0) across all layers.

### Key Observations

* GPT-J models (Base and Instruct) consistently exhibit the highest F1 scores in both heatmaps.

* Llama-Abliterated consistently performs the worst across all layers and both types of F1 scores.

* Llama-Base and Mistral-Base show a clear trend of increasing F1 scores with layer number.

* The "MHA Output F1 Scores" heatmap generally shows higher F1 scores across all models compared to the "Layer Output F1 Scores" heatmap.

* The differences in F1 scores between models are more pronounced in the "Layer Output F1 Scores" heatmap, particularly in the initial layers.

### Interpretation

The data suggests that GPT-J models score highest on this evaluation, whether it is performed on layer outputs or MHA outputs. Their consistently high scores indicate that the measured information is readily recoverable at every layer of these models. Llama-Abliterated's consistently lower scores suggest that abliteration (the modification that produced this variant) reduces how recoverable that information is from the model's activations.
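The "Abliterated" label usually refers to directional ablation. In this technique, a "refusal direction" is estimated in the residual stream and then projected out. The projection is applied either to activations at inference time or by orthogonalizing the weights that write to the residual stream. The figure does not say how the Llama-Abliterated checkpoint was made, so the sketch below only illustrates the core projection x - (r̂·x) r̂ on a single vector. `ablate_direction` and the example vectors are hypothetical.

```python
from math import sqrt
from typing import List

Vec = List[float]


def normalize(v: Vec) -> Vec:
    """Scale v to unit length."""
    norm = sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def dot(u: Vec, v: Vec) -> float:
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def ablate_direction(x: Vec, r: Vec) -> Vec:
    """Remove the component of x along r: x - (r_hat . x) * r_hat."""
    r_hat = normalize(r)
    coeff = dot(r_hat, x)
    return [a - coeff * b for a, b in zip(x, r_hat)]


x = [3.0, 4.0, 1.0]
r = [0.0, 2.0, 0.0]  # hypothetical direction to remove
x_ablated = ablate_direction(x, r)
print(x_ablated)          # [3.0, 0.0, 1.0]
print(dot(x_ablated, r))  # 0.0
```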

The higher F1 scores observed in the "MHA Output F1 Scores" heatmap compared to the "Layer Output F1 Scores" heatmap may indicate that the multi-head attention sublayers carry much of the signal being measured in these Transformer models. The increasing F1 scores with layer number for Llama-Base and Mistral-Base suggest that the measured information becomes more recoverable in the later layers of these models. On its own, however, this within-model trend does not show that deeper architectures perform better.
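A trend such as "F1 increases with layer number" can be quantified for each row with an ordinary least-squares slope of F1 against layer index. This slope measures a within-model trend only, so it cannot by itself say anything about deeper versus shallower architectures. The sketch below is minimal, and both `ols_slope` and the sample row are hypothetical.

```python
from typing import Sequence


def ols_slope(scores: Sequence[float]) -> float:
    """Least-squares slope of F1 against 0-based layer index (needs >= 2 points)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two layers to fit a slope")
    x_mean = (n - 1) / 2
    y_mean = sum(scores) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(scores))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


# Hypothetical row rising by 0.05 per layer.
print(round(ols_slope([0.70, 0.75, 0.80, 0.85, 0.90]), 4))  # 0.05
```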

The differences in performance between the "Base" and "Instruct" versions of each model are relatively small, suggesting that instruction tuning does not significantly impact F1 scores in this particular evaluation. However, further analysis may be needed to determine the impact of instruction tuning on other metrics, such as perplexity or human evaluation.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Heatmap Chart: F1 Score Analysis of Transformer Models

### Overview

The image displays two side-by-side heatmaps comparing F1 scores (the harmonic mean of precision and recall) across the layers of several transformer-based language models. The left heatmap analyzes "Layer Output F1 Scores," while the right analyzes "MHA (Multi-Head Attention) Output F1 Scores." The charts use a color gradient from red (low score, ~0.5) to dark green (high score, 1.0) to visualize performance.
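For reference, F1 can be computed either from precision and recall or directly from confusion-matrix counts, because 2PR / (P + R) simplifies to 2TP / (2TP + FP + FN). The sketch below returns 0.0 when the denominator is zero. That convention is an assumption, since libraries handle this degenerate case differently.

```python
def f1_from_counts(tp: int, fp: int, fn: int) -> float:
    """F1 from true positives, false positives, and false negatives."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


# 9 TP, 1 FP, 3 FN -> precision 0.9, recall 0.75
print(round(f1_from_counts(9, 1, 3), 4))              # 0.8182
print(round(f1_from_precision_recall(0.9, 0.75), 4))  # 0.8182
```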

### Components/Axes

* **Chart Titles:**
  * Left Chart: "Layer Output F1 Scores"
  * Right Chart: "MHA Output F1 Scores"

* **Y-Axis (Vertical):** Labeled "Model." It lists seven distinct models from top to bottom:
  1. GPT-J-Base
  2. GPT-JT-Instruct
  3. Llama-Base
  4. Llama-Instruct
  5. Llama-Abliterated
  6. Mistral-Base
  7. Mistral-Instruct

* **X-Axis (Horizontal):** Labeled "Layer Number." It lists layers from 0 to 31 (inclusive), which accommodates models with up to 32 layers.

* **Color Scale/Legend:** Located to the right of each heatmap. It is a vertical bar showing the F1 Score mapping:
  * Dark Green: 1.0
  * Green: ~0.9
  * Light Green: ~0.8
  * Yellow: ~0.7
  * Orange: ~0.6
  * Red: 0.5
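The approximate values quoted in these descriptions come from matching a cell's color to the nearest legend entry. They are therefore presumably accurate only to about half a legend step, or roughly ±0.05. The sketch below encodes the legend listed above and performs that nearest-entry lookup. `LEGEND` and `nearest_legend_label` are hypothetical names.

```python
from typing import List, Tuple

# Legend anchors as listed above: (value, color name).
LEGEND: List[Tuple[float, str]] = [
    (1.0, "dark green"),
    (0.9, "green"),
    (0.8, "light green"),
    (0.7, "yellow"),
    (0.6, "orange"),
    (0.5, "red"),
]


def nearest_legend_label(score: float) -> str:
    """Return the color name whose legend value is closest to score."""
    return min(LEGEND, key=lambda entry: abs(entry[0] - score))[1]


for s in (0.52, 0.74, 0.93):
    print(s, nearest_legend_label(s))
# 0.52 red
# 0.74 yellow
# 0.93 green
```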

### Detailed Analysis

**Left Heatmap: Layer Output F1 Scores**

* **GPT-J-Base:** Shows very low scores (red/orange, ~0.5-0.6) in layers 0-2. Performance improves to light green (~0.8) in middle layers and reaches dark green (~0.95-1.0) in the final layers (28-31).

* **GPT-JT-Instruct:** Similar pattern to GPT-J-Base but with a slightly better start. Early layers (0-1) are orange/red, quickly improving to yellow/light green by layer 4, and maintaining high green scores through the later layers.

* **Llama-Base:** Starts with moderate scores (yellow/light green, ~0.7-0.8) in early layers. Shows a strong, consistent performance (dark green, ~0.9-1.0) from approximately layer 6 onward.

* **Llama-Instruct:** Exhibits the strongest overall performance. Begins with good scores (green, ~0.85) and achieves near-perfect dark green scores across the vast majority of layers, especially from layer 5 onwards.

* **Llama-Abliterated:** Performance is very similar to Llama-Instruct, with high dark green scores across most layers, indicating robust performance.

* **Mistral-Base:** Starts with lower scores (yellow, ~0.7) in the first few layers. Performance steadily increases, reaching dark green in the final third of the layers (approx. layers 20-31).

* **Mistral-Instruct:** Begins with moderate scores (light green, ~0.8) and shows a clear, steady improvement trend, culminating in dark green scores in the final layers.

**Right Heatmap: MHA Output F1 Scores**

* **GPT-J-Base:** Shows a dramatic improvement. Layers 0-1 are red/orange (~0.5-0.6), but from layer 2 onward, the scores are almost uniformly dark green (~0.95-1.0).

* **GPT-JT-Instruct:** Has a notable outlier. Layer 0 is red (~0.5), but from layer 1 onward, it is almost entirely dark green.

* **Llama-Base, Llama-Instruct, Llama-Abliterated:** All three Llama variants show consistently high performance (dark green) across nearly all layers for MHA outputs, with minimal variation.

* **Mistral-Base & Mistral-Instruct:** Both show a clear gradient. They start with lighter green/yellow scores in the earliest layers (0-3) and transition to solid dark green for the remainder of the layers.
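Statements such as "from layer 2 onward, the scores are almost uniformly dark green" involve two different readings. One is the first layer that crosses a threshold. The other is the layer after which the scores stay above it. The minimal sketch below implements both readings, assuming a row is a list of scores with `None` for empty cells. The 0.95 threshold, the function names, and the sample row are hypothetical.

```python
from typing import List, Optional

Row = List[Optional[float]]


def first_layer_reaching(row: Row, threshold: float = 0.95) -> Optional[int]:
    """First layer whose score is >= threshold, or None."""
    for layer, score in enumerate(row):
        if score is not None and score >= threshold:
            return layer
    return None


def first_sustained_layer(row: Row, threshold: float = 0.95) -> Optional[int]:
    """First layer from which every later populated score stays >= threshold."""
    start: Optional[int] = None
    for layer, score in enumerate(row):
        if score is None:
            continue  # empty cells neither start nor break a run
        if score >= threshold:
            if start is None:
                start = layer
        else:
            start = None  # a dip below the threshold resets the run
    return start


row = [0.55, 0.96, 0.60, 0.97, 0.98, None]
print(first_layer_reaching(row), first_sustained_layer(row))  # 1 3
```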

### Key Observations

1. **Layer-wise Progression:** For all models, F1 scores generally improve from earlier to later layers. The final layers (28-31) consistently show the highest performance.

2. **Model Comparison:** The "Instruct" variants (Llama-Instruct, Mistral-Instruct) and the "Abliterated" model generally outperform their "Base" counterparts, especially in the earlier and middle layers.

3. **MHA vs. Layer Output:** Apart from the earliest layers of the GPT-J family, the MHA Output scores (right chart) are generally higher than the Layer Output scores and reach peak performance much earlier (often by layers 2 to 5). This suggests that the attention sublayers' outputs carry the measured signal very early in the network.

4. **Early Layer Vulnerability:** Layers 0 to 3 show the largest performance deficits and the most variability, particularly for the GPT-J family of models.

5. **Outlier:** The single red cell for GPT-JT-Instruct at Layer 0 in the MHA Output chart is a stark outlier against its otherwise perfect green row.
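An isolated cell such as the GPT-JT-Instruct layer-0 MHA score can be flagged automatically. One way is to compare each score with its row's median, scaled by the median absolute deviation (MAD). This standard robust-statistics heuristic is used here for illustration only and is not the analysis behind the figure. The cutoff `k=3.0`, the function name, and the sample row are assumptions.

```python
from statistics import median
from typing import List, Optional

Row = List[Optional[float]]


def flag_low_outliers(row: Row, k: float = 3.0) -> List[int]:
    """Layer indices whose score lies more than k MADs below the row median."""
    values = [v for v in row if v is not None]
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # Most scores are identical; treat anything below the median as an outlier.
        return [i for i, v in enumerate(row) if v is not None and v < med]
    return [i for i, v in enumerate(row) if v is not None and (med - v) / mad > k]


# Hypothetical row: a red layer 0 followed by uniformly green layers.
row = [0.50, 0.97, 0.98, 0.96, 0.99, 0.97]
print(flag_low_outliers(row))  # [0]
```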

### Interpretation

This analysis provides a granular view of how different transformer models develop their internal representations across depth. The data suggests:

* **Functional Specialization:** The stark difference between early and late layer performance indicates a functional hierarchy. Early layers likely process basic syntactic or token-level information, while later layers integrate this into semantically rich representations suitable for the final task (as measured by F1).

* **Impact of Training:** The higher scores of the Instruct and Abliterated models may indicate that instruction tuning or weight modifications such as abliteration make the measured information more readily recoverable throughout the network depth, not just at the output.

* **Attention is Key:** The consistently high MHA Output scores suggest an important role for the multi-head attention sublayers. They appear to produce task-relevant signals very early in the processing stream, which the rest of the network then refines.

* **Architectural Insight:** The uniformly high scores in later layers across all models suggest a convergence point. At that point, the models' internal states encode the evaluated property well, regardless of differences in their training. The early layers, by contrast, reveal the "fingerprint" of each model's specific architecture and training regimen.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Heatmap: F1 Score Analysis of Transformer Models

### Overview

The image presents two side-by-side heatmaps comparing F1 scores of transformer models across different layers. The left heatmap shows "Layer Output F1 Scores," while the right heatmap displays "MHA Output F1 Scores." Models are listed on the y-axis, and layer numbers (0–31) are on the x-axis. Color intensity represents F1 scores, with red (0.5) indicating low performance and green (1.0) indicating high performance.

---

### Components/Axes

- **Y-Axis (Models)**:
  - GPT-J-Base
  - GPT-JT-Instruct
  - Llama-Base
  - Llama-Instruct
  - Llama-Abliterated
  - Mistral-Base
  - Mistral-Instruct

- **X-Axis (Layer Numbers)**:
  - Layer 0 to Layer 31 (32 layers total).

- **Color Legend**:
  - Red (0.5): Low F1 score
  - Green (1.0): High F1 score
  - Gradient from red to green indicates intermediate scores.

- **Legend Position**:
  - Right side of each heatmap, vertically aligned with the y-axis.

---

### Detailed Analysis

#### Layer Output F1 Scores (Left Heatmap)

- **GPT-J-Base**:
  - Light green to yellow gradient across layers 0–31, indicating moderate F1 scores (~0.7–0.85).

- **GPT-JT-Instruct**:
  - Similar gradient to GPT-J-Base but slightly darker in later layers (~0.75–0.9).

- **Llama-Base**:
  - Dark green in layers 10–31 (~0.85–0.95), lighter in early layers (~0.7–0.8).

- **Llama-Instruct**:
  - Consistently dark green across all layers (~0.9–1.0).

- **Llama-Abliterated**:
  - Dark green in layers 10–31 (~0.85–0.95), lighter in early layers (~0.7–0.8).

- **Mistral-Base**:
  - Light green to yellow gradient (~0.7–0.85).

- **Mistral-Instruct**:
  - Consistently dark green (~0.9–1.0).

#### MHA Output F1 Scores (Right Heatmap)

- **GPT-J-Base**:
  - Light green to yellow gradient (~0.7–0.85).

- **GPT-JT-Instruct**:
  - Dark green in most layers (~0.85–0.95), but **red in Layer 0** (~0.5), a notable outlier.

- **Llama-Base**:
  - Dark green in layers 10–31 (~0.85–0.95), lighter in early layers (~0.7–0.8).

- **Llama-Instruct**:
  - Consistently dark green (~0.9–1.0).

- **Llama-Abliterated**:
  - Dark green in layers 10–31 (~0.85–0.95), lighter in early layers (~0.7–0.8).

- **Mistral-Base**:
  - Light green to yellow gradient (~0.7–0.85).

- **Mistral-Instruct**:
  - Consistently dark green (~0.9–1.0).

---

### Key Observations

1. **Mistral-Instruct** and **Llama-Instruct** consistently achieve the highest F1 scores (~0.9–1.0) across all layers in both heatmaps.

2. **GPT-JT-Instruct** shows a significant outlier in **MHA Output Layer 0** (red, ~0.5), contrasting with its otherwise strong performance.

3. **Llama-Abliterated** and **Llama-Base** exhibit similar trends, with improved performance in later layers.

4. **GPT-J-Base** and **Mistral-Base** have lower scores in early layers but improve gradually.

---

### Interpretation

- **Instruction-Tuning Impact**:
  - Instruct-tuned variants (e.g., Llama-Instruct, Mistral-Instruct) outperform their base models, suggesting that instruction fine-tuning improves layer-wise F1.

- **MHA Layer Dynamics**:
  - The red cell in GPT-JT-Instruct's layer-0 MHA output may point to an architectural weakness or training instability in the earliest attention sublayer.

- **Layer Depth Trends**:
  - Most models show higher F1 scores in deeper layers, which is consistent with later layers capturing more complex patterns.

- **Anomaly Investigation**:
  - The outlier in GPT-JT-Instruct's layer-0 MHA output warrants further analysis to determine whether it reflects a bug, a data artifact, or an intentional design choice.

This analysis highlights the importance of model architecture and training strategies in determining layer-wise performance. Most instruct-tuned models show greater consistency, with GPT-JT-Instruct's layer-0 MHA cell as the exception.
