## Diagram: MoBA Gating and Varlen Flash-Attention Architecture

### Overview

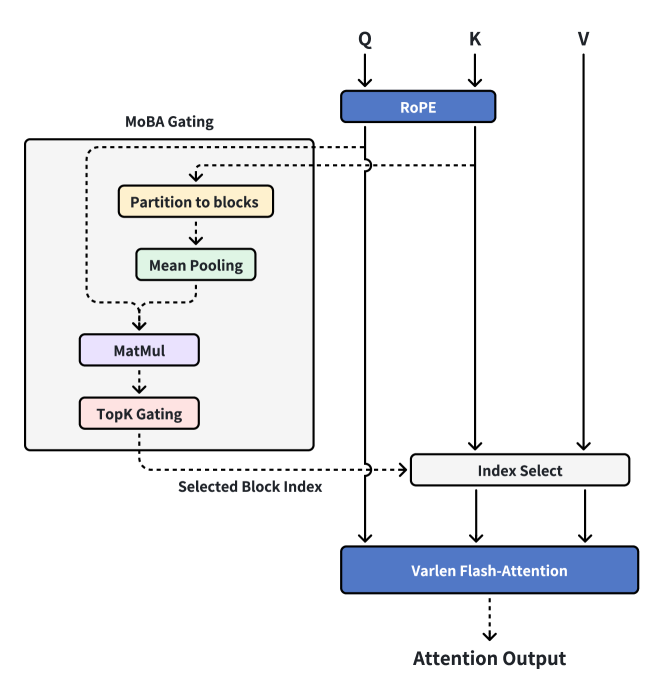

The image is a technical architectural diagram illustrating a neural network attention mechanism. It depicts a system that combines a "MoBA Gating" module with a "Varlen Flash-Attention" computation block. The diagram shows the flow of Query (Q), Key (K), and Value (V) tensors through several processing stages, with a gating mechanism deciding which blocks of keys and values enter the final attention operation.

### Components

The diagram is composed of labeled processing blocks connected by directional arrows (solid and dashed) indicating data and control flow.

**Primary Input/Output Labels:**

* **Q**: Query input (top center)

* **K**: Key input (top center-right)

* **V**: Value input (top right)

* **Attention Output**: Final output (bottom center)

**Processing Blocks (with approximate colors and positions):**

1. **RoPE** (Blue box, top center): Positioned below the Q and K inputs.

2. **MoBA Gating** (Large light gray box, left side): Contains a four-stage sub-process:

   * **Partition to blocks** (Yellow box, inside MoBA Gating, top)

   * **Mean Pooling** (Green box, inside MoBA Gating, middle)

   * **MatMul** (Purple box, inside MoBA Gating, below Mean Pooling)

   * **TopK Gating** (Pink box, inside MoBA Gating, bottom)

3. **Index Select** (Light gray box, center-right)

4. **Varlen Flash-Attention** (Blue box, bottom center)

**Flow and Connection Labels:**

* **Selected Block Index**: The dashed arrow leaving the **TopK Gating** block.

* **Solid arrows**: Indicate the primary flow of tensor data (Q, K, V).

* **Dashed arrows**: Indicate control signals or index selection paths.

### Detailed Analysis

**Component Isolation & Flow:**

1. **Header Region (Inputs & Initial Processing):**

   * Three input tensors, labeled **Q**, **K**, and **V**, enter from the top.

   * The **Q** and **K** tensors are fed into a blue **RoPE** (Rotary Position Embedding) block. The **V** tensor bypasses this block.

   * The output of RoPE continues downward along two separate paths (one for Q, one for K).

2. **Main Chart Region (Gating & Selection):**

   * **Left Side - MoBA Gating Module:** A dashed line originates from the path after RoPE (most likely carrying K or a representation derived from it) and enters the **MoBA Gating** block. Inside the block:

     * The data first goes to **Partition to blocks**, which splits the sequence into blocks.

     * Each block is summarized by **Mean Pooling**.

     * The pooled block representations are then processed by a **MatMul** (matrix multiplication), presumably against the queries, to score how relevant each block is to each query.

     * Finally, **TopK Gating** keeps the highest-scoring blocks and emits their indices as the **Selected Block Index** (dashed arrow).

   * **Right Side - Index Selection:** The **Selected Block Index** (dashed arrow) points to the **Index Select** block.

     * The **Index Select** block receives the post-RoPE **K** tensor and the original **V** tensor via solid arrows.

     * It uses these indices to gather the selected blocks from K and V.

3. **Footer Region (Attention Computation):**

   * The post-RoPE **Q** tensor and the selected K and V blocks from **Index Select** all feed into the **Varlen Flash-Attention** block via solid arrows.

   * This block computes attention over the selected blocks only, producing the final **Attention Output**.
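
The flow above can be sketched in NumPy. This is a minimal single-head illustration under stated assumptions, not the MoBA implementation: the diagram does not label the MatMul's operands, so the sketch assumes it scores each query against the mean-pooled key of every block; a plain per-query loop stands in for the Varlen Flash-Attention kernel; batching and causal masking are omitted. `moba_attention_sketch`, `block_size`, and `top_k` are hypothetical names.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = x - x.max(axis=-1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=-1, keepdims=True)


def moba_attention_sketch(q: np.ndarray, k: np.ndarray, v: np.ndarray,
                          block_size: int, top_k: int) -> np.ndarray:
    """Each query attends only to the top_k key/value blocks chosen by a gate.

    Hypothetical helper. q, k: (seq_len, head_dim), assumed already rotated by
    RoPE; v: (seq_len, head_dim). Single head, no batch, no causal mask.
    """
    seq_len, head_dim = q.shape
    # Partition to blocks: contiguous index ranges (the last may be shorter).
    blocks = [np.arange(s, min(s + block_size, seq_len))
              for s in range(0, seq_len, block_size)]
    # Mean Pooling: one summary key per block -> (num_blocks, head_dim).
    pooled_k = np.stack([k[idx].mean(axis=0) for idx in blocks])
    # MatMul (assumed operands): query-to-block affinity -> (seq_len, num_blocks).
    gate_scores = q @ pooled_k.T
    # TopK Gating: the top_k highest-scoring block indices for each query.
    top_k = min(top_k, len(blocks))
    selected = np.argsort(-gate_scores, axis=-1)[:, :top_k]

    out = np.empty_like(v)
    scale = 1.0 / np.sqrt(head_dim)
    for i in range(seq_len):
        # Index Select: gather the K/V rows belonging to the selected blocks.
        rows = np.concatenate([blocks[b] for b in selected[i]])
        # Dense attention over the gathered rows only (a stand-in for the
        # Varlen Flash-Attention kernel).
        weights = softmax((k[rows] @ q[i]) * scale)
        out[i] = weights @ v[rows]
    return out


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((10, 4)) for _ in range(3))
out = moba_attention_sketch(q, k, v, block_size=4, top_k=2)  # 3 blocks: sizes 4, 4, 2
print(out.shape)  # (10, 4)
```

Setting `top_k` to the number of blocks makes every query see every key, which recovers ordinary dense attention and is a convenient sanity check for any block-sparse variant.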

**Spatial Grounding:**

* The **MoBA Gating** module occupies the entire left third of the diagram.

* The **RoPE** block is centered horizontally near the top.

* The **Index Select** block is positioned to the right of the center, vertically between the MoBA Gating and Varlen Flash-Attention blocks.

* The **Varlen Flash-Attention** block is centered at the bottom.

* The **Selected Block Index** dashed line travels from the bottom-left (MoBA Gating) to the center-right (Index Select).

### Key Observations

* **Hybrid Control Flow:** The diagram uses solid lines for data flow and dashed lines for control/index flow, clearly separating the main tensor pipeline from the gating mechanism's selection logic.

* **Sparse Attention Pattern:** The architecture implements a form of block-sparse attention. Rather than letting every query attend to every key-value pair, the **MoBA** (Mixture of Block Attention) **Gating** module uses **Partition to blocks**, **Mean Pooling**, **MatMul**, and **TopK Gating** to choose a subset of blocks (**Selected Block Index**), and **Index Select** passes only those blocks on to the attention kernel.

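* **Efficiency Focus:** Pairing **Varlen Flash-Attention** (a FlashAttention kernel variant that processes packed sequences of differing lengths without padding) with block-wise selection suggests the architecture targets computational and memory efficiency on long sequences. A variable-length kernel is a plausible fit because, after selection, the query and key groupings handed to the kernel need not share a common length.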

* **Positional Encoding:** The **RoPE** block is applied only to Q and K, not V, which is standard practice for rotary positional embeddings.
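
To make "variable-length" concrete, here is a slow NumPy reference for the semantics of a varlen attention call; it is not the FlashAttention kernel. Sequences of different lengths are packed end to end with no padding, and cumulative offsets mark the segment boundaries. The flash-attn library's `flash_attn_varlen_func` takes offsets of this kind as `cu_seqlens_q` and `cu_seqlens_k`; the helpers `cu_seqlens` and `varlen_attention_reference` below are hypothetical names, and the segment lengths are arbitrary.

```python
import numpy as np


def cu_seqlens(lengths):
    """Cumulative offsets: packed segment j occupies rows offsets[j]:offsets[j + 1]."""
    return np.concatenate([[0], np.cumsum(lengths)]).astype(np.int64)


def varlen_attention_reference(q, k, v, cu_q, cu_k):
    """Reference (slow) attention over packed, unpadded segments.

    q: (total_q, d); k, v: (total_k, d). Queries in segment j attend only to
    keys/values in segment j; no padding rows are ever created.
    """
    out = np.empty((q.shape[0], v.shape[1]))
    scale = 1.0 / np.sqrt(q.shape[1])
    for j in range(len(cu_q) - 1):
        qs = slice(cu_q[j], cu_q[j + 1])
        ks = slice(cu_k[j], cu_k[j + 1])
        scores = (q[qs] @ k[ks].T) * scale
        scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
        weights = np.exp(scores)
        weights /= weights.sum(axis=-1, keepdims=True)
        out[qs] = weights @ v[ks]
    return out


# Two segments packed back to back: 3 + 2 queries against 8 + 6 keys.
rng = np.random.default_rng(1)
q = rng.standard_normal((5, 4))
k, v = rng.standard_normal((14, 4)), rng.standard_normal((14, 4))
cu_q, cu_k = cu_seqlens([3, 2]), cu_seqlens([8, 6])
print(cu_q.tolist(), cu_k.tolist())  # [0, 3, 5] [0, 8, 14]
out = varlen_attention_reference(q, k, v, cu_q, cu_k)
```

Because no padding is materialized, the work for each segment is proportional to its own query count times its own key count.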

### Interpretation

This diagram details an efficient, gated attention mechanism designed to reduce the quadratic cost of standard self-attention. The **MoBA Gating** module acts as a "router" or "selector": it appears to score each block against the query using a pooled (mean) summary of the block's keys and keeps only the highest-scoring blocks (**TopK Gating**), so a query does not attend to every position.

The process can be interpreted as follows. For each attention computation, the system first determines *which parts of the memory (Key/Value blocks) are worth attending to*, based on a lightweight, pooled representation of each block. Only the selected blocks then enter the expensive **Varlen Flash-Attention** operation, so each query's attention cost scales with the number of selected blocks times the block size rather than with the full sequence length, which is what makes very long contexts tractable. The architecture embodies a "compute-on-demand" principle: full attention computation is reserved for the blocks the gating network ranks as most promising.
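
The cost argument can be made concrete with a back-of-the-envelope count of query-key dot products. This is a rough model, not a measured benchmark: it assumes the gate costs one dot product per (query, block) pair and that each query then attends to `top_k` full blocks. The function name and the parameter values below are hypothetical and are not read off the diagram.

```python
def dot_product_counts(seq_len: int, block_size: int, top_k: int) -> tuple[int, int]:
    """Query-key dot products: (full attention, gating + block-sparse attention).

    Rough model only: ignores causal masking, head dimension, softmax, and the
    value aggregation. The sparse term is exact when seq_len is a multiple of
    block_size and an upper bound otherwise (the last block is shorter).
    """
    num_blocks = -(-seq_len // block_size)                  # ceiling division
    full = seq_len * seq_len                                # every query vs. every key
    gating = seq_len * num_blocks                           # every query vs. every pooled block key
    sparse = seq_len * min(top_k, num_blocks) * block_size  # keys inside the selected blocks
    return full, gating + sparse


full, moba = dot_product_counts(seq_len=8192, block_size=512, top_k=3)
print(full, moba, moba / full)  # 67108864 12713984 0.189453125
```

At these hypothetical settings the count drops to about 19% of full attention. Per-query attention work stays fixed at `top_k × block_size` as the sequence grows, while the gating term still grows quadratically but is divided by `block_size`, so the relative saving increases with context length.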