
## Diagram: MoBA Gating Architecture

### Overview

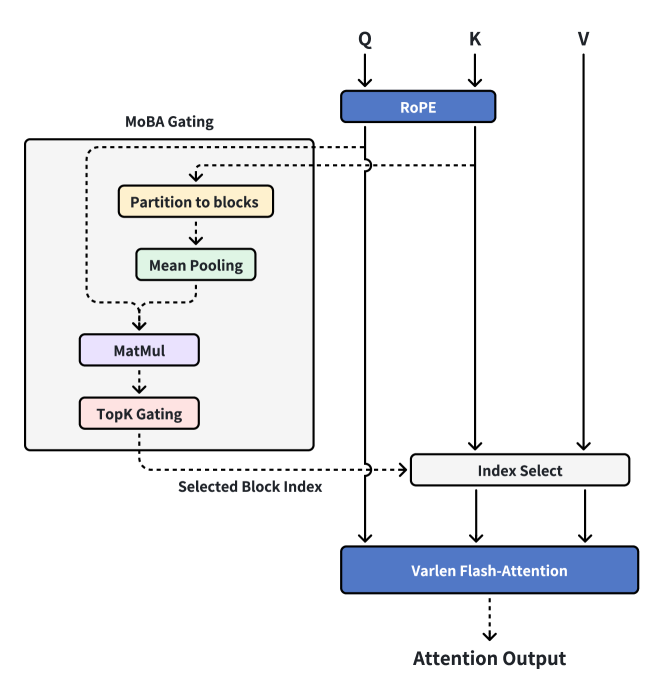

The figure shows the architecture of the MoBA (Mixture of Block Attention) gating mechanism within an attention model. Data flows from the inputs Q (Query), K (Key), and V (Value) through several processing blocks and ends in an "Attention Output". The MoBA Gating block is the central component: it selects which blocks of the input take part in the attention computation.

### Components/Axes

The diagram consists of the following components:

* **Inputs:** Q (Query), K (Key), V (Value) - positioned at the top of the diagram.

* **RoPE:** A block labeled "RoPE" connected to Q and K.

* **MoBA Gating:** A dashed-border block containing the following sub-components:

    * "Partition to blocks"

    * "Mean Pooling"

    * "MatMul"

    * "TopK Gating"

* **Index Select:** A block labeled "Index Select".

* **VarLen Flash-Attention:** A block labeled "VarLen Flash-Attention".

* **Attention Output:** The final output of the system.

* **Selected Block Index:** A label indicating an output from the MoBA Gating block.

* **Arrows:** Indicate the flow of data between components.

There are no axes or scales present in this diagram. It is a flow diagram, not a chart.

### Detailed Analysis or Content Details

The data flow can be described as follows:

1. **Inputs:** Q, K, and V are the initial inputs to the system.

2. **RoPE:** Q and K are fed into a "RoPE" block. The output of RoPE is then passed to the "Index Select" block.

3. **MoBA Gating:** The MoBA Gating block receives Q and K (via RoPE). Inside the MoBA Gating block:

    * The input is first partitioned into blocks ("Partition to blocks").

    * Each block is reduced to a single vector by "Mean Pooling".

    * The pooled result is passed through a "MatMul" (matrix multiplication) operation.

    * Finally, "TopK Gating" is applied.

    * The result leaves the MoBA Gating block as the "Selected Block Index".

4. **Index Select:** The output of RoPE and the "Selected Block Index" from the MoBA Gating block are fed into the "Index Select" block.

5. **VarLen Flash-Attention:** The output of "Index Select" and V are fed into the "VarLen Flash-Attention" block.

6. **Attention Output:** The "VarLen Flash-Attention" block produces the final "Attention Output".
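The gating and selection steps above can be sketched for a single query in plain Python. This is a minimal illustration under stated assumptions, not the MoBA implementation: every function name is hypothetical, Q and K are assumed to have already passed through RoPE, the keys are split into fixed-size blocks, each block's gate score is the dot product of the query with its mean-pooled keys, and "Index Select" is modeled as gathering the keys and values of the selected blocks. Ordinary softmax attention stands in for VarLen FlashAttention, and causal masking and batching across queries are omitted.

```python
import math


def partition_to_blocks(rows, block_size):
    """'Partition to blocks': split a sequence of vectors into consecutive blocks.

    The last block may be shorter if len(rows) is not a multiple of block_size.
    """
    return [rows[i:i + block_size] for i in range(0, len(rows), block_size)]


def mean_pool(block):
    """'Mean Pooling': average the vectors of one block into a single vector."""
    dim = len(block[0])
    return [sum(vec[d] for vec in block) / len(block) for d in range(dim)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def topk_gating(query, keys, block_size, top_k):
    """Score each key block against the query and return the top_k block indices."""
    pooled = [mean_pool(b) for b in partition_to_blocks(keys, block_size)]
    scores = [dot(query, p) for p in pooled]  # 'MatMul': one row of q @ pooled^T
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:top_k])  # 'Selected Block Index', in sequence order


def index_select(rows, selected, block_size):
    """'Index Select' (assumed here): gather the rows of the selected blocks."""
    out = []
    for b in selected:
        out.extend(rows[b * block_size:(b + 1) * block_size])
    return out


def softmax_attention(query, keys, values):
    """Stand-in for VarLen FlashAttention: exact softmax attention for one query."""
    scale = 1.0 / math.sqrt(len(query))
    logits = [dot(query, k) * scale for k in keys]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(x - peak) for x in logits]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total for d in range(dim)]


def moba_single_query(query, keys, values, block_size, top_k):
    selected = topk_gating(query, keys, block_size, top_k)
    k_sel = index_select(keys, selected, block_size)
    v_sel = index_select(values, selected, block_size)
    return selected, softmax_attention(query, k_sel, v_sel)


# Toy example: 8 keys of dimension 2, blocks of 2 keys, keep the best 2 blocks.
query = [1.0, 0.0]
keys = [[0.0, 1.0], [0.0, 1.0],    # block 0: pooled [0, 1]   -> score 0
        [1.0, 0.0], [3.0, 0.0],    # block 1: pooled [2, 0]   -> score 2
        [-1.0, 0.0], [-1.0, 0.0],  # block 2: pooled [-1, 0]  -> score -1
        [1.0, 1.0], [0.0, 1.0]]    # block 3: pooled [0.5, 1] -> score 0.5
values = [[float(i), -float(i)] for i in range(8)]

selected, output = moba_single_query(query, keys, values, block_size=2, top_k=2)
print(selected)  # [1, 3]
```

With `top_k` equal to the number of blocks, the result reduces to dense attention over all keys; smaller `top_k` values shrink the set of keys each query touches.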

### Key Observations

The diagram shows a modular attention mechanism in which the MoBA Gating block dynamically selects the relevant blocks for processing. "TopK Gating" indicates that only the k highest-scoring blocks are kept. "VarLen Flash-Attention" refers to the variable-length interface of FlashAttention, an IO-aware exact attention kernel; this interface processes sequences of different lengths packed together instead of padding them to a common length. The RoPE block indicates the use of Rotary Position Embeddings.
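To make the RoPE step concrete, here is a minimal sketch of rotary position embeddings applied to a single vector. It assumes the common formulation in which consecutive dimension pairs are rotated by an angle proportional to the token position, with base 10000; some implementations pair dimensions differently (for example, by splitting the vector into halves), so the pairing is an assumption. The `rope` and `dot` helpers are hypothetical names.

```python
import math


def rope(x, position, base=10000.0):
    """Rotary position embedding for one vector (interleaved-pair convention).

    Each pair (x[2i], x[2i+1]) is rotated by the angle position * base**(-2i/d).
    """
    d = len(x)
    if d % 2 != 0:
        raise ValueError("RoPE needs an even vector dimension")
    out = list(x)
    for i in range(d // 2):
        theta = position * base ** (-2.0 * i / d)
        c, s = math.cos(theta), math.sin(theta)
        a, b = x[2 * i], x[2 * i + 1]
        out[2 * i] = a * c - b * s
        out[2 * i + 1] = a * s + b * c
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.7, 0.5]
k = [1.0, 0.4, -0.6, 0.9]
# Query at position 7 vs key at 4, and query at 3 vs key at 0: same offset of 3.
print(dot(rope(q, 7), rope(k, 4)))
print(dot(rope(q, 3), rope(k, 0)))
```

The two printed scores agree up to floating-point rounding: the dot product of a rotated query and key depends only on their relative offset, so scores computed from the rotated Q and K carry relative-position information.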

### Interpretation

This diagram illustrates an attention mechanism that combines block-wise processing with dynamic gating. The MoBA Gating block acts as a selector, choosing which blocks are most relevant to the attention calculation. Restricting attention to the selected blocks could improve efficiency, because each query is compared against a subset of the sequence rather than every position. The "VarLen Flash-Attention" block suggests that the selected blocks are processed as variable-length segments. The overall architecture appears designed to address a limitation of standard attention, whose cost grows quadratically with sequence length and therefore dominates on long inputs. RoPE supplies the positional information that attention alone does not encode. The diagram provides no quantitative data, so the effectiveness of this architecture cannot be assessed from the figure alone, although the design is clearly aimed at efficient attention over long sequences.
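A rough count of query-key dot products makes the efficiency argument concrete. Assume, for illustration only, a context of $N$ keys, blocks of size $B$ (with $B$ dividing $N$), and $k$ selected blocks per query; these symbols do not appear in the diagram. Dense attention scores every key, while the gated path scores one mean-pooled key per block and then attends over the $k$ selected blocks:

```latex
C_{\text{dense}} = N,
\qquad
C_{\text{gated}} = \underbrace{\frac{N}{B}}_{\text{gate scores}}
                 + \underbrace{kB}_{\text{attention over selected blocks}}

% Example: N = 32768, B = 512, k = 3
C_{\text{gated}} = \frac{32768}{512} + 3 \cdot 512 = 64 + 1536 = 1600
```

Under these assumptions the gated path needs 1600 dot products per query instead of 32768, about 4.9% of the dense count. The estimate ignores the cost of mean pooling (computed once per block and shared across queries), causal masking, and kernel-level effects.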