TECHNICAL ASSET FINGERPRINT

1a65c95e749c8d0904528649

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

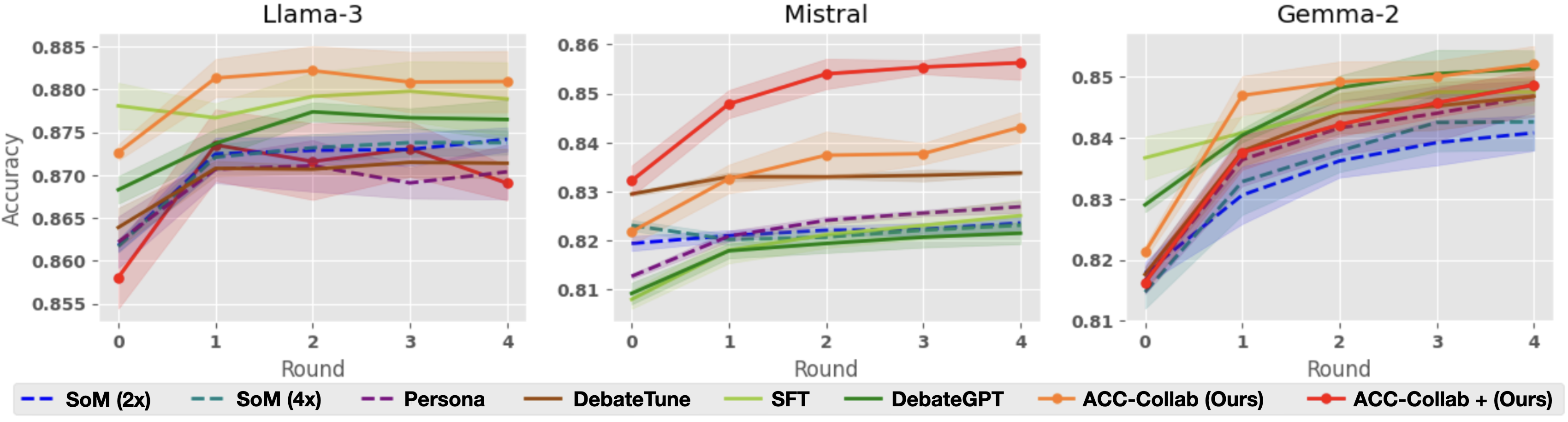

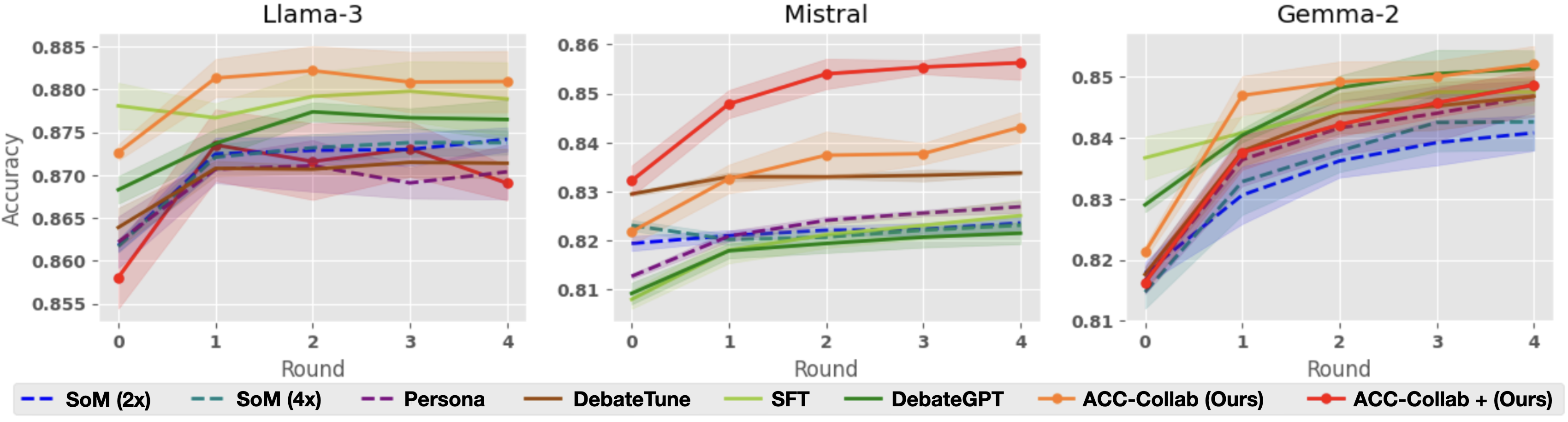

## Line Charts: Model Accuracy vs. Round

### Overview

The image presents three line charts comparing the accuracy of different models (Llama-3, Mistral, and Gemma-2) across several rounds of training or interaction. Each chart displays the performance of various methods, including "SoM (2x)", "SoM (4x)", "Persona", "DebateTune", "SFT", "DebateGPT", "ACC-Collab (Ours)", and "ACC-Collab + (Ours)". The x-axis represents the round number (0 to 4), and the y-axis represents the accuracy, ranging from approximately 0.855 to 0.885 for Llama-3, 0.81 to 0.86 for Mistral, and 0.81 to 0.85 for Gemma-2.

### Components/Axes

* **Titles:**

* Top-left chart: "Llama-3"

* Top-center chart: "Mistral"

* Top-right chart: "Gemma-2"

* **X-axis:**

* Label: "Round"

* Scale: 0, 1, 2, 3, 4

* **Y-axis:**

* Label: "Accuracy"

* Scale (Llama-3): 0.855, 0.860, 0.865, 0.870, 0.875, 0.880, 0.885

* Scale (Mistral): 0.81, 0.82, 0.83, 0.84, 0.85, 0.86

* Scale (Gemma-2): 0.81, 0.82, 0.83, 0.84, 0.85

* **Legend:** Located at the bottom of the image.

* Blue dashed line: "SoM (2x)"

* Teal dashed line: "SoM (4x)"

* Purple dashed line: "Persona"

* Brown solid line: "DebateTune"

* Light green solid line: "SFT"

* Dark green solid line: "DebateGPT"

* Orange solid line: "ACC-Collab (Ours)"

* Red solid line: "ACC-Collab + (Ours)"

### Detailed Analysis

#### Llama-3 Chart

* **SoM (2x)** (Blue dashed line): Starts at approximately 0.860 at round 0, increases to around 0.872 by round 1, and then remains relatively stable around 0.872 through round 4.

* **SoM (4x)** (Teal dashed line): Starts at approximately 0.862 at round 0, increases to around 0.873 by round 1, and then remains relatively stable around 0.873 through round 4.

* **Persona** (Purple dashed line): Starts at approximately 0.860 at round 0, increases to around 0.871 by round 1, and then decreases slightly to around 0.870 by round 4.

* **DebateTune** (Brown solid line): Starts at approximately 0.863 at round 0, increases to around 0.871 by round 1, and then remains relatively stable around 0.871 through round 4.

* **SFT** (Light green solid line): Starts at approximately 0.878 at round 0, increases slightly to around 0.880 by round 1, and then remains relatively stable around 0.878 through round 4.

* **DebateGPT** (Dark green solid line): Starts at approximately 0.868 at round 0, increases to around 0.877 by round 1, and then remains relatively stable around 0.877 through round 4.

* **ACC-Collab (Ours)** (Orange solid line): Starts at approximately 0.872 at round 0, increases to around 0.882 by round 1, and then remains relatively stable around 0.881 through round 4.

* **ACC-Collab + (Ours)** (Red solid line): Starts at approximately 0.858 at round 0, increases to around 0.871 by round 1, and then decreases slightly to around 0.869 by round 4.

#### Mistral Chart

* **SoM (2x)** (Blue dashed line): Starts at approximately 0.820 at round 0, increases slightly to around 0.822 by round 1, and then remains relatively stable around 0.822 through round 4.

* **SoM (4x)** (Teal dashed line): Starts at approximately 0.810 at round 0, increases to around 0.820 by round 1, and then remains relatively stable around 0.820 through round 4.

* **Persona** (Purple dashed line): Starts at approximately 0.815 at round 0, increases to around 0.823 by round 1, and then remains relatively stable around 0.823 through round 4.

* **DebateTune** (Brown solid line): Starts at approximately 0.830 at round 0, increases to around 0.838 by round 1, and then remains relatively stable around 0.838 through round 4.

* **SFT** (Light green solid line): Starts at approximately 0.820 at round 0, increases slightly to around 0.825 by round 1, and then remains relatively stable around 0.825 through round 4.

* **DebateGPT** (Dark green solid line): Starts at approximately 0.818 at round 0, increases slightly to around 0.822 by round 1, and then remains relatively stable around 0.822 through round 4.

* **ACC-Collab (Ours)** (Orange solid line): Starts at approximately 0.822 at round 0, increases to around 0.835 by round 1, and then remains relatively stable around 0.838 through round 4.

* **ACC-Collab + (Ours)** (Red solid line): Starts at approximately 0.835 at round 0, increases to around 0.853 by round 1, and then remains relatively stable around 0.855 through round 4.

#### Gemma-2 Chart

* **SoM (2x)** (Blue dashed line): Starts at approximately 0.820 at round 0, increases to around 0.838 by round 1, and then remains relatively stable around 0.840 through round 4.

* **SoM (4x)** (Teal dashed line): Starts at approximately 0.825 at round 0, increases to around 0.842 by round 1, and then remains relatively stable around 0.843 through round 4.

* **Persona** (Purple dashed line): Starts at approximately 0.818 at round 0, increases to around 0.838 by round 1, and then remains relatively stable around 0.840 through round 4.

* **DebateTune** (Brown solid line): Starts at approximately 0.818 at round 0, increases to around 0.838 by round 1, and then remains relatively stable around 0.840 through round 4.

* **SFT** (Light green solid line): Starts at approximately 0.830 at round 0, increases to around 0.848 by round 1, and then remains relatively stable around 0.850 through round 4.

* **DebateGPT** (Dark green solid line): Starts at approximately 0.828 at round 0, increases to around 0.845 by round 1, and then remains relatively stable around 0.848 through round 4.

* **ACC-Collab (Ours)** (Orange solid line): Starts at approximately 0.822 at round 0, increases to around 0.848 by round 1, and then remains relatively stable around 0.852 through round 4.

* **ACC-Collab + (Ours)** (Red solid line): Starts at approximately 0.815 at round 0, increases to around 0.840 by round 1, and then remains relatively stable around 0.848 through round 4.
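
The per-method readings above can be tabulated to see which method ends highest in each chart and how large the round 0 to round 1 jump is. The following is a minimal sketch, assuming the approximate values listed above are taken at face value. The names `READINGS`, `best_at_round4`, and `mean_first_round_gain` are hypothetical helpers introduced here for illustration, and each tuple holds the (round 0, round 1, round 4) readings.

```python
# Approximate (round 0, round 1, round 4) accuracies copied from the bullet
# lists above. These are visual estimates, not exact experimental results.
READINGS = {
    "Llama-3": {
        "SoM (2x)": (0.860, 0.872, 0.872),
        "SoM (4x)": (0.862, 0.873, 0.873),
        "Persona": (0.860, 0.871, 0.870),
        "DebateTune": (0.863, 0.871, 0.871),
        "SFT": (0.878, 0.880, 0.878),
        "DebateGPT": (0.868, 0.877, 0.877),
        "ACC-Collab (Ours)": (0.872, 0.882, 0.881),
        "ACC-Collab + (Ours)": (0.858, 0.871, 0.869),
    },
    "Mistral": {
        "SoM (2x)": (0.820, 0.822, 0.822),
        "SoM (4x)": (0.810, 0.820, 0.820),
        "Persona": (0.815, 0.823, 0.823),
        "DebateTune": (0.830, 0.838, 0.838),
        "SFT": (0.820, 0.825, 0.825),
        "DebateGPT": (0.818, 0.822, 0.822),
        "ACC-Collab (Ours)": (0.822, 0.835, 0.838),
        "ACC-Collab + (Ours)": (0.835, 0.853, 0.855),
    },
    "Gemma-2": {
        "SoM (2x)": (0.820, 0.838, 0.840),
        "SoM (4x)": (0.825, 0.842, 0.843),
        "Persona": (0.818, 0.838, 0.840),
        "DebateTune": (0.818, 0.838, 0.840),
        "SFT": (0.830, 0.848, 0.850),
        "DebateGPT": (0.828, 0.845, 0.848),
        "ACC-Collab (Ours)": (0.822, 0.848, 0.852),
        "ACC-Collab + (Ours)": (0.815, 0.840, 0.848),
    },
}


def best_at_round4(readings):
    """Return {model: (method, accuracy)} for the highest round-4 reading."""
    best = {}
    for model, methods in readings.items():
        method = max(methods, key=lambda name: methods[name][2])
        best[model] = (method, methods[method][2])
    return best


def mean_first_round_gain(methods):
    """Average accuracy change from round 0 to round 1 over all methods."""
    gains = [r1 - r0 for r0, r1, _ in methods.values()]
    return sum(gains) / len(gains)


if __name__ == "__main__":
    for model, (method, acc) in best_at_round4(READINGS).items():
        gain = mean_first_round_gain(READINGS[model])
        print(f"{model}: best at round 4 = {method} ({acc:.3f}); "
              f"mean round 0->1 gain = {gain:.4f}")
```

Because the inputs are eyeballed from the plot, small differences between methods (on the order of 0.001 to 0.002) should not be over-interpreted.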

### Key Observations

* Across all three models (Llama-3, Mistral, and Gemma-2), most methods show a significant increase in accuracy from round 0 to round 1.

* After round 1, the accuracy of most methods tends to stabilize, with only minor fluctuations.

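* The "ACC-Collab + (Ours)" method achieves the highest accuracy on Mistral (about 0.855 at round 4) but not on the other two models: on Llama-3 it ends near 0.869, the lowest of the eight methods, and on Gemma-2 it ends near 0.848, slightly below "ACC-Collab (Ours)" at about 0.852.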

* The "SoM (2x)", "SoM (4x)", and "Persona" methods tend to have similar performance across all three models.

### Interpretation

The charts suggest that the initial round of training or interaction is crucial for improving the accuracy of these models. The stabilization of accuracy after round 1 indicates that the models may be reaching a point of diminishing returns, where further rounds do not significantly improve performance. The "ACC-Collab + (Ours)" method appears promising on Mistral, where it leads by a clear margin, while "ACC-Collab (Ours)" reaches the highest values on Llama-3 and Gemma-2. The similar performance of "SoM (2x)", "SoM (4x)", and "Persona" suggests that these methods may have similar underlying mechanisms or limitations. Further investigation could focus on understanding why "ACC-Collab + (Ours)" is particularly effective on Mistral and on exploring strategies to overcome the performance plateau observed after round 1.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Accuracy vs. Round for Different Models and Training Methods

### Overview

The image presents three line charts, each displaying the accuracy of a different language model (Llama-3, Mistral, and Gemma-2) across five rounds of evaluation (rounds 0 to 4). Each chart includes multiple lines representing different training methods applied to the respective model. The y-axis represents accuracy, and the x-axis represents the round number. Shaded areas around each line indicate confidence intervals.

### Components/Axes

* **X-axis:** "Round" with values 0, 1, 2, 3, and 4.

* **Y-axis:** "Accuracy" with a scale ranging from approximately 0.855 to 0.885 (Llama-3), 0.81 to 0.86 (Mistral), and 0.81 to 0.855 (Gemma-2).

* **Legends:** Each chart has a legend at the bottom identifying the different training methods/models. The same key applies to Llama-3, Mistral, and Gemma-2: SoM (2x) - dashed dark blue, SoM (4x) - dashed light blue, Persona - solid green, DebateTune - solid dark green, SFT - solid light green, DebateGPT - solid yellow, ACC-Collab (Ours) - solid orange, ACC-Collab + (Ours) - dashed orange.

### Detailed Analysis or Content Details

**Llama-3 Chart:**

* **SoM (2x):** Starts at approximately 0.871, remains relatively stable around 0.870 across all rounds.

* **SoM (4x):** Starts at approximately 0.868, increases slightly to around 0.870 by round 2, then plateaus.

* **Persona:** Starts at approximately 0.866, increases to around 0.871 by round 2, then plateaus.

* **DebateTune:** Starts at approximately 0.864, increases to around 0.870 by round 2, then plateaus.

* **SFT:** Starts at approximately 0.863, increases to around 0.868 by round 2, then plateaus.

* **DebateGPT:** Starts at approximately 0.862, increases to around 0.867 by round 2, then plateaus.

* **ACC-Collab (Ours):** Starts at approximately 0.860, increases steadily to around 0.875 by round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.858, increases steadily to around 0.877 by round 4.

**Mistral Chart:**

* **SoM (2x):** Starts at approximately 0.823, increases to around 0.826 by round 1, then plateaus.

* **SoM (4x):** Starts at approximately 0.821, increases to around 0.825 by round 1, then plateaus.

* **Persona:** Starts at approximately 0.819, increases to around 0.825 by round 1, then plateaus.

* **DebateTune:** Starts at approximately 0.818, increases to around 0.824 by round 1, then plateaus.

* **SFT:** Starts at approximately 0.817, increases to around 0.823 by round 1, then plateaus.

* **DebateGPT:** Starts at approximately 0.816, increases to around 0.822 by round 1, then plateaus.

* **ACC-Collab (Ours):** Starts at approximately 0.820, increases steadily to around 0.850 by round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.819, increases steadily to around 0.855 by round 4.

**Gemma-2 Chart:**

* **SoM (2x):** Starts at approximately 0.832, increases to around 0.835 by round 1, then plateaus.

* **SoM (4x):** Starts at approximately 0.830, increases to around 0.834 by round 1, then plateaus.

* **Persona:** Starts at approximately 0.828, increases to around 0.833 by round 1, then plateaus.

* **DebateTune:** Starts at approximately 0.827, increases to around 0.832 by round 1, then plateaus.

* **SFT:** Starts at approximately 0.826, increases to around 0.831 by round 1, then plateaus.

* **DebateGPT:** Starts at approximately 0.825, increases to around 0.830 by round 1, then plateaus.

* **ACC-Collab (Ours):** Starts at approximately 0.824, increases steadily to around 0.848 by round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.823, increases steadily to around 0.852 by round 4.

### Key Observations

* In all three models, the "ACC-Collab (Ours)" and "ACC-Collab + (Ours)" methods consistently outperform other training methods, showing a clear upward trend in accuracy across rounds.

* The other training methods (SoM, Persona, DebateTune, SFT, DebateGPT) generally plateau in accuracy after round 1 or 2.

* Mistral starts with the lowest initial accuracy among the three models, but shows significant improvement with the "ACC-Collab" methods.

* Llama-3 starts with the highest initial accuracy, and the "ACC-Collab" methods provide incremental gains.

### Interpretation

The data suggests that the "ACC-Collab" training methods are highly effective in improving the accuracy of these language models, particularly over multiple rounds of evaluation. The consistent upward trend indicates that these methods allow the models to learn and refine their performance with continued exposure. The plateauing of other methods suggests they may reach a performance limit relatively quickly. The differences in initial accuracy and improvement rates between the models highlight the varying capabilities and sensitivities of each model to different training approaches. The "ACC-Collab +" method consistently outperforms the "ACC-Collab" method, suggesting that the additional component provides a further boost to performance. This data could be used to inform the selection of training methods for these models, with a strong recommendation for the "ACC-Collab" approaches. The confidence intervals (shaded areas) indicate the uncertainty in the accuracy measurements, but the overall trends remain clear.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Accuracy vs. Round for Three Language Models

### Overview

The image displays three side-by-side line charts comparing the performance of eight different methods across five rounds (0-4) for three distinct language models: Llama-3, Mistral, and Gemma-2. The y-axis represents "Accuracy," and the x-axis represents "Round." Each chart includes shaded regions around the lines, likely indicating confidence intervals or standard deviation. A shared legend at the bottom defines the eight methods.

### Components/Axes

* **Chart Titles (Top Center):** "Llama-3", "Mistral", "Gemma-2"

* **Y-Axis Label (Left Side, Vertical):** "Accuracy"

* **X-Axis Label (Bottom Center):** "Round"

* **X-Axis Markers:** 0, 1, 2, 3, 4

* **Y-Axis Scales:**

* Llama-3: 0.855 to 0.885 (increments of 0.005)

* Mistral: 0.81 to 0.86 (increments of 0.01)

* Gemma-2: 0.81 to 0.85 (increments of 0.01)

* **Legend (Bottom, spanning all charts):**

* **SoM (2x):** Blue dashed line (`--`)

* **SoM (4x):** Teal dashed line (`--`)

* **Persona:** Purple dashed line (`--`)

* **DebateTune:** Brown solid line

* **SFT:** Light green solid line

* **DebateGPT:** Dark green solid line

* **ACC-Collab (Ours):** Orange solid line with circular markers

* **ACC-Collab + (Ours):** Red solid line with circular markers

### Detailed Analysis

#### **Chart 1: Llama-3**

* **Trend Verification:** Most methods show an initial increase from Round 0 to Round 1, followed by a plateau or slight fluctuations. The orange line (ACC-Collab) shows the highest peak.

* **Data Points (Approximate):**

* **ACC-Collab (Ours) [Orange]:** Starts ~0.873 (R0), peaks ~0.882 (R2), ends ~0.881 (R4).

* **ACC-Collab + (Ours) [Red]:** Starts lowest ~0.858 (R0), rises sharply to ~0.873 (R1), fluctuates, ends ~0.869 (R4).

* **DebateGPT [Dark Green]:** Steady increase from ~0.868 (R0) to ~0.877 (R4).

* **SFT [Light Green]:** Starts high ~0.878 (R0), dips slightly, ends ~0.879 (R4).

* **DebateTune [Brown]:** Starts ~0.864 (R0), rises to ~0.871 (R1), plateaus.

* **SoM (2x) [Blue Dashed]:** Starts ~0.862 (R0), rises to ~0.874 (R1), ends ~0.874 (R4).

* **SoM (4x) [Teal Dashed]:** Follows a very similar path to SoM (2x).

* **Persona [Purple Dashed]:** Starts ~0.863 (R0), peaks ~0.872 (R1), declines to ~0.870 (R4).

#### **Chart 2: Mistral**

* **Trend Verification:** The red line (ACC-Collab+) shows a dominant, steep upward trend. The orange line (ACC-Collab) also rises steadily. Other methods show modest gains or remain relatively flat.

* **Data Points (Approximate):**

* **ACC-Collab + (Ours) [Red]:** Clear top performer. Starts ~0.832 (R0), rises steeply to ~0.856 (R4).

* **ACC-Collab (Ours) [Orange]:** Second highest. Starts ~0.822 (R0), rises to ~0.843 (R4).

* **DebateTune [Brown]:** Relatively flat, ~0.830 (R0) to ~0.834 (R4).

* **SoM (2x) [Blue Dashed]:** Starts ~0.820 (R0), ends ~0.824 (R4).

* **SoM (4x) [Teal Dashed]:** Very close to SoM (2x).

* **Persona [Purple Dashed]:** Starts lowest ~0.813 (R0), rises to ~0.827 (R4).

* **SFT [Light Green]:** Starts ~0.810 (R0), ends ~0.825 (R4).

* **DebateGPT [Dark Green]:** Starts ~0.809 (R0), ends ~0.822 (R4).

#### **Chart 3: Gemma-2**

* **Trend Verification:** All methods show a strong, consistent upward trend from Round 0 to Round 4. The lines are more tightly clustered than in the other charts, especially at later rounds.

* **Data Points (Approximate):**

* **ACC-Collab (Ours) [Orange]:** Top performer. Starts ~0.821 (R0), rises to ~0.852 (R4).

* **ACC-Collab + (Ours) [Red]:** Very close second. Starts ~0.816 (R0), rises to ~0.849 (R4).

* **SFT [Light Green]:** Strong performance. Starts ~0.837 (R0), ends ~0.851 (R4).

* **DebateGPT [Dark Green]:** Starts ~0.829 (R0), ends ~0.851 (R4).

* **DebateTune [Brown]:** Starts ~0.817 (R0), ends ~0.847 (R4).

* **Persona [Purple Dashed]:** Starts ~0.816 (R0), ends ~0.844 (R4).

* **SoM (4x) [Teal Dashed]:** Starts ~0.815 (R0), ends ~0.843 (R4).

* **SoM (2x) [Blue Dashed]:** Starts ~0.815 (R0), ends ~0.841 (R4).
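
The clustering and improvement described for the Mistral and Gemma-2 charts can be checked against the approximate data points above. The sketch below is illustrative only: `MISTRAL`, `GEMMA_2`, `round4_spread`, and `gain_range` are hypothetical names, each tuple holds the (round 0, round 4) readings, and SoM (4x) on Mistral is left out because no numeric values are given for it.

```python
# Approximate (round 0, round 4) readings copied from the data points above.
# SoM (4x) on Mistral is omitted: the text only says it is close to SoM (2x).
MISTRAL = {
    "ACC-Collab + (Ours)": (0.832, 0.856),
    "ACC-Collab (Ours)": (0.822, 0.843),
    "DebateTune": (0.830, 0.834),
    "SoM (2x)": (0.820, 0.824),
    "Persona": (0.813, 0.827),
    "SFT": (0.810, 0.825),
    "DebateGPT": (0.809, 0.822),
}

GEMMA_2 = {
    "ACC-Collab (Ours)": (0.821, 0.852),
    "ACC-Collab + (Ours)": (0.816, 0.849),
    "SFT": (0.837, 0.851),
    "DebateGPT": (0.829, 0.851),
    "DebateTune": (0.817, 0.847),
    "Persona": (0.816, 0.844),
    "SoM (4x)": (0.815, 0.843),
    "SoM (2x)": (0.815, 0.841),
}


def round4_spread(readings):
    """Gap between the best and worst round-4 reading (3 decimal places)."""
    finals = [r4 for _, r4 in readings.values()]
    return round(max(finals) - min(finals), 3)


def gain_range(readings):
    """Smallest and largest round-0-to-round-4 gain (3 decimal places)."""
    gains = [round(r4 - r0, 3) for r0, r4 in readings.values()]
    return min(gains), max(gains)


if __name__ == "__main__":
    for name, data in (("Mistral", MISTRAL), ("Gemma-2", GEMMA_2)):
        low, high = gain_range(data)
        print(f"{name}: round-4 spread = {round4_spread(data):.3f}, "
              f"gains from {low:.3f} to {high:.3f}")
```

A smaller round-4 spread corresponds to lines that sit closer together at the right edge of the chart. Since the values are visual estimates, the comparison is indicative rather than exact.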

### Key Observations

1. **Method Performance Hierarchy:** The proposed methods, **ACC-Collab (Ours)** and **ACC-Collab + (Ours)**, are consistently among the top performers across all three models.

2. **Model-Specific Behavior:**

* **Mistral:** Shows the most dramatic separation between methods. **ACC-Collab +** is the clear, dominant leader.

* **Gemma-2:** Shows the largest round-0-to-round-4 gains across methods (roughly 0.014 to 0.033) and the tightest clustering of final results, although its final accuracy values (about 0.841 to 0.852) remain below those of Llama-3.

* **Llama-3:** Shows more variability and less dramatic gains after Round 1 for most methods.

3. **Round 0 Baseline:** The starting accuracy (Round 0) varies significantly by model and method, indicating different baseline capabilities before the debate/round process begins.

4. **Trend Consistency:** With few exceptions (e.g., Persona in Llama-3), accuracy either improves or holds steady as rounds progress; no method shows a significant, sustained decline.

### Interpretation

This data demonstrates the effectiveness of the **ACC-Collab** family of methods for improving the accuracy of language models through a multi-round process, likely involving debate or collaboration. The key findings are:

* **Efficacy of Proposed Method:** The "Ours" methods (orange and red lines) generally outperform or match the other techniques (SoM, Persona, DebateTune, SFT, DebateGPT), suggesting the authors' approach is successful.

* **Importance of Model Architecture:** The same methods yield different relative performance gains on different base models (Llama-3, Mistral, Gemma-2). This implies that the effectiveness of collaborative or debate-based training or inference is not universal but interacts with the underlying model's characteristics. Gemma-2 appears most receptive to these techniques.

* **Value of Iteration:** The general upward trend across rounds validates the core premise that iterative refinement (rounds) can boost accuracy. The most significant gains often occur in the first one to two rounds.

* **Stability vs. Peak Performance:** **ACC-Collab +** (red) achieves the highest single accuracy score on Mistral but is sometimes outperformed by its non-"+" variant (**ACC-Collab**, orange) on other models. This suggests a potential trade-off between peak performance and consistency across different tasks or models.

In summary, the charts provide strong evidence that the authors' collaborative methods enhance model accuracy through iterative rounds, with the degree of improvement being model-dependent. The results position ACC-Collab as a competitive technique in the landscape of methods designed to improve LLM reasoning or output quality.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Model Accuracy Comparison Across Rounds

### Overview

Three side-by-side line graphs compare the accuracy of several methods across five rounds (0 to 4). Each graph represents a different base model (Llama-3, Mistral, Gemma-2) with multiple training approaches plotted against accuracy. The graphs show progressive improvement in accuracy for most methods, with shaded regions indicating confidence intervals.

### Components/Axes

- **X-axis**: "Round" (0 to 4) - Discrete training iterations

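- **Y-axis**: "Accuracy" (0.855 to 0.885 in the Llama-3 graph; the Mistral and Gemma-2 values reported below fall between roughly 0.81 and 0.86) - Performance metric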

- **Legends**: Positioned at bottom of each graph with color-coded labels:

- **Blue**: SoM (2x)

- **Teal**: SoM (4x)

- **Purple**: Persona

- **Brown**: DebateTune

- **Green**: SFT

- **Dark Green**: DebateGPT

- **Orange**: ACC-Collab (Ours)

- **Red**: ACC-Collab + (Ours)

### Detailed Analysis

#### Llama-3 Graph

- **ACC-Collab (Ours)**: Starts at 0.873 (Round 0), peaks at 0.882 (Round 4)

- **ACC-Collab + (Ours)**: Begins at 0.858 (Round 0), rises to 0.878 (Round 4)

- **SoM (4x)**: Maintains steady 0.875-0.880 range

- **DebateGPT**: Shows gradual increase from 0.868 to 0.877

- **Confidence Intervals**: Widest for ACC-Collab + (Ours) in early rounds

#### Mistral Graph

- **ACC-Collab (Ours)**: Starts at 0.822 (Round 0), reaches 0.855 (Round 4)

- **ACC-Collab + (Ours)**: Begins at 0.831 (Round 0), peaks at 0.858 (Round 4)

- **DebateTune**: Flat line at 0.830-0.832

- **SFT**: Gradual rise from 0.815 to 0.828

- **Confidence Intervals**: Narrowest for ACC-Collab (Ours)

#### Gemma-2 Graph

- **ACC-Collab (Ours)**: Starts at 0.821 (Round 0), ends at 0.852 (Round 4)

- **ACC-Collab + (Ours)**: Begins at 0.815 (Round 0), reaches 0.855 (Round 4)

- **DebateGPT**: Sharp increase from 0.825 to 0.848

- **Persona**: Steady improvement from 0.818 to 0.842

- **Confidence Intervals**: Most variable for ACC-Collab + (Ours)

### Key Observations

1. **ACC-Collab + (Ours)** reaches the highest round-4 accuracy on Mistral (0.858) and Gemma-2 (0.855), but trails **ACC-Collab (Ours)** on Llama-3 (0.878 vs. 0.882)

2. **SoM (4x)** shows highest stability in Llama-3 but underperforms in Mistral

3. **DebateGPT** demonstrates strongest growth in Gemma-2

4. **Confidence intervals** widen significantly for ACC-Collab + (Ours) in early rounds, suggesting greater uncertainty in initial training phases

5. **All models** show diminishing returns after Round 2, with accuracy plateaus observed in later rounds

### Interpretation

The data suggests that the two ACC-Collab variants reach the highest round-4 accuracy on all three base models, with ACC-Collab + (Ours) leading on Mistral and Gemma-2 and ACC-Collab (Ours) leading on Llama-3. The widening confidence intervals for ACC-Collab + (Ours) in early rounds indicate higher variance in initial performance, which stabilizes as the rounds progress. While SoM (4x) shows promise in Llama-3, its performance is architecture-dependent. The consistent plateaus after Round 2 across all models suggest potential optimization limits or diminishing returns in the methodology. ACC-Collab (Ours) performs strongly on its own, and the "+" variant adds further gains on Mistral and Gemma-2 but not on Llama-3.
