## Scatter Plot Grid: RSA Decision Boundaries Across CVaR μ Values

### Overview

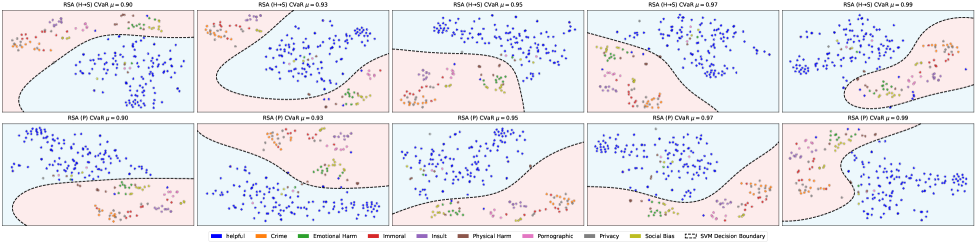

The image shows a 2×5 grid of scatter plots illustrating the decision boundaries of two variants of a classification model (likely "RSA") at five Conditional Value at Risk (CVaR) confidence levels μ. Each plot shows data points colored by category, overlaid on a two-region background (light blue and light pink) separated by a dashed black decision boundary, which the legend labels as an SVM decision boundary. Within each row, μ increases from 0.90 to 0.99 from left to right, and the boundary changes shape accordingly.

### Components/Axes

* **Layout:** A grid with 2 rows and 5 columns.

* **Row Labels (Implicit):**

    * **Top Row:** Titles indicate "RSA (H+S) CVaR μ=[value]".

    * **Bottom Row:** Titles indicate "RSA (P) CVaR μ=[value]".

* **Column Labels (Explicit in Titles):** Each column corresponds to a specific μ value: 0.90, 0.93, 0.95, 0.97, 0.99.

* **Plot Axes:** The X and Y axes are not labeled with titles or numerical markers. They represent a 2D feature space.

* **Background Regions:** Each plot is divided into two colored regions:

    * **Light Blue Region:** Represents one class or decision area.

    * **Light Pink Region:** Represents the opposing class or decision area.

* **Decision Boundary:** A dashed black line (`---`) separates the two background regions.

* **Legend (Bottom Center):** A horizontal legend with 10 entries: nine category colors and one line style.

    * Blue: `Helpful`

    * Orange: `Crime`

    * Green: `Emotional Harm`

    * Red: `Immoral`

    * Purple: `Insult`

    * Brown: `Physical Harm`

    * Pink: `Pornographic`

    * Gray: `Privacy`

    * Olive Green: `Social Bias`

    * Dashed black line: `SVM Decision Boundary`, matching the boundary drawn in each plot.

### Detailed Analysis

**Trend Verification & Spatial Grounding:**

The primary trend is the increasing complexity and non-linearity of the SVM decision boundary as the μ parameter increases from left to right in both rows.

* **Top Row - RSA (H+S):**

    * **μ=0.90:** The boundary is a relatively smooth, convex curve. The `Helpful` (blue) points are predominantly in the light blue region, while points for categories like `Crime` (orange), `Immoral` (red), and `Physical Harm` (brown) are mostly in the light pink region. Other categories are mixed.

    * **μ=0.93:** The boundary begins to develop a slight indentation on the right side.

    * **μ=0.95:** The indentation deepens, creating a more pronounced concave section.

    * **μ=0.97:** The boundary becomes significantly more complex, with a large, deep concave "bay" forming on the right side, enclosing a cluster of points.

    * **μ=0.99:** The boundary is highly non-linear, with multiple curves and indentations, closely wrapping around clusters of data points.

* **Bottom Row - RSA (P):**

    * **μ=0.90:** The boundary is a smooth, convex curve, similar to the top row at μ=0.90 but with a different orientation.

    * **μ=0.93:** The boundary starts to develop a slight wave.

    * **μ=0.95:** The wave becomes more pronounced, forming a gentle S-curve.

    * **μ=0.97:** The S-curve sharpens, with steeper gradients.

    * **μ=0.99:** The boundary is complex and wavy, though perhaps slightly less intricate than the top row's μ=0.99 plot.

**Category Distribution (General Observation across plots):**

* `Helpful` (blue) points cluster tightly and mostly fall inside the light blue region, where they form its core.

* Categories like `Crime` (orange), `Immoral` (red), `Physical Harm` (brown), and `Insult` (purple) frequently appear in the light pink region, often intermingled.

* `Emotional Harm` (green), `Privacy` (gray), and `Social Bias` (olive) points are more dispersed and appear in both regions, suggesting they are harder for the model to classify cleanly based on the shown features.

* `Pornographic` (pink) points are relatively few and scattered.
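The qualitative observations above could be quantified by counting, for each category, the share of points that fall on one side of the boundary. The following is a minimal sketch under stated assumptions: the `region_shares` helper, the sample points, and the linear stand-in `boundary` function are all hypothetical and are not taken from the figure, whose underlying data and fitted boundary are not available here.

```python
from collections import defaultdict


def region_shares(points, decision_fn):
    """Return {category: fraction of its points where decision_fn(x, y) >= 0}."""
    totals = defaultdict(int)
    positive = defaultdict(int)
    for x, y, category in points:
        totals[category] += 1
        if decision_fn(x, y) >= 0:
            positive[category] += 1
    return {category: positive[category] / totals[category] for category in totals}


# Hypothetical 2D points (x, y, category); NOT values read from the figure.
points = [
    (-2.0, 0.5, "Helpful"), (-1.5, -0.3, "Helpful"),
    (-1.0, 0.2, "Helpful"), (0.4, 0.0, "Helpful"),
    (1.5, 0.1, "Crime"), (2.0, -0.4, "Crime"),
    (-0.5, 1.0, "Privacy"), (0.8, -1.0, "Privacy"),
]


def boundary(x, y):
    """Hypothetical stand-in boundary: x < 0 is treated as the light blue side."""
    return -x


shares = region_shares(points, boundary)
for category, share in shares.items():
    print(f"{category}: {share:.2f} of points on the light blue side")
```

A share near 1.0 or 0.0 would correspond to a category that sits cleanly on one side, while a share near 0.5 would correspond to the dispersed categories described above.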

### Key Observations

1. **Parameter Sensitivity:** The model's decision boundary is highly sensitive to the CVaR μ parameter. A higher μ (closer to 1.0) leads to a more complex, data-adaptive boundary.

2. **Variant Difference:** While both RSA (H+S) and RSA (P) show increased boundary complexity with higher μ, the specific shapes differ. The (H+S) variant develops a deep concave feature, while the (P) variant develops a pronounced S-curve.

3. **Class Separability:** The `Helpful` class appears to be the most separable, forming a distinct cluster. Other categories, particularly those related to harm or misconduct, overlap significantly in the feature space.

4. **Boundary-Data Relationship:** At high μ values (0.97, 0.99), the decision boundary appears to "chase" or tightly enclose specific clusters of points, which may indicate a risk of overfitting to the training data distribution.

### Interpretation

This visualization shows how the CVaR μ parameter moves a robust classification model (RSA) between a simple decision boundary and one fitted closely to the data. The μ parameter likely controls the model's conservatism or risk sensitivity.

* **Low μ (0.90):** The model learns a simple, generalizable boundary. It prioritizes a smooth decision surface that may misclassify some ambiguous points (e.g., `Emotional Harm`, `Privacy`) but captures the broad separation between `Helpful` content and clearly harmful categories.

* **High μ (0.99):** The model becomes highly sensitive to the worst-case data points (the tail of the distribution, as per CVaR). This results in a complex boundary that tries to correctly classify almost every training point, including the ambiguous ones. While this may improve training accuracy, the intricate boundary is likely to be less robust and may not generalize well to new, unseen data.
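For reference, an empirical CVaR at level μ is commonly computed as the mean of the worst (1 - μ) fraction of losses. The sketch below illustrates that tail average at the five μ values shown in the figure. The `empirical_cvar` helper and the loss values are hypothetical, and the source does not say how RSA actually incorporates CVaR.

```python
import math


def empirical_cvar(losses, mu):
    """Mean of the worst (1 - mu) fraction of losses (higher loss = worse)."""
    if not 0.0 <= mu < 1.0:
        raise ValueError("mu must be in [0, 1)")
    ordered = sorted(losses, reverse=True)  # worst losses first
    # Tail size; rounding guards against float error such as 5.000000000000004.
    k = max(1, math.ceil(round((1.0 - mu) * len(ordered), 9)))
    tail = ordered[:k]
    return sum(tail) / k


# Hypothetical per-sample losses 1.0 .. 100.0; NOT data from the figure.
losses = [float(i) for i in range(1, 101)]

for mu in (0.90, 0.93, 0.95, 0.97, 0.99):
    print(f"mu={mu:.2f}  CVaR={empirical_cvar(losses, mu):.1f}")
```

With these 100 evenly spaced losses, the tail shrinks from ten samples at μ=0.90 to a single sample at μ=0.99, so the statistic depends on progressively fewer and more extreme points. This is one way to read the "worst-case" sensitivity described above.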

The difference between the **RSA (H+S)** and **RSA (P)** rows suggests these are two different model formulations or feature sets. The (H+S) variant's boundary reacts more dramatically to high μ, creating a deep concavity, which might indicate it is using features that create a more challenging, non-linear separation problem for certain data clusters.

**In essence, the image is a technical demonstration of how a model's decision-making complexity can be tuned via a risk parameter (μ), highlighting the fundamental machine learning tension between fitting the training data closely and maintaining a simple, generalizable model.**