
## Line Chart: Average Response Length

### Overview

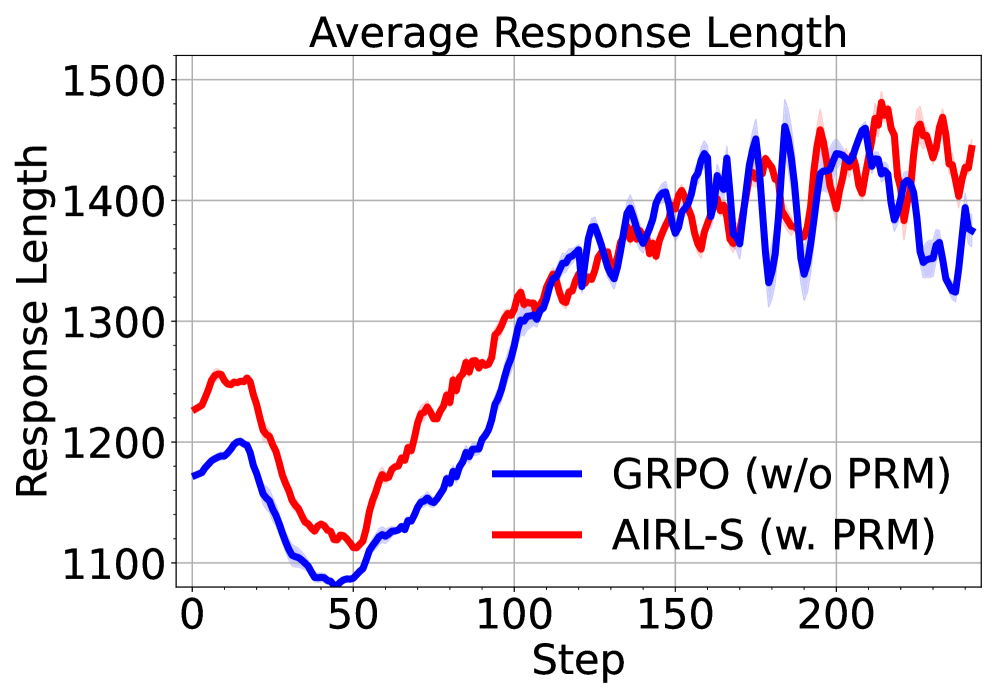

The image displays a line chart comparing the average response length (in tokens or characters, unit unspecified) over training steps for two different reinforcement learning methods. The chart shows the progression of response length as training advances, with both methods exhibiting an initial dip followed by a sustained increase.
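The chart plots one value per training step. Below is a minimal sketch of how one such point could be computed. It assumes that each step samples a batch of responses and that the plotted value is their mean length. The function name `average_response_length` and the `unit` switch are hypothetical. Whitespace splitting is only a rough stand-in for a real tokenizer, because the chart does not say whether it counts tokens or characters.

```python
from statistics import mean


def average_response_length(responses, unit="chars"):
    """Mean length of one training step's sampled responses (hypothetical helper).

    unit="chars" counts characters; unit="words" splits on whitespace as a
    rough stand-in for tokens (a real pipeline would use the model's tokenizer).
    """
    if not responses:
        raise ValueError("no responses for this step")
    if unit == "chars":
        lengths = [len(r) for r in responses]
    elif unit == "words":
        lengths = [len(r.split()) for r in responses]
    else:
        raise ValueError(f"unknown unit: {unit!r}")
    return mean(lengths)


# One chart point per step: step -> responses sampled at that step (toy data).
batches = {
    0: ["The answer is 4.", "2 + 2 = 4, so the answer is 4."],
    1: ["First, add 2 and 2. That gives 4."],
}
curve = {step: average_response_length(rs, unit="words") for step, rs in batches.items()}
print(curve)
```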

### Components/Axes

* **Chart Title:** "Average Response Length" (centered at the top).

* **Y-Axis:** Labeled "Response Length". The scale runs from 1100 to 1500, with major gridlines at intervals of 100 (1100, 1200, 1300, 1400, 1500).

* **X-Axis:** Labeled "Step". The scale runs from 0 to over 200, with major tick marks and labels at 0, 50, 100, 150, and 200.

* **Legend:** Located in the bottom-right quadrant of the chart area.
  * A blue line is labeled **"GRPO (w/o PRM)"**.
  * A red line is labeled **"AIRL-S (w. PRM)"**.

* **Data Series:** Two lines with shaded regions (likely representing confidence intervals or standard deviation).
  * **Blue Line (GRPO w/o PRM):** Represents the method without a Process Reward Model.
  * **Red Line (AIRL-S w. PRM):** Represents the method with a Process Reward Model.

### Detailed Analysis

**Trend Verification:**

* **Both Lines:** Exhibit a similar macro-trend: an initial decline from step 0 to approximately step 50, followed by a strong, sustained upward trend until the end of the plotted steps (~240).

* **Blue Line (GRPO):** Starts at ~1170. Dips to a minimum of ~1080 at step 50. Rises steadily, crossing 1300 around step 110 and 1400 around step 160. Shows high volatility after step 150, with sharp peaks and troughs between ~1320 and ~1460.

* **Red Line (AIRL-S):** Starts higher at ~1230. Dips to a minimum of ~1110 at step 50. Rises more steeply than the blue line at first, crossing 1300 around step 90 and 1400 around step 140. Stays generally above the blue line from step ~60 onward, apart from a brief crossover near step 150. Also shows high volatility in later steps, with peaks reaching near 1480.

**Key Data Points (Approximate):**

* **Step 0:** GRPO ~1170, AIRL-S ~1230.

* **Step 50 (Trough):** GRPO ~1080, AIRL-S ~1110.

* **Step 100:** GRPO ~1200, AIRL-S ~1280.

* **Step 150:** GRPO ~1400, AIRL-S ~1380 (lines intersect around here).

* **Step 200:** GRPO ~1420, AIRL-S ~1440.

* **Final Steps (~240):** GRPO ~1380, AIRL-S ~1450.
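The approximate readings above can be treated as data to work out the gap between the methods and the points where the lines cross. This sketch places the final readings at step 240, which is an assumption because the list only says "~240". It also interpolates linearly between readings, so the results are only as precise as the eyeballed values.

```python
# Approximate readings from the "Key Data Points" list (step -> response length).
# Placing the final readings at step 240 is an assumption.
steps = [0, 50, 100, 150, 200, 240]
grpo = [1170, 1080, 1200, 1400, 1420, 1380]
airl_s = [1230, 1110, 1280, 1380, 1440, 1450]

# Gap = AIRL-S minus GRPO; positive means AIRL-S responses are longer.
gaps = [a - g for a, g in zip(airl_s, grpo)]


def crossings(xs, diffs):
    """Linearly interpolate the x positions where diffs is zero or changes sign."""
    out = []
    for i in range(len(xs) - 1):
        x0, x1, d0, d1 = xs[i], xs[i + 1], diffs[i], diffs[i + 1]
        if d0 == 0:
            out.append(x0)
        elif d0 * d1 < 0:
            # Fraction of the interval at which the straight line reaches zero.
            out.append(x0 + (x1 - x0) * d0 / (d0 - d1))
    if diffs and diffs[-1] == 0:
        out.append(xs[-1])
    return out


for s, gap in zip(steps, gaps):
    print(f"step {s:>3}: gap {gap:+d}")
print("approximate crossings:", crossings(steps, gaps))
```

With these readings the gaps are +60, +30, +80, -20, +20 and +70, largest at step 100. The sign changes fall at roughly steps 140 and 175, which is consistent with the lines intersecting around step 150.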

**Shaded Regions (Variance):**

The shaded blue and red regions around each line indicate variability. The chart does not state whether they show confidence intervals or standard deviation. The variance appears to increase for both methods as the step count and response length grow. This is clearest after step 150, where the shaded bands widen and the lines become more jagged.
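If the shaded bands show standard deviation across runs, each band can be reproduced as the mean ± one standard deviation at each step. The sketch below assumes several independent runs (seeds) per method, which the chart does not state. It uses toy values, not readings from the figure. A confidence-interval band would instead scale the spread by the standard error. The function name `band` is hypothetical.

```python
from statistics import mean, stdev


def band(runs):
    """Per-step (mean, mean - sd, mean + sd) across runs (hypothetical helper).

    runs: equal-length curves, one per seed; the number of runs behind the
    chart is not stated, so this input shape is an assumption.
    """
    if len(runs) < 2:
        raise ValueError("need at least two runs for a sample standard deviation")
    rows = []
    for values in zip(*runs):  # all runs' lengths at one step
        m = mean(values)
        s = stdev(values)
        rows.append((m, m - s, m + s))
    return rows


# Toy curves for three hypothetical seeds (not read from the chart).
runs = [
    [1200, 1300, 1450],
    [1210, 1320, 1380],
    [1190, 1280, 1520],
]
for step, (m, lo, hi) in enumerate(band(runs)):
    print(step, round(m), round(lo), round(hi))
```

In this toy data the band width grows from 20 to 40 to 140, which mirrors the widening pattern described above.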

### Key Observations

1. **Initial Dip:** Both methods show a decrease in average response length during the first 50 steps of training.

2. **Sustained Growth:** After step 50, both methods show a large, sustained increase in response length for the remainder of the training shown.

3. **Method Comparison:** The AIRL-S (with PRM) method generally results in longer average responses than GRPO (without PRM) after the initial training phase (post step ~60). The gap between them is most pronounced between steps 60-120.

4. **Increased Volatility:** In the later stages of training (steps >150), both methods exhibit high-frequency oscillations in average response length, suggesting less stability in the learned policy's output length.
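These oscillations can be quantified with a rolling standard deviation of the step-to-step changes. Differencing removes the steady upward trend, so a smoothly rising curve scores near zero and a jagged one scores high. This is an illustrative metric that the chart does not report. The function name, window size and toy curve are all hypothetical.

```python
from statistics import pstdev


def rolling_volatility(series, window):
    """Rolling population std of step-to-step changes (hypothetical metric).

    Differencing first removes a steady trend, so only oscillation is measured.
    """
    diffs = [b - a for a, b in zip(series, series[1:])]
    if not 2 <= window <= len(diffs):
        raise ValueError("window must be between 2 and len(series) - 1")
    return [pstdev(diffs[i:i + window]) for i in range(len(diffs) - window + 1)]


# Toy curve (illustrative, not read from the chart): steady growth, then oscillation.
curve = [1100, 1150, 1200, 1250, 1300, 1460, 1320, 1450, 1330, 1470]
print([round(v, 1) for v in rolling_volatility(curve, window=3)])
```

The window size sets the trade-off. Short windows react quickly to new oscillations, and longer windows give a smoother but more delayed volatility signal.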

### Interpretation

This chart demonstrates the effect of two different reinforcement learning algorithms on the verbosity of a model's generated responses during training.

* **The initial dip** suggests an early phase in which the models may be optimizing for other objectives (such as accuracy or reward) and shortening their outputs in the process, or exploring a more concise output space.

* **The strong upward trend** indicates that both algorithms come to favor longer responses over time. This could be because longer responses are correlated with higher rewards in the training environment (e.g., more detailed answers are preferred).

* **The longer responses under AIRL-S (w. PRM)** suggest that incorporating a Process Reward Model provides a stronger learning signal for elaboration than the GRPO baseline without it. Because a PRM scores intermediate steps rather than only the final answer, its more granular feedback may encourage longer reasoning. The chart does not show that AIRL-S is more stable, since both methods become volatile late in training.

* **The late-stage volatility** is a critical observation. It indicates that while the models learn to produce longer responses, the consistency of that length degrades. This could be a sign of over-optimization, policy instability, or that the reward function does not strongly penalize variance in length once a certain threshold is passed.

In summary, the data suggests that AIRL-S with a PRM produces longer responses than GRPO without a PRM for most of training. Both methods lead to increased length and, eventually, to less consistent output length.