
## Diagram: Evaluation Pipeline for AI Models

### Overview

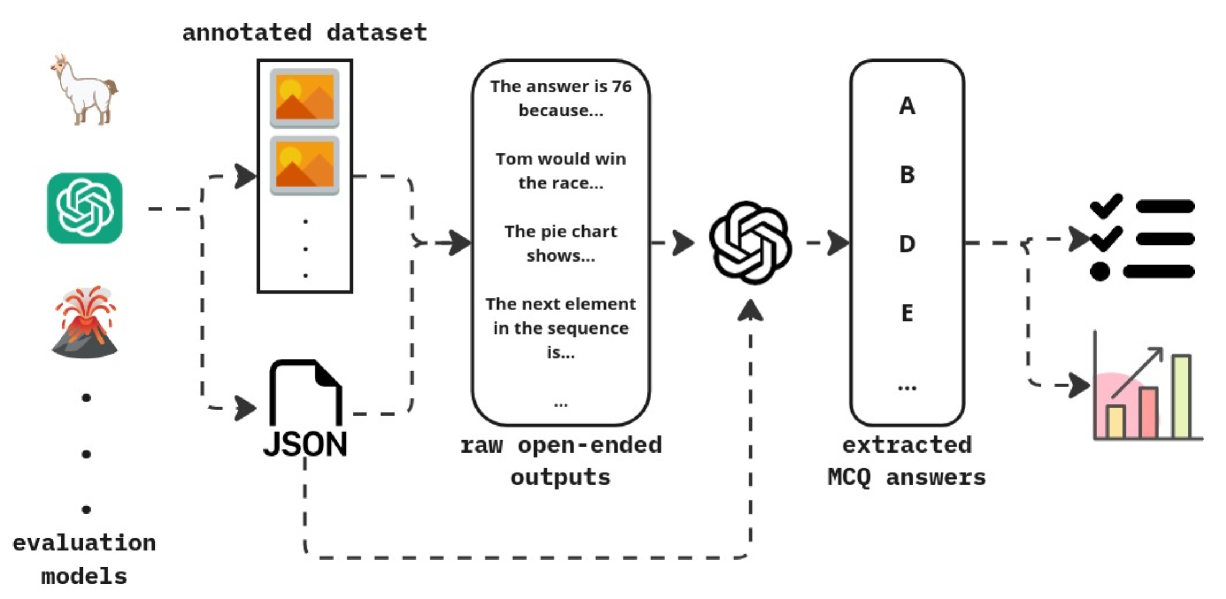

The image is a flowchart of a pipeline for evaluating AI models. Multiple evaluation models process an annotated dataset and generate raw open-ended outputs. A parsing step then extracts a multiple-choice question (MCQ) answer from each output, and the extracted answers are scored. The diagram uses icons, text labels, and directional arrows to depict the flow of data and processing steps.

### Components

The diagram is organized into three main sections arranged from left to right, with data flowing in the same direction.

**1. Left Section: Input & Models**

* **Label:** `evaluation models` (bottom-left).

* **Icons:** A vertical list of model icons:

* A llama (likely representing LLaMA or similar open-source models).

* A green, circular logo with a white knot-like symbol (resembling the OpenAI/ChatGPT logo).

* A volcano icon (possibly representing a specific model or framework).

* Three vertical dots (`...`) indicating additional models.

* **Data Source:** An `annotated dataset` (top-center of this section), depicted as a box containing two image icons (orange/yellow landscapes) and vertical dots (`...`), suggesting a collection of image-text pairs.

* **Data Format:** A `JSON` file icon, connected via a dashed arrow from the dataset, indicating the dataset's format.
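
To make the `annotated dataset` and its `JSON` format concrete, here is a minimal sketch of what one record might look like and how it could be loaded. The diagram does not show the schema, so every field name below (`item_id`, `image`, `question`, `options`, `answer`) and the sample record itself are assumptions chosen for illustration.

```python
import json
from dataclasses import dataclass


# Hypothetical schema: the field names are illustrative and are not
# taken from the diagram, which only shows a generic JSON file icon.
@dataclass
class AnnotatedItem:
    item_id: str
    image: str      # path or URL of the image
    question: str
    options: dict   # option letter -> option text
    answer: str     # gold option letter


# Made-up sample record, used only to exercise the loader.
SAMPLE_JSON = """
[
  {"item_id": "q1", "image": "img/q1.png",
   "question": "Which runner finishes first?",
   "options": {"A": "Tom", "B": "Ann", "C": "They tie"},
   "answer": "A"}
]
"""


def load_items(text):
    """Parse a JSON array of records into AnnotatedItem objects."""
    return [AnnotatedItem(**record) for record in json.loads(text)]


items = load_items(SAMPLE_JSON)
print(items[0].question, "->", items[0].answer)
```

Keeping the gold answer as a single option letter is what lets the later scoring step reduce to a plain equality check.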

**2. Middle Section: Processing Stages**

* **Stage 1 - Raw Outputs:** A rounded rectangle labeled `raw open-ended outputs`. It contains example text snippets:

* `The answer is 76 because...`

* `Tom would win the race...`

* `The pie chart shows...`

* `The next element in the sequence is...`

* `...` (ellipsis indicating more outputs).

* **Processing Node:** A ChatGPT-style icon (interlocking rings) sits between the two main processing stages. Arrows point into it from the `raw open-ended outputs` and from the `JSON` file below, and an arrow points out from it to the next stage. This suggests a central processing or parsing model (likely a large language model) is used to transform the data.

* **Stage 2 - Extracted Answers:** A rounded rectangle labeled `extracted MCQ answers`. It contains a vertical list of letters:

* `A`

* `B`

* `D`

* `E`

* `...` (ellipsis indicating more answers).
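
The step from `raw open-ended outputs` to `extracted MCQ answers` can be sketched as a function that maps free text to an option letter. The diagram suggests a language model does this; the sketch below swaps in a simple rule-based heuristic so that it runs without any external service. The function name `extract_choice`, the patterns, and the option maps are assumptions for illustration only.

```python
import re

# Patterns tried in order; each captures one option letter A-E.
_PATTERNS = [
    # "The answer is (B) ..." or "answer is: B"; only the phrase is
    # matched case-insensitively, the letter must be uppercase.
    re.compile(r"(?i:answer\s+is)\s*:?\s*\(?([A-E])\)?(?![A-Za-z])"),
    # The whole output is just a letter, e.g. "D" or "(D)."
    re.compile(r"^\s*\(?([A-E])\)?[.:]?\s*$"),
]


def extract_choice(raw_output, options=None):
    """Return the option letter implied by raw_output, or None.

    Rule-based stand-in for the LLM parser shown in the diagram.
    """
    for pattern in _PATTERNS:
        match = pattern.search(raw_output)
        if match:
            return match.group(1)
    if options:
        # Fall back to matching option text, accepting only a unique hit.
        hits = [
            letter
            for letter, text in options.items()
            if re.search(r"\b" + re.escape(text) + r"\b", raw_output, re.IGNORECASE)
        ]
        if len(hits) == 1:
            return hits[0]
    return None


print(extract_choice("The answer is (B) because the chart peaks there."))
print(extract_choice("The answer is 76 because...", {"A": "76", "B": "67"}))
print(extract_choice("Tom would win the race...", {"A": "Tom", "B": "Ann"}))
```

Returning `None` when no unique letter can be recovered keeps ambiguous outputs from being silently scored as a guess; a real LLM-based extractor would need a comparable "cannot determine" outcome.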

**3. Right Section: Outputs & Evaluation**

* **Output 1 (Top):** A set of evaluation symbols: two checkmarks (`✓✓`), a filled circle (`●`), and three horizontal bars of varying lengths. This likely represents a scoring or grading rubric.

* **Output 2 (Bottom):** A small bar chart with three bars of increasing height (pink, red, yellow-green) and an upward-trending arrow overlay. This represents quantitative results or performance metrics.

**Flow & Connections:**

* Solid arrows indicate the primary data flow: from the `evaluation models` and `annotated dataset` into the `raw open-ended outputs`, then through the central processing node to the `extracted MCQ answers`, and finally to the two output visualizations.

* Dashed arrows indicate secondary or supporting data flows, notably from the `JSON` file to the central processing node and from the `extracted MCQ answers` to the outputs.

### Detailed Analysis

The diagram explicitly maps a multi-step evaluation methodology:

1. **Input Phase:** Multiple AI models (llama, ChatGPT-like, volcano, etc.) are tasked with processing a common `annotated dataset` (likely containing images and questions/answers in JSON format).

2. **Generation Phase:** The models produce `raw open-ended outputs`—free-text responses to the dataset's prompts.

3. **Transformation Phase:** A central language model (represented by the ChatGPT icon) processes these open-ended texts. Its function is to parse or extract structured multiple-choice answers from the unstructured text.

4. **Extraction Phase:** The result is a set of `extracted MCQ answers` (e.g., A, B, D, E), which are standardized, machine-readable responses.

5. **Evaluation Phase:** These extracted answers are then evaluated, producing both qualitative (checkmarks, bars) and quantitative (bar chart) results.
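
The five phases above can be sketched end to end as a small loop. This is a toy illustration under stated assumptions, not the pipeline's actual implementation: the evaluated models are plain Python functions that take only the question text (the image input is omitted), the parser is a substring matcher standing in for the language model, and all names and items are hypothetical.

```python
# Hypothetical items: question text, option map, and gold letter.
ITEMS = [
    {"question": "2 + 2 = ?", "options": {"A": "3", "B": "4"}, "answer": "B"},
    {"question": "Capital of France?", "options": {"A": "Paris", "B": "Rome"}, "answer": "A"},
]


def model_verbose(question):
    """Toy stand-in for an evaluated model that answers in full sentences."""
    return {
        "2 + 2 = ?": "The sum is 4 because 2 and 2 make 4.",
        "Capital of France?": "It is Rome, I believe.",
    }[question]


def model_terse(question):
    """Toy stand-in for an evaluated model that answers tersely."""
    return {"2 + 2 = ?": "4", "Capital of France?": "Paris"}[question]


def toy_extractor(raw, options):
    """Stand-in for the LLM parser: the letter whose option text appears in raw."""
    hits = [letter for letter, text in options.items() if text.lower() in raw.lower()]
    return hits[0] if len(hits) == 1 else None


def run_pipeline(models, items, extractor):
    """Return {model_name: [bool per item]}, where True marks a correct answer."""
    results = {}
    for name, generate in models.items():
        graded = []
        for item in items:
            raw = generate(item["question"])            # generation phase
            letter = extractor(raw, item["options"])    # transformation and extraction
            graded.append(letter == item["answer"])     # evaluation phase
        results[name] = graded
    return results


results = run_pipeline(
    {"verbose": model_verbose, "terse": model_terse}, ITEMS, toy_extractor
)
print(results)
```

Passing the extractor in as an argument mirrors the diagram's layout: every evaluated model goes through the same parser, so differences in scores reflect the models rather than differences in how their text was read.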

### Key Observations

* **Hybrid Evaluation:** The pipeline combines the generative capability of models (producing open-ended text) with the standardized scoring of MCQs.

* **Central Parser:** The ChatGPT-like icon in the middle is pivotal. It acts as a "judge" or "parser" that converts subjective, open-ended text into objective, gradable answers.

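* **Non-Sequential Answer Letters:** The extracted answers box lists `A, B, D, E`, skipping `C`. Each letter is most plausibly the answer extracted for a different question rather than an entry in an option list, so the gap is likely just a consequence of the examples chosen. The appearance of `E` suggests that at least some questions offer five or more options.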

* **Multiple Output Forms:** The evaluation produces both symbolic (checkmarks) and graphical (bar chart) results, suggesting a comprehensive assessment.

### Interpretation

This diagram illustrates a framework for benchmarking AI models, particularly on tasks that require reasoning (e.g., visual question answering, logical puzzles). Its central idea is to use a language model not as a test-taker but as an **evaluation intermediary**: it maps the free-form responses from the various models onto a uniform format (MCQ option letters) that can be scored automatically and consistently.

The process addresses a key challenge in AI evaluation: how to fairly compare models that produce different styles of open-ended text. By funneling all outputs through a common parser to extract a standardized answer format, the framework aims to create a level playing field for comparison. The final outputs (symbolic and graphical) suggest the results are used for both detailed error analysis (which questions were missed) and high-level performance tracking (overall accuracy trends). The presence of multiple model icons on the left emphasizes that this pipeline is designed for comparative analysis across different AI systems.
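
As a rough illustration of the comparative step, per-question grades can be aggregated into one accuracy figure per model and then ranked, which is the kind of summary the bar chart in the diagram appears to represent. The model names and grades below are made-up placeholders, not results from any real evaluation.

```python
def accuracy(graded):
    """Fraction of correct answers; 0.0 for an empty list."""
    return sum(graded) / len(graded) if graded else 0.0


def rank_models(results):
    """Return (model_name, accuracy) pairs sorted from best to worst."""
    scores = {name: accuracy(graded) for name, graded in results.items()}
    return sorted(scores.items(), key=lambda pair: pair[1], reverse=True)


# Hypothetical per-question grades (True = correct), for illustration only.
graded_by_model = {
    "model_a": [True, False, True, True],
    "model_b": [True, True, True, True],
    "model_c": [False, False, True, False],
}

ranking = rank_models(graded_by_model)
for name, score in ranking:
    # One '#' per 10 percentage points gives a crude text bar chart.
    print(f"{name:<8} {'#' * round(score * 10):<10} {score:.0%}")
```

The per-question lists support error analysis (which questions each model missed), while the ranked accuracies support the high-level comparison.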