## Diagrams: Binary Neural Network Operations with Modulo 2 Arithmetic

### Overview

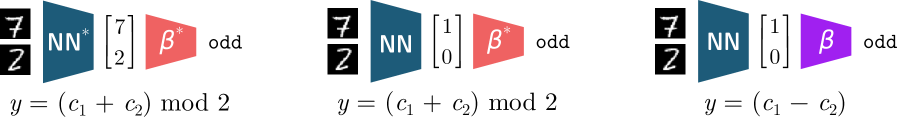

The image contains three sequential diagrams illustrating binary neural network (NN) operations combined with modulo 2 arithmetic. Each diagram includes a 2x1 column matrix, a blue "NN" block, a colored "β" block (red or purple), and an equation defining the output `y`. The first two equations apply addition followed by a binary reduction (`mod 2`) to the inputs `c1` and `c2`; the third applies subtraction with no `mod 2`.

### Components/Axes

1. **Left Matrix**:

   - First diagram: `[[7], [2]]`

   - Second and third diagrams: `[[1], [0]]`

   - Labels: No explicit axis titles; each matrix sits at the left of its diagram, in the position of an input vector.

2. **Blocks**:

   - **Blue "NN" Block**: Positioned between the matrix and "β" block.

   - **Colored "β" Block**:

     - First diagram: Red with superscript `*` (`β*`), labeled "odd".

     - Second diagram: Red without superscript (`β`), labeled "odd".

     - Third diagram: Purple (`β`), labeled "odd".

3. **Equations**:

   - First diagram: `y = (c1 + c2) mod 2`

   - Second diagram: `y = (c1 + c2) mod 2`

   - Third diagram: `y = (c1 - c2)`

### Detailed Analysis

- **Matrix Inputs**:

  - The first diagram uses a matrix with values `7` and `2`, while the latter two use `1` and `0`. Since `7 mod 2 = 1` and `2 mod 2 = 0`, the later matrix equals the first one reduced modulo 2, which suggests a transition from non-binary to binary input representation.

- **NN Block**:

  - Consistently blue across all diagrams, implying a fixed neural network layer.

- **β Block Variations**:

  - **Red with `*` (`β*`)**: Likely a modified bias term (e.g., learnable parameter).

  - **Red without `*` (`β`)**: Standard bias term.

  - **Purple (`β`)**: May represent a different activation function or operation (e.g., subtraction instead of addition).

- **Equations**:

  - The first two diagrams use addition modulo 2, while the third uses subtraction with no `mod 2`. This indicates a shift in arithmetic logic, possibly for error correction or alternative computation.
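To make the difference between the two equations concrete, the following minimal sketch enumerates both of them over binary inputs. It assumes `c1` and `c2` each take values in `{0, 1}`, which the diagrams do not state explicitly; the function names `add_mod2` and `subtract` are illustrative and do not appear in the image.

```python
from itertools import product


def add_mod2(c1: int, c2: int) -> int:
    """Equation shown in the first two diagrams: y = (c1 + c2) mod 2."""
    return (c1 + c2) % 2


def subtract(c1: int, c2: int) -> int:
    """Equation shown in the third diagram: y = (c1 - c2), with no modulo."""
    return c1 - c2


# Assumption: inputs are binary. Each row is (c1, c2, y_add, y_sub).
table = [
    (c1, c2, add_mod2(c1, c2), subtract(c1, c2))
    for c1, c2 in product((0, 1), repeat=2)
]

for c1, c2, y_add, y_sub in table:
    print(f"c1={c1} c2={c2}  (c1 + c2) mod 2 = {y_add}  c1 - c2 = {y_sub}")
```

Under this assumption the first equation always yields `0` or `1`, while the third can also yield `-1` (for `c1 = 0`, `c2 = 1`), so its output is not guaranteed to be binary.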

### Key Observations

1. **Consistency in NN Block**: The blue "NN" block remains unchanged, suggesting it acts as a constant processing unit.

2. **β Block Role**: The color and superscript differences in "β" imply distinct functional roles (e.g., learnable vs. fixed parameters).

3. **Equation Shift**: The third diagram’s subtraction (`y = (c1 - c2)`, with no `mod 2`) deviates from the addition modulo 2 in the first two, hinting at a specialized use case (e.g., differential computation).

### Interpretation

These diagrams likely model a binary neural network architecture where:

- **Inputs** (`c1`, `c2`) are processed through a neural network layer (`NN`).

- **Bias Terms** (`β`) modulate the output, with variations in color/superscript indicating parameterization or activation function differences.

- **Modulo 2 Arithmetic** (`mod 2`) restricts the output of the first two equations to `0` or `1`; binary outputs of this kind are often motivated by energy-efficient hardware implementations.

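- The transition from addition modulo 2 to plain subtraction in the third diagram may represent a correction mechanism or an alternative logic operation. For binary inputs, `(c1 + c2) mod 2` is XOR, whereas `c1 - c2` without `mod 2` can take the values `-1`, `0`, and `1`, so it does not map directly onto a gate such as XNOR.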
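The relationship between these equations and logic gates can be checked directly. The sketch below is only an illustration under the assumption that `c1` and `c2` are single bits; `xor_gate` and `xnor_gate` are hypothetical helper names, not labels from the diagrams.

```python
from itertools import product


def xor_gate(a: int, b: int) -> int:
    """Bitwise XOR on single bits."""
    return a ^ b


def xnor_gate(a: int, b: int) -> int:
    """XNOR on single bits: the complement of XOR."""
    return 1 - (a ^ b)


pairs = list(product((0, 1), repeat=2))

# Addition modulo 2 coincides with XOR on bits.
add_is_xor = all((c1 + c2) % 2 == xor_gate(c1, c2) for c1, c2 in pairs)

# Subtraction reduced modulo 2 also coincides with XOR,
# because -1 and 1 are congruent modulo 2.
sub_mod2_is_xor = all((c1 - c2) % 2 == xor_gate(c1, c2) for c1, c2 in pairs)

# Plain subtraction (no modulo), as written in the third diagram,
# does not reproduce XNOR: for c1 = c2 it gives 0, while XNOR gives 1.
plain_sub_is_xnor = all(c1 - c2 == xnor_gate(c1, c2) for c1, c2 in pairs)

print(add_is_xor, sub_mod2_is_xor, plain_sub_is_xnor)
```

If the third equation were also reduced modulo 2, it would be indistinguishable from the first two on binary inputs, so any functional difference in the third diagram would have to come from the missing `mod 2` rather than from the sign.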

The use of `7` and `2` in the first matrix suggests non-binary inputs being converted to binary via the NN layer, while `1` and `0` in later diagrams indicate direct binary input handling. The "odd" label on β blocks might relate to parity checks or odd-even classification tasks.
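One way to probe the parity reading is to evaluate each equation on the matrix values shown. This is a sketch under two assumptions that the image does not confirm: that `c1` and `c2` are the top and bottom entries of the input matrix, and that the "NN" block passes them through unchanged. The helper names `reduce_mod2` and `is_odd` are illustrative.

```python
def reduce_mod2(vector):
    """Reduce every entry of a vector modulo 2."""
    return [v % 2 for v in vector]


def is_odd(y: int) -> bool:
    """Parity check; in Python, y % 2 is 1 for odd y, including negative y."""
    return y % 2 == 1


first_input = [7, 2]   # matrix in the first diagram
binary_input = [1, 0]  # matrix in the second and third diagrams

# The first matrix reduced modulo 2 equals the later binary matrix.
reduced = reduce_mod2(first_input)

# Assumption: c1, c2 are the matrix entries, fed directly into each equation.
outputs = [
    (first_input[0] + first_input[1]) % 2,    # first diagram
    (binary_input[0] + binary_input[1]) % 2,  # second diagram
    binary_input[0] - binary_input[1],        # third diagram
]

print(reduced, outputs, [is_odd(y) for y in outputs])
```

Under these assumptions all three equations evaluate to `1`, an odd value, which is at least consistent with the "odd" label appearing on every β block.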