## Diagram: Impact of Meaningless Tokens on LLM Processing

### Overview

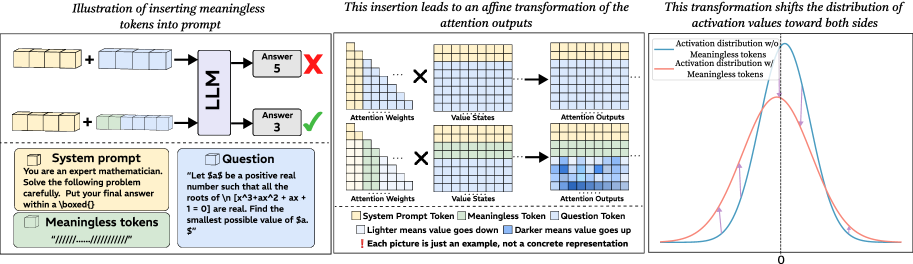

The image illustrates how inserting meaningless tokens into a prompt affects the way a large language model (LLM) processes it, in particular its attention mechanism and activation distributions. It is divided into three sections:

1. **Prompt Insertion**: Shows a system prompt, question, and meaningless tokens.

2. **Attention Mechanism Transformation**: Visualizes changes in attention weights, value states, and outputs.

3. **Activation Distribution Shift**: Compares activation distributions with/without meaningless tokens.

---

### Components/Axes

#### Left Section (Prompt Insertion)

- **System Prompt**:

- Text: *"You are an expert mathematician. Solve the following problem carefully. Put your final answer within a \boxed{}."*

- **Question**:

- Text: *"Let $a$ be a positive real number such that all the roots of $x^3 + ax^2 + 1 = 0$ are real. Find the smallest possible value of $a$."*

- **Meaningless Tokens**:

- Represented as green boxes labeled `"/////....//////"`.

- **LLM Output**:

- Two answers:

- Incorrect: `5` (marked with red X).

- Correct: `3` (marked with green check).

#### Middle Section (Attention Mechanism)

- **Legend**:

- Yellow: System Prompt Token

- Green: Meaningless Token

- Blue: Question Token

- **Components**:

- **Attention Weights**: Heatmaps showing token interactions.

- **Value States**: Gridded representations of token values.

- **Attention Outputs**: Modified grids after token insertion.

- **Arrows**: Indicate transformations between components.

- **Note**: *"Each picture is just an example, not a concrete representation."*

#### Right Section (Activation Distribution)

- **Graph**:

- **X-axis**: Activation value, with a tick labeled `0`.

- **Y-axis**: Labeled `Activation distribution`.

- **Lines**:

- Blue: Activation distribution *without* meaningless tokens.

- Red: Activation distribution *with* meaningless tokens.

- **Legend**:

- Blue: *Without* tokens.

- Red: *With* tokens.

---

### Detailed Analysis

#### Left Section

- The system prompt instructs the LLM to solve a math problem involving real roots of a cubic equation.

- The question includes a LaTeX-formatted equation: $x^3 + ax^2 + 1 = 0$.

- With meaningless tokens (green boxes) inserted, the LLM outputs the incorrect answer `5`; the correct answer is `3`.
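The two prompt variants compared in this section can be sketched as follows. This is a minimal illustration, not the procedure behind the diagram: the helper `build_prompt`, the position of the filler (between system prompt and question), the filler character, and its length are all assumptions, since the image does not specify them.

```python
# Hypothetical sketch: build the same prompt with and without a run of filler.
# The insertion position and filler length are assumptions, not taken from the diagram.
SYSTEM_PROMPT = (
    "You are an expert mathematician. Solve the following problem carefully. "
    "Put your final answer within a \\boxed{}."
)
QUESTION = (
    "Let $a$ be a positive real number such that all the roots of "
    "$x^3 + ax^2 + 1 = 0$ are real. Find the smallest possible value of $a$."
)


def build_prompt(system_prompt: str, question: str,
                 n_filler: int = 0, filler: str = "/") -> str:
    """Join system prompt and question, optionally separated by filler characters."""
    parts = [system_prompt]
    if n_filler > 0:
        parts.append(filler * n_filler)  # the "meaningless tokens" block
    parts.append(question)
    return "\n".join(parts)


baseline = build_prompt(SYSTEM_PROMPT, QUESTION)
perturbed = build_prompt(SYSTEM_PROMPT, QUESTION, n_filler=16)
```

Sending `baseline` and `perturbed` to the same model and comparing the boxed answers would reproduce the kind of side-by-side comparison the left section depicts.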

#### Middle Section

- **Attention Weights**:

- System prompt tokens (yellow) and meaningless tokens (green) appear in lighter or darker shades, indicating changes in their attention weights.

- **Value States**:

- Grids are shaded blue, with darker cells marking shifted values.

- **Attention Outputs**:

- Modified grids show altered token interactions post-insertion.
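To make the relationship between the three components concrete, here is a small NumPy sketch of standard scaled dot-product attention, where the output is the attention weights multiplied by the value states. It is only an illustration under assumptions: the key, value, and query matrices are random stand-ins, the token counts and dimension are hypothetical, and no causal mask is applied. It shows one property that holds for any softmax attention: adding extra key/value rows (here, the filler tokens) lowers the weight assigned to every existing token and therefore changes the output.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(q, k, v):
    """Single-head scaled dot-product attention (no masking)."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights, weights @ v


rng = np.random.default_rng(0)
d = 8                          # hypothetical head dimension
n_sys, n_fill, n_q = 4, 3, 5   # hypothetical token counts

# Random stand-ins for projected key/value states of each token group.
sys_k, sys_v = rng.normal(size=(n_sys, d)), rng.normal(size=(n_sys, d))
fill_k, fill_v = rng.normal(size=(n_fill, d)), rng.normal(size=(n_fill, d))
q_k, q_v = rng.normal(size=(n_q, d)), rng.normal(size=(n_q, d))
queries = rng.normal(size=(n_q, d))  # queries issued by the question tokens

# Without filler: keys/values are [system prompt, question].
w_base, out_base = attention(queries, np.vstack([sys_k, q_k]), np.vstack([sys_v, q_v]))
# With filler: keys/values are [system prompt, filler, question].
w_fill, out_fill = attention(
    queries, np.vstack([sys_k, fill_k, q_k]), np.vstack([sys_v, fill_v, q_v])
)

# Total attention mass each query places on the system-prompt tokens.
sys_mass_base = w_base[:, :n_sys].sum(axis=1)
sys_mass_fill = w_fill[:, :n_sys].sum(axis=1)
```

Comparing `sys_mass_base` with `sys_mass_fill`, and `out_base` with `out_fill`, gives a numerical counterpart to the before/after grids in the middle section.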

#### Right Section

- **Graph Trends**:

- Blue line (without meaningless tokens) peaks sharply at `0`.

- Red line (with meaningless tokens) has a broader, bimodal distribution, peaking at `0` and `1`.

- **Key Insight**:

- Meaningless tokens shift activation values toward both extremes (`0` and `1`), suggesting increased model uncertainty or distraction.
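The contrast between a single sharp peak and a broader bimodal curve can be quantified with simple summary statistics. The sketch below uses synthetic samples only, chosen to mimic the shapes described above; none of the numbers come from the figure, which provides no numerical data. The helper names and the `0.25` window are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000

# Synthetic placeholders, NOT data from the figure:
# one narrow mode at 0, versus two narrow modes at 0 and 1.
without_tokens = rng.normal(0.0, 0.1, n)
with_tokens = np.concatenate(
    [rng.normal(0.0, 0.1, n // 2), rng.normal(1.0, 0.1, n // 2)]
)

bins = np.linspace(-0.5, 1.5, 41)
bin_width = bins[1] - bins[0]


def density(samples):
    """Histogram normalized so that it integrates to 1 over the bin range."""
    hist, _ = np.histogram(samples, bins=bins, density=True)
    return hist


def mass_near(samples, center, half_width=0.25):
    """Fraction of samples within half_width of center."""
    return float(np.mean(np.abs(samples - center) < half_width))


dens_without = density(without_tokens)
dens_with = density(with_tokens)

spread_without = without_tokens.std()
spread_with = with_tokens.std()
second_mode_without = mass_near(without_tokens, 1.0)
second_mode_with = mass_near(with_tokens, 1.0)
```

A larger spread together with substantial mass near the second mode is one rough way to flag the kind of bimodality the red curve shows; real activations would need to be extracted from the model before drawing any conclusion.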

---

### Key Observations

1. **Incorrect Answer**: Inserting meaningless tokens causes the LLM to output `5` instead of the correct `3`.

2. **Attention Shifts**:

- Without meaningless tokens, system prompt tokens (yellow) and question tokens (blue) dominate the attention weights.

- With meaningless tokens, the green tokens disrupt attention, altering value states.

3. **Activation Distribution**:

- Meaningless tokens introduce bimodality, indicating fragmented focus or noise in processing.

---

### Interpretation

- **Mechanism of Failure**:

Meaningless tokens add irrelevant positions to the context, so attention that would otherwise go to the system prompt and question is partly spent on them. This leads to incorrect answers and distorted activation distributions.

- **Attention Dynamics**:

The heatmaps suggest that meaningless tokens (green) interfere with the system prompt (yellow) and question (blue) tokens, reducing coherence in value states.

- **Activation Bimodality**:

The red line's broader distribution implies that meaningless tokens force the model to consider conflicting or irrelevant information, reducing confidence in the correct answer.

- **Practical Implication**:

Inserting meaningless tokens could be a form of adversarial attack or prompt injection, degrading model performance by corrupting attention and activation patterns.

---

**Note**: All textual content (e.g., system prompt, question, labels) is transcribed verbatim. Colors in diagrams align with the legend (yellow/green/blue for tokens, blue/red for activation lines). No numerical data beyond axis labels and line trends is provided in the image.