## Bar Chart: Performance Comparison of Language Models on Question Answering Datasets

### Overview

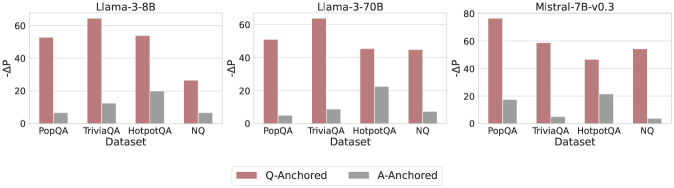

The image compares three language models (Llama-3-8B, Llama-3-70B, and Mistral-7B-v0.3) on four question answering datasets: PopQA, TriviaQA, HotpotQA, and NQ. The plotted metric is "-ΔP", the negated change in a quantity P that the figure itself does not define. Each panel shows two bars per dataset, one for the "Q-Anchored" condition and one for the "A-Anchored" condition.

### Components/Axes

* **X-axis:** "Dataset" with categories: PopQA, TriviaQA, HotpotQA, NQ.

* **Y-axis:** "-ΔP" (negated change in P; not defined in the figure), with a scale from 0 to 80.

* **Legend:** Located at the bottom-center of the image.

  * "Q-Anchored" (reddish-brown)

  * "A-Anchored" (gray)

* **Titles:** Each chart has a title indicating the language model being evaluated: "Llama-3-8B", "Llama-3-70B", "Mistral-7B-v0.3". These titles are positioned at the top-center of each respective chart.

### Detailed Analysis

The image consists of three separate bar charts, each representing a different language model.

**1. Llama-3-8B:**

* **PopQA:** Q-Anchored ≈ 52, A-Anchored ≈ 8

* **TriviaQA:** Q-Anchored ≈ 64, A-Anchored ≈ 12

* **HotpotQA:** Q-Anchored ≈ 56, A-Anchored ≈ 24

* **NQ:** Q-Anchored ≈ 28, A-Anchored ≈ 10

**2. Llama-3-70B:**

* **PopQA:** Q-Anchored ≈ 50, A-Anchored ≈ 10

* **TriviaQA:** Q-Anchored ≈ 68, A-Anchored ≈ 12

* **HotpotQA:** Q-Anchored ≈ 48, A-Anchored ≈ 24

* **NQ:** Q-Anchored ≈ 44, A-Anchored ≈ 16

**3. Mistral-7B-v0.3:**

* **PopQA:** Q-Anchored ≈ 72, A-Anchored ≈ 18

* **TriviaQA:** Q-Anchored ≈ 80, A-Anchored ≈ 20

* **HotpotQA:** Q-Anchored ≈ 64, A-Anchored ≈ 20

* **NQ:** Q-Anchored ≈ 48, A-Anchored ≈ 16

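For all three models, the "Q-Anchored" bars are higher than the "A-Anchored" bars on every dataset, by a factor of at least two (the smallest ratio is ≈48 vs. ≈24, for Llama-3-70B on HotpotQA). Because the axis is "-ΔP", a taller bar indicates a larger decrease in P, not necessarily better performance.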
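The readings listed above can be checked mechanically. The following is a minimal sketch, assuming the approximate bar heights transcribed in this section; the names `READINGS`, `q_exceeds_a_everywhere`, and `smallest_ratio` are hypothetical and introduced only for illustration.

```python
# Approximate bar heights as transcribed in the lists above (not exact data).
# Layout: model -> dataset -> (Q-Anchored, A-Anchored)
READINGS = {
    "Llama-3-8B": {
        "PopQA": (52, 8), "TriviaQA": (64, 12), "HotpotQA": (56, 24), "NQ": (28, 10),
    },
    "Llama-3-70B": {
        "PopQA": (50, 10), "TriviaQA": (68, 12), "HotpotQA": (48, 24), "NQ": (44, 16),
    },
    "Mistral-7B-v0.3": {
        "PopQA": (72, 18), "TriviaQA": (80, 20), "HotpotQA": (64, 20), "NQ": (48, 16),
    },
}


def q_exceeds_a_everywhere(readings):
    """True if the Q-Anchored bar is taller than the A-Anchored bar in every pair."""
    return all(
        q > a
        for per_dataset in readings.values()
        for q, a in per_dataset.values()
    )


def smallest_ratio(readings):
    """Return (ratio, model, dataset) for the smallest Q-Anchored / A-Anchored ratio."""
    return min(
        (q / a, model, dataset)
        for model, per_dataset in readings.items()
        for dataset, (q, a) in per_dataset.items()
    )


if __name__ == "__main__":
    print(q_exceeds_a_everywhere(READINGS))
    print(smallest_ratio(READINGS))
```

Since the inputs are visual estimates, the ratios are only as reliable as the readings themselves.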

### Key Observations

* **Model Comparison:** Mistral-7B-v0.3 shows the highest Q-Anchored "-ΔP" on all four datasets, peaking on TriviaQA (≈80).

* **Dataset Sensitivity:** The gap between Q-Anchored and A-Anchored is largest on TriviaQA (≈52 to 60 points) and PopQA (≈40 to 54 points) for all three models, suggesting these datasets are the most sensitive to the anchoring condition.

* **Anchoring Impact:** Q-Anchored "-ΔP" exceeds A-Anchored "-ΔP" for every model and dataset.
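The observations above follow from simple arithmetic on the transcribed values. The sketch below assumes the approximate readings from the Detailed Analysis section; `DATASETS`, `Q`, `A`, and the helper functions are hypothetical names used only for this example.

```python
# Approximate bar heights from the Detailed Analysis section, in dataset order.
DATASETS = ["PopQA", "TriviaQA", "HotpotQA", "NQ"]
Q = {  # Q-Anchored readings
    "Llama-3-8B": [52, 64, 56, 28],
    "Llama-3-70B": [50, 68, 48, 44],
    "Mistral-7B-v0.3": [72, 80, 64, 48],
}
A = {  # A-Anchored readings
    "Llama-3-8B": [8, 12, 24, 10],
    "Llama-3-70B": [10, 12, 24, 16],
    "Mistral-7B-v0.3": [18, 20, 20, 16],
}


def gaps(model):
    """Q-Anchored minus A-Anchored reading for each dataset of one model."""
    return {ds: q - a for ds, q, a in zip(DATASETS, Q[model], A[model])}


def top_two_by_gap(model):
    """The two datasets with the largest gap, largest first."""
    g = gaps(model)
    return sorted(g, key=g.get, reverse=True)[:2]


def highest_q_model(dataset):
    """The model with the tallest Q-Anchored bar on the given dataset."""
    i = DATASETS.index(dataset)
    return max(Q, key=lambda m: Q[m][i])


if __name__ == "__main__":
    for model in Q:
        print(model, gaps(model), top_two_by_gap(model))
    for ds in DATASETS:
        print(ds, highest_q_model(ds))
```

Working from the gap rather than the raw bar height keeps the dataset comparison independent of each model's overall level.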

### Interpretation

The data suggests that the anchoring condition (Q-Anchored vs. A-Anchored) strongly affects "-ΔP" for these language models on question answering tasks. Q-Anchored values are at least twice the A-Anchored values in every case. The figure alone does not establish which condition is "better": if "-ΔP" measures a drop in P, the result means that P falls much further under the Q-Anchored condition.

The variation across datasets indicates that dataset characteristics influence the size of the effect. TriviaQA and PopQA show the largest gaps between Q-Anchored and A-Anchored values, potentially due to the nature of the questions or the way answers are structured within those datasets.

Mistral-7B-v0.3 shows the largest Q-Anchored "-ΔP" values overall, which could be attributed to its model architecture, training data, or other factors. The consistent gap between Q-Anchored and A-Anchored values highlights a potential area for further research in question answering systems. The "-ΔP" metric is not defined in the figure; it likely represents a *loss* in P relative to a baseline, in which case larger values indicate a larger drop, and Mistral-7B-v0.3 would be the most affected model rather than the strongest performer.
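One literal reading of the axis label can be written out as a formula. This is a sketch under stated assumptions: the symbols $P_{\text{base}}$ (the value of P before some intervention) and $P_{\text{int}}$ (the value after it) are introduced here for illustration, and the figure defines neither P nor the intervention.

$$
-\Delta P = -\left(P_{\text{int}} - P_{\text{base}}\right) = P_{\text{base}} - P_{\text{int}}
$$

Under this reading, a positive bar means P decreased, and the Q-Anchored bar for Mistral-7B-v0.3 on TriviaQA (≈80) would correspond to a drop of about 80 in whatever unit the axis uses.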