# Technical Data Extraction: Throughput Comparison Chart

## 1. Component Isolation

* **Header (Legend):** Located at the top center. Contains two colored squares with labels:

  * **Orange Square:** SGLang

  * **Green Square:** vLLM

* **Main Chart Area:** A grouped bar chart comparing normalized throughput across 11 benchmarks/tasks.

* **Y-Axis (Left):** Labeled "Throughput (Normalized)". The scale runs from 0.0 to 1.0 in increments of 0.2.

* **X-Axis (Bottom):** Contains 11 categorical labels, one per LLM task/benchmark.
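Because the y-axis is linear from 0.0 to 1.0, a bar's value can be estimated from where its top edge falls between the 0.0 and 1.0 ticks. The following is a minimal sketch of that interpolation. The helper `pixel_to_value` and the pixel rows `ZERO_ROW` and `ONE_ROW` are hypothetical. The extraction does not say how its values were measured.

```python
def pixel_to_value(bar_top_px: float, axis_zero_px: float, axis_one_px: float) -> float:
    """Map a bar's top edge (image pixel row) to a normalized throughput value.

    Hypothetical helper: assumes a linear y-axis where axis_zero_px is the pixel
    row of the 0.0 tick and axis_one_px is the pixel row of the 1.0 tick.
    Image rows grow downward, so axis_one_px < axis_zero_px for an upright chart.
    """
    span = axis_zero_px - axis_one_px
    if span == 0:
        raise ValueError("axis ticks must be at different pixel rows")
    return (axis_zero_px - bar_top_px) / span


# Hypothetical calibration: 0.0 tick at row 500, 1.0 tick at row 100.
ZERO_ROW, ONE_ROW = 500.0, 100.0

print(pixel_to_value(100.0, ZERO_ROW, ONE_ROW))            # bar reaching the 1.0 tick
print(round(pixel_to_value(440.0, ZERO_ROW, ONE_ROW), 2))  # a short bar
```

With this calibration, the first call prints `1.0` and the second prints `0.15`. In practice, bar-top positions can only be read to within a few pixels, which is why the vLLM values below carry a `~`.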

---

## 2. Data Table Reconstruction

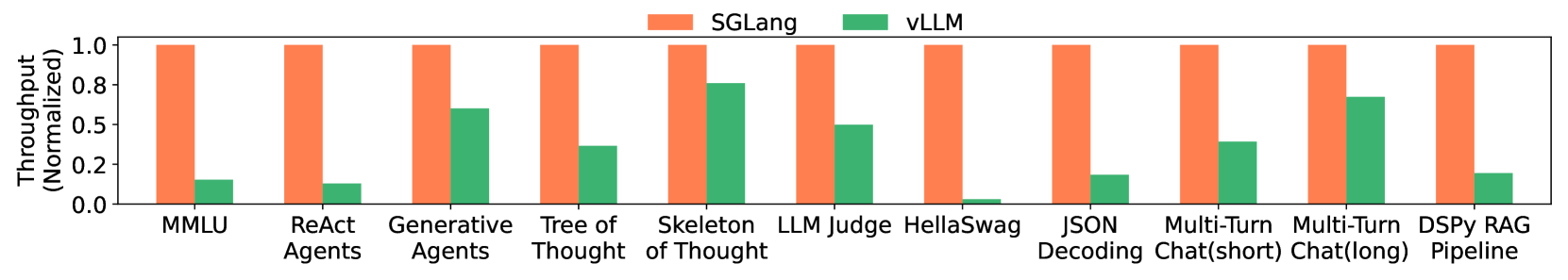

The following table lists the normalized throughput values read from the bar heights. Every SGLang bar sits at 1.0, making SGLang the normalization baseline. The vLLM values are estimates read against the y-axis ticks and are marked with `~`.

| Benchmark / Task | SGLang (Orange) | vLLM (Green) |
| :--- | :---: | :---: |
| **MMLU** | 1.0 | ~0.15 |
| **ReAct Agents** | 1.0 | ~0.12 |
| **Generative Agents** | 1.0 | ~0.60 |
| **Tree of Thought** | 1.0 | ~0.35 |
| **Skeleton of Thought** | 1.0 | ~0.75 |
| **LLM Judge** | 1.0 | ~0.50 |
| **HellaSwag** | 1.0 | ~0.03 |
| **JSON Decoding** | 1.0 | ~0.18 |
| **Multi-Turn Chat (short)** | 1.0 | ~0.40 |
| **Multi-Turn Chat (long)** | 1.0 | ~0.68 |
| **DSPy RAG Pipeline** | 1.0 | ~0.20 |

---

## 3. Trend Verification and Analysis

### SGLang (Orange Bars)

* **Visual Trend:** Every orange bar reaches exactly 1.0, forming a flat line across all 11 categories.

* **Interpretation:** SGLang serves as the performance baseline (1.0) for this comparison.
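A 1.0 baseline usually means each benchmark's throughputs were divided by the baseline system's throughput on that benchmark. The chart does not state its normalization method, so this is an assumption. The sketch below shows that per-benchmark normalization. The helper name and the raw numbers are hypothetical and are not taken from the chart.

```python
def normalize_to_baseline(raw: dict[str, float], baseline: str) -> dict[str, float]:
    """Divide every system's raw throughput by the baseline system's throughput.

    Hypothetical helper: assumes normalization is done per benchmark against
    a single baseline system (here SGLang).
    """
    base = raw[baseline]
    if base <= 0:
        raise ValueError("baseline throughput must be positive")
    return {system: value / base for system, value in raw.items()}


# Hypothetical raw throughputs (requests/s) for one benchmark; not chart data.
example = {"SGLang": 40.0, "vLLM": 10.0}
print(normalize_to_baseline(example, "SGLang"))
```

Under this scheme, the baseline system always maps to exactly 1.0, which matches the flat orange bars.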

### vLLM (Green Bars)

* **Visual Trend:** Performance relative to the baseline varies widely, from ~0.03 (HellaSwag) to ~0.75 (Skeleton of Thought). The green bar is shorter than the orange bar in every category.

* **Performance Peaks:** vLLM performs best (closest to SGLang) in "Skeleton of Thought" (~0.75) and "Multi-Turn Chat (long)" (~0.68).

* **Performance Valleys:** vLLM shows the lowest relative throughput in "HellaSwag" (~0.03, near zero), "ReAct Agents" (~0.12), and "MMLU" (~0.15).
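The peaks and valleys above can be reproduced by sorting the estimated vLLM values from the data table. The following is a minimal sketch. The dictionary simply transcribes the approximate table values, so the ranking inherits their estimation error.

```python
# Approximate vLLM values transcribed from the data table (SGLang = 1.0 everywhere).
VLLM = {
    "MMLU": 0.15,
    "ReAct Agents": 0.12,
    "Generative Agents": 0.60,
    "Tree of Thought": 0.35,
    "Skeleton of Thought": 0.75,
    "LLM Judge": 0.50,
    "HellaSwag": 0.03,
    "JSON Decoding": 0.18,
    "Multi-Turn Chat (short)": 0.40,
    "Multi-Turn Chat (long)": 0.68,
    "DSPy RAG Pipeline": 0.20,
}

# Sort benchmarks from highest to lowest relative throughput.
ranked = sorted(VLLM.items(), key=lambda item: item[1], reverse=True)
peaks = [name for name, _ in ranked[:2]]      # closest to SGLang
valleys = [name for name, _ in ranked[-3:]]   # furthest from SGLang
print("Peaks:", peaks)
print("Valleys:", valleys)
```

This prints `Skeleton of Thought` and `Multi-Turn Chat (long)` as peaks, and `MMLU`, `ReAct Agents`, and `HellaSwag` as valleys.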

---

## 4. Summary of Findings

The chart shows that **SGLang** achieves higher throughput than **vLLM** on all 11 tested benchmarks. Based on the estimated values, SGLang's throughput is roughly 5 to 33 times that of vLLM on HellaSwag, ReAct Agents, MMLU, JSON Decoding, and DSPy RAG Pipeline. The gap is narrowest on "Skeleton of Thought" (~1.3x) and "Multi-Turn Chat (long)" (~1.5x), though SGLang still holds a clear lead.
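Because SGLang is normalized to 1.0, its speedup over vLLM on each benchmark is simply `1.0 / vLLM_value`. The following is a minimal sketch that computes these ratios from the approximate table values. Since the inputs are read off a chart, treat the outputs as rough magnitudes. This is especially true for HellaSwag, where a small error in a near-zero bar changes the ratio a lot.

```python
# Approximate vLLM values transcribed from the data table (SGLang = 1.0 everywhere).
VLLM = {
    "MMLU": 0.15,
    "ReAct Agents": 0.12,
    "Generative Agents": 0.60,
    "Tree of Thought": 0.35,
    "Skeleton of Thought": 0.75,
    "LLM Judge": 0.50,
    "HellaSwag": 0.03,
    "JSON Decoding": 0.18,
    "Multi-Turn Chat (short)": 0.40,
    "Multi-Turn Chat (long)": 0.68,
    "DSPy RAG Pipeline": 0.20,
}

# SGLang's normalized throughput is 1.0, so its speedup over vLLM is 1.0 / vLLM.
speedups = {name: 1.0 / value for name, value in VLLM.items()}

# Print benchmarks from the largest to the smallest SGLang advantage.
for name, ratio in sorted(speedups.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<26} {ratio:5.1f}x")
```

The first line of output is HellaSwag (about 33x) and the last is Skeleton of Thought (about 1.3x).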