
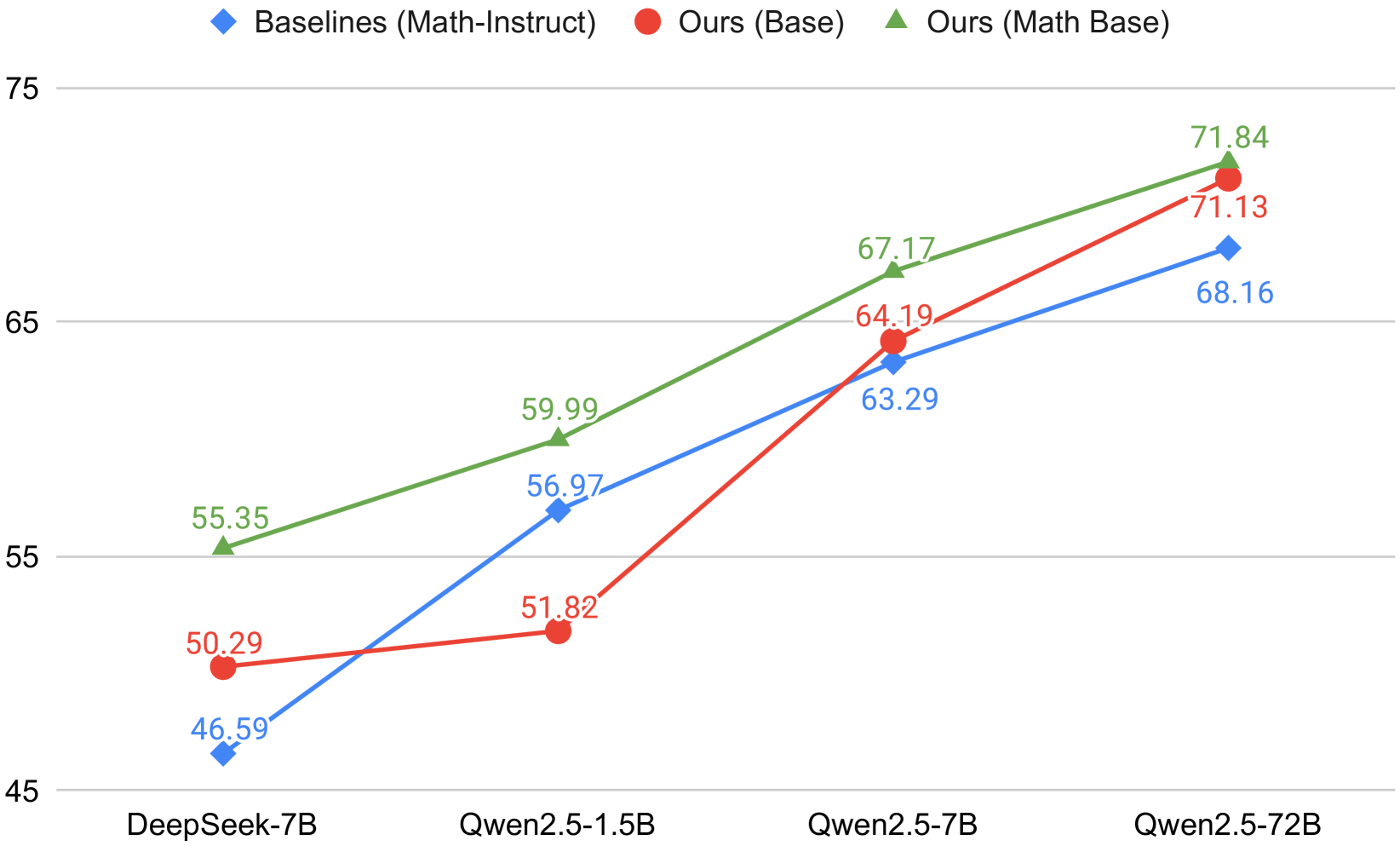

## Line Chart: Performance Comparison of Model Series Across Architectures

### Overview

This image is a line chart comparing the performance of three distinct model series across four different model architectures/sizes. The chart plots numerical performance scores (y-axis) against specific model names (x-axis). The three series are differentiated by color and marker shape, with a legend provided at the top of the chart.

### Components/Axes

* **X-Axis (Horizontal):** Lists four specific model architectures. From left to right:

    1. `DeepSeek-7B`

    2. `Qwen2.5-1.5B`

    3. `Qwen2.5-7B`

    4. `Qwen2.5-72B`

* **Y-Axis (Vertical):** Represents a numerical performance score. The axis is labeled with major gridlines at intervals of 10, specifically marking the values `45`, `55`, `65`, and `75`. The exact metric (e.g., accuracy, score) is not specified in the image.

* **Legend (Top Center):** Defines the three data series:

    * **Blue Diamond (♦):** `Baselines (Math-Instruct)`

    * **Red Circle (●):** `Ours (Base)`

    * **Green Triangle (▲):** `Ours (Math Base)`

### Detailed Analysis

The chart displays three upward-trending lines, each connecting four data points corresponding to the x-axis models. The exact values are annotated next to each data point.

**1. Series: Baselines (Math-Instruct) - Blue Line with Diamond Markers**

* **Trend:** Consistently slopes upward from left to right.

* **Data Points:**

    * DeepSeek-7B: `46.59`

    * Qwen2.5-1.5B: `56.97`

    * Qwen2.5-7B: `63.29`

    * Qwen2.5-72B: `68.16`

**2. Series: Ours (Base) - Red Line with Circle Markers**

* **Trend:** Shows a slight initial increase, then a steeper upward slope. It starts above the blue line, is overtaken by it at the second point, and then surpasses it again at the final two points.

* **Data Points:**

    * DeepSeek-7B: `50.29`

    * Qwen2.5-1.5B: `51.82`

    * Qwen2.5-7B: `64.19`

    * Qwen2.5-72B: `71.13`

**3. Series: Ours (Math Base) - Green Line with Triangle Markers**

* **Trend:** Consistently slopes upward and maintains the highest position on the chart for all four model points.

* **Data Points:**

    * DeepSeek-7B: `55.35`

    * Qwen2.5-1.5B: `59.99`

    * Qwen2.5-7B: `67.17`

    * Qwen2.5-72B: `71.84`
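
The trends described above can be checked numerically. The following is a minimal Python sketch, assuming the annotated values were read correctly from the chart; the names `MODELS`, `SCORES`, `step_gains`, and `total_gain` are illustrative and do not come from the source.

```python
# Values transcribed from the chart annotations (assumed to be read correctly).
MODELS = ["DeepSeek-7B", "Qwen2.5-1.5B", "Qwen2.5-7B", "Qwen2.5-72B"]
SCORES = {
    "Baselines (Math-Instruct)": [46.59, 56.97, 63.29, 68.16],
    "Ours (Base)": [50.29, 51.82, 64.19, 71.13],
    "Ours (Math Base)": [55.35, 59.99, 67.17, 71.84],
}


def step_gains(values):
    """Differences between consecutive x-axis points, rounded to 2 decimals."""
    return [round(b - a, 2) for a, b in zip(values, values[1:])]


def total_gain(values):
    """Difference between the rightmost and leftmost points."""
    return round(values[-1] - values[0], 2)


if __name__ == "__main__":
    for name, values in SCORES.items():
        print(f"{name}: steps={step_gains(values)}, total={total_gain(values)}")
```

With these values, every step gain is positive in all three series, and the left-to-right totals are 21.57 (`Baselines`), 20.84 (`Ours (Base)`), and 16.49 (`Ours (Math Base)`).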

### Key Observations

1. **Performance Hierarchy:** The `Ours (Math Base)` (green) series achieves the highest score at every model tested. The `Ours (Base)` (red) series ranks second at three of the four models; at `Qwen2.5-1.5B` it falls to last, 5.15 points below the `Baselines` (blue) series (`51.82` vs. `56.97`).

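2. **Scaling Trend:** All three series rise monotonically from left to right. The x-axis is not ordered strictly by parameter count: `DeepSeek-7B` sits to the left of `Qwen2.5-1.5B`, so the trend reflects model family as well as size. Within the Qwen2.5 family, scores increase with size in every series. From the leftmost to the rightmost model, the gains are 21.57 points for `Baselines`, 20.84 for `Ours (Base)`, and 16.49 for `Ours (Math Base)`.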

3. **Convergence at Scale:** The spread between the highest and lowest series shrinks from 8.76 points at `DeepSeek-7B` and 8.17 at `Qwen2.5-1.5B` to 3.88 at `Qwen2.5-7B` and 3.68 at `Qwen2.5-72B`. At the largest model, `Ours (Base)` (`71.13`) and `Ours (Math Base)` (`71.84`) are within 0.71 points of each other, while `Baselines` (`68.16`) trails them by 2.97 and 3.68 points respectively.

4. **Notable Anomaly:** The `Ours (Base)` (red) series shows a much smaller performance increase between `DeepSeek-7B` (`50.29`) and `Qwen2.5-1.5B` (`51.82`) compared to the other two series, which see larger jumps at this step. This creates a temporary dip in its relative ranking.
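
The ranking and convergence observations above can be reproduced with a short calculation. This is a hedged sketch in Python, again assuming the annotated chart values are transcribed correctly; `spread_per_model` and `ranking_per_model` are hypothetical helper names introduced for illustration.

```python
# Values transcribed from the chart annotations (assumed to be read correctly).
MODELS = ["DeepSeek-7B", "Qwen2.5-1.5B", "Qwen2.5-7B", "Qwen2.5-72B"]
SCORES = {
    "Baselines (Math-Instruct)": [46.59, 56.97, 63.29, 68.16],
    "Ours (Base)": [50.29, 51.82, 64.19, 71.13],
    "Ours (Math Base)": [55.35, 59.99, 67.17, 71.84],
}


def spread_per_model(scores):
    """Highest minus lowest series score at each x-axis position."""
    columns = zip(*scores.values())
    return [round(max(col) - min(col), 2) for col in columns]


def ranking_per_model(scores):
    """Series names ordered from best to worst at each x-axis position."""
    names = list(scores)
    n_points = len(next(iter(scores.values())))
    return [
        sorted(names, key=lambda name: scores[name][i], reverse=True)
        for i in range(n_points)
    ]


if __name__ == "__main__":
    spreads = spread_per_model(SCORES)
    rankings = ranking_per_model(SCORES)
    for model, spread, ranking in zip(MODELS, spreads, rankings):
        print(f"{model}: spread={spread}, ranking={ranking}")
```

Under these assumptions, `Ours (Math Base)` ranks first at every model, and `Ours (Base)` ranks last only at `Qwen2.5-1.5B`.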

### Interpretation

This chart presents a comparative analysis likely from a research paper or technical report, evaluating a new method ("Ours") against a baseline. The data suggests several key findings:

* **Efficacy of Proposed Method:** The "Ours (Math Base)" variant is consistently the top performer, indicating that the proposed method, when combined with math-specific training or data, yields superior results across a range of model architectures.

* **Importance of Specialization:** The lead of the green line (`Math Base`) over the red line (`Base`) at every model implies that starting from a math-specialized base model helps even when the core method is the same, although the margin shrinks from 8.17 points at `Qwen2.5-1.5B` to 0.71 points at `Qwen2.5-72B`.

* **Performance Improves with Scale:** Within the Qwen2.5 family, every series improves from 1.5B to 7B to 72B, which is consistent with larger models performing better on the measured task regardless of training methodology. Four points per series, spanning two model families, are too few to confirm a scaling law.

* **Diminishing Returns of Specialization at Scale:** The convergence of `Ours (Base)` and `Ours (Math Base)` at `Qwen2.5-72B` suggests that for very large models, the inherent capability of the model may begin to dominate, reducing the relative advantage of a math-specialized base. The gap to the baseline does not close in the same way: `Ours (Base)` leads `Baselines` by 0.90 points at `Qwen2.5-7B` and by 2.97 points at `Qwen2.5-72B`, while `Ours (Math Base)` leads by 3.88 and 3.68 points at those two sizes.

* **Architectural Sensitivity:** The performance ordering is not perfectly consistent across all architectures (evidenced by the red/blue line crossover at `Qwen2.5-1.5B`), suggesting that the effectiveness of each approach can be somewhat dependent on the underlying model architecture.

In summary, the chart argues for the value of the "Ours (Math Base)" approach, shows scores rising with model size within the Qwen2.5 family, and hints that the benefit of a math-specialized base is largest for the smaller models and shrinks as scale increases.