## Line Chart: OOD Generalization: 10x10 -> 15x15 Transfer

### Overview

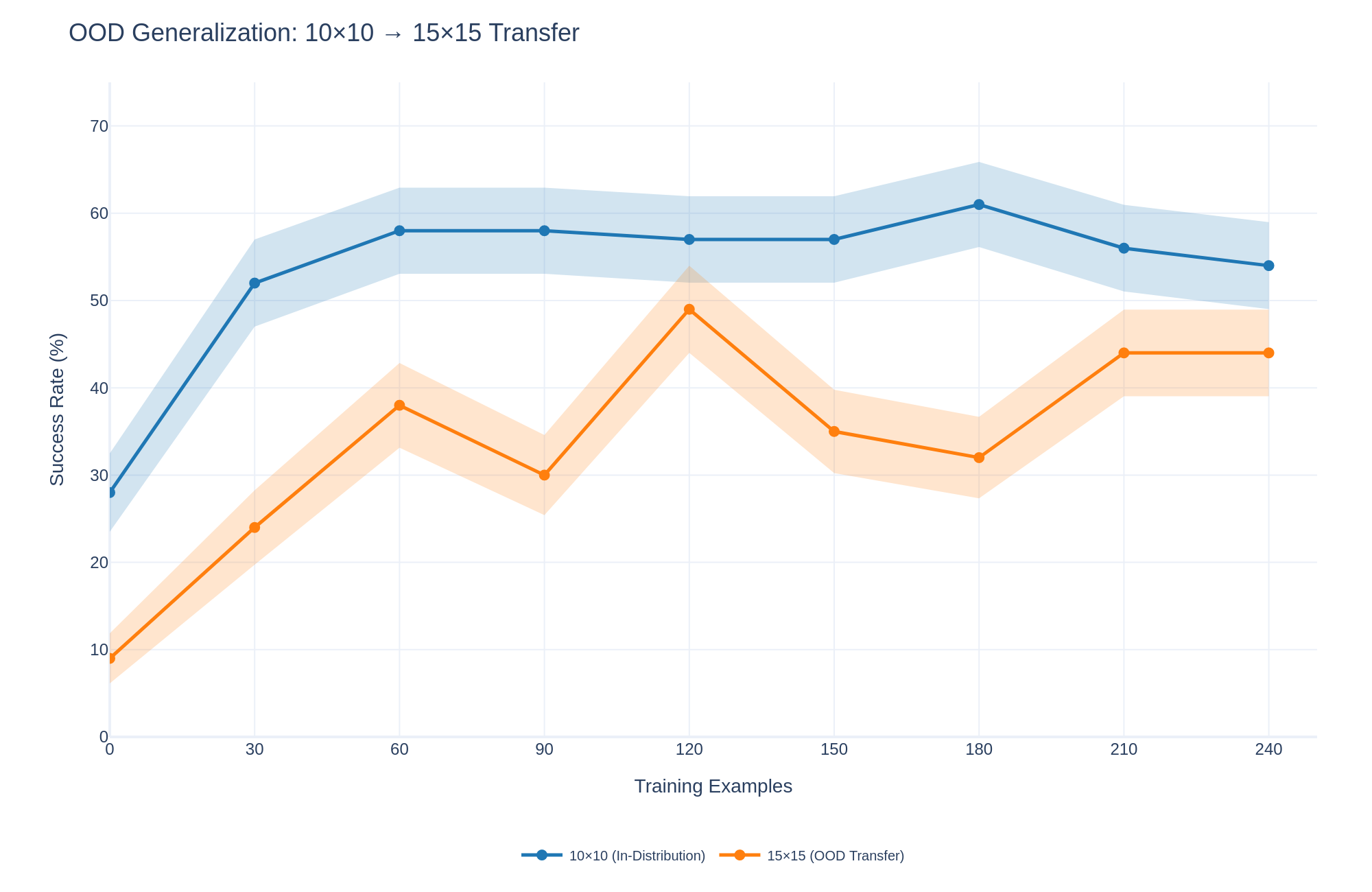

This line chart illustrates the success rate (%) of a model trained with varying numbers of training examples, comparing performance on in-distribution data (10x10) versus out-of-distribution (OOD) transfer data (15x15). The chart shows how the success rate changes as the number of training examples increases, with shaded areas representing confidence intervals.

### Components/Axes

* **Title:** OOD Generalization: 10x10 -> 15x15 Transfer

* **X-axis:** Training Examples (ranging from 0 to 240, with markers at 0, 30, 60, 90, 120, 150, 180, 210, and 240)

* **Y-axis:** Success Rate (%) (ranging from 0 to 70, with markers at 0, 10, 20, 30, 40, 50, 60, and 70)

* **Legend:**

  * Blue Line: 10x10 (In-Distribution)

  * Orange Line: 15x15 (OOD Transfer)

* **Shaded Areas:** Represent confidence intervals around each line. The blue shaded area corresponds to the 10x10 data, and the orange shaded area corresponds to the 15x15 data.

### Detailed Analysis

**10x10 (In-Distribution) - Blue Line:**

The blue line, representing in-distribution performance, starts at approximately 45% success rate at 0 training examples. It rises sharply to approximately 58% at 30 training examples, then climbs more slowly to a peak of approximately 64% at 150 training examples. Beyond 150 training examples, it levels off, fluctuating between approximately 58% and 62% through 240 training examples.

**15x15 (OOD Transfer) - Orange Line:**

The orange line representing the OOD transfer data begins at approximately 10% success rate at 0 training examples. It increases to approximately 25% at 30 training examples, then rises more steeply to approximately 48% at 90 training examples. The line then decreases to approximately 35% at 120 training examples, increases to approximately 40% at 150 training examples, decreases to approximately 30% at 180 training examples, and finally rises to approximately 35% at 240 training examples.
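The non-monotonic shape of the orange line is easier to see as point-to-point changes. The following is a minimal sketch that uses only the approximate readings quoted in the paragraph above; the variable and function names (`ood_15x15`, `segment_changes`) are illustrative and not part of the original figure.

```python
# Approximate readings of the orange 15x15 (OOD transfer) line, taken from
# the description above. Keys: training examples. Values: success rate (%).
# These are eyeballed values, not exact data from the chart.
ood_15x15 = {0: 10, 30: 25, 90: 48, 120: 35, 150: 40, 180: 30, 240: 35}


def segment_changes(series):
    """Return (x_start, x_end, change) for each pair of consecutive readings."""
    xs = sorted(series)
    return [(a, b, series[b] - series[a]) for a, b in zip(xs, xs[1:])]


changes = segment_changes(ood_15x15)

# Training-set size at which the described readings are highest.
peak_x = max(ood_15x15, key=ood_15x15.get)

for a, b, delta in changes:
    print(f"{a:>3} -> {b:>3}: {delta:+d} points")
print(f"peak: {ood_15x15[peak_x]}% at {peak_x} training examples")
```

With these readings, the changes alternate in sign after 90 training examples, which is the fluctuation the description refers to.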

**Confidence Intervals:**

The shaded areas around each line indicate the confidence intervals. The blue shaded area is relatively narrow, suggesting more consistent performance for the in-distribution data. The orange shaded area is wider, indicating greater variability in the OOD transfer performance.

### Key Observations

* The in-distribution data consistently outperforms the OOD transfer data across all training example counts.

* The OOD transfer data exhibits more significant fluctuations in success rate as the number of training examples increases.

* The in-distribution data reaches a plateau in performance after approximately 150 training examples, while the OOD transfer data continues to fluctuate.

* The initial performance gap between the two curves is substantial (about 35 percentage points at 0 training examples). It narrows somewhat as the number of training examples increases, to roughly 25 points by 240 training examples.
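The size of the gap between the two lines can be checked directly from the approximate readings in the Detailed Analysis section. This is a rough sketch under stated assumptions: the in-distribution line is only described at 0, 30, and 150 training examples, so the gap at 240 is computed as a range from the 58% to 62% band given for that region. All names are illustrative.

```python
# Approximate readings from the Detailed Analysis section (success rate, %).
# The in-distribution line is only described at 0, 30 and 150 examples;
# after 150 it is described as a 58-62% band rather than single values.
in_dist_10x10 = {0: 45, 30: 58, 150: 64}
in_dist_band_after_150 = (58, 62)
ood_15x15 = {0: 10, 30: 25, 90: 48, 120: 35, 150: 40, 180: 30, 240: 35}

# Gap (in percentage points) wherever both lines have a stated reading.
shared = sorted(set(in_dist_10x10) & set(ood_15x15))
gaps = {x: in_dist_10x10[x] - ood_15x15[x] for x in shared}

# At 240 examples only a band is available for the blue line,
# so the gap is reported as a (low, high) range.
low, high = in_dist_band_after_150
gap_at_240 = (low - ood_15x15[240], high - ood_15x15[240])

print(gaps)
print(gap_at_240)
```

On these readings the gap is 35 points at 0 examples, 33 at 30, and 24 at 150, and falls between 23 and 27 points at 240, so it narrows but never closes.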

### Interpretation

The chart demonstrates the impact of distribution shift on model performance. On 10x10 inputs, the model is evaluated on the same distribution it was trained on, which results in higher and more stable success rates. On 15x15 inputs, it faces a distribution shift, leading to lower and more variable performance.

The low initial success rate on the OOD transfer data suggests that the model struggles to generalize to the larger grid with limited training examples. The fluctuations in the OOD transfer line suggest that transfer performance may be sensitive to the specific training examples the model receives. The gap between the two lines narrows from about 35 points to about 25 points over the plotted range, so additional training examples appear to help OOD transfer somewhat. However, the OOD success rate peaks at approximately 48% at 90 training examples and does not exceed that afterward, so the chart does not show additional data alone bringing OOD performance up to the in-distribution level.

The wider confidence intervals for the OOD transfer data highlight the challenges of domain generalization and the need for robust techniques to mitigate the effects of distribution shift. The plateau in the in-distribution data suggests diminishing returns from adding more training examples once a certain level of performance is reached. This could inform decisions about data collection and model training strategies.