## Diagram: Threats to Federated Learning (FL)

### Overview

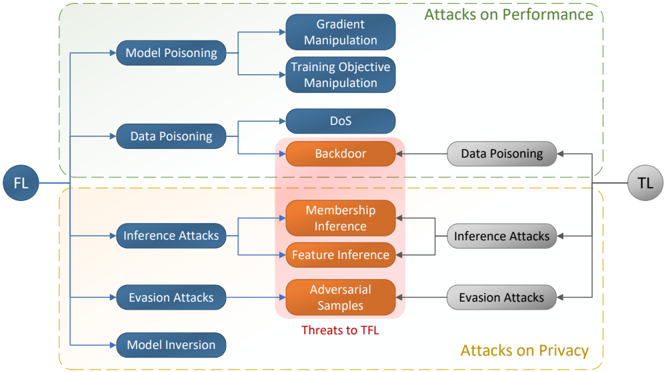

The diagram illustrates attack vectors in Federated Learning (FL) systems. It groups attacks by impact into two categories: Attacks on Performance and Attacks on Privacy. Arrows show how attacks originating from the FL system can target the Trusted Layer (TL), and a central box labeled "Threats to TFL" highlights the specific attacks that reach it.

### Components/Axes

* **Nodes:**

    * FL (Federated Learning): Located on the left side of the diagram.

    * TL (Trusted Layer): Located on the right side of the diagram.

* **Attack Categories:**

    * Attacks on Performance (top, enclosed in a dashed green box)

    * Attacks on Privacy (bottom, enclosed in a dashed yellow box)

* **Threats to TFL:** Highlighted in a red shaded box in the center.

* **Attack Types (Performance):**

    * Model Poisoning

    * Gradient Manipulation

    * Training Objective Manipulation

    * Data Poisoning

    * DoS (Denial of Service)

    * Backdoor

* **Attack Types (Privacy):**

    * Inference Attacks

    * Membership Inference

    * Feature Inference

    * Evasion Attacks

    * Adversarial Samples

    * Model Inversion

* **Connections:** Arrows indicate the flow and relationship between different attack types and their targets.

### Detailed Analysis

* **Attacks on Performance:**

    * Model Poisoning: Leads to Gradient Manipulation and Training Objective Manipulation.

    * Data Poisoning: Leads to DoS and Backdoor attacks.

* **Attacks on Privacy:**

    * Inference Attacks: Lead to Membership Inference and Feature Inference.

    * Evasion Attacks: Lead to Adversarial Samples.

    * Model Inversion: Connected directly to the FL node.

* **Threats to TFL:**

    * Backdoor attacks, which stem from Data Poisoning, can target the TL.

    * Membership Inference and Feature Inference attacks, which stem from Inference Attacks, can target the TL.

    * Adversarial Samples, which stem from Evasion Attacks, can target the TL.
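The relationships listed above can be captured in a small data structure. The following is a minimal Python sketch, assuming the hierarchy is exactly as described in this section; `TAXONOMY`, `THREATS_TO_TFL`, and `locate` are hypothetical names introduced for illustration and do not come from the diagram.

```python
from typing import Optional, Tuple

# Hypothetical encoding of the taxonomy as described in this section:
# category -> parent attack -> list of derived attacks.
TAXONOMY = {
    "Attacks on Performance": {
        "Model Poisoning": ["Gradient Manipulation", "Training Objective Manipulation"],
        "Data Poisoning": ["DoS", "Backdoor"],
    },
    "Attacks on Privacy": {
        "Inference Attacks": ["Membership Inference", "Feature Inference"],
        "Evasion Attacks": ["Adversarial Samples"],
        "Model Inversion": [],  # described as connected directly to the FL node
    },
}

# Derived attacks that the description lists under "Threats to TFL".
THREATS_TO_TFL = {
    "Backdoor",
    "Membership Inference",
    "Feature Inference",
    "Adversarial Samples",
}


def locate(attack: str) -> Optional[Tuple[str, Optional[str]]]:
    """Return (category, parent) for an attack name.

    The parent is None for top-level attacks; the result is None
    when the name does not appear in the taxonomy at all.
    """
    for category, parents in TAXONOMY.items():
        for parent, children in parents.items():
            if attack == parent:
                return (category, None)
            if attack in children:
                return (category, parent)
    return None
```

For example, `locate("Backdoor")` returns the pair `("Attacks on Performance", "Data Poisoning")`, which mirrors the path the description traces from Data Poisoning to the TL.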

### Key Observations

* The diagram clearly distinguishes between attacks that degrade performance and those that compromise privacy.

* The "Threats to TFL" section highlights specific attack types that pose a direct risk to the trusted layer.

* Model Poisoning and Data Poisoning are categorized under "Attacks on Performance," while Inference Attacks, Evasion Attacks, and Model Inversion are under "Attacks on Privacy."

* The diagram shows a flow of attacks originating from the FL system and potentially impacting the TL.

### Interpretation

The diagram maps security threats in Federated Learning along two dimensions of impact, showing that FL systems are vulnerable to both performance degradation and privacy breaches. The "Threats to TFL" section is particularly important: it identifies the specific attack vectors that could compromise the integrity and confidentiality of the trusted layer, which is crucial for secure FL deployment. The diagram suggests that a comprehensive security strategy for FL must address both performance and privacy concerns and must specifically protect the trusted layer from targeted attacks.