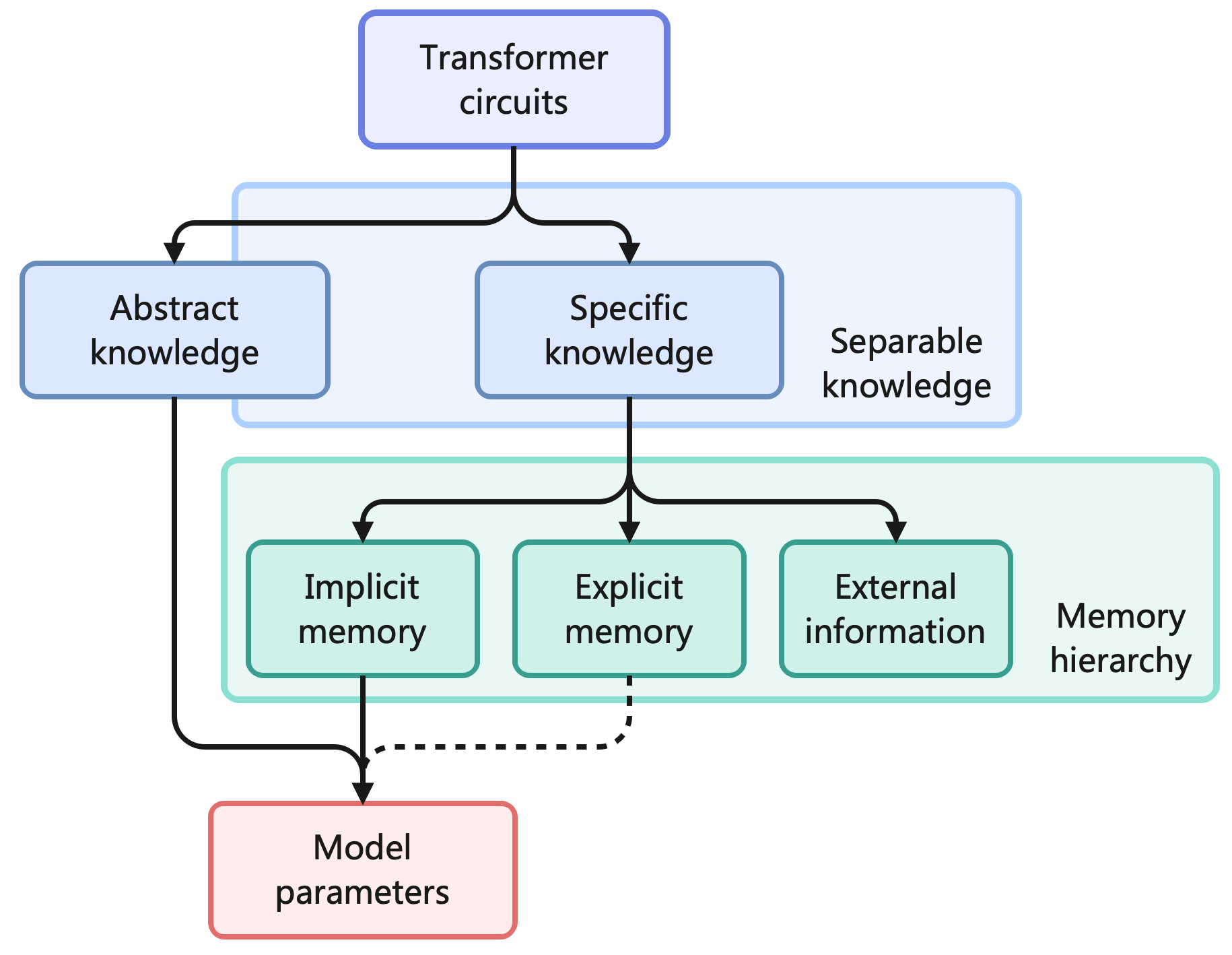

## Diagram: Transformer Circuits Knowledge and Memory Hierarchy

### Overview

This image is a hierarchical flowchart showing how "Transformer circuits" divide and store different types of information. It traces the flow from the top-level circuits down to the foundational "Model parameters," using two grouping boxes to mark the labeled categories "Separable knowledge" and "Memory hierarchy."

### Components

* **Nodes:** Seven rounded rectangles containing text, color-coded by hierarchical level.

* **Grouping Containers:** Two large, lightly shaded rectangular outlines that enclose specific sets of nodes, with descriptive text placed to their right.

* **Connectors:**

  * Solid black arrows indicating direct flow or primary relationships.

  * One dashed black arrow indicating a secondary, indirect, or conditional relationship.

* **Language:** All text is in English.

### Content Details

**1. Top Region (Root)**

* **Node:** Centered at the top is a light purple box with a darker purple border.

* **Text:** "Transformer circuits"

* **Flow:** A single solid black line descends from this box and branches into two paths, leading to the Level 1 nodes.

**2. Middle Region (Level 1: Separable Knowledge)**

* **Nodes:** Two light blue boxes with darker blue borders, positioned side-by-side.

  * Left Node Text: "Abstract knowledge"

  * Right Node Text: "Specific knowledge"

* **Grouping:** Both of these blue boxes are enclosed within a larger, very light blue rectangular container.

* **Grouping Label:** To the right of this container, the text reads: "Separable knowledge".

* **Flow:**

  * A solid black line descends from "Abstract knowledge" all the way to the bottom region.

  * A solid black line descends from "Specific knowledge" and branches into three paths, leading to the Level 2 nodes.

**3. Lower-Middle Region (Level 2: Memory Hierarchy)**

* **Nodes:** Three light teal/green boxes with darker teal/green borders, positioned side-by-side beneath "Specific knowledge".

  * Left Node Text: "Implicit memory"

  * Center Node Text: "Explicit memory"

  * Right Node Text: "External information"

* **Grouping:** These three boxes are enclosed within a larger, very light teal/green rectangular container.

* **Grouping Label:** To the right of this container, the text reads: "Memory hierarchy".

* **Flow:**

  * A solid black line descends from "Implicit memory" to the bottom region.

  * A **dashed** black line descends from "Explicit memory", turns left, and merges with the path coming from "Implicit memory".

  * "External information" has **no** outgoing arrows.

**4. Bottom Region (Terminal Node)**

* **Node:** A single light red/pink box with a darker red/pink border, positioned at the bottom, aligned to the left-center of the diagram.

* **Text:** "Model parameters"

* **Inbound Flow:** This node receives inputs from the higher levels:

  * Direct solid line from "Abstract knowledge".

  * Direct solid line from "Implicit memory".

  * Dashed line from "Explicit memory" (which merges with the Implicit memory line just before entering the box).

### Key Observations

* **Bifurcation of Knowledge:** The system fundamentally splits learned information into "Abstract" and "Specific" categories, explicitly labeling them as "Separable."

* **Asymmetrical Flow:** "Abstract knowledge" connects directly to "Model parameters," whereas "Specific knowledge" is first divided across the three nodes of the "Memory hierarchy," and only two of those nodes connect onward to the parameters.

* **Varying Connection Strengths:** The connections into "Model parameters" differ in style. "Abstract knowledge" and "Implicit memory" have solid (direct/primary) connections, while "Explicit memory" has a dashed (secondary/indirect) connection.

* **Isolated Node:** "External information" is part of the memory hierarchy but is visually isolated from the "Model parameters," possessing no downward connecting arrow.
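
The structure described above can be checked mechanically by encoding the diagram as a small directed graph. The following is a minimal sketch, not part of the original figure: the names `EDGES`, `GROUPS`, `successors`, `reaches`, and `nodes` are hypothetical, and the edge list is transcribed from the textual description in this document, with each connector tagged as `"solid"` or `"dashed"`.

```python
from collections import deque

# Edges transcribed from the description as (source, target, style).
# The "solid"/"dashed" tags mirror the connector styles in the diagram.
EDGES = [
    ("Transformer circuits", "Abstract knowledge", "solid"),
    ("Transformer circuits", "Specific knowledge", "solid"),
    ("Specific knowledge", "Implicit memory", "solid"),
    ("Specific knowledge", "Explicit memory", "solid"),
    ("Specific knowledge", "External information", "solid"),
    ("Abstract knowledge", "Model parameters", "solid"),
    ("Implicit memory", "Model parameters", "solid"),
    ("Explicit memory", "Model parameters", "dashed"),
]

# Grouping containers and the nodes each one encloses.
GROUPS = {
    "Separable knowledge": ["Abstract knowledge", "Specific knowledge"],
    "Memory hierarchy": [
        "Implicit memory",
        "Explicit memory",
        "External information",
    ],
}


def nodes():
    """Return every node name, in order of first appearance in EDGES."""
    found = []
    for src, dst, _style in EDGES:
        for name in (src, dst):
            if name not in found:
                found.append(name)
    return found


def successors(node, styles=("solid", "dashed")):
    """Return the direct targets of `node`, restricted to the given edge styles."""
    return [dst for src, dst, style in EDGES if src == node and style in styles]


def reaches(start, goal, styles=("solid", "dashed")):
    """Breadth-first search: is there a path from `start` to `goal`?"""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return True
        for nxt in successors(node, styles):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


if __name__ == "__main__":
    goal = "Model parameters"
    for name in nodes():
        if name == goal:
            continue
        any_path = reaches(name, goal)
        solid_path = reaches(name, goal, styles=("solid",))
        print(f"{name}: any path={any_path}, solid-only path={solid_path}")
```

Under this encoding, "External information" is the only node with no path to "Model parameters," and "Explicit memory" reaches it only when the dashed edge is counted, which matches the "Isolated Node" and "Varying Connection Strengths" observations.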

### Interpretation

This diagram represents a theoretical framework for understanding how Large Language Models (specifically Transformer architectures) store and utilize information.

* **Separable Knowledge:** The diagram suggests that a model's ability to understand generalized rules, logic, or syntax ("Abstract knowledge") is structurally distinct from its memorization of facts ("Specific knowledge").

* **The Nature of Model Parameters:** The "Model parameters" (the weights and biases of the neural network) receive solid connections from "Abstract knowledge" and "Implicit memory." This suggests that generalized rules and implicitly memorized factual associations are stored directly in the model's weights during training.

* **Explicit vs. Implicit:** The dashed line from "Explicit memory" to "Model parameters" suggests a weaker or indirect relationship. One plausible reading is that explicit memory (perhaps exact factual recall) can influence the model's output or temporary state without being written into the weights in the same direct, permanent way as implicitly learned knowledge. The diagram does not define the dashed style, so this reading is tentative.

* **The Boundary of the Model:** The most notable observation is the absence of any arrow from "External information" to "Model parameters." This suggests that although a Transformer can process external data (such as a user's prompt or a web search result placed in the context window), that data is transient. It belongs to the "Memory hierarchy" and is available during inference, but it does not update the underlying "Model parameters." The model's weights remain insulated from external, real-time information.