## Diagram: Hierarchical Neural Network Architecture with MNIST Dataset

### Overview

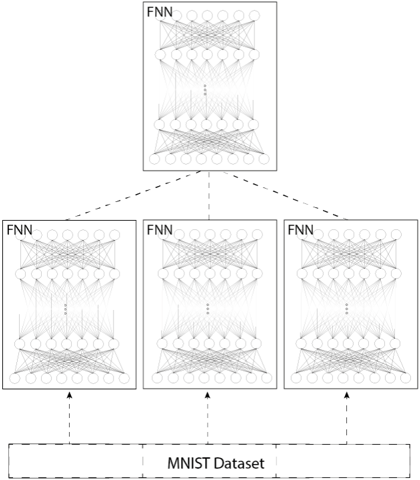

The diagram shows a hierarchical neural network architecture fed by the MNIST dataset. It contains four Fully Connected Neural Networks (FNNs): one at the top and three below it, all labeled "FNN." Arrows indicate data flowing upward from the MNIST dataset, through the three lower FNNs, and into the top FNN, which suggests a two-stage processing pipeline.

### Components/Axes

- **Top Block**: Labeled "FNN" (Fully Connected Neural Network), containing interconnected nodes represented by circles and lines.

- **Middle Blocks**: Three FNN blocks, each labeled "FNN," drawn with identical internal node structures.

- **Bottom Block**: Labeled "MNIST Dataset," depicted as a horizontal rectangle.

- **Arrows**: Dashed arrows connect the MNIST dataset to the three middle FNNs, and solid arrows connect the middle FNNs to the top FNN.

- **No axes, scales, or legends** are present in the diagram.

### Detailed Analysis

- **FNN Structure**: Each FNN block contains multiple layers of interconnected nodes (circles) with dense interconnections (lines), typical of fully connected layers in neural networks.

- **Data Flow**:

  - The MNIST dataset feeds into the three middle FNNs via dashed arrows.

  - The outputs of the three middle FNNs converge into the top FNN via solid arrows.

- **No numerical values, categories, or sub-categories** are explicitly labeled in the diagram.
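
The data flow described above can be sketched as a forward pass in plain Python. This is a minimal illustration, not the architecture's actual implementation: the layer sizes (`[784, 32, 16]` for each middle FNN, `[48, 32, 10]` for the top FNN), the ReLU activation, the concatenation of the middle outputs, and the final softmax are all assumptions, since the diagram labels none of them. The only fixed number is 784, the pixel count of a flattened 28x28 MNIST image.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Return (weights, biases) for one fully connected layer."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def make_fnn(sizes, rng):
    """Build an FNN as a list of layers; sizes = [input, hidden..., output]."""
    return [make_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]

def forward(fnn, x):
    """Apply each layer in turn; ReLU on hidden layers, linear output layer."""
    for i, (weights, biases) in enumerate(fnn):
        x = [sum(w * v for w, v in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        if i < len(fnn) - 1:
            x = [max(0.0, v) for v in x]
    return x

def softmax(z):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def hierarchical_forward(middle_fnns, top_fnn, image):
    """Image -> three middle FNNs -> concatenated features -> top FNN."""
    features = []
    for fnn in middle_fnns:
        features.extend(forward(fnn, image))  # assumed aggregation: concatenation
    return softmax(forward(top_fnn, features))

rng = random.Random(0)
middle_fnns = [make_fnn([784, 32, 16], rng) for _ in range(3)]  # assumed sizes
top_fnn = make_fnn([3 * 16, 32, 10], rng)                       # 10 digit classes
image = [rng.random() for _ in range(784)]  # random stand-in for a real digit
probs = hierarchical_forward(middle_fnns, top_fnn, image)
```

Here `probs` holds ten class probabilities. The weights are random and untrained, so the values carry no meaning; the sketch only shows how the outputs of the three middle networks could converge into the top one.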

### Key Observations

- The hierarchical arrangement implies a cascading processing model, where the three lower-level FNNs extract features from the MNIST data and the top FNN refines or classifies these features.

- The diagram has no legend explaining why the input connections are dashed and the connections into the top FNN are solid. The two styles may indicate different stages of processing (e.g., training vs. inference).

- The identical structure of the three middle FNNs suggests parallel processing or redundancy in feature extraction.
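
The diagram does not say whether the three middle FNNs see the same input or different parts of it. The sketch below illustrates both readings under stated assumptions: `replicate` gives every branch the full image (the redundancy reading), while `split_rows` gives each branch a contiguous band of rows (one possible parallel-processing reading). Both function names and the row-band split are hypothetical choices made for illustration.

```python
def replicate(image, n_branches=3):
    """Redundancy reading: every branch receives the full flattened image."""
    return [list(image) for _ in range(n_branches)]

def split_rows(image, n_branches=3, side=28):
    """Parallel reading: each branch receives a contiguous band of rows.

    Rows that do not divide evenly go to the first branches, so a
    28-row image splits into bands of 10, 9, and 9 rows.
    """
    base, extra = divmod(side, n_branches)
    bands, start = [], 0
    for i in range(n_branches):
        rows = base + (1 if i < extra else 0)
        bands.append(image[start * side:(start + rows) * side])
        start += rows
    return bands

image = list(range(28 * 28))  # pixel indices stand in for pixel values
full_copies = replicate(image)
bands = split_rows(image)
band_lengths = [len(b) for b in bands]  # [280, 252, 252]
```

With replication each middle FNN needs 784 inputs; with the row-band split the input widths differ per branch (280, 252, and 252), so the three networks could not be strictly identical unless the bands were padded or overlapped.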

### Interpretation

This diagram represents a multi-stage neural network architecture designed for processing the MNIST dataset, a collection of handwritten digits commonly used for benchmarking machine learning models. The hierarchical structure implies:

1. **Feature Hierarchy**: The lower FNNs (closer to the dataset) likely learn basic features (e.g., edges, strokes), while the top FNN learns more abstract representations (e.g., digit shapes).

2. **Ensemble or Redundancy**: The three middle FNNs may act as parallel feature extractors, with their outputs aggregated in the top FNN for final classification.

3. **Training Pipeline**: The dashed arrows could represent data flow during training, while solid arrows might indicate inference paths.

The absence of explicit numerical values or labels for layer sizes (e.g., number of neurons) limits quantitative analysis. However, the diagram emphasizes the conceptual flow of information through a cascading neural network structure, a common approach in deep learning for hierarchical feature learning.
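
To show what such a quantitative analysis would look like once layer sizes are known, the sketch below counts trainable parameters for one hypothetical configuration. The sizes are assumptions chosen for illustration only: each of the three lower FNNs maps 784 inputs (a flattened 28x28 MNIST image) through 32 hidden units to 16 outputs, and the top FNN maps the 48 concatenated outputs through 32 hidden units to 10 digit classes.

```python
def fnn_param_count(sizes):
    """Weights plus biases of a fully connected network with these layer sizes."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))

middle_sizes = [784, 32, 16]   # assumed, not taken from the diagram
top_sizes = [3 * 16, 32, 10]   # assumed, not taken from the diagram

middle_params = fnn_param_count(middle_sizes)   # 25648
top_params = fnn_param_count(top_sizes)         # 1898
total_params = 3 * middle_params + top_params   # 78842
```

Under these assumed sizes almost all parameters sit in the first layer of each lower FNN (784 x 32 weights each), which is typical when fully connected layers consume raw pixels directly.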