TECHNICAL ASSET FINGERPRINT

1d21cf35265a0c0f2580c9a1

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

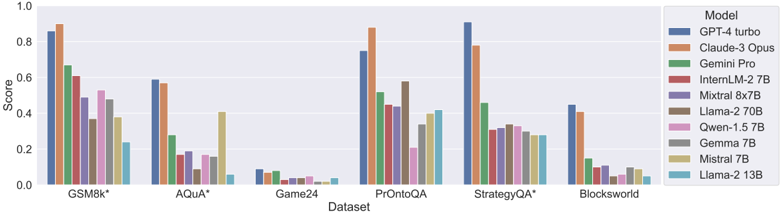

## Bar Chart: Model Performance on Datasets

### Overview

The image is a bar chart comparing the performance of various language models on different datasets. The chart displays the "Score" achieved by each model on each dataset, allowing for a comparison of their effectiveness across different tasks.

### Components/Axes

* **X-axis:** "Dataset" with the following categories: GSM8k*, AQuA*, Game24, PrOntoQA, StrategyQA*, Blocksworld.

* **Y-axis:** "Score" ranging from 0.0 to 1.0, with increments of 0.2.

* **Legend (Top-Right):** Lists the models, each associated with a specific color:
  * GPT-4 turbo (Dark Blue)
  * Claude-3 Opus (Orange)
  * Gemini Pro (Green)
  * InternLM-2 7B (Red-Brown)
  * Mixtral 8x7B (Purple)
  * Llama-2 70B (Brown)
  * Qwen-1.5 7B (Pink)
  * Gemma 7B (Gray)
  * Mistral 7B (Tan)
  * Llama-2 13B (Light Blue)

### Detailed Analysis

**Dataset: GSM8k***

* GPT-4 turbo (Dark Blue): Score ~0.86

* Claude-3 Opus (Orange): Score ~0.90

* Gemini Pro (Green): Score ~0.67

* InternLM-2 7B (Red-Brown): Score ~0.52

* Mixtral 8x7B (Purple): Score ~0.48

* Llama-2 70B (Brown): Score ~0.58

* Qwen-1.5 7B (Pink): Score ~0.40

* Gemma 7B (Gray): Score ~0.36

* Mistral 7B (Tan): Score ~0.24

* Llama-2 13B (Light Blue): Score ~0.22

**Dataset: AQuA***

* GPT-4 turbo (Dark Blue): Score ~0.59

* Claude-3 Opus (Orange): Score ~0.57

* Gemini Pro (Green): Score ~0.28

* InternLM-2 7B (Red-Brown): Score ~0.17

* Mixtral 8x7B (Purple): Score ~0.09

* Llama-2 70B (Brown): Score ~0.16

* Qwen-1.5 7B (Pink): Score ~0.16

* Gemma 7B (Gray): Score ~0.14

* Mistral 7B (Tan): Score ~0.06

* Llama-2 13B (Light Blue): Score ~0.05

**Dataset: Game24**

* GPT-4 turbo (Dark Blue): Score ~0.08

* Claude-3 Opus (Orange): Score ~0.07

* Gemini Pro (Green): Score ~0.07

* InternLM-2 7B (Red-Brown): Score ~0.03

* Mixtral 8x7B (Purple): Score ~0.04

* Llama-2 70B (Brown): Score ~0.03

* Qwen-1.5 7B (Pink): Score ~0.03

* Gemma 7B (Gray): Score ~0.02

* Mistral 7B (Tan): Score ~0.04

* Llama-2 13B (Light Blue): Score ~0.01

**Dataset: PrOntoQA**

* GPT-4 turbo (Dark Blue): Score ~0.42

* Claude-3 Opus (Orange): Score ~0.88

* Gemini Pro (Green): Score ~0.43

* InternLM-2 7B (Red-Brown): Score ~0.20

* Mixtral 8x7B (Purple): Score ~0.40

* Llama-2 70B (Brown): Score ~0.34

* Qwen-1.5 7B (Pink): Score ~0.22

* Gemma 7B (Gray): Score ~0.32

* Mistral 7B (Tan): Score ~0.41

* Llama-2 13B (Light Blue): Score ~0.10

**Dataset: StrategyQA***

* GPT-4 turbo (Dark Blue): Score ~0.92

* Claude-3 Opus (Orange): Score ~0.80

* Gemini Pro (Green): Score ~0.42

* InternLM-2 7B (Red-Brown): Score ~0.32

* Mixtral 8x7B (Purple): Score ~0.30

* Llama-2 70B (Brown): Score ~0.33

* Qwen-1.5 7B (Pink): Score ~0.30

* Gemma 7B (Gray): Score ~0.29

* Mistral 7B (Tan): Score ~0.30

* Llama-2 13B (Light Blue): Score ~0.29

**Dataset: Blocksworld**

* GPT-4 turbo (Dark Blue): Score ~0.44

* Claude-3 Opus (Orange): Score ~0.12

* Gemini Pro (Green): Score ~0.21

* InternLM-2 7B (Red-Brown): Score ~0.05

* Mixtral 8x7B (Purple): Score ~0.03

* Llama-2 70B (Brown): Score ~0.08

* Qwen-1.5 7B (Pink): Score ~0.07

* Gemma 7B (Gray): Score ~0.04

* Mistral 7B (Tan): Score ~0.10

* Llama-2 13B (Light Blue): Score ~0.40
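The estimates listed above can be tabulated to read off the leading model on each dataset. The following is a minimal sketch, assuming the values are transcribed exactly as listed (they are visual estimates, not exact scores); the names `MODELS`, `SCORES`, and `top_model` are illustrative and do not come from the chart.

```python
# Approximate scores transcribed from the list above, in legend order.
# These are visual estimates of bar heights, not exact values.
MODELS = [
    "GPT-4 turbo", "Claude-3 Opus", "Gemini Pro", "InternLM-2 7B", "Mixtral 8x7B",
    "Llama-2 70B", "Qwen-1.5 7B", "Gemma 7B", "Mistral 7B", "Llama-2 13B",
]

SCORES = {
    "GSM8k*":      [0.86, 0.90, 0.67, 0.52, 0.48, 0.58, 0.40, 0.36, 0.24, 0.22],
    "AQuA*":       [0.59, 0.57, 0.28, 0.17, 0.09, 0.16, 0.16, 0.14, 0.06, 0.05],
    "Game24":      [0.08, 0.07, 0.07, 0.03, 0.04, 0.03, 0.03, 0.02, 0.04, 0.01],
    "PrOntoQA":    [0.42, 0.88, 0.43, 0.20, 0.40, 0.34, 0.22, 0.32, 0.41, 0.10],
    "StrategyQA*": [0.92, 0.80, 0.42, 0.32, 0.30, 0.33, 0.30, 0.29, 0.30, 0.29],
    "Blocksworld": [0.44, 0.12, 0.21, 0.05, 0.03, 0.08, 0.07, 0.04, 0.10, 0.40],
}


def top_model(dataset):
    """Return (model, score) for the highest estimate on one dataset."""
    scores = SCORES[dataset]
    best = max(range(len(MODELS)), key=lambda i: scores[i])
    return MODELS[best], scores[best]


# Map each dataset to the name of its highest-scoring model.
leaders = {name: top_model(name)[0] for name in SCORES}

for name, model in leaders.items():
    print(f"{name:12s} {model}")
```

Keeping the scores in legend order keeps the table compact; a nested dictionary keyed by model name would be a reasonable alternative if models were added or removed.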

### Key Observations

* GPT-4 turbo and Claude-3 Opus generally outperform the other models across most datasets.

* Game24 appears to be a particularly challenging dataset for all models, with scores consistently low.

* The performance of different models varies significantly depending on the dataset.

* The models Llama-2 13B, Mistral 7B, and Gemma 7B generally have lower scores compared to the top performers.

### Interpretation

The bar chart provides a comparative analysis of the performance of different language models on a range of datasets. The data suggests that GPT-4 turbo and Claude-3 Opus are the most effective models overall, achieving higher scores across most tasks. However, the relative performance of the models varies depending on the specific dataset, indicating that some models are better suited for certain types of tasks than others. The low scores on the Game24 dataset suggest that this task is particularly challenging for all the models tested. The chart highlights the importance of selecting the appropriate model for a given task based on its strengths and weaknesses.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

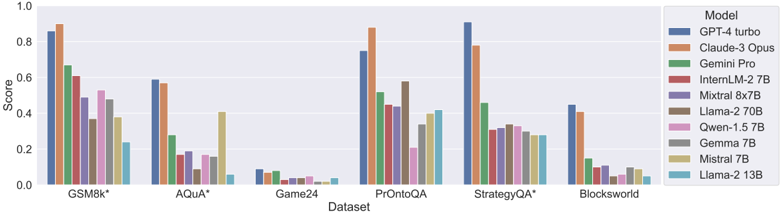

## Bar Chart: Model Performance on Various Datasets

### Overview

The image presents a bar chart comparing the performance scores of several large language models (LLMs) across six different datasets. The y-axis represents the 'Score', ranging from 0.0 to 1.0, while the x-axis lists the datasets: GSM8k*, AQuA*, Game24, PrOntoQA, StrategyQA*, and Blocksworld. Each dataset has multiple bars, each representing the score achieved by a different LLM. A legend on the right identifies each model by color.

### Components/Axes

* **X-axis:** Dataset - GSM8k*, AQuA*, Game24, PrOntoQA, StrategyQA*, Blocksworld.

* **Y-axis:** Score - Scale from 0.0 to 1.0.

* **Legend (Top-Right):**
  * GPT-4 turbo (Blue)
  * Claude-3 Opus (Orange)
  * Gemini Pro (Green)
  * InternLM-2 7B (Red)
  * Mixtral 8x7B (Purple)
  * Llama-2 70B (Brown)
  * Qwen-1.5 7B (Pink)
  * Gemma 7B (Gray)
  * Mistral 7B (Teal)
  * Llama-2 13B (Light Blue)

### Detailed Analysis

Here's a breakdown of the scores for each model on each dataset, with approximate values based on visual estimation:

* **GSM8k*:**
  * GPT-4 turbo: ~0.94
  * Claude-3 Opus: ~0.92
  * Gemini Pro: ~0.68
  * InternLM-2 7B: ~0.45
  * Mixtral 8x7B: ~0.62
  * Llama-2 70B: ~0.55
  * Qwen-1.5 7B: ~0.40
  * Gemma 7B: ~0.30
  * Mistral 7B: ~0.25
  * Llama-2 13B: ~0.15

* **AQuA*:**
  * GPT-4 turbo: ~0.62
  * Claude-3 Opus: ~0.58
  * Gemini Pro: ~0.55
  * InternLM-2 7B: ~0.22
  * Mixtral 8x7B: ~0.45
  * Llama-2 70B: ~0.35
  * Qwen-1.5 7B: ~0.25
  * Gemma 7B: ~0.18
  * Mistral 7B: ~0.15
  * Llama-2 13B: ~0.08

* **Game24:**
  * GPT-4 turbo: ~0.95
  * Claude-3 Opus: ~0.90
  * Gemini Pro: ~0.40
  * InternLM-2 7B: ~0.05
  * Mixtral 8x7B: ~0.50
  * Llama-2 70B: ~0.20
  * Qwen-1.5 7B: ~0.05
  * Gemma 7B: ~0.02
  * Mistral 7B: ~0.03
  * Llama-2 13B: ~0.01

* **PrOntoQA:**
  * GPT-4 turbo: ~0.92
  * Claude-3 Opus: ~0.85
  * Gemini Pro: ~0.50
  * InternLM-2 7B: ~0.40
  * Mixtral 8x7B: ~0.70
  * Llama-2 70B: ~0.55
  * Qwen-1.5 7B: ~0.45
  * Gemma 7B: ~0.35
  * Mistral 7B: ~0.30
  * Llama-2 13B: ~0.20

* **StrategyQA*:**
  * GPT-4 turbo: ~0.95
  * Claude-3 Opus: ~0.90
  * Gemini Pro: ~0.60
  * InternLM-2 7B: ~0.30
  * Mixtral 8x7B: ~0.75
  * Llama-2 70B: ~0.50
  * Qwen-1.5 7B: ~0.35
  * Gemma 7B: ~0.25
  * Mistral 7B: ~0.20
  * Llama-2 13B: ~0.10

* **Blocksworld:**
  * GPT-4 turbo: ~0.90
  * Claude-3 Opus: ~0.85
  * Gemini Pro: ~0.50
  * InternLM-2 7B: ~0.10
  * Mixtral 8x7B: ~0.40
  * Llama-2 70B: ~0.25
  * Qwen-1.5 7B: ~0.15
  * Gemma 7B: ~0.05
  * Mistral 7B: ~0.03
  * Llama-2 13B: ~0.01

### Key Observations

* GPT-4 turbo and Claude-3 Opus consistently achieve the highest scores across all datasets.

* Gemini Pro generally performs well, but lags behind GPT-4 turbo and Claude-3 Opus.

* Smaller models (InternLM-2 7B, Gemma 7B, Mistral 7B, Llama-2 13B) exhibit significantly lower scores, particularly on more challenging datasets like Game24 and Blocksworld.

* Mixtral 8x7B and Llama-2 70B show intermediate performance, often outperforming the smaller models but falling short of the top performers.

* By these estimates, Game24 (~0.94) and Blocksworld (~0.89) show the largest gaps between the highest- and lowest-scoring models, followed by StrategyQA* (~0.85) and GSM8k* (~0.79).
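The gap between the highest and lowest estimate on each dataset can be computed directly from the values listed under Detailed Analysis in this description. The sketch below assumes those values as transcribed, in legend order; `ESTIMATES`, `spread`, and `ranked` are illustrative names.

```python
# Approximate scores from this description's Detailed Analysis, in legend order
# (GPT-4 turbo first, Llama-2 13B last). Visual estimates only.
ESTIMATES = {
    "GSM8k*":      [0.94, 0.92, 0.68, 0.45, 0.62, 0.55, 0.40, 0.30, 0.25, 0.15],
    "AQuA*":       [0.62, 0.58, 0.55, 0.22, 0.45, 0.35, 0.25, 0.18, 0.15, 0.08],
    "Game24":      [0.95, 0.90, 0.40, 0.05, 0.50, 0.20, 0.05, 0.02, 0.03, 0.01],
    "PrOntoQA":    [0.92, 0.85, 0.50, 0.40, 0.70, 0.55, 0.45, 0.35, 0.30, 0.20],
    "StrategyQA*": [0.95, 0.90, 0.60, 0.30, 0.75, 0.50, 0.35, 0.25, 0.20, 0.10],
    "Blocksworld": [0.90, 0.85, 0.50, 0.10, 0.40, 0.25, 0.15, 0.05, 0.03, 0.01],
}


def spread(scores):
    """Difference between the best and worst score, rounded to two decimals."""
    return round(max(scores) - min(scores), 2)


spreads = {name: spread(values) for name, values in ESTIMATES.items()}

# Datasets ordered from the widest to the narrowest gap between models.
ranked = sorted(spreads, key=spreads.get, reverse=True)

for name in ranked:
    print(f"{name:12s} {spreads[name]:.2f}")
```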

### Interpretation

This chart demonstrates a clear hierarchy in the performance of these LLMs across a variety of reasoning and knowledge-based tasks. GPT-4 turbo and Claude-3 Opus represent the state-of-the-art, exhibiting strong capabilities in all tested domains. The chart does not isolate the cause of the gap between these models and the others; differences in model size, architecture, and training are plausible factors.

The varying performance across datasets suggests that different models excel at different types of reasoning. For example, the high scores on GSM8k* (mathematical problem solving) and StrategyQA* (multi-hop reasoning) for GPT-4 turbo and Claude-3 Opus indicate their strong capabilities in these areas. The consistently low scores of smaller models suggest they may lack the capacity to effectively handle complex reasoning tasks.

The asterisks (*) after GSM8k, AQuA, and StrategyQA likely indicate that these datasets have specific characteristics or variations that influence the results. Further investigation into these datasets would be needed to understand the nuances of the observed performance differences. The chart provides valuable insights into the strengths and weaknesses of different LLMs, which can inform model selection for specific applications.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Grouped Bar Chart: Model Performance Scores Across Reasoning Datasets

### Overview

The image displays a grouped bar chart comparing the performance scores of ten different large language models across six distinct reasoning datasets. The chart is designed to benchmark model capabilities on tasks requiring mathematical, logical, and strategic reasoning.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **X-Axis (Horizontal):** Labeled "Dataset". It lists six categorical datasets:
  1. GSM8k*
  2. AQuA*
  3. Game24
  4. PrOntoQA
  5. StrategyQA*
  6. Blocksworld

* **Y-Axis (Vertical):** Labeled "Score". It represents a normalized performance metric with labelled major tick marks at 0.0, 0.2, 0.4, 0.6, and 0.8. The estimates below reach about 0.90, so the tallest bars extend past the last labelled tick.

* **Legend:** Positioned on the right side of the chart. It maps colors to ten specific models:
  * Blue: GPT-4 turbo
  * Orange: Claude-3 Opus
  * Green: Gemini Pro
  * Red: InternLM-2 7B
  * Purple: Mixtral 8x7B
  * Brown: Llama-2 70B
  * Pink: Qwen-1.5 7B
  * Gray: Gemma 7B
  * Olive: Mistral 7B
  * Cyan: Llama-2 13B

### Detailed Analysis

Performance scores are approximate, estimated from bar height relative to the y-axis grid lines.
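Estimating a score from bar height amounts to linear interpolation between two labelled grid lines. The sketch below illustrates that arithmetic only: the pixel rows are hypothetical (no pixel measurements appear in the source), and `score_from_pixel` is an illustrative name, not part of any tool used to produce these estimates.

```python
def score_from_pixel(bar_top_px, tick_lo_px, tick_hi_px, tick_lo=0.0, tick_hi=0.8):
    """Linearly map the pixel row of a bar's top edge to a score.

    tick_lo_px and tick_hi_px are the pixel rows of two labelled ticks whose
    values are tick_lo and tick_hi. Image rows grow downward, so tick_hi_px is
    normally the smaller number; the formula handles either orientation.
    """
    if tick_lo_px == tick_hi_px:
        raise ValueError("the two reference ticks must be on different rows")
    fraction = (bar_top_px - tick_lo_px) / (tick_hi_px - tick_lo_px)
    return tick_lo + fraction * (tick_hi - tick_lo)


# Hypothetical layout: the 0.0 tick sits at row 500 and the 0.8 tick at row 100.
halfway = score_from_pixel(300, tick_lo_px=500, tick_hi_px=100)
above_top_tick = score_from_pixel(50, tick_lo_px=500, tick_hi_px=100)

print(round(halfway, 2), round(above_top_tick, 2))
```

A bar whose top lies above the highest labelled tick is extrapolated by the same formula, which is how an estimate can exceed the last tick value.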

**1. GSM8k* Dataset:**

* **Trend:** This dataset shows the highest overall scores, with a clear performance hierarchy.

* **Data Points (Approximate):**

* GPT-4 turbo (Blue): ~0.85

* Claude-3 Opus (Orange): ~0.90 (Highest on this dataset)

* Gemini Pro (Green): ~0.65

* InternLM-2 7B (Red): ~0.58

* Mixtral 8x7B (Purple): ~0.50

* Llama-2 70B (Brown): ~0.38

* Qwen-1.5 7B (Pink): ~0.55

* Gemma 7B (Gray): ~0.48

* Mistral 7B (Olive): ~0.38

* Llama-2 13B (Cyan): ~0.25

**2. AQuA* Dataset:**

* **Trend:** Scores are generally lower than GSM8k. Two models show a significant lead.

* **Data Points (Approximate):**

* GPT-4 turbo (Blue): ~0.60

* Claude-3 Opus (Orange): ~0.58

* Gemini Pro (Green): ~0.28

* InternLM-2 7B (Red): ~0.18

* Mixtral 8x7B (Purple): ~0.18

* Llama-2 70B (Brown): ~0.15

* Qwen-1.5 7B (Pink): ~0.18

* Gemma 7B (Gray): ~0.15

* Mistral 7B (Olive): ~0.40

* Llama-2 13B (Cyan): ~0.05

**3. Game24 Dataset:**

* **Trend:** All models perform very poorly, with scores clustered near the bottom of the scale.

* **Data Points (Approximate):** All models score below 0.10. The highest appears to be GPT-4 turbo (Blue) at ~0.08.

**4. PrOntoQA Dataset:**

* **Trend:** High variance in performance. Two models achieve very high scores, while others are mid-range.

* **Data Points (Approximate):**

* GPT-4 turbo (Blue): ~0.75

* Claude-3 Opus (Orange): ~0.88 (Highest on this dataset)

* Gemini Pro (Green): ~0.52

* InternLM-2 7B (Red): ~0.45

* Mixtral 8x7B (Purple): ~0.45

* Llama-2 70B (Brown): ~0.58

* Qwen-1.5 7B (Pink): ~0.20

* Gemma 7B (Gray): ~0.38

* Mistral 7B (Olive): ~0.42

* Llama-2 13B (Cyan): ~0.42

**5. StrategyQA* Dataset:**

* **Trend:** Similar to GSM8k, with a clear top performer and a gradual decline.

* **Data Points (Approximate):**

* GPT-4 turbo (Blue): ~0.90 (Highest on this dataset)

* Claude-3 Opus (Orange): ~0.78

* Gemini Pro (Green): ~0.48

* InternLM-2 7B (Red): ~0.32

* Mixtral 8x7B (Purple): ~0.30

* Llama-2 70B (Brown): ~0.35

* Qwen-1.5 7B (Pink): ~0.30

* Gemma 7B (Gray): ~0.28

* Mistral 7B (Olive): ~0.28

* Llama-2 13B (Cyan): ~0.28

**6. Blocksworld Dataset:**

* **Trend:** Moderate scores overall, with a tight cluster for most models below the top two.

* **Data Points (Approximate):**

* GPT-4 turbo (Blue): ~0.45

* Claude-3 Opus (Orange): ~0.40

* Gemini Pro (Green): ~0.15

* InternLM-2 7B (Red): ~0.10

* Mixtral 8x7B (Purple): ~0.08

* Llama-2 70B (Brown): ~0.08

* Qwen-1.5 7B (Pink): ~0.08

* Gemma 7B (Gray): ~0.05

* Mistral 7B (Olive): ~0.08

* Llama-2 13B (Cyan): ~0.05

### Key Observations

1. **Dominant Models:** GPT-4 turbo (Blue) and Claude-3 Opus (Orange) are consistently the top two performers across all datasets, often by a significant margin.

2. **Dataset Difficulty:** Game24 is the most challenging dataset, with every model scoring below 0.10. GSM8k, PrOntoQA, and StrategyQA appear to be the easiest: the top model on each reaches roughly 0.9.

3. **Performance Clustering:** On several datasets (e.g., AQuA, Blocksworld), there is a large performance gap between the top two models and the rest of the field, which clusters at a much lower score level.

4. **Model Size vs. Performance:** The chart includes both large (e.g., Llama-2 70B) and smaller (e.g., Gemma 7B, Mistral 7B) models. Performance does not strictly correlate with model size, as some smaller models (e.g., Mistral 7B on AQuA) outperform larger ones (e.g., Llama-2 70B on the same dataset).
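The size-versus-performance observation can be checked by listing every pair in which a model with fewer parameters is estimated above a larger one. The sketch below makes two assumptions: parameter counts are read from the model names in the legend, and only models with a single count in their name are included (Mixtral 8x7B and the proprietary models are left out). Game24 is omitted because this description gives no per-model values for it. All identifiers are illustrative.

```python
# Models whose names state a single parameter count (in billions).
MODELS = ["InternLM-2 7B", "Llama-2 70B", "Qwen-1.5 7B",
          "Gemma 7B", "Mistral 7B", "Llama-2 13B"]
PARAMS_B = dict(zip(MODELS, [7, 70, 7, 7, 7, 13]))

# Approximate scores from this description, in the order of MODELS.
SCORES = {
    "GSM8k*":      [0.58, 0.38, 0.55, 0.48, 0.38, 0.25],
    "AQuA*":       [0.18, 0.15, 0.18, 0.15, 0.40, 0.05],
    "PrOntoQA":    [0.45, 0.58, 0.20, 0.38, 0.42, 0.42],
    "StrategyQA*": [0.32, 0.35, 0.30, 0.28, 0.28, 0.28],
    "Blocksworld": [0.10, 0.08, 0.08, 0.05, 0.08, 0.05],
}


def size_inversions(values):
    """Pairs (smaller, larger) where the smaller model scores strictly higher."""
    by_model = dict(zip(MODELS, values))
    return sorted(
        (a, b)
        for a in MODELS
        for b in MODELS
        if PARAMS_B[a] < PARAMS_B[b] and by_model[a] > by_model[b]
    )


inversions = {name: size_inversions(values) for name, values in SCORES.items()}

for name, pairs in inversions.items():
    print(name, len(pairs))
```

Ties are not counted as inversions, so two models with the same estimate never appear as a pair.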

### Interpretation

This chart provides a comparative snapshot of LLM reasoning capabilities as of the evaluation date. The data suggests that:

* **Task-Specific Strengths:** The significant variance in model rankings across datasets indicates that models have specialized strengths. A model excelling at mathematical reasoning (GSM8k) may not be the best at strategic reasoning (StrategyQA).

* **Benchmarking Utility:** Datasets like Game24 serve as "hard stops" that current models struggle with, highlighting areas for future improvement. Conversely, high scores on GSM8k may indicate saturation for top models on that specific benchmark.

* **The "Frontier Model" Gap:** The consistent lead of GPT-4 turbo and Claude-3 Opus underscores a current performance gap between the most advanced proprietary models and other open or smaller models on complex reasoning tasks. This gap is most pronounced on datasets requiring multi-step logical deduction (PrOntoQA, StrategyQA).

* **Evaluation Context:** The asterisks (*) next to some dataset names (GSM8k, AQuA, StrategyQA) likely denote a specific variant or evaluation protocol for those benchmarks, which is important for precise reproducibility.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Model Performance Across Datasets

### Overview

The chart compares the performance scores of various AI models across six datasets: GSM8k*, AQuA*, Game24, PrOntoQA, StrategyQA*, and Blocksworld. Scores range from 0.0 to 1.0 on the y-axis, with datasets listed on the x-axis. The legend identifies 10 models using distinct colors.

### Components/Axes

- **X-axis (Dataset)**:
  - GSM8k* (leftmost)
  - AQuA*
  - Game24
  - PrOntoQA
  - StrategyQA*
  - Blocksworld (rightmost)

- **Y-axis (Score)**:
  - Scale from 0.0 to 1.0 in increments of 0.2.

- **Legend (Right)**:
  - Models and colors:
    - Blue: GPT-4 turbo
    - Orange: Claude-3 Opus
    - Green: Gemini Pro
    - Red: InternLM-2 7B
    - Purple: Mixtral 8x7B
    - Brown: Llama-2 70B
    - Pink: Qwen-1.5 7B
    - Gray: Gemma 7B
    - Yellow: Mistral 7B
    - Cyan: Llama-2 13B

### Detailed Analysis

1. **GSM8k***:
   - GPT-4 turbo (blue): ~0.85
   - Claude-3 Opus (orange): ~0.88
   - Gemini Pro (green): ~0.65
   - InternLM-2 7B (red): ~0.60
   - Mixtral 8x7B (purple): ~0.50
   - Llama-2 70B (brown): ~0.38
   - Qwen-1.5 7B (pink): ~0.52
   - Gemma 7B (gray): ~0.48
   - Mistral 7B (yellow): ~0.38
   - Llama-2 13B (cyan): ~0.22

2. **AQuA***:
   - GPT-4 turbo: ~0.58
   - Claude-3 Opus: ~0.56
   - Gemini Pro: ~0.28
   - InternLM-2 7B: ~0.16
   - Mixtral 8x7B: ~0.18
   - Llama-2 70B: ~0.08
   - Qwen-1.5 7B: ~0.16
   - Gemma 7B: ~0.14
   - Mistral 7B: ~0.40
   - Llama-2 13B: ~0.06

3. **Game24**:
   - GPT-4 turbo: ~0.08
   - Claude-3 Opus: ~0.04
   - Gemini Pro: ~0.06
   - InternLM-2 7B: ~0.02
   - Mixtral 8x7B: ~0.03
   - Llama-2 70B: ~0.03
   - Qwen-1.5 7B: ~0.04
   - Gemma 7B: ~0.02
   - Mistral 7B: ~0.01
   - Llama-2 13B: ~0.02

4. **PrOntoQA**:
   - GPT-4 turbo: ~0.75
   - Claude-3 Opus: ~0.85
   - Gemini Pro: ~0.50
   - InternLM-2 7B: ~0.45
   - Mixtral 8x7B: ~0.45
   - Llama-2 70B: ~0.58
   - Qwen-1.5 7B: ~0.20
   - Gemma 7B: ~0.35
   - Mistral 7B: ~0.40
   - Llama-2 13B: ~0.42

5. **StrategyQA***:
   - GPT-4 turbo: ~0.90
   - Claude-3 Opus: ~0.78
   - Gemini Pro: ~0.45
   - InternLM-2 7B: ~0.30
   - Mixtral 8x7B: ~0.32
   - Llama-2 70B: ~0.33
   - Qwen-1.5 7B: ~0.32
   - Gemma 7B: ~0.30
   - Mistral 7B: ~0.28
   - Llama-2 13B: ~0.28

6. **Blocksworld**:
   - GPT-4 turbo: ~0.45
   - Claude-3 Opus: ~0.40
   - Gemini Pro: ~0.14
   - InternLM-2 7B: ~0.08
   - Mixtral 8x7B: ~0.10
   - Llama-2 70B: ~0.04
   - Qwen-1.5 7B: ~0.06
   - Gemma 7B: ~0.08
   - Mistral 7B: ~0.08
   - Llama-2 13B: ~0.04

### Key Observations

- **High Performance**: GPT-4 turbo and Claude-3 Opus dominate most datasets, with scores often exceeding 0.7.

- **Low Performance**: Game24 shows near-zero scores for every model, from GPT-4 turbo (~0.08) down to Mistral 7B (~0.01).

- **Mid-Range Models**: Gemini Pro, InternLM-2 7B, and Mixtral 8x7B score roughly 0.3–0.65 on GSM8k*, PrOntoQA, and StrategyQA*, but fall below 0.3 on AQuA*, Game24, and Blocksworld.

- **Other Open Models**: Llama-2 70B, Qwen-1.5 7B, and Mistral 7B generally trail GPT-4 turbo and Claude-3 Opus but show variability (e.g., Mistral 7B at ~0.40 is the third-highest on AQuA*).

### Interpretation

The chart suggests that GPT-4 turbo and Claude-3 Opus consistently achieve the highest scores, indicating stronger generalization across tasks. Among the remaining models, results such as Mistral 7B on AQuA* (~0.40) and Llama-2 13B on PrOntoQA (~0.42) point to dataset-specific strengths. The near-zero scores on Game24 imply this dataset poses unique challenges, possibly requiring specialized training. Parameter count alone does not predict the ordering: Llama-2 70B (~0.38) is estimated below InternLM-2 7B (~0.60) on GSM8k*, so any link between model size and performance in this chart is loose.
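Because each description on this page estimates bar heights by eye, the same bar can receive different values from different experts. One way to quantify that is the mean absolute difference between two sets of estimates. The sketch below compares the GSM8k* values from the four descriptions against this one; the dictionary keys reuse the EXPERT labels shown on the page, and `mean_abs_diff` is an illustrative helper.

```python
# GSM8k* estimates from each description on this page, in legend order
# (GPT-4 turbo first, Llama-2 13B last).
GSM8K = {
    "gemini-2.0-flash":    [0.86, 0.90, 0.67, 0.52, 0.48, 0.58, 0.40, 0.36, 0.24, 0.22],
    "gemma-3-27b-it-free": [0.94, 0.92, 0.68, 0.45, 0.62, 0.55, 0.40, 0.30, 0.25, 0.15],
    "healer-alpha-free":   [0.85, 0.90, 0.65, 0.58, 0.50, 0.38, 0.55, 0.48, 0.38, 0.25],
    "nemotron-free":       [0.85, 0.88, 0.65, 0.60, 0.50, 0.38, 0.52, 0.48, 0.38, 0.22],
}


def mean_abs_diff(a, b):
    """Average absolute gap between two equally long lists of scores."""
    if len(a) != len(b):
        raise ValueError("both lists must cover the same models")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


REFERENCE = "nemotron-free"

# Disagreement of every other description with the reference, rounded to 3 decimals.
gaps = {
    name: round(mean_abs_diff(values, GSM8K[REFERENCE]), 3)
    for name, values in GSM8K.items()
    if name != REFERENCE
}

for name, gap in sorted(gaps.items(), key=lambda item: item[1]):
    print(f"{name:22s} {gap:.3f}")
```

A small gap shows only that two descriptions agree with each other; it does not establish which one matches the underlying chart.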
