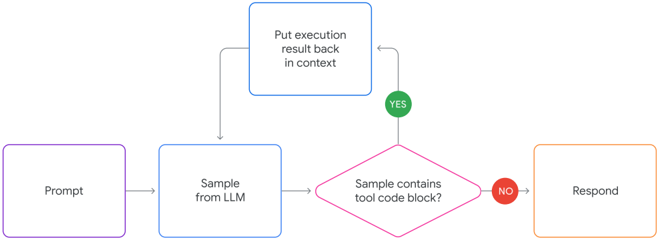

## Flowchart: LLM Tool Code Execution

### Overview

The image is a flowchart showing how a system handles a prompt with a Large Language Model (LLM): it samples output from the LLM and checks whether that sample contains a tool code block. The steps run from receiving the prompt to either feeding an execution result back into the context or responding directly.

### Components

* **Shapes:** The flowchart uses rectangles for processes and a diamond for a decision.

* **Arrows:** Arrows indicate the flow of the process.

* **Colors:** Each rectangle has a different border color: purple, light blue, blue, and orange. The decision diamond has a pink border. The "YES" and "NO" indicators are green and red circles, respectively.

### Detailed Analysis

1. **Prompt:** A purple-bordered rectangle labeled "Prompt" initiates the process.

2. **Sample from LLM:** A light blue-bordered rectangle labeled "Sample from LLM" follows the prompt. An arrow connects the "Prompt" to "Sample from LLM".

3. **Sample contains tool code block?:** A pink-bordered diamond labeled "Sample contains tool code block?" represents a decision point.

   * **YES:** If the sample contains a tool code block, a green circle labeled "YES" directs the flow upwards to a blue-bordered rectangle labeled "Put execution result back in context". The diagram has no separate execution box, so running the code is implied by this step. From here, an arrow returns the flow to the "Sample from LLM" step.

   * **NO:** If the sample does not contain a tool code block, a red circle labeled "NO" directs the flow to an orange-bordered rectangle labeled "Respond", where the process ends.

### Key Observations

* The flowchart describes a loop in which the system samples from the LLM and checks the sample for a tool code block. If one is found, the execution result is put back into the context and the LLM is sampled again.

* The process terminates with a response if no tool code block is found.

### Interpretation

The flowchart depicts a tool-use loop in which an LLM can call external tools by emitting code blocks. After each sample, the system checks whether the output contains a tool code block. If it does, the system executes the code, appends the result to the context, and samples again, so the model can condition its next output on the tool's result. This lets the LLM draw on external tools or APIs for more accurate or comprehensive responses. If no tool code block is detected, the loop ends and the system responds; presumably the final sample serves as the response, though the diagram does not state this explicitly.
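
The loop in the flowchart can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation behind the diagram: the `<tool_code>` and `<tool_output>` delimiters, the function names, the `max_steps` cap, and the `fake_llm` stand-in are all hypothetical, and `exec` is used without sandboxing purely for demonstration.

```python
import contextlib
import io
import re
from typing import Callable, Optional

# Assumption: tool code is wrapped in <tool_code>...</tool_code> tags.
# The flowchart does not specify the actual delimiter.
TOOL_BLOCK = re.compile(r"<tool_code>(.*?)</tool_code>", re.DOTALL)


def extract_tool_code(sample: str) -> Optional[str]:
    """Decision diamond: return the tool code if the sample contains a block."""
    match = TOOL_BLOCK.search(sample)
    return match.group(1).strip() if match else None


def execute(code: str) -> str:
    """Run the code and capture what it prints. Illustrative only, not sandboxed."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, {})
    return buffer.getvalue().strip()


def run(prompt: str, sample_from_llm: Callable[[str], str], max_steps: int = 8) -> str:
    """Sample, check for a tool code block, and either loop or respond."""
    context = prompt
    # max_steps is an added safety cap; the flowchart itself has no such limit.
    for _ in range(max_steps):
        sample = sample_from_llm(context)      # "Sample from LLM"
        code = extract_tool_code(sample)       # "Sample contains tool code block?"
        if code is None:
            return sample                      # NO branch: "Respond"
        result = execute(code)                 # YES branch: run the tool code
        # "Put execution result back in context", then sample again.
        context += f"{sample}\n<tool_output>{result}</tool_output>\n"
    raise RuntimeError("tool loop did not terminate within max_steps")


def fake_llm(context: str) -> str:
    """Stand-in for a real model: requests a tool once, then answers."""
    if "<tool_output>" not in context:
        return "<tool_code>print(6 * 7)</tool_code>"
    result = context.split("<tool_output>")[1].split("</tool_output>")[0]
    return f"The answer is {result}."


print(run("What is 6 * 7?", fake_llm))
```

With the stand-in model, the first sample contains a tool code block, so its output is appended to the context; the second sample contains none and is returned as the response. A real system would replace `fake_llm` with an actual model call and run the tool code in an isolated environment.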