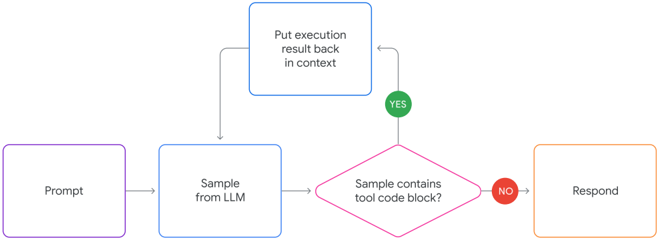

## Flowchart: Tool-Augmented LLM Interaction Process

### Overview

The image displays a flowchart illustrating a decision-making process for a Large Language Model (LLM) that can execute tool code blocks. The process begins with a user prompt, involves sampling from the LLM, checks for the presence of tool code, and either loops back to incorporate execution results or proceeds to generate a final response.

### Components/Axes

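The flowchart consists of five process nodes (four rectangles and one decision diamond) plus two small circles that label the diamond's outcomes. All of them are connected by directional arrows and distinguished by color and text label. There are no axes, as this is a process diagram.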

**Shapes and Labels (in process order):**

1. **Purple Rectangle (Leftmost):** "Prompt"

2. **Blue Rectangle (Center-Left):** "Sample from LLM"

3. **Pink Diamond (Center-Right):** "Sample contains tool code block?"

4. **Green Circle (Top, from Diamond's "YES" path):** "YES"

5. **Blue Rectangle (Top, after "YES"):** "Put execution result back in context"

6. **Red Circle (Right, from Diamond's "NO" path):** "NO"

7. **Orange Rectangle (Rightmost):** "Respond"

**Flow Connections (Arrows):**

* An arrow points from "Prompt" to "Sample from LLM".

* An arrow points from "Sample from LLM" to the decision diamond "Sample contains tool code block?".

* From the diamond, two paths diverge:

  * The "YES" path (green circle) leads upward to the box "Put execution result back in context".

    * From "Put execution result back in context", an arrow loops back to the input of "Sample from LLM".

  * The "NO" path (red circle) leads rightward to the final box "Respond".
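The arrows above can be captured as a small directed graph. The encoding below is illustrative only: the node names are copied from the diagram's labels, while the `FLOW` dictionary and the `trace` helper are hypothetical constructs for this sketch. `trace` replays one run through the chart given the sequence of YES/NO outcomes at the diamond.

```python
# Hypothetical encoding of the flowchart: node -> successor.
# The decision node maps each outcome ("YES"/"NO") to its successor.
FLOW = {
    "Prompt": "Sample from LLM",
    "Sample from LLM": "Sample contains tool code block?",
    "Sample contains tool code block?": {
        "YES": "Put execution result back in context",
        "NO": "Respond",
    },
    "Put execution result back in context": "Sample from LLM",
    "Respond": None,  # terminal node: no outgoing arrow
}

DECISION = "Sample contains tool code block?"


def trace(answers):
    """Walk FLOW from "Prompt", consuming one YES/NO answer per visit to the diamond.

    Raises StopIteration if the answers run out before reaching "Respond".
    """
    answers = iter(answers)
    node, path = "Prompt", []
    while node is not None:
        path.append(node)
        successor = FLOW[node]
        node = successor[next(answers)] if node == DECISION else successor
    return path


print(trace(["YES", "NO"]))
```

For example, `trace(["YES", "NO"])` visits "Sample from LLM" twice before reaching "Respond", mirroring one tool call followed by a final answer.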

### Detailed Analysis

The flowchart describes a sequential and conditional process:

1. **Initiation:** The process starts with a "Prompt" (purple box, left).

2. **LLM Sampling:** The prompt is passed to the LLM, and a sample is drawn from it ("Sample from LLM", blue box, center-left).

3. **Decision Point:** The system checks the generated sample. The central question, posed in a pink diamond, is: "Sample contains tool code block?"

4. **Conditional Paths:**

   * **If YES (Green Path):** The flow follows the "YES" indicator (green circle). The system executes the tool code and then performs the action "Put execution result back in context" (blue box, top). Crucially, the flow then **loops back** to the "Sample from LLM" step. This creates an iterative cycle in which the LLM receives the result of executing the code it wrote as new context and generates a new sample, which is again checked for tool code.

   * **If NO (Red Path):** The flow follows the "NO" indicator (red circle). The system proceeds directly to the final step, "Respond" (orange box, right), presumably returning a natural-language answer to the user.
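The steps above can be sketched as a single loop. This is a minimal illustration, not the implementation behind the diagram: the `tool_code` fence tag, the `run_agent` function, and the two callables it receives (`sample_from_llm`, `execute_tool_code`) are hypothetical names chosen here, and the context is modeled as a plain list of strings. The usage at the bottom substitutes scripted stand-ins for a real model and a real executor.

```python
import re
from typing import Callable, List

FENCE = "`" * 3  # a literal triple backtick, built here so the example nests in Markdown

# Hypothetical convention: a tool block is a fence tagged "tool_code".
TOOL_BLOCK = re.compile(FENCE + r"tool_code\n(.*?)" + FENCE, re.DOTALL)


def run_agent(
    prompt: str,
    sample_from_llm: Callable[[List[str]], str],
    execute_tool_code: Callable[[str], str],
) -> str:
    """Drive the flowchart loop until a sample contains no tool code block."""
    context = [prompt]                                # Prompt
    while True:
        sample = sample_from_llm(context)             # Sample from LLM
        match = TOOL_BLOCK.search(sample)             # Sample contains tool code block?
        if match is None:
            return sample                             # NO: Respond
        result = execute_tool_code(match.group(1))    # YES: execute the block...
        context.extend([sample, result])              # ...and put the result back in context


# Usage with stand-ins: a scripted "LLM" that requests one tool call, then answers.
def fake_llm(context: List[str]) -> str:
    if len(context) == 1:
        return "Let me compute.\n" + FENCE + "tool_code\nprint(6 * 7)\n" + FENCE
    return "The answer is " + context[-1].strip() + "."


def fake_executor(code: str) -> str:
    return "42\n"  # pretends to run `code`; no real execution happens here


print(run_agent("What is 6 * 7?", fake_llm, fake_executor))  # prints The answer is 42.
```

Like the diagram, this loop has no iteration cap: it ends only when the model produces a sample without a tool code block.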

### Key Observations

* **Iterative Loop:** The most significant feature is the feedback loop from "Put execution result back in context" to "Sample from LLM". This indicates the system is designed for multi-step reasoning or tasks requiring tool use, where the LLM can chain actions.

* **Color Coding:** Colors appear to encode function: purple for input, blue for processing steps (both sampling from the LLM and feeding execution results back), pink for the decision, green/red for the binary outcomes, and orange for the final output.

* **Spatial Layout:** The main linear flow (Prompt -> Sample -> Decision -> Respond) runs left-to-right. The iterative "YES" path creates a vertical loop above this main line, visually emphasizing its cyclical nature.

### Interpretation

This flowchart models the architecture of a **tool-augmented or agentic LLM system**. It demonstrates how an LLM can be integrated into a larger computational loop.

* **Purpose:** The system is designed to handle complex queries that require external computation, data retrieval, or specific actions (via "tool code blocks") beyond pure text generation.

* **Mechanism:** Instead of producing an answer in a single generation step, the LLM operates in a **generate-check-execute loop**. It generates a sample, the system checks whether that sample contains an actionable command (a tool code block), executes the command if present, and feeds the result back into the context. This allows the LLM to break down problems, use tools, observe outcomes, and refine its approach iteratively.
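The "execute" step of this loop can be illustrated for Python tool blocks by running the code and capturing what it prints as the execution result. This is a sketch under stated assumptions: the `execute_tool_code` helper is hypothetical, it uses the built-in `exec` with no sandboxing (real systems typically isolate tool execution), and returning the traceback on failure is one possible design choice that lets the model observe its error and try again.

```python
import contextlib
import io
import traceback


def execute_tool_code(code: str) -> str:
    """Hypothetical helper: run a Python tool block, return its printed output or error.

    exec() runs the code in-process with no isolation; this is for illustration only.
    """
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):  # capture anything the block prints
            exec(code, {})                        # fresh globals dict for each block
    except Exception:
        # Return partial output plus the traceback so the model can see what failed.
        return buffer.getvalue() + traceback.format_exc()
    return buffer.getvalue()


print(execute_tool_code("print(sum(range(10)))"))      # prints 45
print(execute_tool_code("1 / 0").splitlines()[-1])    # prints ZeroDivisionError: division by zero
```

The returned string is what the flowchart's "Put execution result back in context" step would append before sampling again.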

* **Significance:** This pattern is fundamental to modern AI assistants that can browse the web, run code, or interact with APIs. The "NO" path represents the termination condition, where the LLM produces a final, tool-free response for the user. The diagram effectively captures the core logic of turning a static language model into a dynamic, problem-solving agent.