TECHNICAL ASSET FINGERPRINT

1dc17b0fdc3be8f46bb3e60f

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Llama-3-8B and Llama-3-70B Performance

### Overview

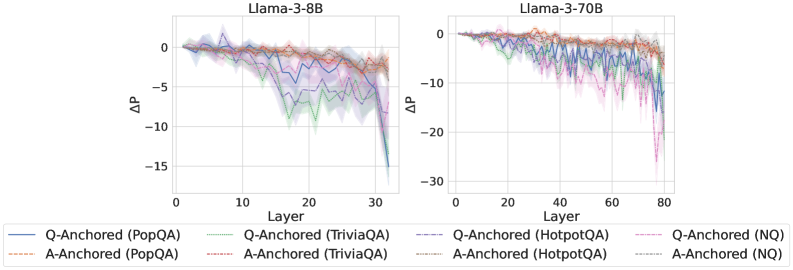

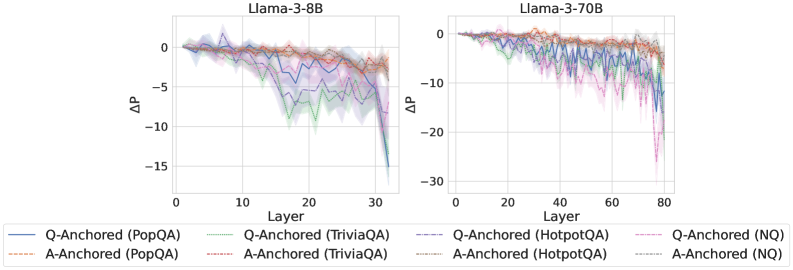

The image presents two line charts comparing the performance of the Llama-3-8B and Llama-3-70B models across layers. The y-axis represents ΔP (change in performance), and the x-axis represents the layer number. Each chart displays eight data series: Q-Anchored and A-Anchored results on each of the PopQA, TriviaQA, HotpotQA, and NQ datasets. The charts show how ΔP changes as the model processes information through its layers.
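The series count follows from the legend, which crosses two anchoring methods with four datasets. A minimal sketch that enumerates the combinations (the variable names are illustrative and not taken from the source):

```python
from itertools import product

# Anchoring methods and datasets as named in the chart legend.
anchors = ["Q-Anchored", "A-Anchored"]
datasets = ["PopQA", "TriviaQA", "HotpotQA", "NQ"]

# Each legend entry corresponds to one (anchor, dataset) pair.
series = [f"{anchor} ({dataset})" for anchor, dataset in product(anchors, datasets)]

for name in series:
    print(name)
print(len(series), "series per chart")
```

Two methods times four datasets gives eight entries, matching the eight lines in the legend.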

### Components/Axes

**Chart 1: Llama-3-8B**

* **Title:** Llama-3-8B

* **X-axis:** Layer

    * Scale: 0 to 30, incrementing by 10

* **Y-axis:** ΔP

    * Scale: -15 to 0, incrementing by 5

* **Legend:** Located at the bottom of the image, spanning both charts.

    * Q-Anchored (PopQA): Solid Blue Line

    * A-Anchored (PopQA): Dashed Brown Line

    * Q-Anchored (TriviaQA): Dotted Green Line

    * A-Anchored (TriviaQA): Dash-Dotted Brown Line

    * Q-Anchored (HotpotQA): Dash-Dot Blue Line

    * A-Anchored (HotpotQA): Dotted Brown Line

    * Q-Anchored (NQ): Dash-Dot Pink Line

    * A-Anchored (NQ): Dotted Brown Line

**Chart 2: Llama-3-70B**

* **Title:** Llama-3-70B

* **X-axis:** Layer

    * Scale: 0 to 80, incrementing by 20

* **Y-axis:** ΔP

    * Scale: -30 to 0, incrementing by 10

* **Legend:** (Same as Chart 1, located at the bottom of the image)
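The axis scales described above can be written out as explicit tick positions. This is a small sketch; the `ticks` helper and the `axes` dictionary are hypothetical names used only for illustration:

```python
def ticks(start, stop, step):
    """Return tick positions from start to stop, including both ends."""
    return list(range(start, stop + 1, step))

# Axis ranges and increments as stated for each chart.
axes = {
    "Llama-3-8B": {"x": ticks(0, 30, 10), "y": ticks(-15, 0, 5)},
    "Llama-3-70B": {"x": ticks(0, 80, 20), "y": ticks(-30, 0, 10)},
}

for model, axis in axes.items():
    print(model, "Layer ticks:", axis["x"], "ΔP ticks:", axis["y"])
```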

### Detailed Analysis

**Chart 1: Llama-3-8B**

* **Q-Anchored (PopQA):** (Solid Blue Line) Starts around 0, fluctuates slightly, then drops sharply to approximately -12 around layer 30.

* **A-Anchored (PopQA):** (Dashed Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 30.

* **Q-Anchored (TriviaQA):** (Dotted Green Line) Starts around 0, gradually decreases to approximately -8 around layer 30.

* **A-Anchored (TriviaQA):** (Dash-Dotted Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 30.

* **Q-Anchored (HotpotQA):** (Dash-Dot Blue Line) Starts around 0, fluctuates slightly, then drops sharply to approximately -12 around layer 30.

* **A-Anchored (HotpotQA):** (Dotted Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 30.

* **Q-Anchored (NQ):** (Dash-Dot Pink Line) Starts around 0, fluctuates slightly, then drops sharply to approximately -12 around layer 30.

* **A-Anchored (NQ):** (Dotted Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 30.

**Chart 2: Llama-3-70B**

* **Q-Anchored (PopQA):** (Solid Blue Line) Starts around 0, fluctuates slightly, then drops sharply to approximately -25 around layer 80.

* **A-Anchored (PopQA):** (Dashed Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 80.

* **Q-Anchored (TriviaQA):** (Dotted Green Line) Starts around 0, gradually decreases to approximately -10 around layer 80.

* **A-Anchored (TriviaQA):** (Dash-Dotted Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 80.

* **Q-Anchored (HotpotQA):** (Dash-Dot Blue Line) Starts around 0, fluctuates slightly, then drops sharply to approximately -25 around layer 80.

* **A-Anchored (HotpotQA):** (Dotted Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 80.

* **Q-Anchored (NQ):** (Dash-Dot Pink Line) Starts around 0, fluctuates slightly, then drops sharply to approximately -25 around layer 80.

* **A-Anchored (NQ):** (Dotted Brown Line) Starts around 0, gradually decreases to approximately -2 around layer 80.

### Key Observations

* For both models, the Q-Anchored data series (PopQA, HotpotQA, and NQ) show a more significant drop in performance (ΔP) as the layer number increases compared to the A-Anchored series.

* The Llama-3-70B model (right chart) exhibits a larger performance drop (ΔP) compared to the Llama-3-8B model (left chart).

* The A-Anchored data series (PopQA, TriviaQA, HotpotQA, and NQ) show a relatively stable performance across all layers for both models.

* The shaded regions around each line indicate the uncertainty or variance in the performance data.
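To put a rough number on the gap between the two anchoring methods, the approximate final-layer values from the Detailed Analysis can be averaged per model. This is a sketch under the assumption that those transcribed endpoints are representative; the `endpoints` structure and `anchor_gap` helper are illustrative names, not part of the source:

```python
from statistics import mean

# Approximate final-layer ΔP values as transcribed in the Detailed Analysis above.
endpoints = {
    "Llama-3-8B": {
        "Q-Anchored": {"PopQA": -12, "TriviaQA": -8, "HotpotQA": -12, "NQ": -12},
        "A-Anchored": {"PopQA": -2, "TriviaQA": -2, "HotpotQA": -2, "NQ": -2},
    },
    "Llama-3-70B": {
        "Q-Anchored": {"PopQA": -25, "TriviaQA": -10, "HotpotQA": -25, "NQ": -25},
        "A-Anchored": {"PopQA": -2, "TriviaQA": -2, "HotpotQA": -2, "NQ": -2},
    },
}

def anchor_gap(model):
    """Mean Q-Anchored endpoint minus mean A-Anchored endpoint for one model."""
    q_mean = mean(endpoints[model]["Q-Anchored"].values())
    a_mean = mean(endpoints[model]["A-Anchored"].values())
    return q_mean - a_mean

gaps = {model: anchor_gap(model) for model in endpoints}
for model, gap in gaps.items():
    print(f"{model}: mean Q-Anchored minus A-Anchored endpoint = {gap:.2f}")
```

With these values the gap is about -9 for Llama-3-8B and about -19 for Llama-3-70B, which agrees with the observation that the larger model shows the larger drop.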

### Interpretation

The charts suggest that as the Llama models process information through deeper layers, the performance on Q-Anchored tasks (PopQA, HotpotQA, NQ) degrades more significantly than on A-Anchored tasks. This could indicate that the model's ability to handle question-related information diminishes with increasing depth, while its ability to handle answer-related information remains relatively stable.

The larger performance drop in Llama-3-70B compared to Llama-3-8B could be attributed to the increased complexity and depth of the larger model. While larger models often have greater capacity, they may also be more susceptible to issues like vanishing gradients or overfitting, leading to performance degradation in deeper layers.

The relatively stable performance of A-Anchored tasks suggests that the model maintains a consistent understanding or representation of answer-related information throughout its layers. This could be due to the nature of the tasks or the way the model is trained.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: ΔP vs. Layer for Llama-3 Models

### Overview

The image presents two line charts comparing the change in performance (ΔP) across layers for two Llama-3 models: Llama-3-8B and Llama-3-70B. The x-axis represents the layer number, and the y-axis represents ΔP. Each chart displays multiple lines, each representing a different question-answering dataset and anchoring method.

### Components/Axes

* **Title (Left Chart):** Llama-3-8B

* **Title (Right Chart):** Llama-3-70B

* **X-axis Label (Both Charts):** Layer

* **Y-axis Label (Both Charts):** ΔP

* **X-axis Scale (Left Chart):** 0 to 30

* **X-axis Scale (Right Chart):** 0 to 80

* **Y-axis Scale (Both Charts):** -30 to 0

* **Legend (Bottom-Center):**

    * Q-Anchored (PopQA) - Blue Line

    * A-Anchored (PopQA) - Orange Dotted Line

    * Q-Anchored (TriviaQA) - Green Line

    * A-Anchored (TriviaQA) - Purple Dotted Line

    * Q-Anchored (HotpotQA) - Brown Dotted Line

    * A-Anchored (HotpotQA) - Pink Dotted Line

    * Q-Anchored (NQ) - Teal Line

    * A-Anchored (NQ) - Red Dotted Line

### Detailed Analysis or Content Details

**Llama-3-8B Chart:**

* **Q-Anchored (PopQA):** The blue line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -11 at Layer 30.

* **A-Anchored (PopQA):** The orange dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -8 at Layer 30.

* **Q-Anchored (TriviaQA):** The green line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -9 at Layer 30.

* **A-Anchored (TriviaQA):** The purple dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -7 at Layer 30.

* **Q-Anchored (HotpotQA):** The brown dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -8 at Layer 30.

* **A-Anchored (HotpotQA):** The pink dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -7 at Layer 30.

* **Q-Anchored (NQ):** The teal line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -10 at Layer 30.

* **A-Anchored (NQ):** The red dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 15, then declines steadily to approximately ΔP = -9 at Layer 30.

**Llama-3-70B Chart:**

* **Q-Anchored (PopQA):** The blue line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -12 at Layer 80.

* **A-Anchored (PopQA):** The orange dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -10 at Layer 80.

* **Q-Anchored (TriviaQA):** The green line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -13 at Layer 80.

* **A-Anchored (TriviaQA):** The purple dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -11 at Layer 80.

* **Q-Anchored (HotpotQA):** The brown dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -12 at Layer 80.

* **A-Anchored (HotpotQA):** The pink dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -10 at Layer 80.

* **Q-Anchored (NQ):** The teal line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -15 at Layer 80.

* **A-Anchored (NQ):** The red dotted line starts at approximately ΔP = 0 at Layer 0, remains relatively stable around ΔP = -1 to -2 from Layer 0 to Layer 50, then declines more rapidly to approximately ΔP = -13 at Layer 80.

### Key Observations

* In both charts, all lines generally exhibit a downward trend, indicating a decrease in ΔP as the layer number increases.

* The Llama-3-70B model shows a more pronounced decline in ΔP compared to the Llama-3-8B model, especially in the later layers.

* The Q-Anchored lines generally have lower ΔP values than the A-Anchored lines for the same dataset.

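* The NQ dataset shows the lowest ΔP values in the Llama-3-70B chart (approximately -15 for Q-Anchored); in the Llama-3-8B chart, the lowest endpoint listed above is Q-Anchored (PopQA) at approximately -11.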
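These observations can be checked against the endpoint values listed in the Detailed Analysis. The sketch below assumes those approximate final-layer readings; the dictionary layout and variable names are illustrative only:

```python
# Approximate final-layer ΔP values as transcribed in the Detailed Analysis above.
# Keys are (anchoring, dataset); "Q" = Q-Anchored, "A" = A-Anchored.
endpoints = {
    "Llama-3-8B": {
        ("Q", "PopQA"): -11, ("A", "PopQA"): -8,
        ("Q", "TriviaQA"): -9, ("A", "TriviaQA"): -7,
        ("Q", "HotpotQA"): -8, ("A", "HotpotQA"): -7,
        ("Q", "NQ"): -10, ("A", "NQ"): -9,
    },
    "Llama-3-70B": {
        ("Q", "PopQA"): -12, ("A", "PopQA"): -10,
        ("Q", "TriviaQA"): -13, ("A", "TriviaQA"): -11,
        ("Q", "HotpotQA"): -12, ("A", "HotpotQA"): -10,
        ("Q", "NQ"): -15, ("A", "NQ"): -13,
    },
}
datasets = ["PopQA", "TriviaQA", "HotpotQA", "NQ"]

lowest = {}      # series with the most negative endpoint, per model
q_below_a = {}   # whether Q-Anchored ends below A-Anchored on every dataset
for model, values in endpoints.items():
    lowest[model] = min(values, key=values.get)
    q_below_a[model] = all(values[("Q", d)] < values[("A", d)] for d in datasets)
    print(model, "lowest:", lowest[model], "| Q below A everywhere:", q_below_a[model])
```

On these readings, Q-Anchored ends below A-Anchored for every dataset in both charts. The lowest endpoint is Q-Anchored (NQ) in the 70B chart but Q-Anchored (PopQA) in the 8B chart.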

### Interpretation

The charts demonstrate how performance changes across layers in the Llama-3 models when evaluated on different question-answering datasets using question-anchored (Q-Anchored) and answer-anchored (A-Anchored) methods. The negative ΔP values suggest a degradation in performance as the model progresses through its layers.

The steeper decline in ΔP for the Llama-3-70B model indicates that the larger model may be more susceptible to performance degradation in deeper layers. The difference between Q-Anchored and A-Anchored lines suggests that the method of anchoring the questions or answers impacts performance, with question-anchoring generally leading to lower ΔP values. The low ΔP values on the NQ dataset, most visible in the 70B chart, suggest that this dataset presents a greater challenge for the models.

The overall trend suggests that while the models perform well in the initial layers, their performance deteriorates as they process information through deeper layers. This could be due to issues like vanishing gradients or the accumulation of errors during processing. Further investigation is needed to understand the underlying causes of this performance degradation and to develop strategies for mitigating it.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Layer-wise Performance Delta (ΔP) Comparison Charts

### Overview

The image displays two side-by-side line charts comparing the layer-wise change in performance (ΔP) for two different Llama-3 language models (8B and 70B parameters). The charts track this metric across the models' layers for eight different evaluation scenarios, defined by a combination of an anchoring method (Q-Anchored or A-Anchored) and a dataset (PopQA, TriviaQA, HotpotQA, NQ).

### Components/Axes

* **Titles:** The left chart is titled "Llama-3-8B". The right chart is titled "Llama-3-70B".

* **X-Axis (Both Charts):** Labeled "Layer". It represents the sequential layers of the neural network.

    * Llama-3-8B: Scale runs from 0 to 30, with major ticks at 0, 10, 20, 30.

    * Llama-3-70B: Scale runs from 0 to 80, with major ticks at 0, 20, 40, 60, 80.

* **Y-Axis (Both Charts):** Labeled "ΔP". This represents a change in a performance metric (likely probability or accuracy delta). Negative values indicate a decrease.

    * Llama-3-8B: Scale runs from -15 to 0, with major ticks at -15, -10, -5, 0.

    * Llama-3-70B: Scale runs from -30 to 0, with major ticks at -30, -20, -10, 0.

* **Legend:** Positioned at the bottom of the image, spanning both charts. It defines eight data series:

    1. `Q-Anchored (PopQA)`: Solid blue line.

    2. `Q-Anchored (TriviaQA)`: Solid green line.

    3. `Q-Anchored (HotpotQA)`: Dashed blue line.

    4. `Q-Anchored (NQ)`: Dashed magenta/pink line.

    5. `A-Anchored (PopQA)`: Dashed orange line.

    6. `A-Anchored (TriviaQA)`: Dashed red line.

    7. `A-Anchored (HotpotQA)`: Dotted green line.

    8. `A-Anchored (NQ)`: Dotted cyan/light blue line.

### Detailed Analysis

**Llama-3-8B Chart (Left):**

* **General Trend:** All lines start near ΔP = 0 at layer 0. Most lines show a general downward trend as layers increase, with increased volatility in later layers (20-30).

* **Q-Anchored Series (Solid/Dashed lines):** These show the most significant negative ΔP.

    * `Q-Anchored (TriviaQA)` (solid green) and `Q-Anchored (NQ)` (dashed magenta) exhibit the steepest declines, dropping sharply after layer 20 to reach approximately ΔP = -15 by layer 30.

    * `Q-Anchored (PopQA)` (solid blue) and `Q-Anchored (HotpotQA)` (dashed blue) also decline significantly, reaching approximately ΔP = -10 to -12 by layer 30.

* **A-Anchored Series (Dashed/Dotted lines):** These lines remain much closer to zero.

    * `A-Anchored (PopQA)` (dashed orange) and `A-Anchored (TriviaQA)` (dashed red) show a slight, gradual decline, staying above ΔP = -5.

    * `A-Anchored (HotpotQA)` (dotted green) and `A-Anchored (NQ)` (dotted cyan) are the most stable, fluctuating near ΔP = 0 throughout all layers.

**Llama-3-70B Chart (Right):**

* **General Trend:** Similar starting point at ΔP ≈ 0. The decline for some series begins earlier (around layer 20) and is more pronounced, with a very sharp drop in the final layers (70-80).

* **Q-Anchored Series:**

    * `Q-Anchored (NQ)` (dashed magenta) shows the most extreme behavior, plummeting after layer 60 to a low of approximately ΔP = -25 to -30 by layer 80.

    * `Q-Anchored (TriviaQA)` (solid green) and `Q-Anchored (HotpotQA)` (dashed blue) also experience a severe late drop, reaching approximately ΔP = -15 to -20.

    * `Q-Anchored (PopQA)` (solid blue) declines steadily but less severely, ending near ΔP = -10.

* **A-Anchored Series:**

    * `A-Anchored (TriviaQA)` (dashed red) and `A-Anchored (PopQA)` (dashed orange) show a moderate, noisy decline, ending between ΔP = -5 and -10.

    * `A-Anchored (HotpotQA)` (dotted green) and `A-Anchored (NQ)` (dotted cyan) again remain the most stable, hovering near or slightly below ΔP = 0.

### Key Observations

1. **Anchoring Method Dominance:** The most striking pattern is the consistent, significant difference between **Q-Anchored** and **A-Anchored** methods. A-Anchored lines are consistently more stable (closer to ΔP=0) across all datasets and both models.

2. **Dataset Difficulty:** Within the Q-Anchored group, the **NQ** and **TriviaQA** datasets consistently show the largest negative ΔP, suggesting they are more challenging for this anchoring method. **PopQA** appears to be the least challenging for Q-Anchored methods.

3. **Model Scale Effect:** The larger model (Llama-3-70B) exhibits more extreme behavior. The negative ΔP for difficult cases (Q-Anchored on NQ/TriviaQA) is much larger in magnitude (-30 vs -15) and the drop is concentrated in the very final layers.

4. **Late-Layer Collapse:** Both models, but especially the 70B, show a dramatic acceleration in performance drop (negative ΔP) in the last ~10 layers for the Q-Anchored scenarios.

5. **Stability of Certain Configurations:** The A-Anchored configurations on HotpotQA and NQ are remarkably flat, indicating that for these datasets, the performance metric (ΔP) is largely unaffected by the layer when using answer-anchoring.
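One way to quantify the "late-layer collapse" described above is the share of the total ΔP change that falls in the final layers. The sketch below uses a hypothetical curve constructed for illustration; it is not read from the figure, and `late_drop_fraction` is an assumed helper name:

```python
def late_drop_fraction(delta_p, tail):
    """Fraction of the total change in ΔP that occurs in the last `tail` layers."""
    total = delta_p[-1] - delta_p[0]
    if total == 0:
        return 0.0
    late = delta_p[-1] - delta_p[-1 - tail]
    return late / total

# Hypothetical 81-point curve (layers 0..80), NOT taken from the figure:
# a slow decline to -6 by layer 70, then a sharp fall to -28 by layer 80.
curve = [-6 * i / 70 for i in range(71)] + [-6 - 22 * j / 10 for j in range(1, 11)]

fraction = late_drop_fraction(curve, tail=10)
print(f"Share of total drop in the last 10 layers: {fraction:.2f}")
```

A value near 1 means almost all of the decline is concentrated in the tail, while a value near `tail / (len(curve) - 1)` would indicate a roughly uniform decline across layers.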

### Interpretation

These charts visualize how the internal processing of a Large Language Model (LLM) affects its performance on different knowledge-intensive QA tasks, depending on whether the model's attention is "anchored" to the question (Q) or the answer (A).

* **What the data suggests:** The consistent negative ΔP for Q-Anchored methods implies that as information propagates through the network layers, the model's confidence or accuracy on the correct answer *decreases* when it is forced to attend primarily to the question. This could indicate a form of "detrimental refinement" or interference in deeper layers for these tasks.

* **Why A-Anchoring is stable:** Anchoring to the answer (A-Anchored) likely provides a stronger, more consistent signal that preserves the correct information pathway through the network, preventing the degradation seen with question-anchoring.

* **The "Late-Layer Collapse" phenomenon:** The sharp drop in the final layers of the 70B model for hard Q-Anchored tasks is particularly notable. It suggests that the final processing stages in very large models might be highly specialized or sensitive, and when given a potentially weaker signal (question-only anchoring), they can dramatically amplify errors or uncertainties.

* **Practical Implication:** The results argue for the importance of **answer-aware or answer-anchored mechanisms** in the architecture or prompting of LLMs for knowledge-intensive tasks, as they appear to provide a more robust signal that maintains performance across the model's depth. The vulnerability of Q-Anchored methods, especially in large models, highlights a potential failure mode to be aware of in interpretability and steering research.

**Language Note:** All text in the image is in English.

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Llama-3-8B and Llama-3-70B Performance Trends

### Overview

The image contains two line graphs comparing performance degradation (ΔP) across transformer layers for two Llama-3 model variants (8B and 70B parameters). Each graph tracks multiple data series representing different question-answering datasets (PopQA, TriviaQA, HotpotQA, NQ) and anchoring strategies (Q-Anchored vs. A-Anchored). The graphs show layer-wise performance shifts, with ΔP values plotted against layer indices.

### Components/Axes

- **X-axis (Layer)**:

    - Left graph: 0–30 (Llama-3-8B)

    - Right graph: 0–80 (Llama-3-70B)

- **Y-axis (ΔP)**:

    - Left graph: -15 to 0

    - Right graph: -30 to 0

- **Legend**:

    - Colors/styles map to:

        - Q-Anchored (PopQA): Solid blue

        - Q-Anchored (TriviaQA): Dashed green

        - Q-Anchored (HotpotQA): Dotted purple

        - Q-Anchored (NQ): Dash-dot pink

        - A-Anchored (PopQA): Solid red

        - A-Anchored (TriviaQA): Dashed orange

        - A-Anchored (HotpotQA): Dotted brown

        - A-Anchored (NQ): Dash-dot gray

- **Placement**: The legend sits at the bottom of the image; the Llama-3-8B graph is on the left and the Llama-3-70B graph is on the right.

### Detailed Analysis

#### Llama-3-8B (Left Graph)

- **Trends**:

    - All lines start near ΔP=0 at layer 0.

    - Gradual decline to ΔP≈-10 by layer 30, with oscillations.

    - Q-Anchored (HotpotQA) shows steepest drop (-12 to -15 range).

    - A-Anchored (NQ) remains closest to 0 (-2 to -4 range).

- **Key Data Points**:

    - Q-Anchored (PopQA): 0 → -8 (layer 30)

    - A-Anchored (HotpotQA): 0 → -5 (layer 30)

#### Llama-3-70B (Right Graph)

- **Trends**:

    - Steeper overall decline than 8B variant.

    - ΔP reaches -25 to -30 in later layers (60–80).

    - Q-Anchored (HotpotQA) drops most sharply (-30 at layer 80).

    - A-Anchored (NQ) stabilizes at ΔP≈-10.

- **Key Data Points**:

    - Q-Anchored (PopQA): 0 → -20 (layer 80)

    - A-Anchored (HotpotQA): 0 → -25 (layer 80)

### Key Observations

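1. **Model Size Impact**: Larger model (70B) exhibits roughly 2–5× greater ΔP magnitude than 8B in the values reported above (for example, -20 vs. -8 for Q-Anchored PopQA and -25 vs. -5 for A-Anchored HotpotQA).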

2. **Anchoring Strategy**: Q-Anchored consistently underperforms A-Anchored, with HotpotQA showing the largest gap (ΔP difference: -10 to -15 in 70B).

3. **Dataset Sensitivity**: HotpotQA induces the steepest performance degradation, followed by TriviaQA and NQ.

4. **Layer Dynamics**: Performance degradation accelerates in deeper layers (layers 40–80 in 70B).
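The model-size effect can be sanity-checked from the key data points reported for each graph. This is a sketch that assumes those final-layer readings are accurate; `key_points` and `ratios` are illustrative names:

```python
# Final-layer ΔP values from the "Key Data Points" lists above.
key_points = {
    "Q-Anchored (PopQA)": {"8B": -8, "70B": -20},
    "A-Anchored (HotpotQA)": {"8B": -5, "70B": -25},
}

# Ratio of 70B magnitude to 8B magnitude for each reported series.
ratios = {name: values["70B"] / values["8B"] for name, values in key_points.items()}

for name, ratio in ratios.items():
    print(f"{name}: 70B drop is {ratio:.1f}x the 8B drop")
```

On these two series the ratio is 2.5 for Q-Anchored (PopQA) and 5.0 for A-Anchored (HotpotQA), so the multiplier varies noticeably between series.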

### Interpretation

The data demonstrates that:

- **Model Scale Amplifies Degradation**: Larger models (70B) suffer more severe performance drops, particularly in complex reasoning tasks (HotpotQA).

- **Anchoring Matters**: A-Anchored models mitigate degradation better, suggesting architectural choices influence robustness.

- **Task Complexity Correlation**: HotpotQA’s steep decline implies it relies more heavily on early-layer representations, which degrade faster in deeper models.

- **Stability in NQ**: Minimal ΔP for NQ suggests it depends less on layer-specific features, possibly relying on surface-level patterns.

This analysis highlights trade-offs between model scale, architectural design, and task-specific performance in transformer-based QA systems.
