## Chart: Comparison of TS timestep and TS episode agents with informed and uninformed priors

### Overview

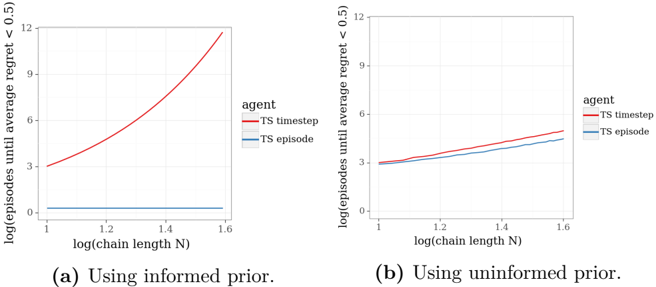

The image presents two line charts comparing two agents, "TS timestep" and "TS episode," under two conditions: an informed prior (left chart) and an uninformed prior (right chart). The y-axis shows the logarithm of the number of episodes needed before average regret falls below 0.5, so lower values indicate faster learning. The x-axis shows the logarithm of the chain length N.
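The y-axis metric can be made concrete with a small sketch. The chart does not say how "average regret" is computed, so the code below assumes it is the running mean of per-episode regret; the function name and the regret trace are hypothetical and only illustrate the idea. The chart plots the logarithm of this count, with the base left unspecified.

```python
from typing import Optional, Sequence


def episodes_until_average_regret_below(
    per_episode_regret: Sequence[float], threshold: float = 0.5
) -> Optional[int]:
    """Return the first episode count k at which the running mean regret
    over episodes 1..k is strictly below `threshold`, or None if it never is.

    Assumption: "average regret" means cumulative regret divided by the
    number of episodes so far. The chart itself does not define it.
    """
    cumulative = 0.0
    for k, regret in enumerate(per_episode_regret, start=1):
        cumulative += regret
        if cumulative / k < threshold:
            return k
    return None


# Hypothetical trace: regret 1.0 for four episodes, then 0.0 afterwards.
trace = [1.0, 1.0, 1.0, 1.0] + [0.0] * 10
first_k = episodes_until_average_regret_below(trace)
print(first_k)  # → 9 (the running mean is exactly 0.5 at k=8, then 4/9 at k=9)
```

A lower count means the agent reaches low average regret sooner, which is why curves closer to the bottom of each chart represent better performance.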

### Components/Axes

* **Title (Left Chart):** (a) Using informed prior.

* **Title (Right Chart):** (b) Using uninformed prior.

* **Y-axis Label (Both Charts):** log(episodes until average regret < 0.5)

    * Scale: 0 to 12, with tick marks at 0, 3, 6, 9, and 12.

* **X-axis Label (Both Charts):** log(chain length N)

    * Scale: 1 to 1.6, with tick marks at 1, 1.2, 1.4, and 1.6.

* **Legend (Both Charts, Top Right):**

    * TS timestep (Red line)

    * TS episode (Blue line)

### Detailed Analysis

**Left Chart (Informed Prior):**

* **TS timestep (Red):** The line starts at approximately y=3 when x=1 and rises steeply to approximately y=11.5 when x=1.6.

* **TS episode (Blue):** The line remains relatively flat, staying close to y=0.2 across the entire x-axis range (x=1 to x=1.6).

**Right Chart (Uninformed Prior):**

* **TS timestep (Red):** The line starts at approximately y=3 when x=1 and increases gradually to approximately y=5.5 when x=1.6.

* **TS episode (Blue):** The line starts at approximately y=3 when x=1 and increases gradually to approximately y=5 when x=1.6.
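To compare how quickly each curve grows, the approximate endpoint readings listed above can be turned into average slopes. This is a rough sketch, not a fit to the underlying data: the values are eyeballed from the chart, and the variable names are illustrative. If both axes use the same logarithm base, a straight line of slope k on these axes corresponds to the episode count growing like N^k, while a curve that bends upward indicates faster-than-polynomial growth. Two endpoints alone cannot distinguish these cases.

```python
# Approximate (x, y) readings at the left and right ends of each curve,
# taken from the description above. These are eyeballed, not exact data.
readings = {
    ("informed", "TS timestep"): ((1.0, 3.0), (1.6, 11.5)),
    ("informed", "TS episode"): ((1.0, 0.2), (1.6, 0.2)),
    ("uninformed", "TS timestep"): ((1.0, 3.0), (1.6, 5.5)),
    ("uninformed", "TS episode"): ((1.0, 3.0), (1.6, 5.0)),
}


def average_slope(start, end):
    """Average rise over run between two (x, y) points on the log-log axes."""
    (x0, y0), (x1, y1) = start, end
    return (y1 - y0) / (x1 - x0)


slopes = {key: average_slope(*points) for key, points in readings.items()}

for (prior, agent), slope in slopes.items():
    print(f"{prior:>10} | {agent:<11} | average slope ~ {slope:.2f}")
```

With these readings, the average slopes come out to roughly 14.2 for "TS timestep" with the informed prior, 0.0 for "TS episode" with the informed prior, and about 4.2 and 3.3 for the two agents with the uninformed prior.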

### Key Observations

* With an informed prior, the "TS timestep" agent needs far more episodes to reach an average regret below 0.5 as the chain length grows (y rises from about 3 to about 11.5), while the "TS episode" agent stays nearly constant at about y=0.2.

* With an uninformed prior, both agents show a gradual increase in the number of episodes required as the chain length grows, with the "TS timestep" agent ending slightly higher (about y=5.5) than the "TS episode" agent (about y=5).

* The gap between the two agents is much larger with an informed prior (about 11 log units at x=1.6) than with an uninformed prior (about 0.5 log units at x=1.6).

### Interpretation

The data suggest that the prior strongly affects how the "TS timestep" agent scales with chain length. With an informed prior, its log-episode count climbs from about 3 to about 11.5 across the plotted range, while the "TS episode" agent stays near 0.2 regardless of chain length.

With an uninformed prior, both agents scale more gradually and almost identically, reaching about y=5.5 ("TS timestep") and y=5 ("TS episode") at x=1.6. The small remaining gap indicates that the timestep approach may be somewhat less efficient even without prior knowledge. Comparing the two charts, the informed prior sharply reduces the episodes needed by the "TS episode" agent (from roughly 3 to 5 down to about 0.2) but does not help the "TS timestep" agent, which starts at the same value in both charts and ends higher with the informed prior.

For the "TS timestep" agent, the informed prior is associated with worse scaling than either the uninformed prior or the "TS episode" agent. One possible explanation is that the informed prior is misaligned with the true underlying distribution, causing the "TS timestep" agent to explore suboptimal actions. However, the "TS episode" agent performs well under the same informed prior, so the charts alone do not establish that the prior itself is the cause.