## Line Chart: Performance vs. Context Length for Different Frameworks

### Overview

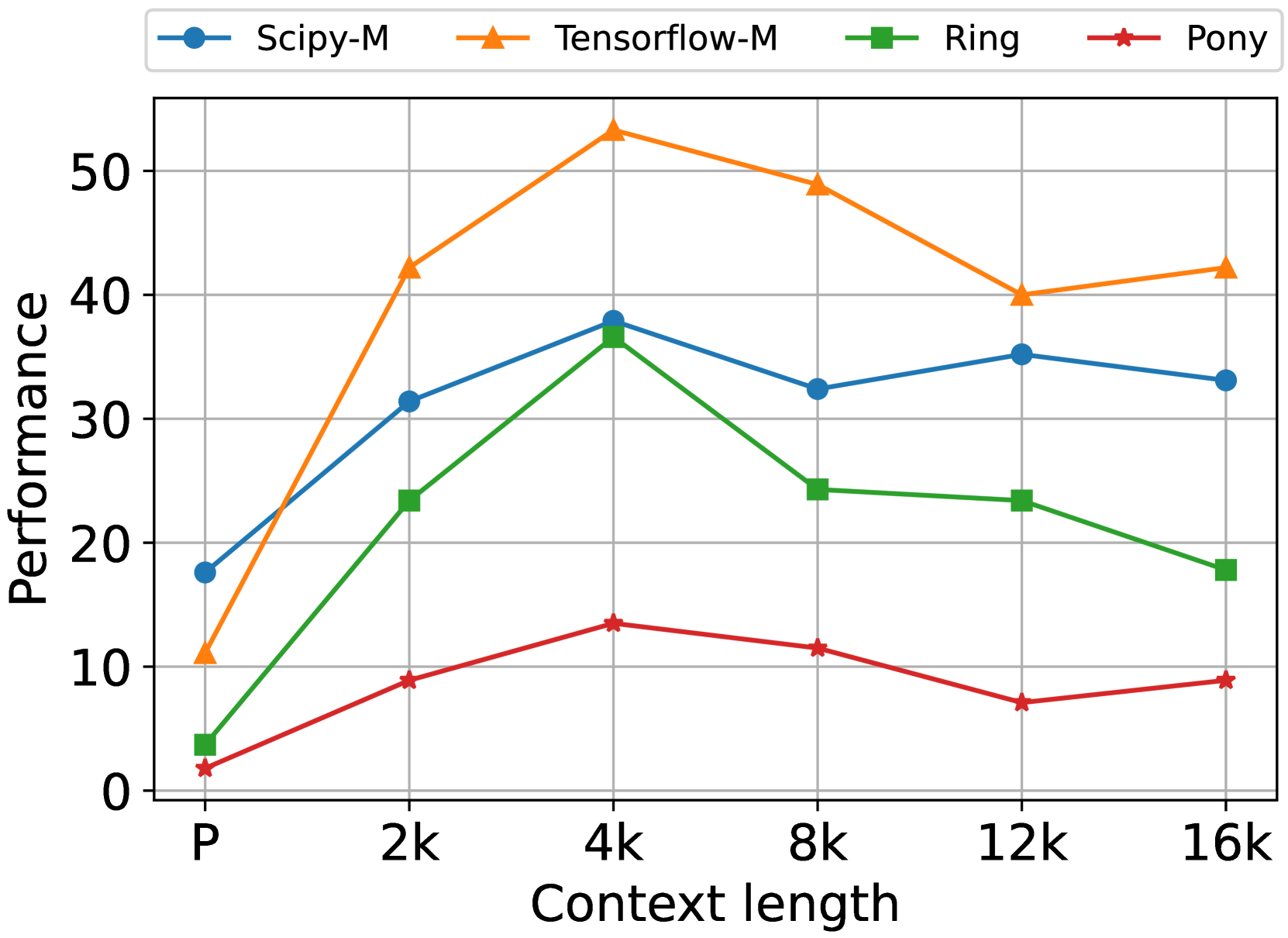

The image is a line chart comparing the performance of four frameworks (Scipy-M, Tensorflow-M, Ring, and Pony) across different context lengths. The x-axis represents context length, and the y-axis represents performance.

### Components/Axes

* **Title:** (None visible)

* **X-axis:**
    * Label: "Context length"
    * Scale: Categorical/Numerical with values "P", "2k", "4k", "8k", "12k", "16k". The "k" values likely represent context lengths in thousands (e.g., 2k = 2,000).

* **Y-axis:**
    * Label: "Performance"
    * Scale: Numerical, with gridlines at intervals of 10 labeled from 0 to 50. The highest reading (~53, Tensorflow-M at 4k) lies slightly above the top gridline.

* **Legend:** Located at the top of the chart.
    * Scipy-M (Blue line with circle markers)
    * Tensorflow-M (Orange line with triangle markers)
    * Ring (Green line with square markers)
    * Pony (Red line with star markers)

### Detailed Analysis

* **Scipy-M (Blue):**
    * Trend: Increases to a peak at 4k, dips at 8k, then stays roughly flat (between ~33 and ~35) through 16k.
    * Data Points:
        * P: ~18
        * 2k: ~32
        * 4k: ~38
        * 8k: ~33
        * 12k: ~35
        * 16k: ~33

* **Tensorflow-M (Orange):**
    * Trend: Increases sharply to a peak at 4k, decreases through 12k, then increases slightly at 16k.
    * Data Points:
        * P: ~11
        * 2k: ~42
        * 4k: ~53
        * 8k: ~49
        * 12k: ~40
        * 16k: ~42

* **Ring (Green):**
    * Trend: Increases sharply to a peak at 4k, drops sharply at 8k, then continues to decline more gradually through 16k.
    * Data Points:
        * P: ~4
        * 2k: ~24
        * 4k: ~38
        * 8k: ~25
        * 12k: ~23
        * 16k: ~18

* **Pony (Red):**
    * Trend: Increases to a peak at 4k, decreases through 12k, then ticks up slightly at 16k.
    * Data Points:
        * P: ~2
        * 2k: ~9
        * 4k: ~14
        * 8k: ~11
        * 12k: ~7
        * 16k: ~9

### Key Observations

* Tensorflow-M achieves the highest peak performance at a context length of 4k.

* Pony consistently shows the lowest performance across all context lengths.

* All four frameworks, including Pony, reach their peak performance at a context length of 4k.

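* Scipy-M is relatively stable at higher context lengths (8k-16k), varying only between ~33 and ~35. Tensorflow-M is less stable over the same range: it drops from ~49 at 8k to ~40 at 12k before recovering slightly to ~42 at 16k.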

* Ring's performance falls by more than half after its peak, from ~38 at 4k to ~18 at 16k.
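
The observations above can be checked against the approximate readings listed in the Detailed Analysis. The following is a minimal sketch, assuming those readings are transcribed as plain Python lists; the names `CONTEXTS`, `READINGS`, `peak_context`, `late_spread`, and `lowest_everywhere` are illustrative and not part of any framework shown in the chart. Because the values are visual estimates, the results are only as reliable as the readings themselves.

```python
# Approximate readings transcribed from the Detailed Analysis (visual estimates).
CONTEXTS = ["P", "2k", "4k", "8k", "12k", "16k"]
READINGS = {
    "Scipy-M":      [18, 32, 38, 33, 35, 33],
    "Tensorflow-M": [11, 42, 53, 49, 40, 42],
    "Ring":         [4, 24, 38, 25, 23, 18],
    "Pony":         [2, 9, 14, 11, 7, 9],
}


def peak_context(values):
    """Return the context label at which a series reaches its maximum."""
    return CONTEXTS[values.index(max(values))]


def late_spread(values, start="8k"):
    """Return max minus min over the readings from `start` through 16k."""
    tail = values[CONTEXTS.index(start):]
    return max(tail) - min(tail)


def lowest_everywhere(name):
    """Return True if `name` has the strictly lowest reading at every context."""
    others = [vals for key, vals in READINGS.items() if key != name]
    return all(
        READINGS[name][i] < min(series[i] for series in others)
        for i in range(len(CONTEXTS))
    )


peaks = {name: peak_context(vals) for name, vals in READINGS.items()}
spreads = {name: late_spread(vals) for name, vals in READINGS.items()}

for name in READINGS:
    print(f"{name}: peak at {peaks[name]}, 8k-16k spread {spreads[name]}")
print("Pony lowest at every context length:", lowest_everywhere("Pony"))
```

The 8k-16k spread is a simple stand-in for "stability" at higher context lengths: a smaller spread means a flatter tail of the curve.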

### Interpretation

The chart illustrates how the performance of different frameworks varies with context length. All four frameworks peak at 4k, which suggests that 4k is the best-performing context length among those tested. After the peak, Scipy-M holds roughly steady (between ~33 and ~35 from 8k to 16k), whereas Ring loses more than half of its peak performance by 16k. Pony is the lowest at every context length, so its weakness does not appear to be specific to longer contexts. The decline observed in Ring at higher context lengths could be due to increased computational overhead or memory limitations, although the chart alone does not establish a cause. Tensorflow-M covers the widest range overall, rising from ~11 at P to ~53 at 4k and then dropping to ~40 by 12k.