## Violin Plot Array: Causal Effect Analysis Across Fairness Scenarios

### Overview

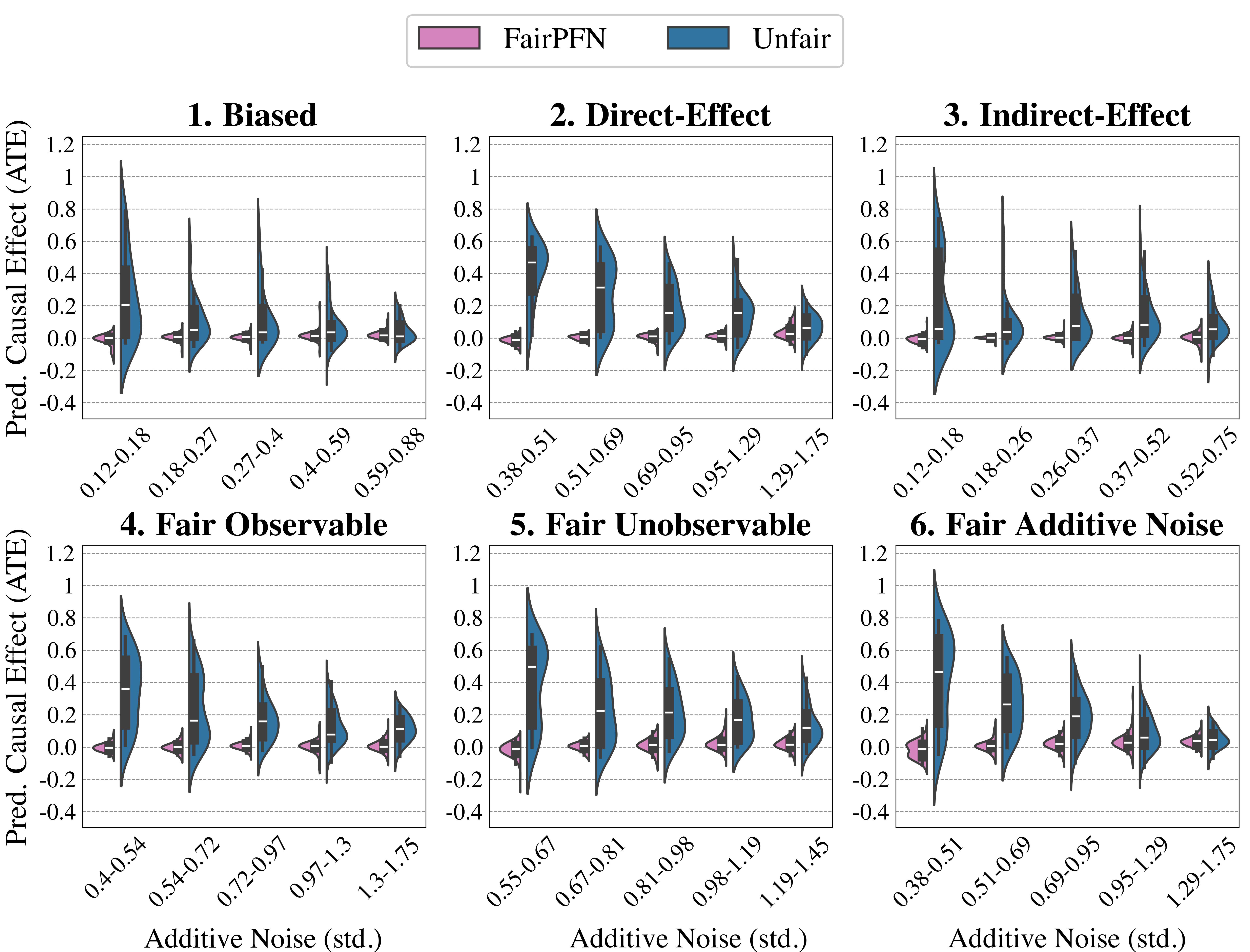

The figure shows six violin plots in a 2x3 grid, one per fairness scenario, comparing the distribution of predicted causal effects (ATE) for the "FairPFN" (pink) and "Unfair" (blue) models. In each panel, the x-axis is the additive noise level (standard deviation) and the y-axis is the predicted causal effect.

### Components/Axes

- **Legend**: Top-center, labeled "FairPFN" (pink) and "Unfair" (blue)

- **Y-axis**: "Pred. Causal Effect (ATE)" ranging from -0.4 to 1.2

- **X-axis**: "Additive Noise (std.)" with scenario-specific ranges:

  1. Biased: 0.12–0.88

  2. Direct-Effect: 0.38–1.75

  3. Indirect-Effect: 0.12–0.75

  4. Fair Observable: 0.4–1.75

  5. Fair Unobservable: 0.55–1.45

  6. Fair Additive Noise: 0.38–1.75
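The layout described above (a 2x3 grid, one panel per scenario, two violins per noise level) could be reproduced roughly as follows. This is only a sketch of the plot structure, assuming matplotlib is available. The samples are synthetic Gaussian placeholders, and the noise levels, centers, spreads, and colors are illustrative assumptions, not values taken from the figure's underlying data. The function name `draw_violin_grid` is hypothetical.

```python
import random

import matplotlib

matplotlib.use("Agg")  # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt
from matplotlib.patches import Patch

SCENARIOS = [
    "Biased", "Direct-Effect", "Indirect-Effect",
    "Fair Observable", "Fair Unobservable", "Fair Additive Noise",
]

# (label, color, placeholder center, placeholder spread, x-offset within a group)
MODELS = [
    ("FairPFN", "pink", 0.2, 0.08, -0.1),
    ("Unfair", "tab:blue", 0.5, 0.15, 0.1),
]


def draw_violin_grid(seed=0, n_samples=200):
    """Draw a 2x3 grid of paired violins using SYNTHETIC placeholder samples."""
    rng = random.Random(seed)
    noise_levels = [0.5, 1.0, 1.5]  # placeholder x positions, not the real ticks
    fig, axes = plt.subplots(2, 3, figsize=(12, 6), sharey=True)
    for ax, name in zip(axes.flat, SCENARIOS):
        for _label, color, center, spread, offset in MODELS:
            # One synthetic sample set per noise level.
            data = [
                [rng.gauss(center, spread) for _ in range(n_samples)]
                for _ in noise_levels
            ]
            positions = [x + offset for x in noise_levels]
            parts = ax.violinplot(
                data, positions=positions, widths=0.18, showmedians=True
            )
            for body in parts["bodies"]:
                body.set_facecolor(color)
                body.set_alpha(0.7)
        ax.set_title(name)
        ax.set_xlabel("Additive Noise (std.)")
        ax.set_ylim(-0.4, 1.2)  # y-range stated in the axis description
    for row in axes:
        row[0].set_ylabel("Pred. Causal Effect (ATE)")
    handles = [Patch(facecolor=color, label=label) for label, color, *_ in MODELS]
    fig.legend(handles=handles, loc="upper center", ncol=2)
    return fig, axes


if __name__ == "__main__":
    fig, _ = draw_violin_grid()
    fig.savefig("violin_grid_sketch.png", dpi=150)
```

Replacing the placeholder samples with real per-noise-level ATE estimates would be the only change needed to adapt the sketch to actual data.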

### Detailed Analysis

1. **Biased Scenario**

- **Trend**: FairPFN (pink) shows narrower distributions centered near 0.2–0.4 ATE, while Unfair (blue) spans wider ranges (0.0–0.8).

- **Key Data**: Median ATE for FairPFN ≈ 0.3; Unfair ≈ 0.5.

2. **Direct-Effect Scenario**

- **Trend**: Unfair distributions are taller and extend toward higher values (up to 0.8 ATE), while FairPFN remains concentrated below 0.4.

- **Key Data**: FairPFN median ≈ 0.2; Unfair median ≈ 0.6.

3. **Indirect-Effect Scenario**

- **Trend**: Both models show overlapping distributions, but Unfair exhibits higher variability (up to 0.8 ATE).

- **Key Data**: FairPFN median ≈ 0.1; Unfair median ≈ 0.4.

4. **Fair Observable Scenario**

- **Trend**: FairPFN maintains tight distributions (0.0–0.6 ATE), while Unfair spreads wider (0.0–0.8).

- **Key Data**: FairPFN median ≈ 0.3; Unfair median ≈ 0.5.

5. **Fair Unobservable Scenario**

- **Trend**: FairPFN distributions narrow further (0.0–0.5 ATE), contrasting with Unfair’s broader spread (0.0–0.7).

- **Key Data**: FairPFN median ≈ 0.2; Unfair median ≈ 0.4.

6. **Fair Additive Noise Scenario**

- **Trend**: As noise increases along the x-axis, the ATE declines for both models, but FairPFN spans a narrower range (0.0–0.6 ATE vs. 0.0–0.8 for Unfair).

- **Key Data**: At 1.75 std. noise, FairPFN median ≈ 0.1; Unfair ≈ 0.3.
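The approximate medians listed above can be collected into a small table to compare the two models scenario by scenario. The sketch below uses only the rounded values quoted in this section (visual estimates, not raw data). The helper names `median_gaps` and `median_range` are hypothetical.

```python
# Approximate medians as quoted in the Detailed Analysis (visual estimates).
MEDIANS = {
    "Biased": {"FairPFN": 0.3, "Unfair": 0.5},
    "Direct-Effect": {"FairPFN": 0.2, "Unfair": 0.6},
    "Indirect-Effect": {"FairPFN": 0.1, "Unfair": 0.4},
    "Fair Observable": {"FairPFN": 0.3, "Unfair": 0.5},
    "Fair Unobservable": {"FairPFN": 0.2, "Unfair": 0.4},
    # Quoted only at the highest noise level (1.75 std.).
    "Fair Additive Noise": {"FairPFN": 0.1, "Unfair": 0.3},
}


def median_gaps(medians):
    """Return the Unfair-minus-FairPFN median gap per scenario, rounded to 0.1."""
    return {
        scenario: round(values["Unfair"] - values["FairPFN"], 1)
        for scenario, values in medians.items()
    }


def median_range(medians, model):
    """Return the (min, max) of the quoted medians for one model."""
    values = [entry[model] for entry in medians.values()]
    return min(values), max(values)


if __name__ == "__main__":
    for scenario, gap in median_gaps(MEDIANS).items():
        print(f"{scenario:<20} gap = {gap:.1f}")
    print("FairPFN median range:", median_range(MEDIANS, "FairPFN"))
    print("Unfair median range:", median_range(MEDIANS, "Unfair"))
```

Because the inputs are rounded readings from a plot, the gaps are indicative only. With these values, the Unfair median sits above the FairPFN median in every scenario.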

### Key Observations

- **FairPFN Consistency**: Narrower distributions across all scenarios suggest more stable causal effect estimates.

- **Unfair Variability**: Wider distributions suggest higher sensitivity to noise and bias.

- **Noise Impact**: In "Fair Additive Noise," the ATE of both models declines as noise increases, but FairPFN retains lower ATE variability.

- **Outliers**: No extreme outliers observed; all distributions remain within -0.4 to 1.2 ATE bounds.
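The "narrower distributions" observation can be checked against the approximate ranges quoted in the Detailed Analysis. The sketch below covers only the three scenarios for which a range is quoted for both models. The bounds are visual estimates, and the helper names `width` and `width_ratios` are hypothetical.

```python
# Approximate (low, high) ATE ranges as quoted in the Detailed Analysis.
RANGES = {
    "Fair Observable": {"FairPFN": (0.0, 0.6), "Unfair": (0.0, 0.8)},
    "Fair Unobservable": {"FairPFN": (0.0, 0.5), "Unfair": (0.0, 0.7)},
    "Fair Additive Noise": {"FairPFN": (0.0, 0.6), "Unfair": (0.0, 0.8)},
}


def width(bounds):
    """Width of a (low, high) range, rounded to one decimal place."""
    low, high = bounds
    return round(high - low, 1)


def width_ratios(ranges):
    """FairPFN width divided by Unfair width per scenario (below 1 means narrower)."""
    return {
        scenario: width(models["FairPFN"]) / width(models["Unfair"])
        for scenario, models in ranges.items()
    }


if __name__ == "__main__":
    for scenario, ratio in width_ratios(RANGES).items():
        print(f"{scenario:<20} FairPFN/Unfair width ratio = {ratio:.2f}")
```

A ratio below 1 in each listed scenario is consistent with the observation, though range width is a coarse proxy for spread compared with a statistic such as the interquartile range.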

### Interpretation

Across the six scenarios, FairPFN produces narrower ATE distributions and lower median ATE values than the Unfair model (approximately 0.1–0.3 vs. 0.3–0.6). In the "Fair Additive Noise" scenario, FairPFN keeps a lower ATE spread even as noise increases. Taken together, the plots suggest that FairPFN's predicted causal effects are less sensitive to noise and bias than those of the Unfair model, whose distributions are wider in every scenario.