## Diagram: Multi-Policy Reinforcement Learning Loop

### Overview

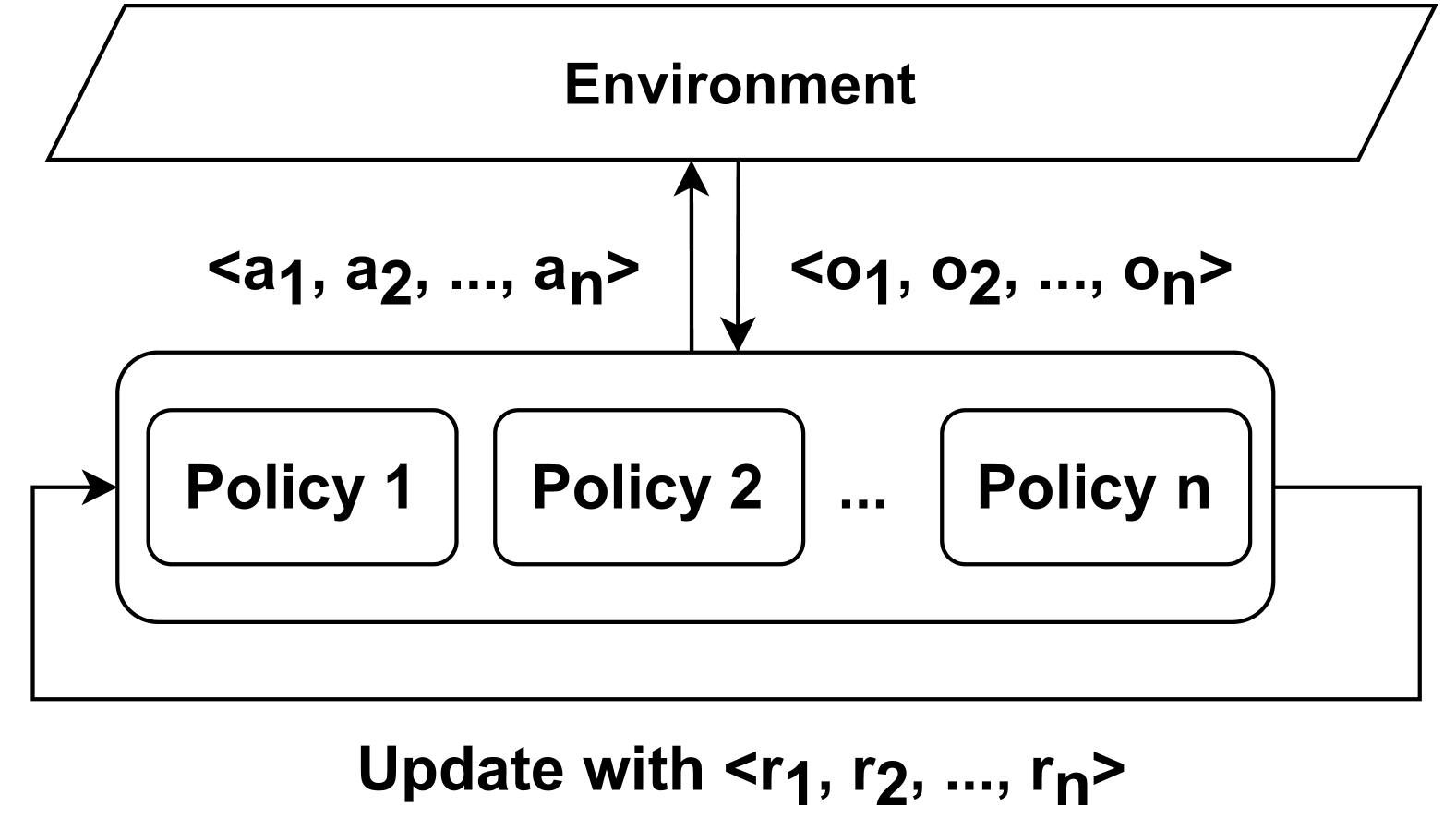

The diagram shows a reinforcement learning setup in which multiple policies interact with a shared environment. It depicts a cycle of actions, observations, and reward-based updates.

### Components/Axes

The diagram consists of three primary components arranged vertically:

1. **Top Component (Environment):** A parallelogram labeled **"Environment"**.

2. **Middle Component (Policy Set):** A large, rounded rectangle containing a series of smaller, rounded rectangles labeled **"Policy 1"**, **"Policy 2"**, **"..."**, and **"Policy n"**. This represents a set of *n* distinct policies.

3. **Bottom Text (Update Mechanism):** Text at the bottom of the diagram reads **"Update with <r₁, r₂, ..., rₙ>"**.

**Flow and Connections (Arrows):**

* A vertical, double-headed arrow connects the **Environment** and the **Policy Set**.

* To the left of this central arrow, text indicates the flow from policies to environment: **"<a₁, a₂, ..., aₙ>"**. This represents a vector of actions from the *n* policies.

* To the right of the central arrow, text indicates the flow from environment to policies: **"<o₁, o₂, ..., oₙ>"**. This represents a vector of observations sent to the *n* policies.

* A feedback loop arrow originates from the right side of the **Policy Set** rectangle, curves downward, and points back into the left side of the same rectangle. This loop is associated with the bottom text **"Update with <r₁, r₂, ..., rₙ>"**, indicating a vector of rewards used for updating the policies.

### Detailed Analysis

The diagram explicitly defines the following data flows:

* **Action Vector (`<a₁, a₂, ..., aₙ>`):** A set of *n* actions, where `aᵢ` is the action generated by Policy *i*. This vector is sent to the Environment.

* **Observation Vector (`<o₁, o₂, ..., oₙ>`):** A set of *n* observations, where `oᵢ` is the observation provided by the Environment to Policy *i*. This vector is received from the Environment.

* **Reward Vector (`<r₁, r₂, ..., rₙ>`):** A set of *n* rewards, where `rᵢ` is the reward signal associated with Policy *i*. This vector is used in the update step.
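
The one-to-one pairing of `aᵢ`, `oᵢ`, and `rᵢ` with Policy *i* can be sketched as a small container type. This is a minimal illustration only: the names `JointStep` and `per_policy` are hypothetical, and the element types (integer actions, float observations and rewards) are assumptions, since the diagram does not specify them.

```python
from typing import List, NamedTuple, Tuple


class JointStep(NamedTuple):
    """One round of the loop; index i in every field belongs to the same policy."""
    actions: Tuple[int, ...]         # <a_1, ..., a_n>, sent to the environment
    observations: Tuple[float, ...]  # <o_1, ..., o_n>, received from the environment
    rewards: Tuple[float, ...]       # <r_1, ..., r_n>, used in the update step


def per_policy(step: JointStep) -> List[Tuple[int, float, float]]:
    """Split a joint step into one (a_i, o_i, r_i) triple per policy."""
    n = len(step.actions)
    if len(step.observations) != n or len(step.rewards) != n:
        raise ValueError("actions, observations, and rewards must all have length n")
    return list(zip(step.actions, step.observations, step.rewards))


# Example with n = 3 policies (values are made up for illustration).
step = JointStep(
    actions=(0, 1, 1),
    observations=(0.5, -0.2, 0.0),
    rewards=(1.0, 0.0, -1.0),
)
triples = per_policy(step)
```

Here `triples[i]` holds everything Policy *i+1* sent or received in that round, which is the correspondence the three vectors imply.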

The spatial layout is hierarchical and cyclical:

* The **Environment** is positioned at the top, acting as the external system.

* The **Policy Set** is centrally located, acting as the decision-making agent(s).

* The **Update** mechanism is shown as a feedback loop at the bottom, closing the cycle.

### Key Observations

1. **Multi-Agent/Multi-Policy Structure:** The diagram shows *n* policies (`Policy 1` to `Policy n`) rather than one. These could represent multiple agents, an ensemble of policies, or a single agent with multiple policy components.

2. **Vectorized Communication:** All interactions (actions, observations, rewards) are explicitly shown as vectors of length *n*, implying a one-to-one correspondence between policies and their respective signals.

3. **Shared Environment:** All policies interact with one **Environment** that they share.

4. **Closed Learning Loop:** The diagram clearly shows a complete reinforcement learning cycle: Policies -> Actions -> Environment -> Observations -> Policies, with a separate Reward -> Update loop modifying the policies.
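
The cycle in item 4 can be sketched as a short loop. Everything below is a toy under stated assumptions: `RandomPolicy`, `SharedEnvironment`, the majority-vote reward rule, and the `act`/`update`/`reset`/`step` method names are all hypothetical and are not taken from the diagram. The sketch also assumes the environment returns the rewards, which the diagram does not draw explicitly.

```python
import random


class RandomPolicy:
    """Placeholder policy: acts at random and only records the rewards it is given."""

    def __init__(self, seed):
        self.rng = random.Random(seed)
        self.rewards_seen = []

    def act(self, observation):
        # The observation is ignored in this stub; a real policy would condition on it.
        return self.rng.choice([0, 1])

    def update(self, reward):
        # A real learner would adjust its parameters here.
        self.rewards_seen.append(reward)


class SharedEnvironment:
    """Toy shared environment: policy i is rewarded when a_i equals the majority action."""

    def __init__(self, n):
        self.n = n

    def reset(self):
        return [0] * self.n  # initial <o_1, ..., o_n>

    def step(self, actions):
        majority = 1 if sum(actions) * 2 > self.n else 0
        observations = [majority] * self.n  # every policy observes the majority action
        rewards = [1.0 if a == majority else 0.0 for a in actions]
        return observations, rewards


def run(n=3, steps=5):
    env = SharedEnvironment(n)
    policies = [RandomPolicy(seed=i) for i in range(n)]
    observations = env.reset()
    for _ in range(steps):
        # Policies -> Actions: build <a_1, ..., a_n>
        actions = [p.act(o) for p, o in zip(policies, observations)]
        # Environment -> Observations (and, by assumption, rewards)
        observations, rewards = env.step(actions)
        # Reward -> Update: policy i is updated with r_i only
        for p, r in zip(policies, rewards):
            p.update(r)
    return policies


policies = run(n=3, steps=5)
```

Because the reward depends on the majority action, each policy's reward depends on what the other policies did, which is one way actions can jointly influence a shared environment.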

### Interpretation

This diagram models a **multi-policy reinforcement learning system built around a single shared environment**. It visually answers the question: "How do multiple policies learn from a shared environment?"

* **What it demonstrates:** The core loop of multi-policy decision-making and learning. Each policy `i` generates an action `aᵢ`, and together these actions influence the environment. The environment responds with observations `oᵢ`. Each policy is then updated with its own reward `rᵢ`, with the aim of improving its future action selection. The diagram attaches the rewards to the update loop rather than to an arrow from the environment; in a standard RL setup they would come from the environment.

* **Relationships:** The Environment supplies the observations and, by the usual RL convention, the rewards. The Policy Set is the learning component. The update loop stands for the learning algorithm (e.g., policy gradient, Q-learning) applied to each policy based on its own reward signal.

* **Notable Implications:**

    * **Credit Assignment:** Each policy is updated with its own reward `rᵢ`, so the update step does not have to work out which policy's action earned a single shared reward. That attribution problem is a key challenge in multi-agent learning when only a team-level reward is available.

    * **Scalability:** The use of `n` and ellipses (`...`) indicates the framework is meant to apply to any number of policies.

    * **Potential Scenarios:** This could model a team of cooperative agents, a population of competing agents, or a single agent using multiple sub-policies (like options in hierarchical RL). The diagram is abstract and does not specify the relationship (cooperative, competitive, etc.) between the policies.
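
The "update each policy with its own reward" step can be sketched with a deliberately simple learner. The diagram does not name an algorithm, so the rule below is an assumption chosen for brevity: a stateless incremental value estimate (bandit-style), not policy gradient or full Q-learning. `BanditPolicy`, `update_all`, `step_size`, and `epsilon` are hypothetical names, and observations are ignored to keep the example short.

```python
import random


class BanditPolicy:
    """Hypothetical stateless learner: keeps one value estimate per action."""

    def __init__(self, n_actions, step_size=0.1, epsilon=0.1, seed=0):
        self.values = [0.0] * n_actions
        self.step_size = step_size
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.last_action = None

    def act(self):
        if self.rng.random() < self.epsilon:
            # Explore: pick any action uniformly at random.
            self.last_action = self.rng.randrange(len(self.values))
        else:
            # Exploit: pick the action with the highest estimate (first one on ties).
            self.last_action = max(range(len(self.values)), key=self.values.__getitem__)
        return self.last_action

    def update(self, reward):
        # Move the estimate for the last action a fraction of the way toward the reward.
        a = self.last_action
        self.values[a] += self.step_size * (reward - self.values[a])


def update_all(policies, rewards):
    """Apply r_i to policy i only; no policy sees another policy's reward."""
    for policy, reward in zip(policies, rewards):
        policy.update(reward)


# Two policies, greedy only (epsilon=0.0), so both pick action 0 on the first call.
policies = [BanditPolicy(n_actions=2, epsilon=0.0, seed=i) for i in range(2)]
actions = [p.act() for p in policies]
update_all(policies, rewards=[1.0, 0.0])
```

After this single update, only the first policy's estimate for action 0 has moved, because the reward vector is applied element by element.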

**Language Note:** All text in the image is in English. Mathematical notation uses standard subscripts (₁, ₂, ₙ).