## Grouped Bar Chart: Performance Comparison by Category

### Overview

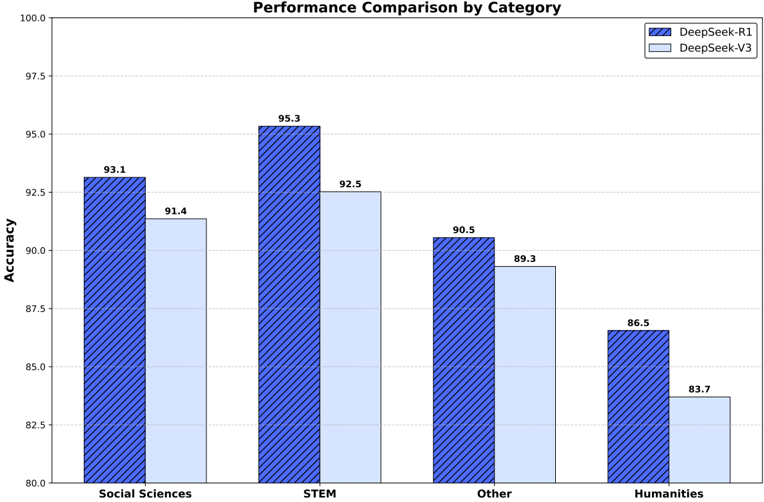

The image is a grouped bar chart titled "Performance Comparison by Category." It displays the accuracy percentages of two models, DeepSeek-R1 and DeepSeek-V3, across four distinct subject categories. The chart is designed to visually compare the performance of the two models side-by-side within each category.

### Components/Axes

* **Chart Title:** "Performance Comparison by Category" (centered at the top).

* **Y-Axis:**
    * **Label:** "Accuracy" (rotated vertically on the left side).
    * **Scale:** Linear scale ranging from 80.0 to 100.0, with major tick marks every 2.5 units (80.0, 82.5, 85.0, 87.5, 90.0, 92.5, 95.0, 97.5, 100.0).

* **X-Axis:**
    * **Categories (from left to right):** "Social Sciences", "STEM", "Other", "Humanities".

* **Legend:** Located in the top-right corner of the chart area.
    * **DeepSeek-R1:** Represented by a dark blue bar with a diagonal stripe pattern.
    * **DeepSeek-V3:** Represented by a solid, light blue bar.

* **Data Labels:** Each bar has its exact accuracy value printed directly above it.

### Detailed Analysis

The chart presents the following specific accuracy values for each model in each category:

| Category        | DeepSeek-R1 (%) | DeepSeek-V3 (%) |
|-----------------|-----------------|-----------------|
| Social Sciences | 93.1            | 91.4            |
| STEM            | 95.3            | 92.5            |
| Other           | 90.5            | 89.3            |
| Humanities      | 86.5            | 83.7            |

**Trend Verification:** For every category, the DeepSeek-R1 bar (dark blue, striped) is taller than the corresponding DeepSeek-V3 bar (light blue, solid). This indicates a consistent performance advantage for the R1 model across all measured domains. The highest accuracy for both models is in the STEM category, and the lowest is in the Humanities category.
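The trend can also be checked directly from the data labels rather than by eye. The following is a minimal Python sketch, assuming the values were transcribed correctly from the chart; the `scores` dictionary and the variable names are illustrative and not part of the source.

```python
# Accuracy (%) per category, transcribed from the chart's data labels.
scores = {
    "Social Sciences": {"DeepSeek-R1": 93.1, "DeepSeek-V3": 91.4},
    "STEM":            {"DeepSeek-R1": 95.3, "DeepSeek-V3": 92.5},
    "Other":           {"DeepSeek-R1": 90.5, "DeepSeek-V3": 89.3},
    "Humanities":      {"DeepSeek-R1": 86.5, "DeepSeek-V3": 83.7},
}
models = ["DeepSeek-R1", "DeepSeek-V3"]

# True only if the R1 bar is taller than the V3 bar in every category.
r1_leads_everywhere = all(
    row["DeepSeek-R1"] > row["DeepSeek-V3"] for row in scores.values()
)

# Best and worst category for each model.
best = {m: max(scores, key=lambda c: scores[c][m]) for m in models}
worst = {m: min(scores, key=lambda c: scores[c][m]) for m in models}

print(r1_leads_everywhere)  # True
print(best)                 # STEM for both models
print(worst)                # Humanities for both models
```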

### Key Observations

* **Consistent Performance Gap:** DeepSeek-R1 outperforms DeepSeek-V3 in all four categories. The gap is 1.2 percentage points in "Other", 1.7 in "Social Sciences", and 2.8 in both "STEM" and "Humanities", so the largest gap is shared by two categories.

* **Category Difficulty:** Both models achieve their highest scores in "STEM" and their lowest scores in "Humanities," suggesting the STEM tasks in this evaluation were relatively easier for these models, or the Humanities tasks were more challenging.

* **Relative Ranking:** Both models rank the categories in the same order by accuracy, from highest (easiest) to lowest (hardest): STEM, Social Sciences, Other, Humanities.

* **Magnitude of Scores:** All accuracy scores are above 80%, indicating a high baseline performance for both models across these diverse categories.
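The gap and ranking observations above can be reproduced with a few lines of arithmetic. The sketch below assumes the accuracy values read from the chart; names such as `gaps` and `ranking_r1` are hypothetical. Differences are rounded to one decimal place to suppress floating-point noise.

```python
# Accuracy (%) per category as (DeepSeek-R1, DeepSeek-V3), transcribed from the chart.
scores = {
    "Social Sciences": (93.1, 91.4),
    "STEM": (95.3, 92.5),
    "Other": (90.5, 89.3),
    "Humanities": (86.5, 83.7),
}

# R1 minus V3 in percentage points, rounded to one decimal place.
gaps = {cat: round(r1 - v3, 1) for cat, (r1, v3) in scores.items()}

# Categories with the smallest and largest gap (ties are kept).
smallest_in = [c for c, g in gaps.items() if g == min(gaps.values())]
largest_in = [c for c, g in gaps.items() if g == max(gaps.values())]

# Category order from highest to lowest accuracy, per model.
ranking_r1 = sorted(scores, key=lambda c: scores[c][0], reverse=True)
ranking_v3 = sorted(scores, key=lambda c: scores[c][1], reverse=True)

print(gaps)
print(smallest_in, largest_in)
print(ranking_r1 == ranking_v3)
```

Run against these values, the largest gap (2.8 points) appears in both STEM and Humanities, the smallest (1.2 points) in Other, and the two rankings are identical.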

### Interpretation

This chart provides a clear, quantitative comparison showing that DeepSeek-R1 is more accurate than DeepSeek-V3 in all four categories: Social Sciences, STEM, Other, and Humanities. The consistent lead is compatible with architectural or training improvements in R1 that generalize well, although the chart itself does not show the cause.

The data implies that while both models are highly capable, the choice between them could matter for applications requiring maximum accuracy, particularly in STEM and Humanities, where the performance gap is largest (2.8 percentage points in each). The lower scores in Humanities for both models might indicate that this domain contains more nuanced, subjective, or complex reasoning tasks that are currently more challenging for these AI systems. The "Other" category serves as a catch-all, and its scores fall between those of Social Sciences and Humanities for both models. Within this specific evaluation framework, DeepSeek-R1 is the higher-performing model.