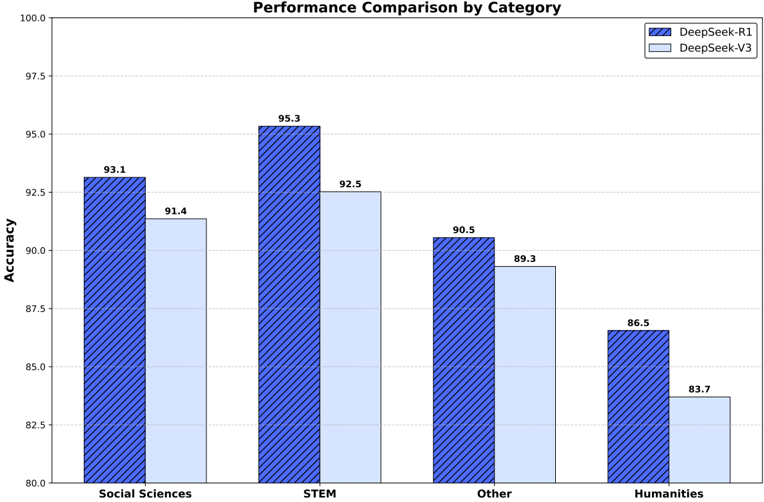

## Bar Chart: Performance Comparison by Category

### Overview

This bar chart compares the performance (accuracy) of two models, DeepSeek-R1 and DeepSeek-V3, across four categories: Social Sciences, STEM, Other, and Humanities. The accuracy is measured on the y-axis, ranging from 80.0 to 100.0, while the categories are displayed on the x-axis. Each category has two bars representing the accuracy of each model.

### Components/Axes

* **Title:** Performance Comparison by Category

* **X-axis:** Category (Social Sciences, STEM, Other, Humanities)

* **Y-axis:** Accuracy (ranging from 80.0 to 100.0, with increments of 2.5)

* **Legend:**

  * DeepSeek-R1 (represented by a solid, darker blue color)

  * DeepSeek-V3 (represented by a lighter, patterned blue color)

### Detailed Analysis

The chart consists of four sets of two bars, one for each category.

* **Social Sciences:**

  * DeepSeek-R1: Approximately 93.1 accuracy.

  * DeepSeek-V3: Approximately 91.4 accuracy.

* **STEM:**

  * DeepSeek-R1: Approximately 95.3 accuracy.

  * DeepSeek-V3: Approximately 92.5 accuracy.

* **Other:**

  * DeepSeek-R1: Approximately 90.5 accuracy.

  * DeepSeek-V3: Approximately 89.3 accuracy.

* **Humanities:**

  * DeepSeek-R1: Approximately 86.5 accuracy.

  * DeepSeek-V3: Approximately 83.7 accuracy.

DeepSeek-R1 outperforms DeepSeek-V3 in all four categories. The gap is largest in STEM (approximately 95.3 vs. 92.5) and Humanities (approximately 86.5 vs. 83.7), at about 2.8 points each. It is smallest in the "Other" category (approximately 90.5 vs. 89.3, about 1.2 points), with Social Sciences in between at about 1.7 points.
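The per-category gaps can be recomputed directly from the approximate values listed above. The following is a minimal sketch, assuming the values read off the chart are accurate to one decimal place; the `accuracy` dictionary and the `accuracy_gaps` helper are illustrative names, not part of any source material.

```python
# Approximate accuracy values as read from the chart (not exact source data).
accuracy = {
    "Social Sciences": {"DeepSeek-R1": 93.1, "DeepSeek-V3": 91.4},
    "STEM": {"DeepSeek-R1": 95.3, "DeepSeek-V3": 92.5},
    "Other": {"DeepSeek-R1": 90.5, "DeepSeek-V3": 89.3},
    "Humanities": {"DeepSeek-R1": 86.5, "DeepSeek-V3": 83.7},
}


def accuracy_gaps(scores):
    """Return DeepSeek-R1 minus DeepSeek-V3 per category, rounded to one decimal."""
    return {
        category: round(models["DeepSeek-R1"] - models["DeepSeek-V3"], 1)
        for category, models in scores.items()
    }


gaps = accuracy_gaps(accuracy)

# Print categories from widest to narrowest gap.
for category, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{category}: {gap:+.1f}")
```

Run on these values, the gaps for STEM and Humanities round to the same figure (2.8 points), so any ordering between those two depends on precision the chart does not show.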

### Key Observations

* DeepSeek-R1 consistently achieves higher accuracy than DeepSeek-V3 across all categories.

* The performance gap between the two models is widest in the STEM and Humanities categories (about 2.8 points each) and narrowest in the Other category (about 1.2 points).

* The Humanities category shows the lowest overall accuracy for both models.

* All accuracy values fall between approximately 83.7 and 95.3, so both models score above 80 in every category.

### Interpretation

The data suggest that DeepSeek-R1 is more accurate than DeepSeek-V3 in every category shown. The gap is about as large in Humanities as in STEM (roughly 2.8 points each), so the chart does not single out STEM as the area where DeepSeek-R1 has a particular advantage. Both models score lowest in Humanities, which could reflect the inherent complexity of the subject matter or limitations in the training data. The chart alone does not explain why DeepSeek-R1 leads; differences in architecture or training data are possible causes, and further investigation into both would be needed to account for the gaps. Within those limits, the chart gives a clear, quantitative comparison of the two models that can inform the choice between them for a specific application.