
## Diagram: Problem Solving Process with Reward System

### Overview

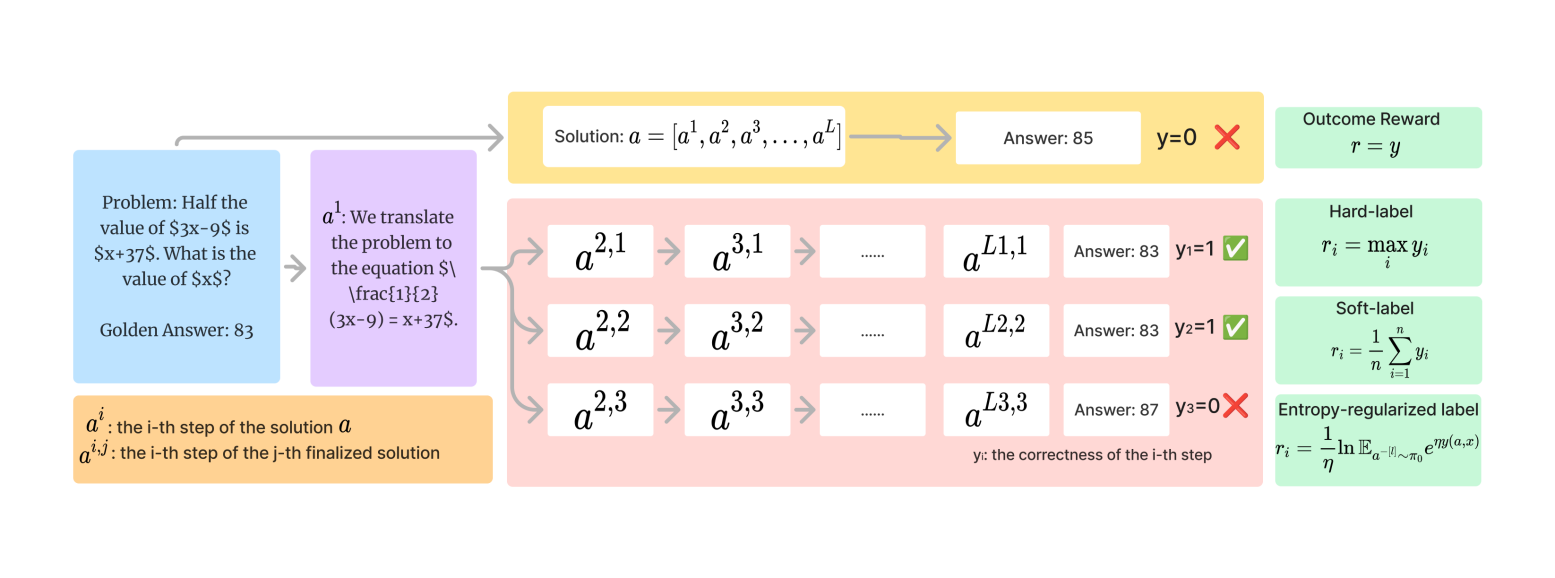

The diagram illustrates a problem-solving process, likely within a reinforcement learning or similar framework. It shows step-by-step solution generation, evaluation of the candidates, and reward assignment. The process starts with a problem statement, proceeds through solution steps (a<sup>2,1</sup>, a<sup>2,2</sup>, a<sup>2,3</sup>, etc.), marks each resulting answer as correct or incorrect, and ends with the rewards derived from those marks. The diagram is segmented into a "Problem" section on the left, a "Solution" section in the center, and a "Reward" section on the right.

### Components

The diagram consists of the following components:

* **Problem Statement:** "Problem: Half the value of $3x-9$ is $x+37$. What is the value of $x$?" with "Golden Answer: 83" below it.

* **Solution Steps:** A series of boxes representing iterative solution steps, labeled a<sup>2,1</sup>, a<sup>2,2</sup>, a<sup>2,3</sup>, a<sup>3,1</sup>, a<sup>3,2</sup>, a<sup>3,3</sup>, a<sup>L1,1</sup>, a<sup>L2,2</sup>, a<sup>L3,3</sup>.

* **Equation Translation:** A box explaining the initial translation of the problem into an equation: "a<sup>1</sup>: We translate the problem to the equation $\frac{3x-9}{2} = x+37$."

* **Answer Boxes:** Boxes associated with each solution step, displaying the answer and a binary correctness indicator (y=0 or y=1).

* **Solution Definition:** "Solution: a = [a<sup>1</sup>, a<sup>2</sup>, a<sup>3</sup>, ..., a<sup>L</sup>]"

* **Reward System:** A section detailing different reward types: "Outcome Reward", "Hard-label", "Soft-label", and "Entropy-regularized label" with their respective formulas.

* **Step Correctness Definition:** "y: the correctness of the i-th step"

* **Solution Step Definition:** "a<sub>i</sub>: the i-th step of the solution a" and "a<sub>i</sub><sup>j</sup>: the i-th step of the j-th finalized solution"
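The equation from step a<sup>1</sup> can be solved by hand to confirm the golden answer. The derivation below reads the problem as "half of $3x-9$ equals $x+37$", an assumption that is consistent with the stated golden answer of 83; it is a worked check, not a transcription of the steps shown in the diagram.

$$
\begin{aligned}
\frac{3x-9}{2} &= x+37 \\
3x-9 &= 2x+74 \\
x &= 83
\end{aligned}
$$

Substituting back gives $\frac{3\cdot 83-9}{2} = \frac{240}{2} = 120 = 83+37$, so both sides agree.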

### Detailed Analysis

The diagram illustrates a process with three main stages:

1. **Problem Formulation:** The problem is stated as "Half the value of $3x-9$ is $x+37$. What is the value of $x$?" The golden answer is given as 83.

2. **Iterative Solution:** The solution is generated in steps. Each labeled box reports a final answer and its correctness indicator y:

* **Step a<sup>2,1</sup>:** Answer: 85, y=0.

* **Step a<sup>2,2</sup>:** Answer: 83, y=1.

* **Step a<sup>2,3</sup>:** Answer: 87, y=0.

* **Step a<sup>3,1</sup>:** Answer: 83, y=1.

* **Step a<sup>3,2</sup>:** Answer: 83, y=1.

* **Step a<sup>3,3</sup>:** Answer: 87, y=0.

* **Step a<sup>L1,1</sup>:** Answer: 83, y=1.

* **Step a<sup>L2,2</sup>:** Answer: 83, y=1.

* **Step a<sup>L3,3</sup>:** Answer: 87, y=0.

3. **Reward Assignment:** The diagram defines four reward types:

* **Outcome Reward (r<sub>t</sub> = y):** The reward is equal to the correctness indicator (y).

* **Hard-label (r<sub>i</sub> = max y<sub>i</sub>):** The reward is the maximum of the correctness indicators, so it equals 1 as soon as any one of them is 1.

* **Soft-label (r<sub>i</sub> = 1/n * Σ y<sub>i</sub>):** The reward is the mean of the n correctness indicators, i.e., the fraction of them that equal 1.

* **Entropy-regularized label (r<sub>i</sub> = -ln E<sub>q<sub>θ</sub></sub>[π<sub>i</sub>] * exp(α<sub>i</sub>)):** A more complex reward function involving entropy regularization.
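The hard and soft labels can be illustrated with the answers listed above. The following is a minimal sketch, not the diagram's actual pipeline: it assumes that boxes sharing the same first superscript (2, 3, or L) are aggregated together, and the names `correctness`, `hard_label`, and `soft_label` are hypothetical helpers introduced only for illustration. Only the max and mean formulas are covered.

```python
GOLDEN_ANSWER = 83

# Final answers as listed in the description, grouped by the first
# superscript index (assumption: each group is aggregated into one label).
answers_by_step = {
    "2": [85, 83, 87],
    "3": [83, 83, 87],
    "L": [83, 83, 87],
}


def correctness(answer, golden=GOLDEN_ANSWER):
    """Binary indicator y: 1 if the answer matches the golden answer, else 0."""
    return 1 if answer == golden else 0


def hard_label(ys):
    """Hard label: maximum of the correctness indicators."""
    return max(ys)


def soft_label(ys):
    """Soft label: mean of the correctness indicators."""
    return sum(ys) / len(ys)


labels = {}
for step, answers in answers_by_step.items():
    ys = [correctness(a) for a in answers]
    labels[step] = {"y": ys, "hard": hard_label(ys), "soft": soft_label(ys)}

for step, info in labels.items():
    print(step, info)
```

Under this grouping every group receives a hard label of 1, while the soft label separates the first group (one correct answer out of three) from the other two (two out of three).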

### Key Observations

* The correctness indicator y is binary and takes both values across the listed boxes, so not every candidate is correct.

* Every box with the answer 83 (the golden answer) is marked y=1, and every box with 85 or 87 is marked y=0, so y tracks whether the final answer agrees with the golden answer.

* The reward system provides different ways to incentivize correct steps, ranging from simple outcome-based rewards to more sophisticated entropy-regularized rewards.

* The notation a<sup>i,j</sup> carries two indices. Read against the legend ("the i-th step of the j-th finalized solution"), 'i' most likely indexes the step and 'j' the finalized solution, rather than a stage and a step within that stage.

### Interpretation

This diagram illustrates a reinforcement learning approach to solving mathematical problems. The agent generates a solution as a sequence of steps, the resulting answers are checked for correctness, and rewards are derived from those checks. The four reward types are alternative ways of turning the binary indicator y into a training signal: the outcome reward uses y directly, the hard and soft labels aggregate several indicators by maximum and mean, and the entropy-regularized label applies a more involved formula. The entropy-regularized reward, in particular, suggests an attempt to encourage exploration and keep the agent from getting stuck in suboptimal solutions.

The "Golden Answer" supplies the supervision signal: each final answer is compared against it to set y, which in turn shapes the rewards for individual steps as well as for the overall outcome. The diagram is a conceptual representation of a learning algorithm rather than a numerical dataset. It shows the flow of information and the relationships between the components of the system.