## Diagram: Activation-Based vs. Hidden State-Based Neural Networks

### Overview

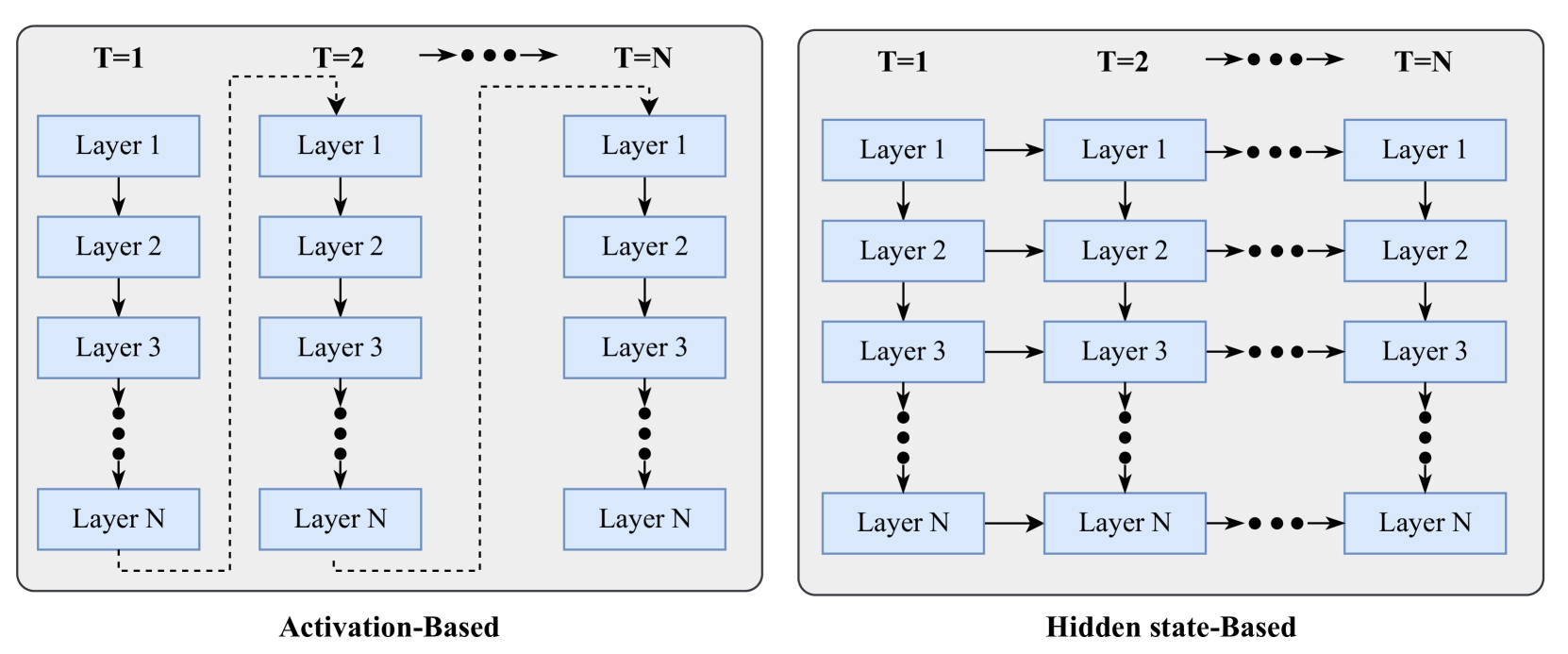

The image presents two diagrams illustrating different architectures for neural networks: "Activation-Based" and "Hidden state-Based". Both diagrams depict multiple layers (Layer 1, Layer 2, Layer 3, ..., Layer N) at different time steps (T=1, T=2, ..., T=N). The diagrams highlight the flow of information between layers and across time steps in each architecture.

### Components/Axes

* **Title (Left Diagram):** Activation-Based

* **Title (Right Diagram):** Hidden state-Based

* **Layers:** Layer 1, Layer 2, Layer 3, ..., Layer N (where N is an unspecified integer)

* **Time Steps:** T=1, T=2, ..., T=N (the diagram reuses the symbol N for the last time step; the number of time steps need not equal the number of layers)

* **Nodes:** Each layer at each time step is represented by a rectangular node.

* **Arrows:** Arrows indicate the flow of information between layers and/or time steps.

### Detailed Analysis

**Left Diagram: Activation-Based**

* **Structure:** The diagram shows a series of vertical stacks of layers, each stack representing a time step.

* **Flow within a Time Step:** Within each time step (T=1, T=2, ..., T=N), information flows from Layer 1 to Layer 2, Layer 2 to Layer 3, and so on, down to Layer N. This is indicated by downward-pointing arrows.

* **Flow across Time Steps:** Only two layers are connected across time steps. A dashed arrow connects Layer 1 at T=1 to Layer 1 at T=2, and a series of dots and arrows continues from Layer 1 at T=2 to Layer 1 at T=N. Layer N is connected in the same way: a dashed arrow runs from Layer N at T=1 to Layer N at T=2, followed by dots and arrows from T=2 to T=N. This suggests recurrent connections in which the activations of Layer 1 and Layer N at one time step influence the same layer at the next time step. Layers 2, 3, and the intermediate layers have no such cross-time connections.

* **Dots:** Vertical dots between Layer 3 and Layer N indicate that there are intermediate layers not explicitly shown.
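The connectivity described for the left diagram can be written down as an edge list. The following is a minimal sketch, not the diagram author's implementation: the function name `activation_based_edges`, the `(layer, step)` node encoding, and the reading of the "dots and arrows" as links between consecutive time steps are all assumptions made for illustration.

```python
def activation_based_edges(num_layers, num_steps):
    """Return (within_step, across_step) edge lists for the left diagram.

    Nodes are (layer, step) tuples, both 1-indexed. Hypothetical helper:
    it encodes only the arrows described in the text, not any computation.
    """
    # Downward arrows: Layer l feeds Layer l+1 inside the same time step.
    within_step = [
        ((layer, t), (layer + 1, t))
        for t in range(1, num_steps + 1)
        for layer in range(1, num_layers)
    ]
    # Dashed arrows: only the first and last layers reach the next time step.
    # A set avoids double counting when the network has a single layer.
    recurrent_layers = sorted({1, num_layers})
    across_step = [
        ((layer, t), (layer, t + 1))
        for layer in recurrent_layers
        for t in range(1, num_steps)
    ]
    return within_step, across_step


within, across = activation_based_edges(num_layers=4, num_steps=3)
print(len(within), len(across))  # prints "9 4"
```

With four layers and three time steps, the sketch yields nine within-step edges and four cross-step edges, all of the latter on Layer 1 or Layer 4.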

**Right Diagram: Hidden state-Based**

* **Structure:** Similar to the Activation-Based diagram, this diagram shows a series of vertical stacks of layers, each stack representing a time step.

* **Flow within a Time Step:** Within each time step (T=1, T=2, ..., T=N), information flows from Layer 1 to Layer 2, Layer 2 to Layer 3, and so on, down to Layer N. This is indicated by downward-pointing arrows.

* **Flow across Time Steps:** In addition to the vertical flow, there are horizontal arrows connecting each layer at time step T=1 to the corresponding layer at time step T=2, and a series of dots and arrows connecting each layer at time step T=2 to the corresponding layer at time step T=N. This indicates that each layer's hidden state is passed to the same layer at the next time step.

* **Dots:** Vertical dots between Layer 3 and Layer N indicate that there are intermediate layers not explicitly shown.
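To make the right diagram's information flow concrete, here is a toy unrolled forward pass in which every layer keeps its own state between time steps. It is a sketch under stated assumptions: the scalar states, the `tanh` update, and the weights `w_in` and `w_rec` are illustrative choices that do not appear in the diagram, which leaves the per-layer computation unspecified.

```python
import math


def hidden_state_forward(inputs, num_layers, w_in=0.5, w_rec=0.5):
    """Unroll a toy stacked recurrent network with one scalar state per layer.

    Hypothetical update rule (assumption, not from the diagram):
        h[l] = tanh(w_in * input_from_previous_layer + w_rec * h[l]_previous_step)
    """
    hidden = [0.0] * num_layers  # hidden[l] holds layer l+1's state from the previous step
    outputs = []
    for x in inputs:  # one iteration per time step
        layer_input = x
        for l in range(num_layers):
            # Vertical arrow: layer_input comes from the previous layer at this step.
            # Horizontal arrow: hidden[l] comes from the same layer at the previous step.
            hidden[l] = math.tanh(w_in * layer_input + w_rec * hidden[l])
            layer_input = hidden[l]
        outputs.append(layer_input)  # output of the last layer at this step
    return outputs, hidden


outputs, final_hidden = hidden_state_forward([1.0, 0.0, 0.0], num_layers=3)
print(outputs)
```

Because each layer reads its own previous state, the last layer's output stays nonzero at the second and third steps even though the input there is zero. Setting `w_rec=0.0` removes the horizontal arrows, and the output at those steps becomes exactly zero.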

### Key Observations

* Both diagrams illustrate neural networks with multiple layers and time steps.

* The Activation-Based network has recurrent connections only at Layer 1 and Layer N, while the Hidden state-Based network has recurrent connections at every layer.

* The diagrams abstract away the specific computations performed within each layer, focusing instead on the flow of information.
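The difference in recurrent connectivity noted above can be quantified by counting cross-time edges. This is a small sketch with a hypothetical helper, `recurrent_edge_counts`, and it assumes one edge per recurrent layer per pair of consecutive time steps, which is an interpretation of the diagrams rather than something they state.

```python
def recurrent_edge_counts(num_layers, num_steps):
    """Count cross-time edges for both diagrams (hypothetical helper).

    Returns (activation_based, hidden_state_based).
    """
    transitions = num_steps - 1  # pairs of consecutive time steps
    # Activation-Based: only Layer 1 and Layer N are linked across time.
    activation_based = len({1, num_layers}) * transitions
    # Hidden state-Based: every layer is linked to itself across time.
    hidden_state_based = num_layers * transitions
    return activation_based, hidden_state_based


print(recurrent_edge_counts(num_layers=6, num_steps=4))  # prints "(6, 18)"
```

Under this assumption the Activation-Based count stays constant as layers are added, while the Hidden state-Based count grows linearly with the number of layers.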

### Interpretation

The diagrams illustrate two different approaches to handling temporal dependencies in neural networks. The Activation-Based network carries information across time steps only through Layer 1 and Layer N, so the intermediate layers see past inputs only indirectly, through what Layer 1 passes down within the current time step. The Hidden state-Based network, by contrast, passes the hidden state of every layer to the same layer at the next time step, so each layer maintains its own memory of past inputs. The choice between these architectures depends on the specific task and the nature of the temporal dependencies in the data. The Hidden state-Based pattern corresponds to stacked recurrent neural networks (RNNs) such as multi-layer LSTMs and GRUs, in which each layer carries its own state from one time step to the next.