
## Diagram: Activation-Based vs. Hidden State-Based Layer Structures

### Overview

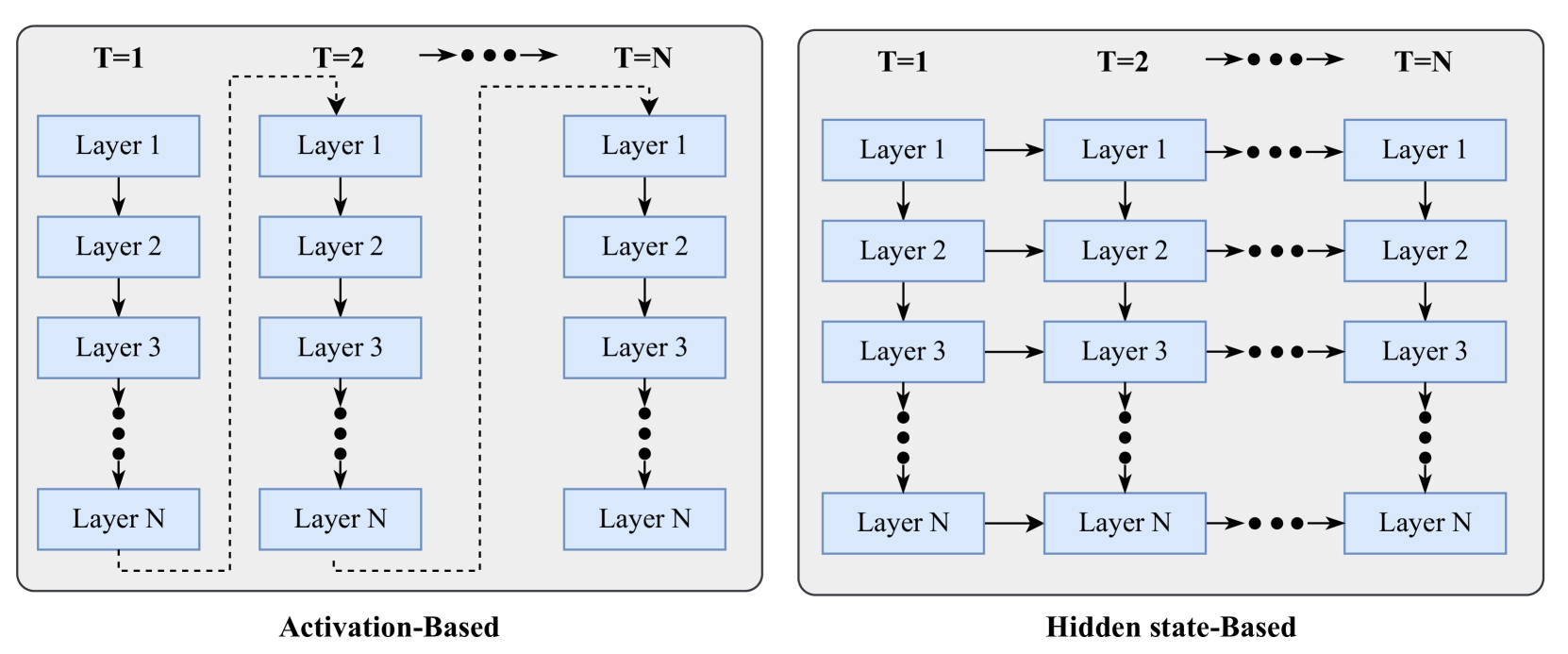

The diagram compares two layer structures for a neural network or similar sequential processing system: "Activation-Based" and "Hidden State-Based". Both structures consist of N layers, labeled Layer 1 through Layer N and arranged vertically. The key difference lies in how information flows between consecutive time steps (T=1, T=2, ..., T=N).

### Components/Axes

The diagram is divided into two main sections, positioned side-by-side. Each section represents one of the layer structures.

* **Time Steps:** Each section shows three time steps labeled "T=1", "T=2", and "T=N" positioned at the top of each column.

* **Layers:** Each section contains N layers, labeled "Layer 1" through "Layer N", arranged vertically.

* **Arrows:** Arrows indicate the direction of information flow between layers and time steps.

* **Labels:** Each section carries a label at the bottom: "Activation-Based" on the left and "Hidden state-Based" on the right.

### Detailed Analysis

**Activation-Based (Left Side):**

* **T=1:** Layer 1 receives no input. Layer 2 receives input from Layer 1, Layer 3 from Layer 2, and so on, down to Layer N receiving input from Layer (N-1).

* **T=2:** Layer 1 receives input from Layer 1 at T=1. Layer 2 receives input from Layer 2 at T=1, Layer 3 from Layer 3 at T=1, and so on, down to Layer N receiving input from Layer N at T=1. A dashed box encompasses the layers at T=1 and T=2, indicating a recurrent connection within these two time steps.

* **T=N:** Layer 1 receives input from Layer 1 at T=(N-1). Layer 2 receives input from Layer 2 at T=(N-1), Layer 3 from Layer 3 at T=(N-1), and so on, down to Layer N receiving input from Layer N at T=(N-1).

* **Information Flow:** Between time steps, the arrows connect each layer *only* to the corresponding layer in the *previous* time step. The only connections between different layers are the within-step links listed for T=1, where Layer k feeds Layer k+1; no such links are described for later time steps.

**Hidden State-Based (Right Side):**

* **T=1:** Layer 1 receives no input. Layer 2 receives input from Layer 1, Layer 3 from Layer 2, and so on, down to Layer N receiving input from Layer (N-1).

* **T=2:** Layer 1 receives input from Layer 1 at T=1. Layer 2 receives input from Layer 2 at T=1, Layer 3 from Layer 3 at T=1, and so on, down to Layer N receiving input from Layer N at T=1.

* **T=N:** Layer 1 receives input from Layer 1 at T=(N-1). Layer 2 receives input from Layer 2 at T=(N-1), Layer 3 from Layer 3 at T=(N-1), and so on, down to Layer N receiving input from Layer N at T=(N-1).

* **Information Flow:** The arrows indicate that at each time step, each layer receives input from the corresponding layer in the *previous* time step. However, the arrows are solid and continuous, suggesting a persistent connection or "hidden state" being carried forward through time.
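The per-time-step bullets for both structures describe the same connection pattern, so it can be enumerated once in a short sketch. The function name `build_edges` and the `(layer, time step)` tuple encoding are illustrative assumptions, not labels taken from the diagram.

```python
from typing import List, Tuple

Node = Tuple[int, int]  # (layer index, time step), both 1-based


def build_edges(num_layers: int, num_steps: int) -> List[Tuple[Node, Node]]:
    """Enumerate the connections listed in the bullets above."""
    edges: List[Tuple[Node, Node]] = []
    # At T=1, Layer k feeds Layer k+1; Layer 1 has no incoming edge.
    for layer in range(1, num_layers):
        edges.append(((layer, 1), (layer + 1, 1)))
    # At every later step, Layer k at T=t-1 feeds Layer k at T=t.
    for step in range(2, num_steps + 1):
        for layer in range(1, num_layers + 1):
            edges.append(((layer, step - 1), (layer, step)))
    return edges


edges = build_edges(num_layers=3, num_steps=3)
for src, dst in edges:
    print(f"Layer {src[0]} @ T={src[1]} -> Layer {dst[0]} @ T={dst[1]}")
```

As described, the two structures share this wiring; what differs in the figure is how the cross-time connections are drawn (dashed box versus solid, continuous arrows).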

### Key Observations

The primary difference is the nature of the connection between time steps. The "Activation-Based" model appears to have a more discrete, step-by-step flow, with a highlighted recurrent connection between T=1 and T=2. The "Hidden State-Based" model suggests a continuous flow of information, potentially representing a memory or state that is updated at each time step.

### Interpretation

This diagram illustrates two different approaches to handling sequential data. The "Activation-Based" model likely represents a system where information is processed in discrete steps, with activations from one time step influencing the next. The dashed box suggests a limited form of memory or recurrence. The "Hidden State-Based" model, on the other hand, suggests a system with a more persistent memory or state that is carried forward through time. This is common in Recurrent Neural Networks (RNNs) or similar architectures where the hidden state encapsulates information about past inputs.
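To make the idea of a hidden state carried through time concrete, here is a minimal sketch of a generic stacked recurrent update. It is an assumption-laden illustration rather than the diagram's exact wiring: the scalar states, the `tanh` nonlinearity, the weights `w_in` and `w_rec`, and the function names `step` and `run` are all hypothetical choices.

```python
import math
from typing import List


def step(x_t: float, prev_states: List[float],
         w_in: float = 0.5, w_rec: float = 0.9) -> List[float]:
    """One time step for a stack of scalar recurrent layers (illustrative)."""
    new_states = []
    layer_input = x_t
    for h_prev in prev_states:
        # Each layer mixes its current input with its own previous state.
        h = math.tanh(w_in * layer_input + w_rec * h_prev)
        new_states.append(h)
        layer_input = h  # this layer's output is the next layer's input
    return new_states


def run(sequence: List[float], num_layers: int = 3) -> List[List[float]]:
    """Carry the per-layer hidden states forward across the whole sequence."""
    states = [0.0] * num_layers  # assumed zero initial state
    history = []
    for x_t in sequence:
        states = step(x_t, states)
        history.append(states)
    return history


# Only the first input is non-zero; later states are non-zero solely
# because the hidden state is carried forward.
history = run([1.0, 0.0, 0.0])
for t, states in enumerate(history, start=1):
    print(f"T={t}: {[round(h, 4) for h in states]}")
```

Setting `w_rec=0.0` removes the carried state, so a zero input then yields a zero state, which shows that any memory in this sketch comes from the recurrent term alone.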

The diagram contrasts systems that pass per-layer activations from one time step to the next with systems that maintain and update an internal state over time. The choice between these approaches depends on the nature of the data and the task at hand. If the data has strong temporal dependencies, a hidden state-based approach is likely to be more effective. If the data is largely independent across time steps, an activation-based approach may suffice.