## Diagram: Activation-Based vs. Hidden state-Based Architectures

### Overview

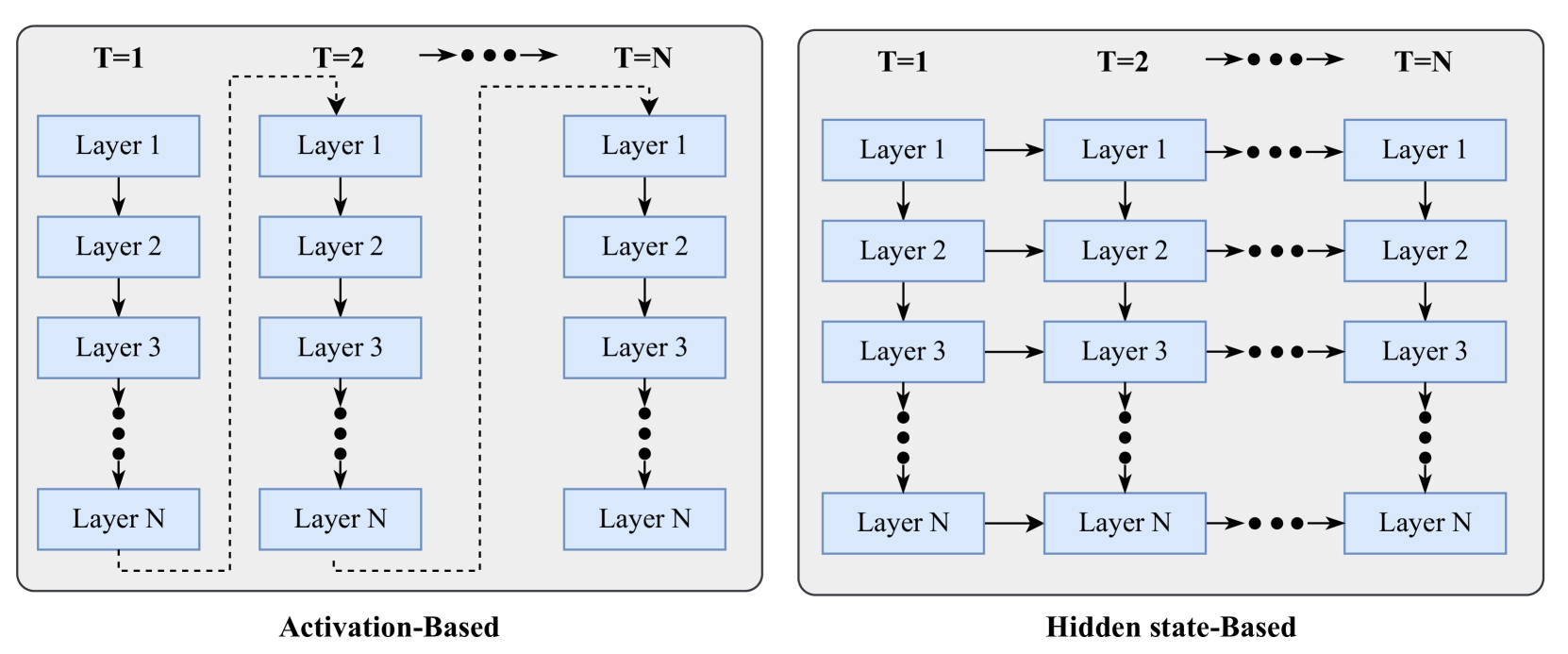

The image compares two neural network architectures: **Activation-Based** (left) and **Hidden state-Based** (right). Both diagrams depict multi-layered systems across sequential time steps (T=1 to T=N). The left diagram emphasizes vertical layer interactions, while the right focuses on horizontal state propagation.

### Components/Axes

- **Left Diagram (Activation-Based)**:

  - **Layers**: Vertically stacked blocks labeled "Layer 1" to "Layer N" (N unspecified).

  - **Time Steps**: Horizontally labeled T=1, T=2, ..., T=N (N unspecified).

  - **Connections**: Solid arrows flow downward between layers (e.g., Layer 1 → Layer 2 → ... → Layer N).

  - **Dotted Lines**: Connect identical layers across time steps (e.g., Layer 1 at T=1 ↔ Layer 1 at T=N).

- **Right Diagram (Hidden state-Based)**:

  - **Layers**: Vertically stacked blocks labeled "Layer 1" to "Layer N" (N unspecified).

  - **Time Steps**: Horizontally labeled T=1, T=2, ..., T=N (N unspecified).

  - **Connections**: Solid arrows flow horizontally between layers (e.g., Layer 1 → Layer 2 → ... → Layer N).

  - **Dotted Lines**: Connect identical layers across time steps (e.g., Layer 1 at T=1 ↔ Layer 1 at T=N).

### Detailed Analysis

- **Activation-Based (Left)**:

  - Vertical arrows suggest sequential processing through layers at each time step.

  - Dotted lines imply recurrent or feedback connections, enabling information retention across time steps.

  - No explicit activation functions or state variables are labeled.

- **Hidden state-Based (Right)**:

  - Horizontal arrows indicate state propagation between layers across time steps.

  - Dotted lines suggest shared hidden states or memory across time steps.

  - No explicit state variables or activation functions are labeled.
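
Because the diagram labels no state variables, the following is only one conventional way to write the two kinds of flow described above. The symbols $h$, $W$, $U$, $b$, and $f$ are assumptions introduced for illustration and do not appear in the image. In a standard stacked recurrent network, layer $l$ at time step $t$ combines the output of the layer below at the same step (vertical flow) with its own state from the previous step (horizontal flow):

$$
h_t^{(l)} = f\left(W^{(l)} h_t^{(l-1)} + U^{(l)} h_{t-1}^{(l)} + b^{(l)}\right), \qquad h_t^{(0)} = x_t
$$

Here $x_t$ is the input at step $t$ and $f$ is a nonlinearity such as $\tanh$. Whether either diagram is meant to depict exactly this update cannot be confirmed from the image alone.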

### Key Observations

1. **Flow Direction**:

   - Activation-Based: Vertical (layer-wise) processing.

   - Hidden state-Based: Horizontal (state-wise) propagation.

2. **Temporal Coupling**:

   - Both architectures use dotted lines to link layers across time steps, but their roles appear to differ:

     - Activation-Based: Likely recurrent feedback.

     - Hidden state-Based: Likely state retention for sequential dependencies.

3. **Layer Count**: Both diagrams label the last layer "Layer N" without giving a value for N (3 layers are shown, but N could be larger).

### Interpretation

- **Activation-Based Architecture**:

  - Resembles a stacked **recurrent neural network (RNN)** in which each layer’s activation is passed down to the next layer within a time step and retained across time steps via feedback. Such a structure could model temporal dependencies in sequential data.

- **Hidden state-Based Architecture**:

  - Resembles a **recurrent network** in which hidden states (layer outputs) are propagated horizontally from one time step to the next. This is consistent with architectures such as LSTMs and GRUs, which maintain state for sequential tasks.

- **Key Difference**:

  - Activation-Based emphasizes vertical layer interactions with feedback, while Hidden state-Based focuses on horizontal state transitions.

- **Uncertainties**:

  - The number of layers and the number of time steps are both labeled N, and neither is given a value.

  - No numerical values, activation functions, or state variables are labeled, limiting quantitative analysis.
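
To make the two flow directions in the interpretation above concrete, here is a minimal sketch of a stacked recurrent update in plain Python. It is an illustration under stated assumptions, not a reconstruction of either diagram: the function names, the scalar per-layer states, the `tanh` nonlinearity, and the weights `w_in` and `w_rec` are all hypothetical and do not appear in the image.

```python
import math

def stacked_rnn_step(x, h_prev, w_in, w_rec):
    """Advance every layer by one time step.

    x      : scalar input at this time step
    h_prev : per-layer hidden states from the previous time step
    w_in, w_rec : hypothetical per-layer scalar weights (illustration only)
    """
    h_new = []
    layer_input = x
    for layer in range(len(h_prev)):
        # Vertical flow: layer_input comes from the layer below, same time step.
        # Horizontal flow: h_prev[layer] comes from this layer, previous time step.
        h = math.tanh(w_in[layer] * layer_input + w_rec[layer] * h_prev[layer])
        h_new.append(h)
        layer_input = h  # this layer's output feeds the next layer
    return h_new

def run(xs, num_layers, w_in, w_rec):
    """Unroll the stack over a sequence, starting from all-zero states."""
    h = [0.0] * num_layers
    history = []
    for x in xs:
        h = stacked_rnn_step(x, h, w_in, w_rec)
        history.append(h)
    return history

# Three layers, three time steps; only the first step has a non-zero input.
history = run([1.0, 0.0, 0.0], num_layers=3, w_in=[0.5] * 3, w_rec=[0.9] * 3)
for t, states in enumerate(history, start=1):
    print(f"T={t}:", [round(s, 3) for s in states])
```

Setting every entry of `w_rec` to zero removes the horizontal path, so each time step is processed independently of the previous one; with a non-zero `w_rec`, the input from T=1 continues to influence later steps through the carried state.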

### Conclusion

The diagrams illustrate two approaches to modeling temporal data:

1. **Activation-Based**: Appears suited to tasks that need feedback across layers and time steps (e.g., sequence modeling with recurrent connections).

2. **Hidden state-Based**: Appears suited to sequential processing in which hidden states encode temporal context (e.g., language modeling).

Both diagrams show coupling across time steps but differ in how they depict layer interactions and state management.