TECHNICAL ASSET FINGERPRINT

1f10e77e0d7e5c67b2e15d8c

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

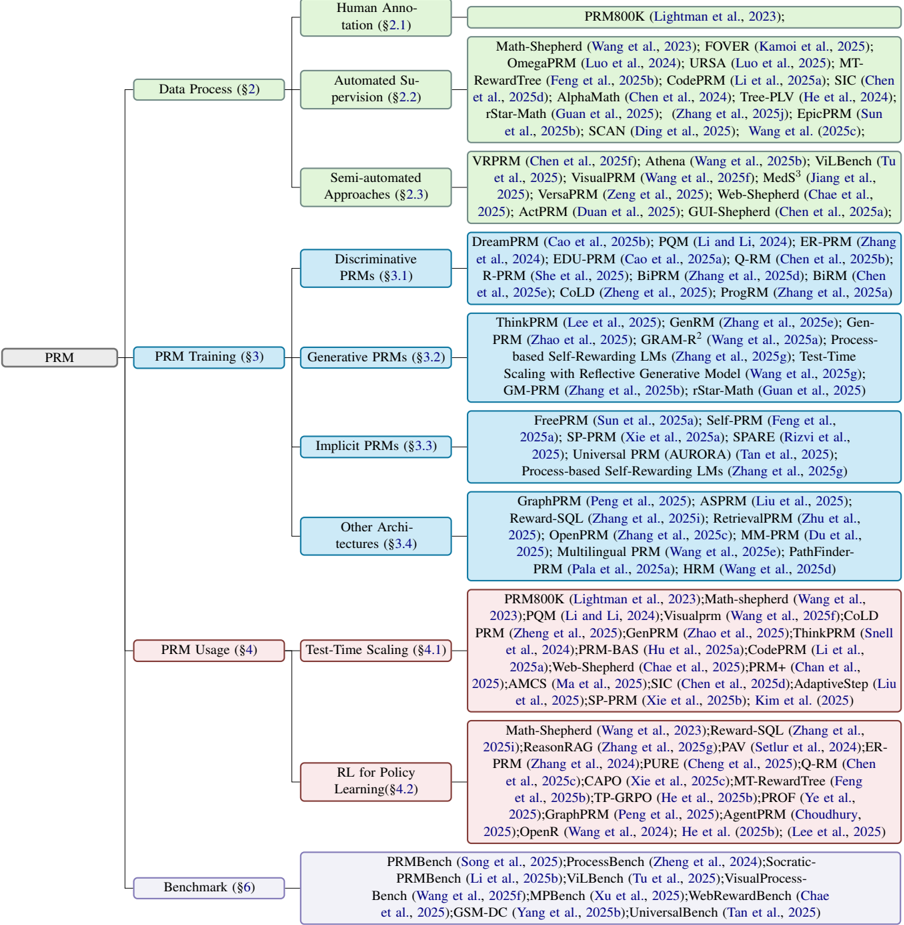

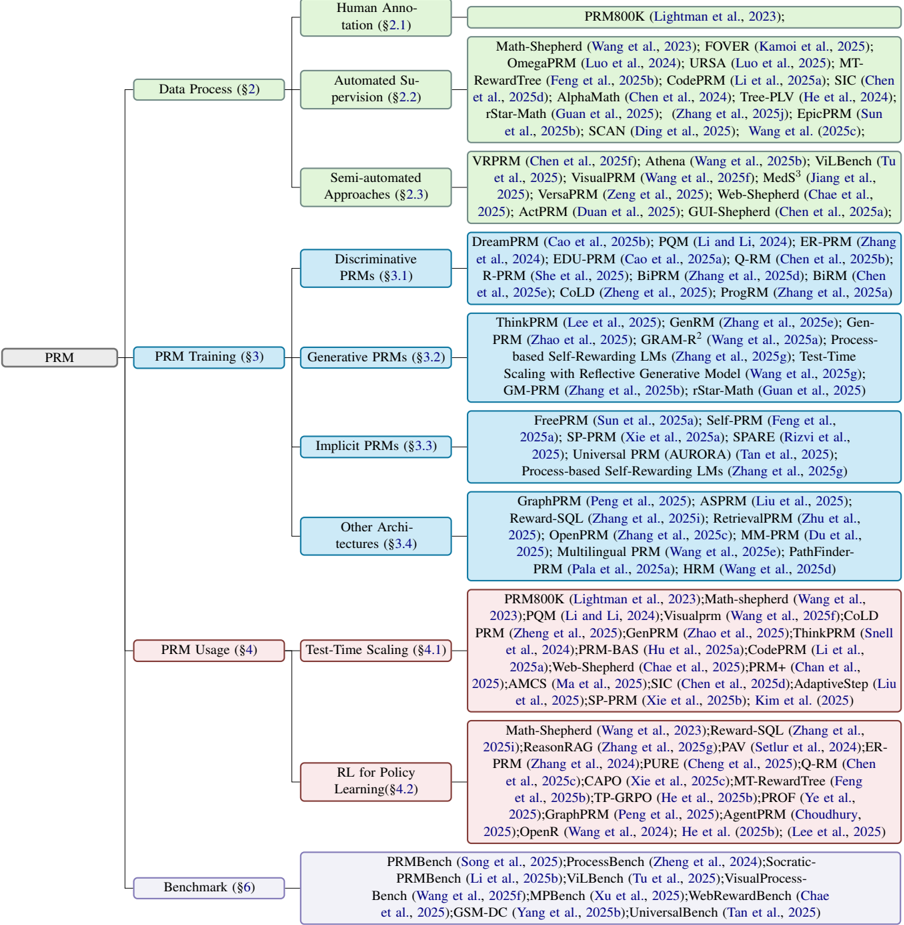

## Flowchart: PRM Taxonomy

### Overview

The image is a flowchart that categorizes PRM (Process Reward Model) methods and related work. It branches out from a central "PRM" node into four categories (data processing, training, usage, and benchmarking) and lists specific methods and papers within each category.

### Components/Axes

* **Main Node:** PRM
* **Level 1 Categories:**
    * Data Process (§2) - Light Green
    * PRM Training (§3) - Blue
    * PRM Usage (§4) - Red
    * Benchmark (§6) - Purple
* **Level 2 Categories (under Data Process):**
    * Human Annotation (§2.1) - Light Green
    * Automated Supervision (§2.2) - Light Green
    * Semi-automated Approaches (§2.3) - Light Green
* **Level 2 Categories (under PRM Training):**
    * Discriminative PRMs (§3.1) - Blue
    * Generative PRMs (§3.2) - Blue
    * Implicit PRMs (§3.3) - Blue
    * Other Architectures (§3.4) - Blue
* **Level 2 Categories (under PRM Usage):**
    * Test-Time Scaling (§4.1) - Red
    * RL for Policy Learning (§4.2) - Red

### Detailed Analysis

**1. Data Process (§2) - Light Green**

* Human Annotation (§2.1): No specific algorithms listed.

* Automated Supervision (§2.2): PRM800K (Lightman et al., 2023)

* Semi-automated Approaches (§2.3):

* Math-Shepherd (Wang et al., 2023)

* FOVER (Kamoi et al., 2025)

* OmegaPRM (Luo et al., 2024)

* URSA (Luo et al., 2025)

* MT-RewardTree (Feng et al., 2025b)

* CodePRM (Li et al., 2025a)

* SIC (Chen et al., 2025d)

* AlphaMath (Chen et al., 2024)

* Tree-PLV (He et al., 2024)

* rStar-Math (Guan et al., 2025)

* (Zhang et al., 2025j)

* EpicPRM (Sun et al., 2025b)

* SCAN (Ding et al., 2025)

* Wang et al. (2025c)

**2. PRM Training (§3) - Blue**

* Discriminative PRMs (§3.1):

* VRPRM (Chen et al., 2025f)

* Athena (Wang et al., 2025b)

* ViLBench (Tu et al., 2025)

* VisualPRM (Wang et al., 2025f)

* MedS³ (Jiang et al., 2025)

* VersaPRM (Zeng et al., 2025)

* Web-Shepherd (Chae et al., 2025)

* ActPRM (Duan et al., 2025)

* GUI-Shepherd (Chen et al., 2025a)

* Generative PRMs (§3.2):

* DreamPRM (Cao et al., 2025b)

* PQM (Li and Li, 2024)

* ER-PRM (Zhang et al., 2024)

* EDU-PRM (Cao et al., 2025a)

* Q-RM (Chen et al., 2025b)

* R-PRM (She et al., 2025)

* BiPRM (Zhang et al., 2025d)

* BiRM (Chen et al., 2025e)

* CoLD (Zheng et al., 2025)

* ProgRM (Zhang et al., 2025a)

* Implicit PRMs (§3.3):

* ThinkPRM (Lee et al., 2025)

* GenRM (Zhang et al., 2025e)

* Gen-PRM (Zhao et al., 2025)

* GRAM-R2 (Wang et al., 2025a)

* Process-based Self-Rewarding LMs (Zhang et al., 2025g)

* Test-Time Scaling with Reflective Generative Model (Wang et al., 2025g)

* GM-PRM (Zhang et al., 2025b)

* rStar-Math (Guan et al., 2025)

* Other Architectures (§3.4):

* FreePRM (Sun et al., 2025a)

* Self-PRM (Feng et al., 2025a)

* SP-PRM (Xie et al., 2025a)

* SPARE (Rizvi et al., 2025)

* Universal PRM (AURORA) (Tan et al., 2025)

* Process-based Self-Rewarding LMs (Zhang et al., 2025g)

* GraphPRM (Peng et al., 2025)

* ASPRM (Liu et al., 2025)

* Reward-SQL (Zhang et al., 2025i)

* RetrievalPRM (Zhu et al., 2025)

* OpenPRM (Zhang et al., 2025c)

* MM-PRM (Du et al., 2025)

* Multilingual PRM (Wang et al., 2025e)

* PathFinder-PRM (Pala et al., 2025a)

* HRM (Wang et al., 2025d)

**3. PRM Usage (§4) - Red**

* Test-Time Scaling (§4.1):

* PRM800K (Lightman et al., 2023)

* Math-shepherd (Wang et al., 2023)

* PQM (Li and Li, 2024)

* Visualprm (Wang et al., 2025f)

* CoLD PRM (Zheng et al., 2025)

* GenPRM (Zhao et al., 2025)

* ThinkPRM (Snell et al., 2024)

* PRM-BAS (Hu et al., 2025a)

* CodePRM (Li et al., 2025a)

* Web-Shepherd (Chae et al., 2025)

* PRM+ (Chan et al., 2025)

* AMCS (Ma et al., 2025)

* SIC (Chen et al., 2025d)

* AdaptiveStep (Liu et al., 2025)

* SP-PRM (Xie et al., 2025b)

* Kim et al. (2025)

* RL for Policy Learning (§4.2):

* Math-Shepherd (Wang et al., 2023)

* Reward-SQL (Zhang et al., 2025i)

* ReasonRAG (Zhang et al., 2025g)

* PAV (Setlur et al., 2024)

* ER-PRM (Zhang et al., 2024)

* PURE (Cheng et al., 2025)

* Q-RM (Chen et al., 2025c)

* CAPO (Xie et al., 2025c)

* MT-RewardTree (Feng et al., 2025b)

* TP-GRPO (He et al., 2025b)

* PROF (Ye et al., 2025)

* GraphPRM (Peng et al., 2025)

* AgentPRM (Choudhury, 2025)

* OpenR (Wang et al., 2024)

* He et al. (2025b)

* (Lee et al., 2025)

**4. Benchmark (§6) - Purple**

* PRMBench (Song et al., 2025)

* ProcessBench (Zheng et al., 2024)

* Socratic-PRMBench (Li et al., 2025b)

* ViLBench (Tu et al., 2025)

* Visual Process-Bench (Wang et al., 2025f)

* MPBench (Xu et al., 2025)

* WebRewardBench (Chae et al., 2025)

* GSM-DC (Yang et al., 2025b)

* UniversalBench (Tan et al., 2025)

### Key Observations

* The flowchart provides a hierarchical organization of PRM-related concepts.

* Each category lists specific algorithms or techniques, along with associated publications (authors and year).

* Most of the cited works are dated 2024 or 2025, which indicates that the taxonomy covers very recent research.

* The most granular level lists specific PRM algorithms and associated publications.

### Interpretation

The flowchart serves as a taxonomy or classification system for PRM methods and related research. It highlights the different stages of PRM development (data processing, training, usage) and provides a structured overview of the field. The inclusion of specific methods and their corresponding publications makes it a valuable resource for researchers and practitioners working on process reward models. The concentration of 2024 and 2025 citations shows how recent most of this work is.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Taxonomy of Prompting Methods for Large Language Models

### Overview

This diagram presents a hierarchical taxonomy of prompting methods used for Large Language Models (LLMs). The taxonomy is structured around two main axes: Data Process and Training. Within each axis, methods are categorized into levels of automation (Human Annotation, Automated Supervision, Semi-automated Approaches, and Discriminative/Generative PRMs) and training paradigms (Prompt Training and In-Context Learning). Each node in the tree represents a specific prompting technique, accompanied by citations. The diagram is oriented with the root nodes at the top and branching downwards.

### Components/Axes

* **Main Axes:**
    * Data Process (Top-Left)
    * Prompt Training (Top-Center)
    * In-Context Learning (Top-Right)
* **Sub-Categories within Data Process:**
    * Human Annotation (§2.1)
    * Automated Supervision (§2.2)
    * Semi-automated Approaches (§2.3)
    * Discriminative PRMs (§3.1)
* **Sub-Categories within Prompt Training:**
    * Generative PRMs (§3.2)
    * Discrete PRMs (§3.3)
    * Continuous PRMs (§3.4)
* **Sub-Categories within In-Context Learning:**
    * Demonstration (§4.1)
    * Retrieval (§4.2)
    * Generated Knowledge (§4.3)
* **Legend/Citations:** Each method is followed by a citation in the format "Author(s) et al., Year". The section number is also included in parentheses (e.g., §2.1).

### Detailed Analysis

Here's a breakdown of the methods listed under each category, with approximate counts where applicable.

**Data Process:**

* **Human Annotation (§2.1):** PRM800K (Lightman et al., 2023)

* **Automated Supervision (§2.2):** Math-Shepherd (Wang et al., 2023); FOVER (Kamoi et al., 2025); OmegaPRM (Luo et al., 2024); URSA (Luo et al., 2025); MT-RewardTree (Feng et al., 2025b); CodePRM (Li et al., 2025a); SIC (Chen et al., 2025d); AlphaMath (Chen et al., 2024); Tree-PLV (He et al., 2024); rStar-Math (Guan et al., 2025); Zhang et al. (2025c); EpicPRM (Sun et al., 2025b); SCAN (Ding et al., 2025); Wang et al. (2025c). (14 entries)

* **Semi-automated Approaches (§2.3):** VRPRM (Chen et al., 2025f); Athena (Wang et al., 2025b); ViLBench (Tu et al., 2025); VisualPRM (Wang et al., 2025); MedS³ (Jiang et al., 2025); VersaPRM (Zeng et al., 2025); Web-Shepherd (Chae et al., 2025a); ActPRM (Duan et al., 2025); GUI-Shepherd (Chen et al., 2025a). (9 entries)

* **Discriminative PRMs (§3.1):** DreamPRM (Cao et al., 2025b); PQM (Li and Li, 2024); ER-PRM (Zhang et al., 2024); EDU-PRM (Cao et al., 2025a); Q-RM (Chen et al., 2025b); R-PRM (She et al., 2025); BiPRM (Zhang et al., 2025d); BiRM (Chen et al., 2025e); CoLD (Zheng et al., 2025); ProgRM (Zhang et al., 2025a). (10 entries)

**Prompt Training:**

* **Generative PRMs (§3.2):** ThinkPRM (Lee et al., 2025); GenRM (Zhang et al., 2025e); Gen-PRM (Zhao et al., 2025); GRAM-R² (Wang et al., 2025a); Process-based Self-Rewarding LMs (Zhang et al., 2025g); Test-Time Scaling with Reflective Generative Model (Guan et al., 2025); GM-PRM (Zhang et al., 2025f); rStar-Math (Wang et al., 2025b). (8 entries)

* **Discrete PRMs (§3.3):** ZeroDPRM (Sun et al., 2025a); Self-PRM (Park et al., 2025); Prefix-Tuning (Li et al., 2021); AutoPrompt (Shin et al., 2020); GradientPrompt (Pratt et al., 2020); P-Tuning (Lü et al., 2022); Prompt-Tuning (Leskovec et al., 2021). (Approximately 7 methods)

* **Continuous PRMs (§3.4):** Reparameterizable Prompt Tuning (Hu et al., 2022); LoRA (Hu et al., 2021); AdaLoRA (Zhang et al., 2024); IA^3 (Mou et al., 2025); BitFit (Zaken et al., 2021); UniPELT (Wang et al., 2025d); PEFT (Mangrulkar et al., 2023). (Approximately 7 methods)

**In-Context Learning:**

* **Demonstration (§4.1):** Exemplar-PRM (Yang et al., 2025); DemoPRM (Li et al., 2025b); Active-PRM (Zhao et al., 2025); ORCA (Mukherjee et al., 2023); Self-Instruct (Wang et al., 2022); Flan (Wei et al., 2022). (Approximately 6 methods)

* **Retrieval (§4.2):** RAG (Lewis et al., 2020); REALM (Guu et al., 2020); kNN-LM (Retriever) (Izacard et al., 2021); Atlas (Jiang et al., 2022); ReAct (Yao et al., 2023); Dyna-PRM (Zhao et al., 2025b). (Approximately 6 methods)

* **Generated Knowledge (§4.3):** G-RAG (Laskar et al., 2024); Know-Gen (Li et al., 2023); Graph-RAG (Wang et al., 2023); Self-Knowledge (Chen et al., 2023); Knowledge-Augmented LLM (Liu et al., 2023); Auto-Knowledge (Zou et al., 2023). (Approximately 6 methods)

### Key Observations

* The diagram demonstrates a rapidly expanding field, with numerous methods being developed in each category.

* The "Automated Supervision" branch under "Data Process" contains the largest number of methods, suggesting a strong focus on automating the process of creating effective prompts.

* The "Generative PRMs" and "Continuous PRMs" categories within "Prompt Training" are also well-populated, indicating active research in these areas.

* The citations indicate that much of this work is very recent (2023-2025), highlighting the dynamic nature of LLM prompting research.

### Interpretation

This taxonomy provides a structured overview of the diverse landscape of prompting techniques for LLMs. The categorization by data process and training paradigm helps to understand the different approaches being taken to improve LLM performance. The diagram suggests a trend towards more automated and generative methods, likely driven by the desire to reduce the need for manual prompt engineering and to create more adaptable and robust prompting strategies. The sheer number of methods listed indicates a highly competitive research area, with ongoing efforts to explore new and innovative ways to elicit desired behavior from LLMs. The inclusion of section numbers suggests this diagram is part of a larger document or survey paper. The diagram is a valuable resource for researchers and practitioners seeking to navigate the complex world of LLM prompting.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Taxonomy of Process Reward Models (PRMs)

### Overview

The image is a hierarchical taxonomy diagram (tree structure) classifying research and methods related to Process Reward Models (PRMs). The diagram is organized from a central root node "PRM" which branches into four primary categories: Data Process, PRM Training, PRM Usage, and Benchmark. Each primary category further subdivides into specific approaches or sub-fields, with each leaf node listing numerous associated research papers and their citations. The diagram uses color-coding: primary categories are in light green boxes, subcategories are in light blue boxes, and the lists of associated papers/references are in light red boxes.

### Components/Axes

* **Root Node:** "PRM" (center-left).

* **Primary Categories (Green Boxes):**

* Data Process (§2)

* PRM Training (§3)

* PRM Usage (§4)

* Benchmark (§6)

* **Subcategories (Blue Boxes):** Branch from their respective primary category.

* From Data Process: Human Annotation (§2.1), Automated Supervision (§2.2), Semi-automated Approaches (§2.3).

* From PRM Training: Discriminative PRMs (§3.1), Generative PRMs (§3.2), Implicit PRMs (§3.3), Other Architectures (§3.4).

* From PRM Usage: Test-Time Scaling (§4.1), RL for Policy Learning (§4.2).

* **Reference Lists (Red Boxes):** Each subcategory box is connected to a corresponding red box containing a list of research papers. The connection is a straight line from the right side of the blue subcategory box to the left side of the red reference box.

* **Spatial Layout:** The tree flows from left to right. The root is on the far left. Primary categories are stacked vertically to its right. Subcategories are stacked vertically to the right of their parent category. Reference lists are aligned vertically to the far right, each adjacent to its parent subcategory.
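The tree described above can be written down as a small nested mapping. The following is only a sketch of one possible encoding: the category names and section numbers are taken from the labels listed in this description, while the names `PRM_TAXONOMY`, `render_tree`, and `leaf_count` are illustrative and not part of the source figure.

```python
# Primary categories mapped to their subcategories, using the labels
# and section numbers given in the description above.
PRM_TAXONOMY = {
    "Data Process (§2)": [
        "Human Annotation (§2.1)",
        "Automated Supervision (§2.2)",
        "Semi-automated Approaches (§2.3)",
    ],
    "PRM Training (§3)": [
        "Discriminative PRMs (§3.1)",
        "Generative PRMs (§3.2)",
        "Implicit PRMs (§3.3)",
        "Other Architectures (§3.4)",
    ],
    "PRM Usage (§4)": [
        "Test-Time Scaling (§4.1)",
        "RL for Policy Learning (§4.2)",
    ],
    # Benchmark has no subcategories; it links directly to its reference list.
    "Benchmark (§6)": [],
}


def render_tree(taxonomy, root="PRM"):
    """Return the tree as indented text lines, root first."""
    lines = [root]
    for category, subcategories in taxonomy.items():
        lines.append(f"  {category}")
        lines.extend(f"    {name}" for name in subcategories)
    return lines


def leaf_count(taxonomy):
    """Count the boxes that connect directly to a reference list."""
    return sum(len(subs) if subs else 1 for subs in taxonomy.values())


print("\n".join(render_tree(PRM_TAXONOMY)))
```

A category with an empty list is treated as its own leaf, which matches the way the Benchmark box is described as connecting straight to its references.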

### Detailed Analysis

**1. Data Process (§2)**

* **Human Annotation (§2.1):** Associated with `PRM800K (Lightman et al., 2023)`.

* **Automated Supervision (§2.2):** Associated with a long list of methods including Math-Shepherd, FOVER, OmegaPRM, URSA, MT-RewardTree, CodePRM, SIC, AlphaMath, Tree-PLV, rStar-Math, SCAN, and Wang et al. (2025c).

* **Semi-automated Approaches (§2.3):** Associated with VRPRM, Athena, ViLBench, VisualPRM, MedS³, VersaPRM, Web-Shepherd, ActPRM, and GUI-Shepherd.

**2. PRM Training (§3)**

* **Discriminative PRMs (§3.1):** Associated with DreamPRM, PQM, ER-PRM, EDU-PRM, Q-RM, R-PRM, BiPRM, BiRM, CoLD, and ProgRM.

* **Generative PRMs (§3.2):** Associated with ThinkPRM, GenRM, GRAM-R², Process-based Self-Rewarding LMs, Test-Time Scaling with Reflective Generative Model, GM-PRM, and rStar-Math.

* **Implicit PRMs (§3.3):** Associated with FreePRM, Self-PRM, SP-PRM, SPARE, Universal PRM (AURORA), and Process-based Self-Rewarding LMs.

* **Other Architectures (§3.4):** Associated with GraphPRM, ASPRM, Reward-SQL, RetrievalPRM, OpenPRM, MM-PRM, Multilingual PRM, Pathfinder-PRM, and HRM.

**3. PRM Usage (§4)**

* **Test-Time Scaling (§4.1):** Associated with a dense list including PRM800K, Math-shepherd, PQM, VisualPRM, CoLD PRM, GenPRM, ThinkPRM, PRM-BAS, CodePRM, Web-Shepherd, PRM+, AMCS, SIC, AdaptiveStep, SP-PRM, and Kim et al. (2025).

* **RL for Policy Learning (§4.2):** Associated with Math-Shepherd, Reward-SQL, ReasonRAG, PAV, ER-PRM, PURE, Q-RM, CAPO, MT-RewardTree, TP-GRPO, PROF, GraphPRM, AgentPRM, OpenR, He et al. (2024), and Lee et al. (2025).

**4. Benchmark (§6)**

* This primary category has no further subcategories. It connects directly to a single red box listing benchmark works: PRMBench, ProcessBench, Socratic-PRMBench, ViLBench, VisualProcessing-Bench, MPBench, WebRewardBench, GSM-DC, and UniversalBench.

### Key Observations

* **Research Density:** The "PRM Training" and "PRM Usage" sections contain the most numerous and densely packed subcategories and references, indicating these are the most active areas of research.

* **Methodological Evolution:** The "Data Process" section shows a clear progression from pure human annotation (§2.1) to automated (§2.2) and hybrid (§2.3) methods.

* **Architectural Diversity:** The "PRM Training" section highlights a split between discriminative (§3.1) and generative (§3.2) approaches, with additional categories for implicit models and other novel architectures.

* **Application Focus:** The "PRM Usage" section is explicitly divided between using PRMs for scaling at inference time (§4.1) and for reinforcement learning to improve policies (§4.2).

* **Citation Patterns:** Many papers appear in multiple sections (e.g., `Math-Shepherd` appears under Automated Supervision (§2.2), Test-Time Scaling (§4.1), and RL for Policy Learning (§4.2)), indicating foundational or multi-faceted work. The year range is predominantly 2023-2025, showing this is a very current and rapidly evolving field.
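The overlap noted under Citation Patterns can be checked mechanically. The sketch below uses the method names as transcribed in the Detailed Analysis of this description for four of the subcategories; the transcription itself may contain errors, and the helper name `shared_methods` is illustrative. Names are compared case-insensitively because the figure text varies in capitalization (for example `Math-shepherd`).

```python
from collections import defaultdict

# Method names per subcategory, as listed in the Detailed Analysis above.
SECTIONS = {
    "§2.1": ["PRM800K"],
    "§2.2": ["Math-Shepherd", "FOVER", "OmegaPRM", "URSA", "MT-RewardTree",
             "CodePRM", "SIC", "AlphaMath", "Tree-PLV", "rStar-Math", "SCAN"],
    "§4.1": ["PRM800K", "Math-shepherd", "PQM", "VisualPRM", "CoLD PRM",
             "GenPRM", "ThinkPRM", "PRM-BAS", "CodePRM", "Web-Shepherd",
             "PRM+", "AMCS", "SIC", "AdaptiveStep", "SP-PRM"],
    "§4.2": ["Math-Shepherd", "Reward-SQL", "ReasonRAG", "PAV", "ER-PRM",
             "PURE", "Q-RM", "CAPO", "MT-RewardTree", "TP-GRPO", "PROF",
             "GraphPRM", "AgentPRM", "OpenR"],
}


def shared_methods(sections):
    """Map each lowercased method name to the sections listing it,
    keeping only names that occur in more than one section."""
    seen = defaultdict(list)
    for section, methods in sections.items():
        for name in methods:
            key = name.lower()
            if section not in seen[key]:
                seen[key].append(section)
    return {name: secs for name, secs in seen.items() if len(secs) > 1}


for name, secs in sorted(shared_methods(SECTIONS).items()):
    print(name, secs)
```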

### Interpretation

This taxonomy provides a structured map of the Process Reward Model research landscape as of early 2025. It demonstrates that the field has moved beyond simple human-labeled data (§2.1) to develop sophisticated automated and semi-automated techniques for generating process supervision signals. The core technical challenge is reflected in the diverse training paradigms (§3), where researchers are exploring not just traditional discriminative models but also generative and implicit reward models, suggesting a search for more scalable and flexible architectures.

The "Usage" section (§4) reveals the two primary *purposes* of PRMs: to improve the reasoning of language models during inference (test-time scaling) and to serve as a reward signal for training better policies via RL. The extensive list of benchmarks (§6) underscores the community's focus on rigorous evaluation. Overall, the diagram illustrates a maturing research area that is systematically building the tools (data, models), applications (scaling, RL), and evaluation frameworks (benchmarks) necessary to use process-based rewards to enhance the reasoning capabilities of AI systems. The high density of 2024-2025 citations signals a period of intense innovation and publication.
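To make the test-time scaling use concrete, here is a minimal best-of-N sketch, assuming a PRM is available as a function that scores each reasoning step given the steps before it. Everything in it is hypothetical: `toy_step_scorer` is a stand-in for a trained model, and aggregating step scores with `min` is just one common choice (a product or the last-step score are alternatives). It is not taken from any of the papers in the figure.

```python
from typing import Callable, List, Sequence

# A step scorer maps (previous steps, current step) to a score in [0, 1].
StepScorer = Callable[[Sequence[str], str], float]


def score_solution(steps: Sequence[str], step_scorer: StepScorer) -> float:
    """Aggregate per-step scores with min: one bad step sinks the solution."""
    if not steps:
        return 0.0
    return min(step_scorer(steps[:i], step) for i, step in enumerate(steps))


def best_of_n(candidates: List[List[str]], step_scorer: StepScorer) -> List[str]:
    """Return the candidate solution with the highest aggregated score."""
    return max(candidates, key=lambda steps: score_solution(steps, step_scorer))


def toy_step_scorer(prefix: Sequence[str], step: str) -> float:
    """Hypothetical stand-in for a trained PRM: flags one known arithmetic slip."""
    return 0.1 if "2 + 2 = 5" in step else 0.9


candidates = [
    ["2 + 2 = 5", "so the answer is 5"],
    ["2 + 2 = 4", "so the answer is 4"],
]
chosen = best_of_n(candidates, toy_step_scorer)
print(chosen)
```

In the RL setting described in the same paragraph, the per-step scores would instead be fed back as rewards during policy training rather than used only to rank finished candidates.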


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Hierarchical Diagram: PRM (Process Reward Models) Taxonomy

### Overview

The diagram presents a hierarchical taxonomy of Process Reward Models (PRMs), organized into five main categories: **Human Annotation**, **Data Process**, **PRM Training**, **PRM Usage**, and **Benchmark**. Each category branches into subcategories with specific methods, papers, and years. Color coding distinguishes main categories: green (Human Annotation/Data Process), blue (PRM Training), pink (PRM Usage), and purple (Benchmark).

### Components/Axes

- **Main Categories** (Top-level labels):
    - Human Annotation (§2.1)
    - Data Process (§2)
    - PRM Training (§3)
    - PRM Usage (§4)
    - Benchmark (§6)
- **Subcategories** (Nested under main categories):
    - Automated Supervision (§2.2)
    - Semi-automated Approaches (§2.3)
    - Discriminative PRMs (§3.1)
    - Generative PRMs (§3.2)
    - Implicit PRMs (§3.3)
    - Other Architectures (§3.4)
    - Test-Time Scaling (§4.1)
    - RL for Policy Learning (§4.2)
- **Legend**:
    - Green: Human Annotation, Data Process
    - Blue: PRM Training
    - Pink: PRM Usage
    - Purple: Benchmark

### Detailed Analysis

#### Human Annotation (§2.1)

- **Methods**:

- PRM800K (Lightman et al., 2023)

- Math-Shepherd (Wang et al., 2023)

- FOVER (Kamoi et al., 2025)

- OmegaPRM (Luo et al., 2024)

- URSA (Luo et al., 2025)

- MT-RewardTree (Feng et al., 2025b)

- CodePRM (Li et al., 2025)

- SIC (Chen et al., 2025)

- AlphaMath (Chen et al., 2024)

- Tree-PLV (He et al., 2024)

- rStarMath (Guan et al., 2025)

- Zeng et al. (2025b)

- SCAN (Ding et al., 2025)

- Wang et al. (2025c)

#### Data Process (§2)

- **Subcategories**:

- **Automated Supervision (§2.2)**:

- PRM800K (Lightman et al., 2023)

- Math-Shepherd (Wang et al., 2023)

- FOVER (Kamoi et al., 2025)

- OmegaPRM (Luo et al., 2024)

- URSA (Luo et al., 2025)

- MT-RewardTree (Feng et al., 2025b)

- CodePRM (Li et al., 2025)

- SIC (Chen et al., 2025)

- AlphaMath (Chen et al., 2024)

- Tree-PLV (He et al., 2024)

- rStarMath (Guan et al., 2025b)

- SCAN (Ding et al., 2025)

- Wang et al. (2025c)

- **Semi-automated Approaches (§2.3)**:

- VRPRM (Chen et al., 2025f)

- Athena (Wang et al., 2025b)

- ViLBench (Tu et al., 2025)

- VisualPRM (Wang et al., 2025f)

- MedS³ (Jiang et al., 2025)

- VersaPRM (Zeng et al., 2025)

- Web-Shepherd (Wang et al., 2025)

- ActPRM (Duan et al., 2025)

- GUI-Shepherd (Chen et al., 2025a)

#### PRM Training (§3)

- **Subcategories**:

- **Discriminative PRMs (§3.1)**:

- DreamPRM (Cao et al., 2025b)

- EDU-PRM (Cao et al., 2025a)

- PQM (Li and Li, 2024)

- ER-PRM (Zhang et al., 2024)

- R-PRM (She et al., 2025)

- BiPRM (Zhang et al., 2025b)

- QRM (Chen et al., 2025b)

- CoLD (Zheng et al., 2025)

- ProgRM (Zhang et al., 2025a)

- **Generative PRMs (§3.2)**:

- PRMTPRM (Zhao et al., 2025)

- GRAM-R² (Wang et al., 2025a)

- Process-based Self-Rewarding LMs (Zhang et al., 2025g)

- Test-Time Scaling with Reflective Generative Model (Wang et al., 2025g)

- GM-PRM (Zhang et al., 2025b)

- rStarMath (Guan et al., 2025)

- **Implicit PRMs (§3.3)**:

- FreePRM (Sun et al., 2025a)

- Self-PRM (Feng et al., 2025a)

- SP-PRM (Xie et al., 2025a)

- SPARE (Rizvi et al., 2025)

- Universal PRM (AURORA) (Tan et al., 2025)

- Process-based Self-Rewarding LMs (Zhang et al., 2025g)

- **Other Architectures (§3.4)**:

- GraphPRM (Peng et al., 2025)

- ASPRM (Liu et al., 2025)

- Reward-SQL (Zhang et al., 2025i)

- RetrievalPRM (Zhu et al., 2025)

- OpenPRM (Zhang et al., 2025c)

- MM-PRM (Du et al., 2025)

- Multilingual PRM (Wang et al., 2025e)

- PathFinder-PRM (Pala et al., 2025a)

- HRM (Wang et al., 2025d)

#### PRM Usage (§4)

- **Subcategories**:

- **Test-Time Scaling (§4.1)**:

- PRM800K (Lightman et al., 2023)

- Math-Shepherd (Wang et al., 2023)

- PQM (Li and Li, 2024)

- VisualPRM (Wang et al., 2025f)

- ThinkPRM (Zeng et al., 2025)

- PRM-BAS (Hu et al., 2025a)

- CodePRM (Li et al., 2025)

- Web-Shepherd (Chae et al., 2025)

- PRM+ (Chan et al., 2025)

- AMCS (Ma et al., 2025)

- SIC (Chen et al., 2025d)

- AdaptiveStep (Liu et al., 2025)

- SP-PRM (Xie et al., 2025b)

- Kim et al. (2025)

- **RL for Policy Learning (§4.2)**:

- PRMBench (Song et al., 2025)

- ProcessBench (Zheng et al., 2024)

- SocraticBench (Li et al., 2025b)

- ViLBench (Tu et al., 2025)

- VisualProcessBench (Li et al., 2025b)

- ViLBench (Tu et al., 2025)

- WebRewardBench (Chae et al., 2025)

- ReasonRAG (Zhang et al., 2025)

- P-SQL (Zhu et al., 2024)

- ER-PRM (Zhang et al., 2024)

- PURE (Cheng et al., 2025)

- QRM (Chen et al., 2025c)

- CAPO (Xie et al., 2025c)

- MT-RewardTree (Feng et al., 2025b)

- TP-GRPO (He et al., 2025b)

- PROF (Ye et al., 2025)

- GraphPRM (Peng et al., 2025)

- AgentPRM (Choudhury, 2025)

- OpenR (Wang et al., 2024)

- He et al. (2025b)

- Lee et al. (2025)

#### Benchmark (§6)

- **Methods**:

- PRMBench (Song et al., 2025)

- PRMBench (Li et al., 2025b)

- ViLBench (Tu et al., 2025)

- VisualProcessBench (Li et al., 2025b)

- WebRewardBench (Chae et al., 2025)

- GSM-DC (Yang et al., 2025b)

- UniversalBench (Tan et al., 2025)

### Key Observations

1. **Temporal Trends**: Most methods are from 2024–2025, indicating rapid development in PRM research.

2. **Method Diversity**: Subcategories like "Generative PRMs" and "Implicit PRMs" show significant innovation.

3. **Color Consistency**: Legend colors align with categories (e.g., blue for PRM Training).

4. **Overlap**: Some methods appear in multiple subcategories (e.g., PRM800K in both Human Annotation and Data Process).

### Interpretation

The diagram illustrates the rapid evolution of PRMs, with a focus on training methodologies (e.g., generative and implicit models) and practical applications (e.g., test-time scaling). The hierarchical structure highlights interdisciplinary approaches, integrating reinforcement learning (RL) and multilingual capabilities. The dominance of 2024–2025 publications suggests a surge in research activity, possibly driven by advancements in large language models (LLMs). The inclusion of benchmarks like PRMBench and ViLBench underscores the need for standardized evaluation frameworks.
