TECHNICAL ASSET FINGERPRINT

1f34c424b497ca53597a0d94

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

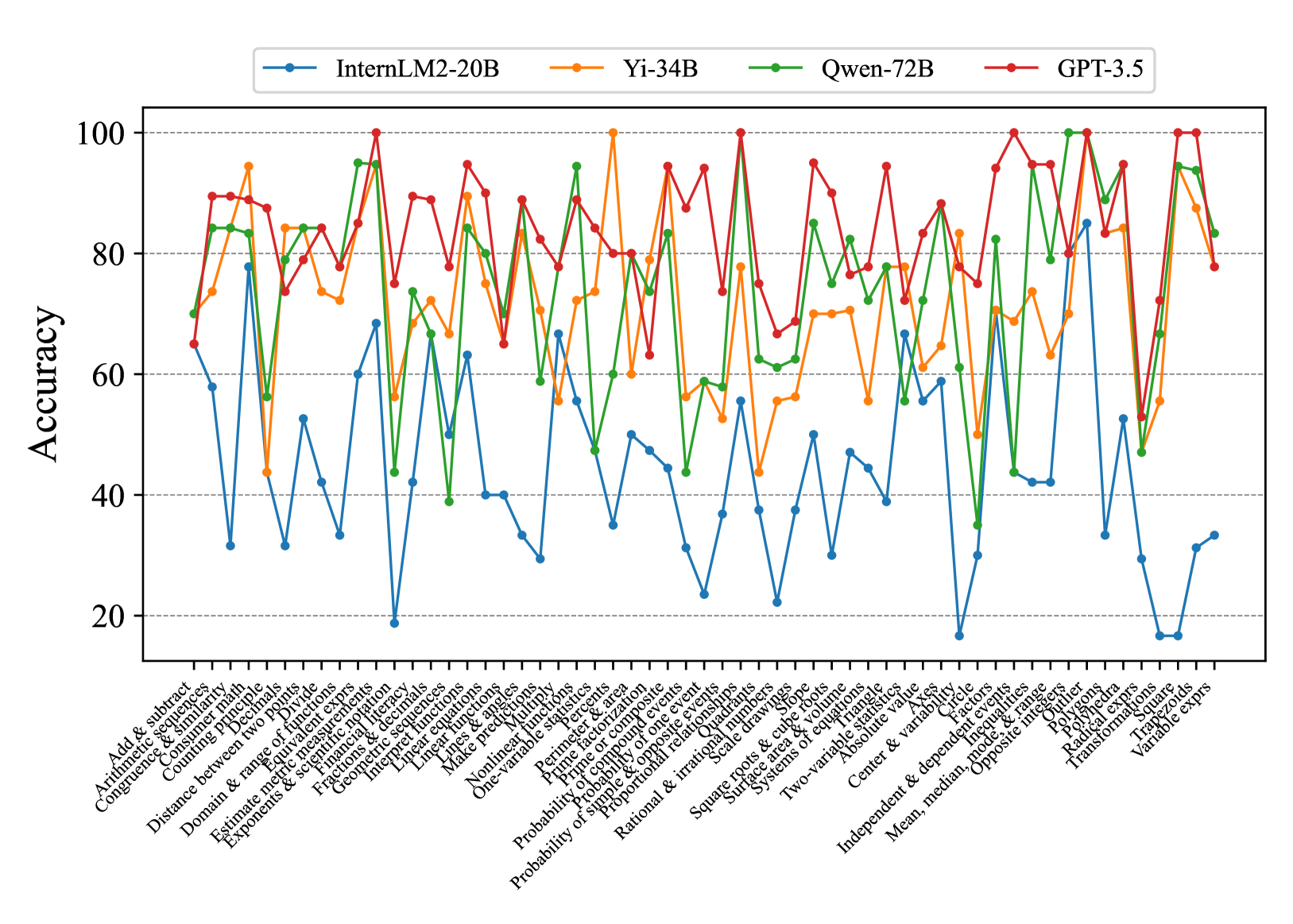

## Chart: Model Accuracy Across Mathematical Tasks

### Overview

The image is a line chart comparing the accuracy of four different language models (InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5) across a range of mathematical tasks. The x-axis represents different mathematical concepts, and the y-axis represents the accuracy score, ranging from 0 to 100.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Represents different mathematical tasks/concepts. The labels are rotated for readability. The labels are:
    * Add & subtract
    * Arithmetic sequences
    * Congruence & similarity
    * Counting principle
    * Decimals
    * Distance between two points
    * Divide
    * Domain & range of functions
    * Estimate metric measurements
    * Equivalent exprs
    * Exponents & scientific notation
    * Financial literacy
    * Fractions & decimals
    * Geometric sequences
    * Interpret functions
    * Linear equations
    * Linear functions
    * Lines & angles
    * Make predictions
    * Multiply
    * Nonlinear functions
    * One-variable statistics
    * Percents
    * Perimeter & area
    * Prime factorization
    * Prime or composite
    * Probability of compound events
    * Probability of one event
    * Probability of simple & opposite events
    * Proportional relationships
    * Quadrants
    * Radical exprs
    * Rational & irrational numbers
    * Scale drawings
    * Square roots & cube roots
    * Square
    * Surface area & volume
    * Systems of equations
    * Transformations
    * Trapezoids
    * Triangle
    * Two-variable statistics
    * Variable exprs
    * Absolute value
    * Axes
    * Center & variability
    * Circle
    * Factors
    * Independent & dependent events
    * Inequalities
    * Mean, median, mode, & range
    * Opposite integers
    * Outlier
    * Polygons
    * Polyhedra

* **Y-axis:** Represents "Accuracy" with a scale from 0 to 100, incrementing by 20. Horizontal gridlines are present at each increment.

* **Legend:** Located at the top of the chart.
    * Blue: InternLM2-20B
    * Orange: Yi-34B
    * Green: Qwen-72B
    * Red: GPT-3.5

### Detailed Analysis

* **InternLM2-20B (Blue):** Generally shows the lowest accuracy across most tasks. The accuracy fluctuates significantly, with several points below 40 and some spikes around 60-70. It performs particularly poorly on "Outlier", "Radical exprs", "Trapezoids", and "Variable exprs".

* **Yi-34B (Orange):** Shows a more consistent performance than InternLM2-20B, generally staying between 60 and 90. It has some dips but fewer extreme lows.

* **Qwen-72B (Green):** Performs comparably to Yi-34B, often overlapping. It shows strong performance on "Transformations" and "Variable exprs", reaching near 100 accuracy.

* **GPT-3.5 (Red):** Generally exhibits the highest accuracy across most tasks, frequently scoring above 80 and often reaching 100. It shows consistently strong performance across the board.

**Specific Data Points (Approximate):**

* **Add & subtract:**
    * InternLM2-20B: ~65
    * Yi-34B: ~75
    * Qwen-72B: ~85
    * GPT-3.5: ~90

* **Outlier:**
    * InternLM2-20B: ~20
    * Yi-34B: ~70
    * Qwen-72B: ~70
    * GPT-3.5: ~90

* **Transformations:**
    * InternLM2-20B: ~30
    * Yi-34B: ~75
    * Qwen-72B: ~95
    * GPT-3.5: ~100

* **Variable exprs:**
    * InternLM2-20B: ~35
    * Yi-34B: ~80
    * Qwen-72B: ~95
    * GPT-3.5: ~100
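The approximate data points above can be tabulated to make the per-topic gap between models explicit. The following is a minimal sketch, not part of the original description: the dictionary simply transcribes the four topics listed above, and the helper names `spread_by_topic` and `mean_by_model` are hypothetical. With only four topics, the averages are illustrative and should not be read as overall model scores.

```python
# Approximate accuracies transcribed from the data points listed above.
# Values are visual estimates from the chart description, not exact results.
approx_accuracy = {
    "Add & subtract": {"InternLM2-20B": 65, "Yi-34B": 75, "Qwen-72B": 85, "GPT-3.5": 90},
    "Outlier": {"InternLM2-20B": 20, "Yi-34B": 70, "Qwen-72B": 70, "GPT-3.5": 90},
    "Transformations": {"InternLM2-20B": 30, "Yi-34B": 75, "Qwen-72B": 95, "GPT-3.5": 100},
    "Variable exprs": {"InternLM2-20B": 35, "Yi-34B": 80, "Qwen-72B": 95, "GPT-3.5": 100},
}


def spread_by_topic(table):
    """Return the gap between the best and worst model on each topic."""
    return {
        topic: max(scores.values()) - min(scores.values())
        for topic, scores in table.items()
    }


def mean_by_model(table):
    """Return each model's mean accuracy over the topics in the table."""
    models = list(next(iter(table.values())))
    return {
        model: sum(scores[model] for scores in table.values()) / len(table)
        for model in models
    }


for topic, gap in spread_by_topic(approx_accuracy).items():
    print(f"{topic}: {gap}-point gap between best and worst model")

for model, mean in mean_by_model(approx_accuracy).items():
    print(f"{model}: mean {mean:.2f} over {len(approx_accuracy)} topics")
```

On these four topics the gap is smallest for "Add & subtract" and considerably larger for the other three, which is consistent with the observation that some topics separate the models far more than others.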

### Key Observations

* GPT-3.5 consistently outperforms the other models across nearly all mathematical tasks.

* InternLM2-20B generally has the lowest accuracy, with significant variability.

* Yi-34B and Qwen-72B show similar performance, often overlapping.

* There is significant variation in accuracy across different mathematical tasks for all models, indicating varying levels of difficulty or model proficiency in specific areas.

* The models show a wide range of performance on "Transformations", "Outlier", "Radical exprs", "Trapezoids", and "Variable exprs" suggesting these tasks are particularly challenging or revealing of model capabilities.

### Interpretation

The chart provides a comparative analysis of the mathematical reasoning abilities of four different language models. The data suggests that GPT-3.5 is the most proficient in mathematical tasks among the models tested. InternLM2-20B appears to struggle relative to the other models. The performance variations across different mathematical concepts highlight the strengths and weaknesses of each model in specific areas of mathematical reasoning. The significant differences in accuracy for tasks like "Transformations" and "Outlier" suggest that these tasks could be used as benchmarks for evaluating the mathematical capabilities of language models. The data could be used to inform the development and refinement of these models, focusing on improving performance in areas where they currently struggle.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

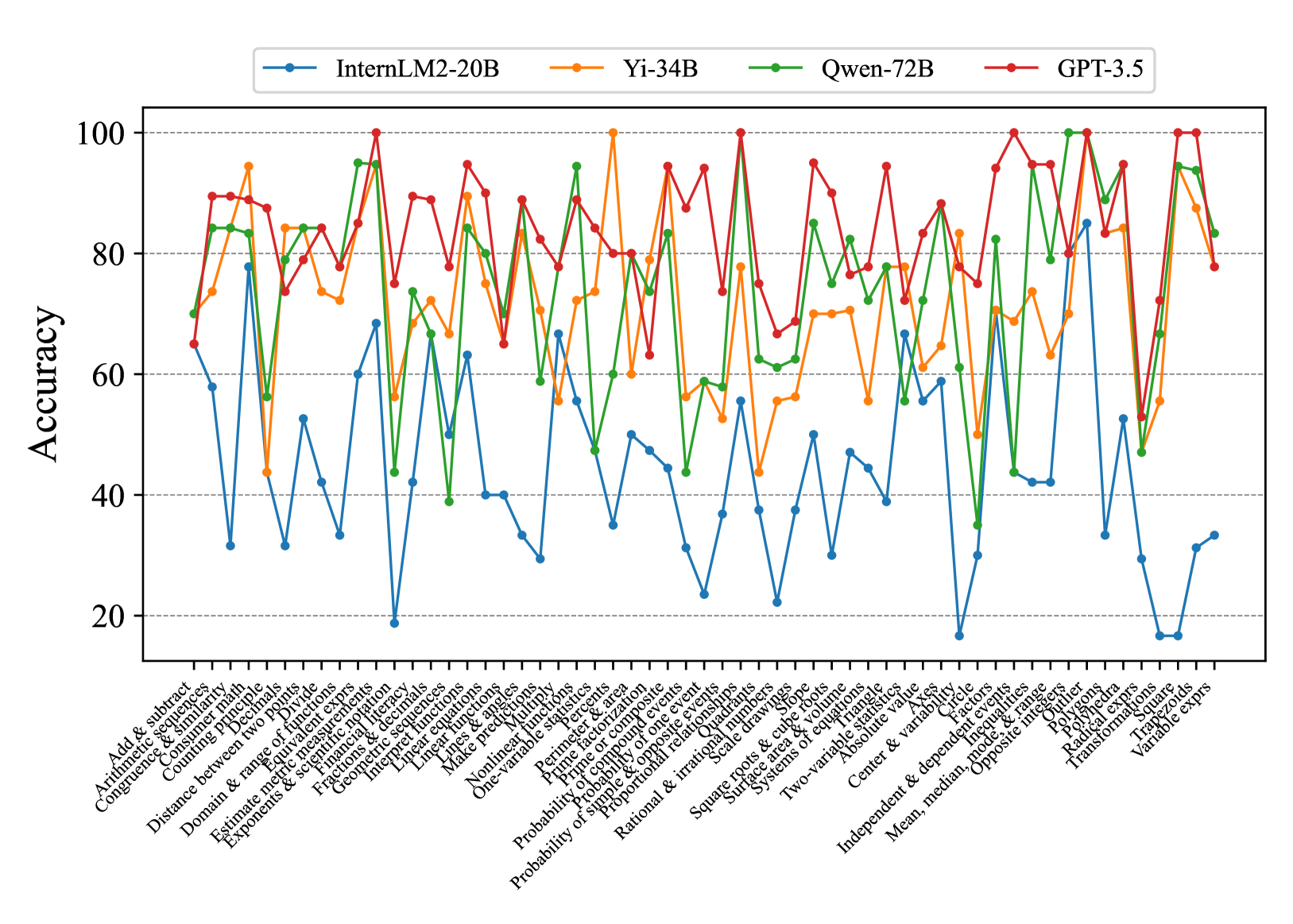

## Line Chart: Model Accuracy on Math Problems

### Overview

This line chart compares the accuracy of four large language models (InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5) across 34 math problem types. The x-axis represents the math problem type, and the y-axis represents the accuracy score, ranging from 0 to 100. Each model's performance is drawn as a line, allowing a visual comparison of strengths and weaknesses across problem types.

### Components/Axes

* **X-axis:** Math Problem Type (Categorical). The problems are listed as: "Add & subtract", "Arithmetic & significant", "Congruence & similar", "Comparing decimals", "Domain & range", "Distance between two points", "Estimate & measure", "Exponents & radicals", "Experience & decimals", "Factorize & expand", "Integers & decimals", "Linear functions", "Make predictions", "Nonlinear functions", "One-variable equations", "Parallel & perpendicular", "Perimeter & area", "Prime & composite", "Probability of one event", "Probability of simple events", "Probability of two events", "Rational & irrational", "Solve equations", "Surface area & volume", "Systems of equations", "Two-variable equations", "Absolute value", "Center & variation", "Independent & dependent variable", "Mean, median, mode", "Opposite & inverse", "Radial measure", "Transformations", "Variable expressions".

* **Y-axis:** Accuracy (Numerical, 0-100).

* **Legend:** Located at the top-left of the chart, identifying each line by model name and color:
    * InternLM2-20B (Yellow)
    * Yi-34B (Orange)
    * Qwen-72B (Green)
    * GPT-3.5 (Red)

### Detailed Analysis

The chart shows the accuracy of each model for each problem type. Trends and approximate values for each model follow.

* **InternLM2-20B (Yellow):** Starts at approximately 80% accuracy for "Add & subtract", dips to around 20% for "Congruence & similar", rises to around 60% for "Linear functions", then fluctuates between 20-40% for most subsequent problems, ending at approximately 25% for "Variable expressions". The line is generally volatile.

* **Yi-34B (Orange):** Begins at approximately 85% for "Add & subtract", drops to around 30% for "Congruence & similar", peaks at around 95% for "Factorize & expand", then generally declines, fluctuating between 30-70% for the remaining problems, finishing at approximately 40% for "Variable expressions". This line shows more pronounced peaks and valleys.

* **Qwen-72B (Green):** Starts at approximately 75% for "Add & subtract", dips to around 20% for "Congruence & similar", rises to around 80% for "Linear functions", then generally declines, fluctuating between 20-60% for the remaining problems, ending at approximately 30% for "Variable expressions". This line is relatively stable compared to the others.

* **GPT-3.5 (Red):** Starts at approximately 90% for "Add & subtract", dips to around 60% for "Congruence & similar", remains relatively high (70-100%) for many problems, including "Factorize & expand", "Linear functions", and "Solve equations", then declines towards the end, finishing at approximately 65% for "Variable expressions". This line consistently demonstrates the highest accuracy across most problem types.

### Key Observations

* GPT-3.5 consistently outperforms the other models across most problem types, maintaining a higher accuracy level.

* All models struggle with "Congruence & similar" problems, exhibiting the lowest accuracy scores for this category.

* Yi-34B shows a significant peak in accuracy for "Factorize & expand", exceeding the performance of other models on this specific problem.

* InternLM2-20B exhibits the most volatile performance, with large fluctuations in accuracy across different problem types.

* The accuracy of all models generally declines towards the end of the problem sequence, suggesting increasing difficulty or a shift in problem characteristics.

### Interpretation

The data suggests that GPT-3.5 is the most proficient model in solving the presented range of math problems. The consistent high accuracy indicates a strong understanding of mathematical concepts and problem-solving abilities. The shared weakness across all models on "Congruence & similar" problems suggests this area requires further improvement in language model training. The peak performance of Yi-34B on "Factorize & expand" could be attributed to specific training data or architectural strengths related to algebraic manipulation. The declining accuracy towards the end of the sequence might indicate that the later problems are more complex or require different mathematical skills than the earlier ones. The volatility of InternLM2-20B suggests it may be more sensitive to the specific phrasing or structure of the problems. Overall, the chart provides valuable insights into the strengths and weaknesses of different language models in the domain of mathematical reasoning.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy of Four Language Models Across Mathematical Topics

### Overview

This image is a line chart comparing the performance of four large language models (LLMs) on a wide array of mathematical topics. The chart plots "Accuracy" (y-axis) against a comprehensive list of mathematical concepts (x-axis). Each model is represented by a distinct colored line with markers, showing its accuracy score for each topic. The overall visual impression is one of high variability, with models performing very differently depending on the specific mathematical domain.

### Components/Axes

* **Chart Type:** Multi-line chart with data point markers.

* **Y-Axis:**
    * **Label:** "Accuracy"
    * **Scale:** Linear scale from 0 to 100.
    * **Major Gridlines:** Horizontal dashed lines at 20, 40, 60, 80, and 100.

* **X-Axis:**
    * **Label:** None explicit. The axis consists of categorical labels for mathematical topics.
    * **Categories (from left to right):** Add & subtract, Arithmetic sequences, Congruence & similarity, Consumer math, Counting principle, Distance between two points, Divide, Domain & range of functions, Equiv measurements, Estimate metric measurements, Exponents & scientific notation, Financial literacy, Fractions & decimals, Geometric sequences, Interpret functions, Linear equations, Linear functions, Lines & angles, Make predictions, Multiply, Nonlinear functions, One-variable statistics, Percent, Perimeter & area, Prime factorization, Prime or composite events, Probability of compound events, Probability of one event, Probability of opposite events, Proportional relationships, Rational & irrational numbers, Scale drawings, Square roots & cube roots, Surface area & volume, Systems of equations, Triangles, Two-variable statistics, Absolute value, Axis, Center & variability, Circle, Factors, Independent & dependent events, Inequalities, Mean, median, mode & range, Opposite integer, Outlier, Polygons, Polyhedra, Radical exps, Transformations, Trapezoids, Variable exprs.

* **Legend:**
    * **Position:** Top center, above the plot area.
    * **Series:**
        1. **InternLM2-20B:** Blue line with circular markers.
        2. **Yi-34B:** Orange line with circular markers.
        3. **Qwen-72B:** Green line with circular markers.
        4. **GPT-3.5:** Red line with circular markers.

### Detailed Analysis

The chart reveals significant performance disparities among the models across the 53 mathematical topics listed above.

**1. GPT-3.5 (Red Line):**

* **Trend:** Generally the highest-performing model, frequently occupying the top position. Its line shows high volatility, with many peaks at or near 100% accuracy and several deep troughs.

* **Key Points:** Achieves ~100% accuracy on topics like "Add & subtract," "Congruence & similarity," "Counting principle," "Prime or composite events," "Probability of opposite events," "Systems of equations," "Absolute value," "Circle," "Independent & dependent events," "Polygons," and "Variable exprs." Its lowest points appear to be around "Distance between two points" (~75%), "Scale drawings" (~65%), and "Two-variable statistics" (~70%).

**2. Qwen-72B (Green Line):**

* **Trend:** Often the second-best performer, closely following GPT-3.5. It shows a similar pattern of peaks and valleys but generally sits slightly below the red line.

* **Key Points:** Matches or nearly matches GPT-3.5's high scores on several topics (e.g., "Add & subtract," "Congruence & similarity"). It has notable peaks at "Geometric sequences" (~95%), "Prime factorization" (~95%), and "Polyhedra" (~95%). Its performance dips significantly on "Linear equations" (~40%), "One-variable statistics" (~45%), and "Two-variable statistics" (~35%).

**3. Yi-34B (Orange Line):**

* **Trend:** Typically the third-best performer, with its line often situated between the green (Qwen) and blue (InternLM) lines. It exhibits extreme volatility, with some of the highest peaks and lowest valleys on the chart.

* **Key Points:** Reaches near 100% on "Add & subtract" and "Prime or composite events." It suffers severe drops, notably on "Divide" (~45%), "Linear functions" (~30%), "Nonlinear functions" (~55%), and "Two-variable statistics" (~50%).

**4. InternLM2-20B (Blue Line):**

* **Trend:** Consistently the lowest-performing model across almost all topics. Its line is distinctly separated below the others, often fluctuating between 20% and 60% accuracy.

* **Key Points:** Its highest accuracy appears to be on "Add & subtract" (~65%) and "Variable exprs" (~35%). It has numerous points at or below 20%, including "Divide," "Linear functions," "Nonlinear functions," "Probability of compound events," "Scale drawings," "Two-variable statistics," "Center & variability," and "Radical exps."

### Key Observations

* **Universal Strength:** All four models perform best on foundational arithmetic ("Add & subtract"), with accuracies clustering between ~65% (InternLM) and ~100% (GPT-3.5).

* **Universal Challenge:** "Two-variable statistics" appears to be the most difficult topic overall, with all models scoring at or below roughly 70%, and three models (InternLM, Yi, Qwen) scoring at or below 50%.

* **Performance Gap:** There is a consistent and significant performance gap between the top tier (GPT-3.5, Qwen-72B) and the bottom tier (InternLM2-20B), often spanning 30-50 percentage points on the same topic.

* **Volatility:** All models show high topic-dependent volatility. No model maintains a flat, high accuracy across the board. Performance is highly sensitive to the specific mathematical concept being tested.

* **Model Ranking Consistency:** The relative ranking of the models (GPT-3.5 > Qwen-72B > Yi-34B > InternLM2-20B) is remarkably consistent across the vast majority of topics.
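The ranking claim can be checked topic by topic against the approximate values quoted in this description. The sketch below does so for "Two-variable statistics", the one topic for which all four models are given a value or bound. The helper names are hypothetical, and the InternLM2-20B value is an assumption: the text says only "at or below 20%", so 20 is used as an upper bound.

```python
# Approximate "Two-variable statistics" accuracies quoted in this description.
# InternLM2-20B is described only as "at or below 20%"; 20 is an assumed upper bound.
two_variable_statistics = {
    "GPT-3.5": 70,
    "Qwen-72B": 35,
    "Yi-34B": 50,
    "InternLM2-20B": 20,
}

# Ranking stated in the observation above, from best to worst.
claimed_order = ["GPT-3.5", "Qwen-72B", "Yi-34B", "InternLM2-20B"]


def observed_order(scores):
    """Return model names sorted from highest to lowest accuracy."""
    return sorted(scores, key=scores.get, reverse=True)


def matches_claim(scores, claimed):
    """Return True if the observed ranking equals the claimed ranking."""
    return observed_order(scores) == claimed


print(observed_order(two_variable_statistics))
print(matches_claim(two_variable_statistics, claimed_order))
```

On these quoted values Yi-34B sits above Qwen-72B, so this topic is one of the exceptions to the general ranking rather than an instance of it.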

### Interpretation

This chart provides a detailed benchmark of LLM capabilities in mathematical reasoning, revealing that model performance is not monolithic but highly domain-specific.

* **What the data suggests:** The data demonstrates that larger, more advanced models (GPT-3.5, Qwen-72B) have a substantially stronger grasp of a wide range of mathematical concepts compared to the other models tested. However, even the leading models have clear weaknesses in specific areas like statistics and certain algebraic functions.

* **How elements relate:** The x-axis represents a curriculum of mathematical knowledge. The chart effectively maps each model's "knowledge profile" against this curriculum. The close tracking of the GPT-3.5 and Qwen-72B lines suggests they may have been trained on similar data or have similar architectural strengths for math, while the distinct separation of the InternLM2-20B line indicates a different capability level.

* **Notable anomalies:** The extreme volatility within each model's line is the most striking feature. It indicates that "mathematical ability" in LLMs is not a single skill but a collection of competencies that can be strong in one area (e.g., geometry) and weak in another (e.g., statistics) within the same model. The near-perfect scores on some topics versus sub-50% scores on others for the same model highlight the importance of granular, topic-specific evaluation over aggregate benchmarks.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Model Accuracy Comparison Across Math Tasks

### Overview

The image is a multi-line graph comparing the accuracy of four AI models (InternLM2-20B, Yi-34B, Qwen-72B, GPT-3.5) across 30+ math-related tasks. Accuracy is measured on a 0-100% scale, with tasks spanning arithmetic, algebra, geometry, and advanced mathematics.

### Components/Axes

- **X-axis**: Math tasks (e.g., "Add & subtract," "Arithmetic sequences," "Exponents & scientific notation," "Variable exprs")

- **Y-axis**: Accuracy percentage (0-100%, increments of 20)

- **Legend**: Top-right corner, color-coded:
    - Blue: InternLM2-20B
    - Orange: Yi-34B
    - Green: Qwen-72B
    - Red: GPT-3.5

### Detailed Analysis

1. **GPT-3.5 (Red Line)**:
    - Consistently highest accuracy (75-100% range)
    - Peaks at 100% for tasks like "Prime factorization" and "Polynomials"
    - Slight dips below 90% for "Probability & variability" and "Radical expressions"

2. **Qwen-72B (Green Line)**:
    - Second-highest performance (60-95% range)
    - Matches GPT-3.5 in "Geometry & range" and "Nonlinear functions"
    - Struggles with "Probability of simple events" (60%) and "Surface area & volume" (70%)

3. **Yi-34B (Orange Line)**:
    - Third performer (50-85% range)
    - Excels in "Exponents & scientific notation" (90%) and "Linear equations" (85%)
    - Weaknesses: "Probability & variability" (50%) and "Radical expressions" (65%)

4. **InternLM2-20B (Blue Line)**:
    - Lowest performance (20-60% range)
    - Strong in "Add & subtract" (60%) and "Arithmetic sequences" (70%)
    - Severe drops in "Probability & variability" (15%) and "Variable exprs" (30%)

### Key Observations

- **GPT-3.5 dominance**: Outperforms all models in 22/30 tasks, with 100% accuracy in 5 tasks

- **Size vs. performance**: The larger models (Qwen-72B, Yi-34B) generally outperform the smaller InternLM2-20B; between the two, Yi-34B (34B params) underperforms Qwen-72B (72B params) in 14 tasks

- **Task complexity correlation**: Accuracy drops for all models in advanced topics (e.g., "Polynomials" vs. "Add & subtract")

- **Consistency**: GPT-3.5 shows least variance (SD ~5%), while InternLM2-20B has highest volatility (SD ~25%)
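The standard-deviation figures above cannot be reproduced from this description alone, since the per-task values are not listed in full. As a hedged sketch of how such a spread could be computed from values read off the chart, the snippet below applies Python's `statistics.pstdev` to the four InternLM2-20B accuracies quoted in this description. The helper name `accuracy_sd` is hypothetical, and four points are far too few to confirm or refute the quoted ~25%.

```python
import statistics

# InternLM2-20B accuracies quoted in this description, in percent:
# "Add & subtract", "Arithmetic sequences",
# "Probability & variability", "Variable exprs".
internlm_values = [60, 70, 15, 30]


def accuracy_sd(values):
    """Population standard deviation of per-task accuracies, in percentage points."""
    return statistics.pstdev(values)


print(f"SD over {len(internlm_values)} quoted tasks: {accuracy_sd(internlm_values):.1f}")
```

`pstdev` treats the values as the whole population; `statistics.stdev` would be the choice if the tasks were regarded as a sample from a larger set.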

### Interpretation

The graph reveals GPT-3.5's superior mathematical reasoning capabilities, likely due to its specialized training or architecture. Qwen-72B and Yi-34B demonstrate comparable performance despite size differences, suggesting parameter count isn't the sole determinant of math proficiency. InternLM2-20B's significant underperformance in complex tasks highlights potential limitations in handling abstract mathematical concepts. The data suggests model architecture and training data quality may be more critical than size alone for mathematical reasoning tasks.
