TECHNICAL ASSET FINGERPRINT

1f9eca63c5545b6665cc5e5f

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

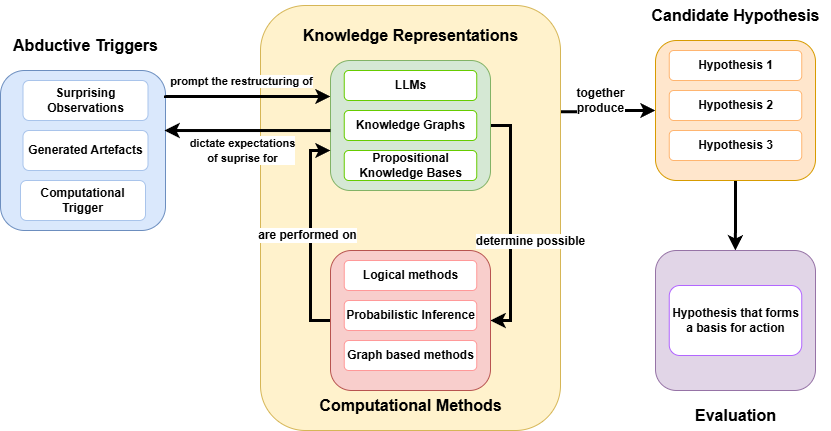

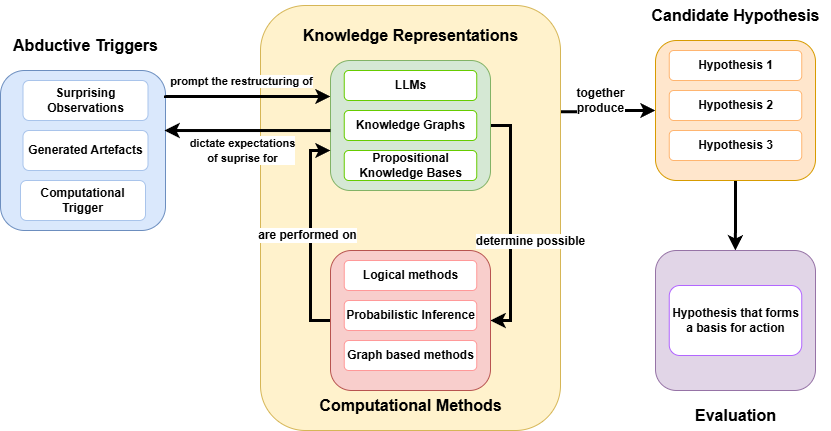

## Diagram: Abductive Reasoning Process

### Overview

The image is a diagram illustrating an abductive reasoning process. It shows the flow of information and the relationships between different components, including abductive triggers, knowledge representations, computational methods, candidate hypotheses, and evaluation.

### Components/Axes

* **Abductive Triggers** (Blue box, top-left): Contains "Surprising Observations", "Generated Artefacts", and "Computational Trigger".

* **Knowledge Representations** (Yellow rounded box, center): Contains "LLMs", "Knowledge Graphs", and "Propositional Knowledge Bases" (all in green boxes).

* **Computational Methods** (Red rounded box, center-bottom): Contains "Logical methods", "Probabilistic Inference", and "Graph based methods" (all in red boxes).

* **Candidate Hypothesis** (Orange rounded box, top-right): Contains "Hypothesis 1", "Hypothesis 2", and "Hypothesis 3" (all in orange boxes).

* **Evaluation** (Purple rounded box, bottom-right): Contains "Hypothesis that forms a basis for action" (in a purple box).

* **Arrows**: Indicate the flow of information and relationships between the components.

    * An arrow from "Abductive Triggers" to "Knowledge Representations" is labeled "prompt the restructuring of".

    * An arrow from "Knowledge Representations" to "Abductive Triggers" is labeled "dictate expectations of surprise for".

    * An arrow from "Knowledge Representations" to "Candidate Hypothesis" is labeled "together produce".

    * An arrow from "Computational Methods" to "Knowledge Representations" is labeled "are performed on".

    * An arrow from "Computational Methods" to "Candidate Hypothesis" is labeled "determine possible".

    * An arrow from "Candidate Hypothesis" to "Evaluation" is unlabeled.

### Detailed Analysis or Content Details

* **Abductive Triggers**:

    * Surprising Observations: Represents unexpected or anomalous data that initiates the reasoning process.

    * Generated Artefacts: Refers to new data or information created during the reasoning process.

    * Computational Trigger: Represents a computational event that initiates the reasoning process.

* **Knowledge Representations**:

    * LLMs: Refers to Large Language Models.

    * Knowledge Graphs: Represents structured knowledge in the form of nodes and edges.

    * Propositional Knowledge Bases: Represents knowledge in the form of logical propositions.

* **Computational Methods**:

    * Logical methods: Refers to methods based on formal logic.

    * Probabilistic Inference: Refers to methods for reasoning under uncertainty.

    * Graph based methods: Refers to methods that utilize graph structures for computation.

* **Candidate Hypothesis**:

    * Hypothesis 1, Hypothesis 2, Hypothesis 3: Represents different possible explanations or solutions.

* **Evaluation**:

    * Hypothesis that forms a basis for action: Represents the selected hypothesis that is used for decision-making or action.
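As a rough illustration of how a graph-based method might operate on a knowledge graph to surface candidate explanations, the sketch below walks cause-to-effect edges backwards from an observation. The graph contents, the "cause leads to effect" edge semantics, and the names `GRAPH` and `candidate_explanations` are assumptions made for illustration; the diagram itself does not specify any algorithm.

```python
from collections import deque

# Hypothetical toy knowledge graph: each key is a cause, each value lists its effects.
GRAPH = {
    "storm": ["power_outage", "flood"],
    "power_outage": ["server_down"],
    "disk_failure": ["server_down"],
}


def candidate_explanations(graph, observation):
    """Return every node that can reach `observation`, nearest causes first."""
    # Invert the edges so we can walk from an effect back to its causes.
    reverse = {}
    for cause, effects in graph.items():
        for effect in effects:
            reverse.setdefault(effect, []).append(cause)

    seen = {observation}
    queue = deque([observation])
    ordered = []
    while queue:  # breadth-first search over the reversed edges
        node = queue.popleft()
        for parent in reverse.get(node, []):
            if parent not in seen:
                seen.add(parent)
                ordered.append(parent)
                queue.append(parent)
    return ordered


print(candidate_explanations(GRAPH, "server_down"))
```

Direct causes appear before indirect ones, so a later evaluation step could, for example, prefer shorter explanatory chains.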

### Key Observations

* The diagram illustrates a cyclical process where abductive triggers initiate the restructuring of knowledge representations.

* Knowledge representations and computational methods work together to generate candidate hypotheses.

* The evaluation stage selects a hypothesis for action.

### Interpretation

The diagram depicts a high-level overview of abductive reasoning, a form of logical inference that starts with an observation and then seeks to find the simplest and most likely explanation. The process begins with "Abductive Triggers" that prompt the system to restructure its "Knowledge Representations". These representations, along with "Computational Methods", are used to generate "Candidate Hypotheses". Finally, the hypotheses are evaluated, and one is selected as the basis for action. The cycle suggests a continuous refinement of knowledge and hypotheses based on new observations and computational methods. The feedback loop between "Knowledge Representations" and "Abductive Triggers" indicates that expectations are adjusted based on the outcomes of the reasoning process.

EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Diagram: Abductive Reasoning and Hypothesis Generation Framework

### Overview

The image is a technical flowchart illustrating a system architecture or cognitive framework for abductive reasoning. It details the cyclical and linear processes by which anomalous inputs ("triggers") interact with existing knowledge bases and computational engines to generate, and subsequently evaluate, actionable hypotheses.

### Components and Spatial Grounding

The diagram is divided into four primary spatial regions, each color-coded and containing specific sub-elements:

1. **Left Region (Light Blue):** "Abductive Triggers"

2. **Center Region (Pale Yellow Background):** A central processing hub containing "Knowledge Representations" (Light Green) and "Computational Methods" (Light Pink).

3. **Top-Right Region (Light Orange):** "Candidate Hypothesis"

4. **Bottom-Right Region (Light Purple):** "Evaluation"

### Content Details and Flow Analysis

#### 1. Abductive Triggers (Left Region)

* **Visuals:** A light blue container with rounded corners.

* **Header Text:** `Abductive Triggers`

* **Contained Elements (White boxes):**

    * `Surprising Observations`

    * `Generated Artefacts`

    * `Computational Trigger`

#### 2. Central Processing Hub (Center Region)

This region is bounded by a large pale yellow box with rounded corners. It contains two distinct sub-systems that interact with each other.

* **Top Sub-system (Light Green):**

    * **Header Text:** `Knowledge Representations`

    * **Contained Elements (White boxes):**

        * `LLMs` (Large Language Models)

        * `Knowledge Graphs`

        * `Propositional Knowledge Bases`

* **Bottom Sub-system (Light Pink):**

    * **Footer Text:** `Computational Methods`

    * **Contained Elements (White boxes):**

        * `Logical methods`

        * `Probabilistic Inference`

        * `Graph based methods`

* **Internal Flow (Vertical):**

    * A downward-pointing arrow from the Green box to the Pink box is labeled: `determine possible`.

    * An upward-pointing arrow from the Pink box to the Green box is labeled: `are performed on`.

#### 3. Candidate Hypothesis (Top-Right Region)

* **Visuals:** A light orange container with rounded corners.

* **Header Text:** `Candidate Hypothesis`

* **Contained Elements (White boxes):**

    * `Hypothesis 1`

    * `Hypothesis 2`

    * `Hypothesis 3`

#### 4. Evaluation (Bottom-Right Region)

* **Visuals:** A light purple container with rounded corners.

* **Footer Text:** `Evaluation`

* **Contained Elements (White box):**

    * `Hypothesis that forms a basis for action`

#### 5. External System Flows (Connecting the Regions)

* **Trigger to Knowledge (Left to Center):** A right-pointing arrow connects the "Abductive Triggers" box to the "Knowledge Representations" box.

    * **Label:** `prompt the restructuring of`

* **Knowledge to Trigger (Center to Left):** A left-pointing arrow connects the "Knowledge Representations" box back to the "Abductive Triggers" box.

    * **Label:** `dictate expectations of suprise for` *(Note: "surprise" is misspelled as "suprise" in the source image).*

* **Processing to Hypothesis (Center to Top-Right):** A right-pointing arrow originates from the edge of the central yellow box and points to the "Candidate Hypothesis" box.

    * **Label:** `together produce`

* **Hypothesis to Evaluation (Top-Right to Bottom-Right):** A downward-pointing arrow connects the "Candidate Hypothesis" box to the "Evaluation" box.

    * **Label:** None.
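The flows listed above can be written down as a small labeled edge list, which makes the loop structure easy to check mechanically. This is only a sketch of one possible encoding: the node name `Central Hub` (standing in for the pale yellow box) and the helper names `successors` and `mutual_pairs` are assumptions, not part of the diagram.

```python
from collections import defaultdict

# (source, label, target) triples transcribed from the flows described above.
# The label spelling is normalized to "surprise"; the image reads "suprise".
EDGES = [
    ("Abductive Triggers", "prompt the restructuring of", "Knowledge Representations"),
    ("Knowledge Representations", "dictate expectations of surprise for", "Abductive Triggers"),
    ("Knowledge Representations", "determine possible", "Computational Methods"),
    ("Computational Methods", "are performed on", "Knowledge Representations"),
    ("Central Hub", "together produce", "Candidate Hypothesis"),
    ("Candidate Hypothesis", "", "Evaluation"),  # unlabeled arrow
]


def successors(edges):
    """Map each source node to the list of nodes it points to."""
    adjacency = defaultdict(list)
    for source, _label, target in edges:
        adjacency[source].append(target)
    return dict(adjacency)


def mutual_pairs(edges):
    """Return the unordered node pairs that are linked in both directions."""
    directed = {(source, target) for source, _label, target in edges}
    return sorted(
        {tuple(sorted(pair)) for pair in directed if (pair[1], pair[0]) in directed}
    )


print(mutual_pairs(EDGES))
```

Under this encoding, the pairs returned by `mutual_pairs` are exactly the bidirectional connections in the description: Triggers with Knowledge Representations, and Knowledge Representations with Computational Methods.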

### Key Observations

* **Feedback Loops:** There are two distinct feedback loops in the diagram. The first is between Triggers and Knowledge Representations. The second is internal to the central processing hub, between Knowledge Representations and Computational Methods.

* **Convergence of AI Paradigms:** The "Knowledge Representations" section notably combines modern neural/statistical AI (`LLMs`) with traditional symbolic AI (`Knowledge Graphs`, `Propositional Knowledge Bases`).

* **Funneling Effect:** The system moves from multiple inputs (triggers), through complex cyclical processing, generates multiple outputs (Hypotheses 1, 2, 3), and ultimately funnels down to a single, actionable output in the Evaluation phase.

### Interpretation

This diagram outlines a sophisticated architecture for an autonomous agent or advanced AI system based on **Peircean Abductive Reasoning**. In Charles Sanders Peirce's philosophy, abduction is the process of forming an explanatory hypothesis in response to an anomaly.

The diagram maps onto this process as follows:

1. **The Anomaly:** Existing knowledge dictates what the system expects to see (`dictate expectations of suprise for`). When an input violates these expectations (`Surprising Observations`), it acts as a trigger.

2. **The Restructuring:** This surprise forces the system to update its worldview (`prompt the restructuring of`). It does this by applying rigorous `Computational Methods` (logic, probability, graph math) to its existing `Knowledge Representations` (LLMs, KGs). The representations determine what computations are possible, and the computations are performed on the representations to alter them.

3. **Hypothesis Generation:** This internal engine does not produce a single definitive answer; rather, the combined systems (`together produce`) generate a set of plausible explanations (`Candidate Hypothesis 1, 2, 3`).

4. **Actionable Output:** Because an agent cannot act on multiple conflicting theories simultaneously, these candidates must pass through an `Evaluation` phase. The surviving hypothesis is no longer just a theory; it becomes the pragmatic `basis for action`.
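The four steps above can be sketched as a minimal loop over a toy propositional knowledge base. Everything concrete here is an assumption made for illustration: the `Rule` type, the example rules and their `prior` values, and the choice to evaluate candidates by highest prior. The diagram does not prescribe any of these.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    cause: str
    effect: str
    prior: float  # assumed plausibility of the cause, used only for ranking


# Hypothetical knowledge base of cause -> effect rules.
KB = [
    Rule("rain", "wet_grass", 0.3),
    Rule("sprinkler", "wet_grass", 0.6),
    Rule("burst_pipe", "wet_grass", 0.1),
    Rule("rain", "wet_road", 0.3),
]


def is_surprising(observation, expected):
    """Step 1: an observation is a trigger when it was not expected."""
    return observation not in expected


def candidate_hypotheses(observation, kb):
    """Steps 2-3: collect every rule whose effect matches the observation."""
    return [rule for rule in kb if rule.effect == observation]


def evaluate(candidates):
    """Step 4: keep a single hypothesis, here the one with the highest prior."""
    return max(candidates, key=lambda rule: rule.prior) if candidates else None


def abduce(observation, expected, kb):
    """Run the loop once; return None when nothing surprising was observed."""
    if not is_surprising(observation, expected):
        return None
    return evaluate(candidate_hypotheses(observation, kb))


best = abduce("wet_grass", expected={"dry_grass"}, kb=KB)
print(best.cause if best else "no explanation needed")
```

Ranking by prior alone is the simplest possible evaluation; a fuller system would also weigh how well each hypothesis accounts for the observation.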

Ultimately, the diagram demonstrates a blueprint for moving AI beyond simple pattern recognition into the realm of dynamic reasoning, where systems can independently notice when they are wrong, compute why, and formulate a plan to adapt.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Abductive Reasoning Process

### Overview

This diagram illustrates the process of abductive reasoning, outlining the triggers, knowledge representations, computational methods, and resulting hypothesis evaluation. The diagram uses boxes and arrows to show the flow of information and relationships between different components. It is divided into five main sections: Abductive Triggers, Knowledge Representations, Computational Methods, Candidate Hypothesis, and Evaluation.

### Components/Axes

The diagram consists of the following components:

* **Abductive Triggers:** Includes "Surprising Observations", "Generated Artefacts", and "Computational Trigger".

* **Knowledge Representations:** Includes "LLMs", "Knowledge Graphs", and "Propositional Knowledge Bases".

* **Computational Methods:** Includes "Logical methods", "Probabilistic Inference", and "Graph based methods".

* **Candidate Hypothesis:** Includes "Hypothesis 1", "Hypothesis 2", and "Hypothesis 3".

* **Evaluation:** Includes "Hypothesis that forms a basis for action".

Arrows indicate the direction of influence or processing. Text labels on the arrows describe the nature of the relationship.

### Detailed Analysis or Content Details

**Abductive Triggers (Left Side - Light Blue):**

* "Surprising Observations" is a rectangular box.

* "Generated Artefacts" is a rectangular box.

* "Computational Trigger" is a rectangular box.

* An arrow labeled "prompt the restructuring of" points from "Abductive Triggers" to "Knowledge Representations".

* An arrow labeled "dictate expectations of surprise for" points from "Knowledge Representations" back to "Abductive Triggers".

**Knowledge Representations (Center-Left - Yellow):**

* "LLMs" is a rectangular box.

* "Knowledge Graphs" is a rectangular box.

* "Propositional Knowledge Bases" is a rectangular box.

* An arrow labeled "together produce" points from "Knowledge Representations" to "Candidate Hypothesis".

**Computational Methods (Bottom-Center - Light Orange):**

* "Logical methods" is a rectangular box.

* "Probabilistic Inference" is a rectangular box.

* "Graph based methods" is a rectangular box.

* An arrow labeled "are performed on" points from "Computational Methods" to "Knowledge Representations".

* An arrow labeled "determine possible" points from "Computational Methods" to "Candidate Hypothesis".

**Candidate Hypothesis & Evaluation (Right Side - Light Purple/Pink):**

* "Hypothesis 1" is a rectangular box.

* "Hypothesis 2" is a rectangular box.

* "Hypothesis 3" is a rectangular box.

* "Hypothesis that forms a basis for action" is a rectangular box.

* An arrow points from "Candidate Hypothesis" to "Evaluation".

### Key Observations

The diagram highlights a cyclical process. Abductive triggers initiate a restructuring of knowledge representations, which are then processed by computational methods to generate candidate hypotheses. These hypotheses are then evaluated, potentially leading to action. The diagram emphasizes the interplay between observation, knowledge, computation, and action in abductive reasoning.

### Interpretation

The diagram illustrates a computational model of abductive reasoning. Abduction, in philosophy and AI, is a form of logical inference that starts with an observation and seeks the simplest and most likely explanation. This diagram breaks down that process into distinct stages.

* **Triggers:** The process begins with something unexpected – a surprising observation, an artifact that doesn't fit existing models, or a computational signal.

* **Knowledge Representation:** These triggers prompt a restructuring of existing knowledge, represented here by LLMs (Large Language Models), Knowledge Graphs, and Propositional Knowledge Bases. These are different ways of storing and organizing information.

* **Computational Methods:** The restructured knowledge is then subjected to computational methods – logical reasoning, probabilistic inference, and graph-based analysis – to generate possible explanations.

* **Hypothesis Generation & Evaluation:** These methods produce a set of candidate hypotheses, which are then evaluated to determine which one best explains the initial observation and forms a basis for action.
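To make the probabilistic-inference and evaluation stages concrete, the sketch below ranks three candidate hypotheses with Bayes' rule (posterior proportional to prior times likelihood). The prior and likelihood numbers are invented for illustration, and `posterior` is a hypothetical helper; the diagram only names "Probabilistic Inference" without specifying a method.

```python
def posterior(priors, likelihoods):
    """Return normalized posteriors: P(h | obs) is proportional to P(h) * P(obs | h)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("no hypothesis assigns any probability to the observation")
    return {h: value / total for h, value in unnormalized.items()}


# Assumed numbers: how plausible each hypothesis is beforehand, and how
# well each one predicts the surprising observation.
priors = {"Hypothesis 1": 0.5, "Hypothesis 2": 0.3, "Hypothesis 3": 0.2}
likelihoods = {"Hypothesis 1": 0.1, "Hypothesis 2": 0.8, "Hypothesis 3": 0.5}

scores = posterior(priors, likelihoods)
best = max(scores, key=scores.get)  # the hypothesis that would form a basis for action
print(best, round(scores[best], 3))
```

Note that the hypothesis with the highest prior does not win here: the evaluation trades prior plausibility against how well each candidate explains the observation.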

The diagram suggests that abductive reasoning is not simply a matter of finding the "right" answer, but rather a process of generating and evaluating multiple possibilities. The use of LLMs, Knowledge Graphs, and probabilistic methods indicates a modern, computational approach to this traditionally philosophical problem. The diagram doesn't provide specific data or numerical values, but rather a conceptual framework for understanding the process.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Abductive Reasoning Framework

### Overview

The image is a flowchart illustrating a conceptual framework for abductive reasoning, likely within an AI or computational context. It depicts a cyclical process where triggers lead to knowledge representation restructuring, which is processed by computational methods to generate candidate hypotheses that are then evaluated. The diagram uses color-coded boxes and directional arrows to show relationships and information flow.

### Components/Axes

The diagram is organized into five main colored boxes, each containing specific components:

1. **Abductive Triggers (Blue Box, Left)**

    * Contents:

        * Surprising Observations

        * Generated Artefacts

        * Computational Trigger

    * **Relationships:**

        * An arrow points from this box to the "Knowledge Representations" box with the label: `prompt the restructuring of`.

        * A return arrow points from "Knowledge Representations" back to this box with the label: `dictate expectations of surprise for`.

2. **Knowledge Representations (Green Box, Center-Top)**

    * Contents:

        * LLMs (Large Language Models)

        * Knowledge Graphs

        * Propositional Knowledge Bases

    * **Relationships:**

        * An arrow points from this box to the "Computational Methods" box with the label: `are performed on`.

        * An arrow points from this box to the "Candidate Hypothesis" box with the label: `together produce`.

3. **Computational Methods (Pink Box, Center-Bottom)**

    * Contents:

        * Logical methods

        * Probabilistic Inference

        * Graph based methods

    * **Relationships:**

        * An arrow points from this box to the "Candidate Hypothesis" box with the label: `determine possible`.

4. **Candidate Hypothesis (Orange Box, Right-Top)**

    * Contents:

        * Hypothesis 1

        * Hypothesis 2

        * Hypothesis 3

    * **Relationships:**

        * An arrow points from this box down to the "Evaluation" box.

5. **Evaluation (Purple Box, Right-Bottom)**

    * Contents:

        * Hypothesis that forms a basis for action

### Detailed Analysis

The diagram describes a closed-loop system for generating and refining hypotheses.

* **Process Initiation:** The process begins with "Abductive Triggers" (e.g., surprising data, generated outputs, or a computational event). These triggers "prompt the restructuring of" the system's "Knowledge Representations."

* **Knowledge & Computation Interaction:** The structured knowledge (in the form of LLMs, Knowledge Graphs, or Knowledge Bases) serves as the substrate upon which "Computational Methods" (logical, probabilistic, graph-based) "are performed."

* **Hypothesis Generation:** The "Knowledge Representations" and "Computational Methods" work in tandem. The knowledge representations "together produce" candidate hypotheses, while the computational methods "determine possible" hypotheses. This suggests a collaborative or filtering role.

* **Output and Feedback:** The output is a set of "Candidate Hypotheses" (1, 2, 3). These are then subjected to "Evaluation," with the goal of identifying a "Hypothesis that forms a basis for action."

* **Critical Feedback Loop:** A key feature is the feedback arrow from "Knowledge Representations" back to "Abductive Triggers," labeled `dictate expectations of surprise for`. This implies the system's current knowledge state actively shapes what it considers a "surprising" trigger, creating a self-referential or adaptive cycle.
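One way to read the feedback loop is that the knowledge state assigns probabilities to events, and an event becomes a trigger when its surprisal under that state is high. The sketch below uses Shannon surprisal (`-log2 p`) for this; the event names, the 3-bit threshold, the probability floor, and the function names are all assumptions for illustration rather than anything stated in the diagram.

```python
import math


def surprisal_bits(probability):
    """Shannon surprisal in bits: rarer events carry more surprise."""
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(probability)


def is_trigger(event, model, threshold_bits=3.0, floor=1e-6):
    """Treat an event as an abductive trigger when it is surprising enough.

    `model` maps events to expected probabilities; unseen events fall back
    to a small floor so they count as highly surprising instead of failing.
    """
    probability = max(model.get(event, 0.0), floor)
    return surprisal_bits(probability) > threshold_bits


# The current knowledge state dictates what counts as surprising...
model = {"sensor_ok": 0.95, "sensor_fault": 0.05}
print(is_trigger("sensor_fault", model))  # rare under this model

# ...and restructuring the knowledge changes those expectations.
updated = {"sensor_ok": 0.5, "sensor_fault": 0.5}
print(is_trigger("sensor_fault", updated))
```

The same event triggers reasoning under the first model and not under the updated one, which is the self-referential behavior the feedback arrow describes.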

### Key Observations

* **Bidirectional Relationship:** The connection between "Abductive Triggers" and "Knowledge Representations" is bidirectional, indicating a dynamic, two-way influence rather than a simple linear flow.

* **Multiple Pathways to Hypothesis:** Hypotheses are generated through two described pathways: directly from knowledge representations and via determination by computational methods.

* **Action-Oriented Goal:** The final evaluation stage explicitly aims for a hypothesis that is actionable, grounding the abstract reasoning process in practical utility.

* **Component Grouping:** The diagram groups related concepts (triggers, representations, methods) into distinct modules, clarifying the functional architecture of the proposed system.

### Interpretation

This diagram outlines a sophisticated model for abductive inference—the process of forming the best explanation for an observation. It is particularly relevant to modern AI systems that combine large language models (LLMs) with structured knowledge (graphs, bases) and various inference techniques.

The framework suggests that reasoning is not a one-way street. Instead, it's a cycle where new information (triggers) forces an update to the system's internal world model (knowledge representations). This updated model, processed by computational logic, generates potential explanations (hypotheses). Crucially, the system's existing knowledge also defines what it finds "surprising," meaning its learning and hypothesis generation are guided by its prior understanding. The ultimate goal is to move from speculation to a hypothesis robust enough to guide action, closing the loop between perception, reasoning, and decision-making. This model could be applied in fields like scientific discovery, diagnostic systems, or autonomous AI agents.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Cognitive Process for Hypothesis Generation and Evaluation

### Overview

This diagram illustrates a cognitive framework for generating and evaluating hypotheses through abductive reasoning. It depicts the flow from initial triggers through knowledge representations to computational methods, culminating in actionable hypotheses.

### Components/Axes

1. **Abductive Triggers (Left Box, Blue)**

    - Surprising Observations

    - Generated Artefacts

    - Computational Trigger

    - Arrows labeled:

        - "prompt the restructuring of"

        - "dictate expectations of surprise for"

2. **Knowledge Representations (Central Box, Yellow)**

    - **Sub-components (Green Box):**

        - LLMs

        - Knowledge Graphs

        - Propositional Knowledge Bases

    - **Computational Methods (Pink Box):**

        - Logical methods

        - Probabilistic Inference

        - Graph-based methods

    - Arrows labeled:

        - "are performed on"

        - "determine possible"

3. **Candidate Hypothesis (Right Box, Orange)**

    - Hypothesis 1

    - Hypothesis 2

    - Hypothesis 3

    - Arrow labeled: "together produce"

4. **Evaluation (Purple Box)**

    - Final output: "Hypothesis that forms a basis for action"

### Detailed Analysis

- **Flow Direction:**

    - Left → Center: Abductive triggers initiate knowledge restructuring

    - Center → Right: Computational methods process knowledge representations to generate hypotheses

    - Right → Bottom: Evaluation refines hypotheses into actionable forms

- **Key Connections:**

    - Surprising Observations directly influence LLMs and Knowledge Graphs

    - Generated Artefacts feed into Propositional Knowledge Bases

    - Computational Trigger enables all computational methods

    - All computational methods contribute to hypothesis generation

### Key Observations

1. The framework emphasizes iterative refinement through multiple hypothesis generations

2. Computational methods serve as the bridge between raw knowledge and hypothesis formation

3. Evaluation acts as a quality control step before action implementation

4. No numerical data present; purely conceptual flow diagram

### Interpretation

This diagram represents a formalized model of abductive reasoning in AI systems. The blue-to-yellow-to-orange-to-purple color progression visually represents the transition from raw data (triggers) to structured knowledge (representations) to synthesized understanding (hypotheses) and finally to validated action (evaluation). The absence of quantitative metrics suggests this is a conceptual rather than empirical framework, focusing on process flow rather than performance metrics. The use of "together produce" for hypothesis generation implies collaborative or ensemble-based reasoning approaches.
