## Flowchart Diagram: Multi-Stage Logical Reasoning System

### Overview

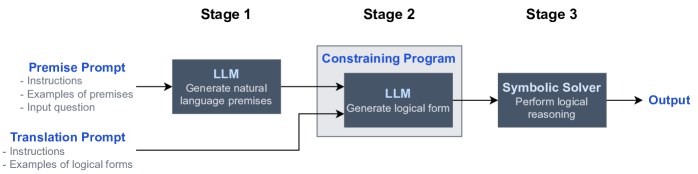

The diagram illustrates a three-stage logical reasoning pipeline that combines a large language model (LLM) with a symbolic solver. Data flows from the input prompts through the LLM and a constraining program to the solver, which produces the final output.

### Components

- **Stage 1**:

  - **Premise Prompt**: Contains "Instructions," "Examples of premises," and "Input question."

  - **Translation Prompt**: Contains "Instructions" and "Examples of logical forms."

  - **LLM**: Generates "natural language premises" from the Premise Prompt.

- **Stage 2**:

  - **Constraining Program**: A gray box labeled "Constraining Program" that uses the LLM to generate a "logical form."

- **Stage 3**:

  - **Symbolic Solver**: Performs "logical reasoning" to produce the final "Output."
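
The two prompts listed above can be pictured as small records that render to text. The following is a minimal sketch, not the system's actual prompt format: the class names, field names, and section layout are hypothetical and chosen only to mirror the labels in the diagram.

```python
from dataclasses import dataclass


@dataclass
class PremisePrompt:
    """Hypothetical container for the three parts shown in the Premise Prompt box."""
    instructions: str
    premise_examples: list[str]
    input_question: str

    def render(self) -> str:
        # Lay the parts out under headings that match the diagram labels.
        examples = "\n".join(f"- {e}" for e in self.premise_examples)
        return (
            f"Instructions:\n{self.instructions}\n\n"
            f"Examples of premises:\n{examples}\n\n"
            f"Input question:\n{self.input_question}"
        )


@dataclass
class TranslationPrompt:
    """Hypothetical container for the two parts shown in the Translation Prompt box."""
    instructions: str
    logical_form_examples: list[str]

    def render(self) -> str:
        examples = "\n".join(f"- {e}" for e in self.logical_form_examples)
        return (
            f"Instructions:\n{self.instructions}\n\n"
            f"Examples of logical forms:\n{examples}"
        )


premise_prompt = PremisePrompt(
    instructions="List the premises needed to answer the question.",
    premise_examples=["All men are mortal.", "Socrates is a man."],
    input_question="Is Socrates mortal?",
)
translation_prompt = TranslationPrompt(
    instructions="Translate each premise into a logical form.",
    logical_form_examples=["Man(socrates)", "Man(x) -> Mortal(x)"],
)
```

Calling `premise_prompt.render()` yields the text that would be sent to the LLM in Stage 1; the example sentences and logical forms here are illustrative placeholders, not content from the figure.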

### Detailed Analysis

- **Stage 1**:

  - The **Premise Prompt** provides context (instructions, example premises, and the input question) to guide the LLM in generating natural language premises.

  - The **Translation Prompt** supplies instructions and examples of logical forms so that the LLM's translated output matches the required structure.

  - The LLM generates natural language premises from the Premise Prompt; these premises are then passed to the Constraining Program.

- **Stage 2**:

  - The **Constraining Program** acts as a bridge, using the LLM to convert the natural language premises into a structured logical form.

- **Stage 3**:

  - The **Symbolic Solver** takes the logical form and performs formal reasoning to derive the final output.
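
The three stages above can be sketched end to end as follows. This is an illustrative toy under stated assumptions, not the system shown in the figure: `stub_llm` and `stub_translate` are hypothetical stand-ins that return canned values, and the solver is a simple forward-chaining loop over ground rules, swapped in because the diagram does not name a specific solver.

```python
# A rule is (body atoms, head atom); all atoms are plain strings in this toy.
Rule = tuple[frozenset[str], str]


def stub_llm(prompt: str) -> str:
    """Stage 1 stand-in (hypothetical): returns canned natural language premises."""
    return "Socrates is a man.\nAll men are mortal."


def stub_translate(premises: str) -> tuple[set[str], list[Rule]]:
    """Stage 2 stand-in (hypothetical): returns a canned logical form."""
    facts = {"man(socrates)"}
    rules = [(frozenset({"man(socrates)"}), "mortal(socrates)")]
    return facts, rules


def forward_chain(facts: set[str], rules: list[Rule]) -> set[str]:
    """Stage 3 toy solver: apply rules until no new atom can be derived."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if body <= derived and head not in derived:
                derived.add(head)
                changed = True
    return derived


def run_pipeline(question: str, goal: str) -> bool:
    premises = stub_llm(question)            # Stage 1: premises in natural language
    facts, rules = stub_translate(premises)  # Stage 2: logical form
    return goal in forward_chain(facts, rules)  # Stage 3: formal reasoning


answer = run_pipeline("Is Socrates mortal?", "mortal(socrates)")
```

Keeping each stage behind its own function reflects the separation in the diagram: the language model produces text, and only the solver decides whether the goal follows.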

### Key Observations

1. **Hybrid Architecture**: Combines LLMs (for natural language understanding) with symbolic solvers (for formal reasoning), suggesting a system designed for complex, multi-step problem-solving.

2. **Flow Direction**: Data flows forward from Stage 1 → Stage 2 → Stage 3. The one exception is the bidirectional arrow between the LLM and the Constraining Program, which suggests a back-and-forth exchange while the logical form is generated.

3. **Role of Prompts**: Explicit instructions and examples in the prompts steer the LLM toward the task requirements and reduce ambiguity in its output.
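
One way to read the bidirectional arrow noted in the observations above is as constrained generation: at each step the constraining program tells the LLM which tokens are permitted, and the LLM picks among them. The diagram does not specify the mechanism, so the sketch below is an assumption; `allowed_next`, `stub_llm_scores`, the toy pattern, and the scores are all hypothetical.

```python
def allowed_next(prefix: list[str]) -> list[str]:
    """Toy constraint (assumption): a form must look like Predicate ( constant )."""
    pattern = [["Man", "Mortal"], ["("], ["socrates", "plato"], [")"]]
    return pattern[len(prefix)] if len(prefix) < len(pattern) else []


def stub_llm_scores(prefix: list[str], candidates: list[str]) -> dict[str, float]:
    """Hypothetical LLM stand-in: fixed preference scores for the candidates."""
    preferences = {"Mortal": 0.9, "Man": 0.1, "socrates": 0.8, "plato": 0.2}
    return {c: preferences.get(c, 1.0) for c in candidates}


def constrained_generate() -> str:
    tokens: list[str] = []
    while True:
        candidates = allowed_next(tokens)               # program -> LLM: permitted tokens
        if not candidates:
            break                                       # the form is complete
        scores = stub_llm_scores(tokens, candidates)    # LLM -> program: preferences
        tokens.append(max(candidates, key=scores.__getitem__))
    return "".join(tokens)


logical_form = constrained_generate()  # "Mortal(socrates)"
```

Because the LLM only ever chooses from tokens the program allows, every output in this toy is a well-formed logical form by construction, which is the kind of guarantee a symbolic solver needs from its input.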

### Interpretation

This diagram represents a pipeline for tasks that require both linguistic understanding and logical deduction. The LLM is used in Stages 1 and 2 to translate unstructured input into a structured logical form, while the Symbolic Solver handles the rigorous reasoning. The Constraining Program likely enforces consistency between the LLM's natural language output and the formal logic, guarding against mismatches between the two. The design suggests applications in domains such as automated theorem proving, legal reasoning, or AI-driven decision-making, where precision and interpretability are critical.