
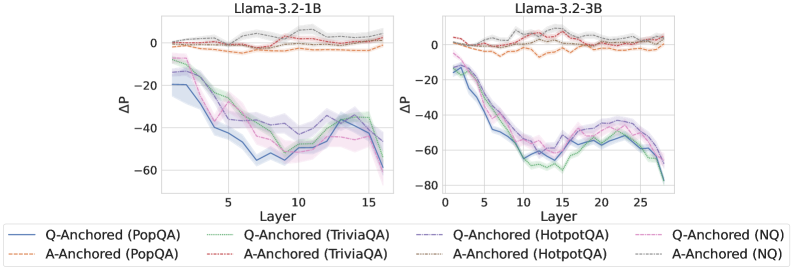

## Line Chart: ΔP vs. Layer for Different QA Datasets and Model Sizes

### Overview

The image presents two line charts, side-by-side, comparing the change in perplexity (ΔP) across different layers of two Llama models: Llama-3.2-1B and Llama-3.2-3B. Each chart displays multiple lines representing different question-answering (QA) datasets and anchoring methods. The charts aim to visualize how perplexity changes with layer depth for each configuration.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 15 for the 1B model and 0 to 25 for the 3B model).

* **Y-axis:** ΔP (change in perplexity), ranging from approximately -80 to 0.

* **Title (Left Chart):** Llama-3.2-1B

* **Title (Right Chart):** Llama-3.2-3B

* **Legend:** Located at the bottom of the image, containing the following labels and corresponding line styles/colors:

    * Q-Anchored (PopQA) - Solid Blue Line

    * A-Anchored (PopQA) - Dotted Orange Line

    * Q-Anchored (TriviaQA) - Solid Purple Line

    * A-Anchored (TriviaQA) - Dotted Brown Line

    * Q-Anchored (HotpotQA) - Dashed Teal Line

    * A-Anchored (HotpotQA) - Dashed Green Line

    * Q-Anchored (NQ) - Solid Light Blue Line

    * A-Anchored (NQ) - Dotted Pink Line

### Detailed Analysis

**Left Chart (Llama-3.2-1B):**

* **Q-Anchored (PopQA):** Starts at approximately ΔP = -15 at Layer 0, decreases to approximately ΔP = -50 at Layer 10, and then slightly recovers to approximately ΔP = -45 at Layer 15.

* **A-Anchored (PopQA):** Starts at approximately ΔP = -5 at Layer 0, decreases to approximately ΔP = -25 at Layer 10, and then slightly recovers to approximately ΔP = -20 at Layer 15.

* **Q-Anchored (TriviaQA):** Starts at approximately ΔP = -20 at Layer 0, decreases to approximately ΔP = -45 at Layer 10, and then decreases further to approximately ΔP = -55 at Layer 15.

* **A-Anchored (TriviaQA):** Starts at approximately ΔP = -10 at Layer 0, decreases to approximately ΔP = -30 at Layer 10, and then decreases further to approximately ΔP = -40 at Layer 15.

* **Q-Anchored (HotpotQA):** Starts at approximately ΔP = -10 at Layer 0, decreases to approximately ΔP = -35 at Layer 10, and then decreases further to approximately ΔP = -50 at Layer 15.

* **A-Anchored (HotpotQA):** Starts at approximately ΔP = -5 at Layer 0, decreases to approximately ΔP = -30 at Layer 10, and then decreases further to approximately ΔP = -45 at Layer 15.

* **Q-Anchored (NQ):** Starts at approximately ΔP = -15 at Layer 0, decreases to approximately ΔP = -40 at Layer 10, and then decreases further to approximately ΔP = -50 at Layer 15.

* **A-Anchored (NQ):** Starts at approximately ΔP = -5 at Layer 0, decreases to approximately ΔP = -30 at Layer 10, and then decreases further to approximately ΔP = -40 at Layer 15.

**Right Chart (Llama-3.2-3B):**

* **Q-Anchored (PopQA):** Starts at approximately ΔP = -15 at Layer 0, decreases to approximately ΔP = -40 at Layer 10, and then slightly recovers to approximately ΔP = -35 at Layer 25.

* **A-Anchored (PopQA):** Starts at approximately ΔP = -5 at Layer 0, decreases to approximately ΔP = -25 at Layer 10, and then slightly recovers to approximately ΔP = -20 at Layer 25.

* **Q-Anchored (TriviaQA):** Starts at approximately ΔP = -20 at Layer 0, decreases to approximately ΔP = -50 at Layer 10, and then decreases further to approximately ΔP = -65 at Layer 25.

* **A-Anchored (TriviaQA):** Starts at approximately ΔP = -10 at Layer 0, decreases to approximately ΔP = -35 at Layer 10, and then decreases further to approximately ΔP = -50 at Layer 25.

* **Q-Anchored (HotpotQA):** Starts at approximately ΔP = -10 at Layer 0, decreases to approximately ΔP = -40 at Layer 10, and then decreases further to approximately ΔP = -60 at Layer 25.

* **A-Anchored (HotpotQA):** Starts at approximately ΔP = -5 at Layer 0, decreases to approximately ΔP = -30 at Layer 10, and then decreases further to approximately ΔP = -50 at Layer 25.

* **Q-Anchored (NQ):** Starts at approximately ΔP = -15 at Layer 0, decreases to approximately ΔP = -45 at Layer 10, and then decreases further to approximately ΔP = -60 at Layer 25.

* **A-Anchored (NQ):** Starts at approximately ΔP = -5 at Layer 0, decreases to approximately ΔP = -30 at Layer 10, and then decreases further to approximately ΔP = -45 at Layer 25.

### Key Observations

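* In both charts, all lines trend downward overall: ΔP becomes more negative as the layer number increases. The PopQA lines are the exception after Layer 10, where they recover by about 5 points. Taking ΔP as a change in perplexity, as this description does, a more negative value corresponds to a larger reduction in perplexity, not an increase.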

* The Q-Anchored lines consistently have lower ΔP values (more negative) than the A-Anchored lines for the same dataset at every listed layer. Whether this reflects better performance depends on how ΔP is defined, which the chart itself does not state.

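* Q-Anchored (TriviaQA) reaches the most negative final ΔP in both models (approximately -55 for 1B and -65 for 3B). Among the A-Anchored lines TriviaQA is not clearly the lowest: A-Anchored (HotpotQA) ends lower in the 1B model (-45 vs. -40) and ties it in the 3B model (-50). The chart alone does not show which dataset is the most challenging.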

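* The 3B model (right chart) ends at lower ΔP values than the 1B model (left chart) for TriviaQA, HotpotQA, and NQ, by roughly 5 to 10 points. PopQA is the exception: Q-Anchored (PopQA) ends higher in the 3B model (-35 vs. -45), and A-Anchored (PopQA) ends at about -20 in both. The two charts also cover different layer ranges (about 15 vs. 25 layers), so final-layer values are not compared at equal depth.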
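The comparisons above can be checked against the approximate readings listed under the detailed analysis. The following is a minimal Python sketch, assuming those readings (Layer 0, Layer 10, final layer) are accurate to within a few points. The names `READINGS`, `q_below_a_everywhere`, `most_negative_dataset`, and `size_shift` are hypothetical helpers introduced here for illustration; they are not part of the original figure or any library.

```python
# Approximate ΔP readings transcribed from the description:
# each tuple is (Layer 0, Layer 10, final layer). Values are estimates, not source data.
READINGS = {
    "Llama-3.2-1B": {
        "PopQA":    {"Q": (-15, -50, -45), "A": (-5, -25, -20)},
        "TriviaQA": {"Q": (-20, -45, -55), "A": (-10, -30, -40)},
        "HotpotQA": {"Q": (-10, -35, -50), "A": (-5, -30, -45)},
        "NQ":       {"Q": (-15, -40, -50), "A": (-5, -30, -40)},
    },
    "Llama-3.2-3B": {
        "PopQA":    {"Q": (-15, -40, -35), "A": (-5, -25, -20)},
        "TriviaQA": {"Q": (-20, -50, -65), "A": (-10, -35, -50)},
        "HotpotQA": {"Q": (-10, -40, -60), "A": (-5, -30, -50)},
        "NQ":       {"Q": (-15, -45, -60), "A": (-5, -30, -45)},
    },
}


def q_below_a_everywhere():
    """True if every Q-Anchored reading is more negative than its A-Anchored counterpart."""
    return all(
        q < a
        for datasets in READINGS.values()
        for series in datasets.values()
        for q, a in zip(series["Q"], series["A"])
    )


def most_negative_dataset(model, anchor="Q"):
    """Dataset whose line ends at the lowest ΔP for the given model and anchoring."""
    datasets = READINGS[model]
    return min(datasets, key=lambda name: datasets[name][anchor][-1])


def size_shift(dataset, anchor):
    """Final ΔP of the 3B model minus that of the 1B model (negative = 3B ends lower)."""
    final_3b = READINGS["Llama-3.2-3B"][dataset][anchor][-1]
    final_1b = READINGS["Llama-3.2-1B"][dataset][anchor][-1]
    return final_3b - final_1b


if __name__ == "__main__":
    print(q_below_a_everywhere())
    for model in READINGS:
        print(model, most_negative_dataset(model))
    for dataset in READINGS["Llama-3.2-1B"]:
        print(dataset, size_shift(dataset, "Q"), size_shift(dataset, "A"))
```

Because the inputs are eyeballed from the chart, differences of 5 points or less should be treated as within reading error.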

### Interpretation

The data suggests that ΔP grows more negative at deeper layers of both Llama models. If ΔP is read as a change in perplexity, this corresponds to a larger reduction in perplexity with depth, not a degradation. The size of the effect varies with the QA dataset and the anchoring method. Q-Anchored lines sit below A-Anchored lines for every dataset, so the question-anchored configuration produces the larger change in ΔP; whether that amounts to better performance depends on how ΔP is defined. The 3B model reaches lower final values than the 1B model for TriviaQA, HotpotQA, and NQ, but not for PopQA, so the effect of model size is not uniform across datasets. PopQA also differs in shape: in both models its lines bottom out around Layer 10 and then partially recover, while the other datasets keep decreasing through the final layer. These differences highlight the importance of dataset characteristics in model evaluation.