## Neural Network Architecture Diagram: Dual-Path Model for Rainfall and Geographic Data

### Overview

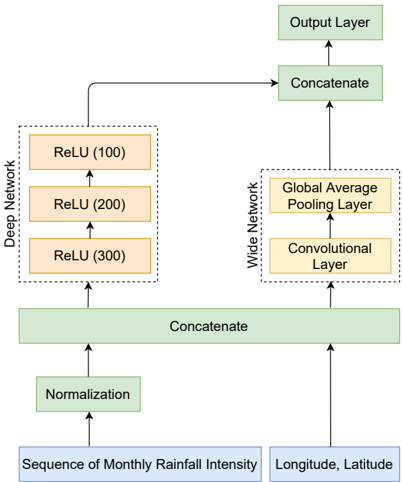

The image displays a block diagram of a neural network architecture designed to process two distinct input types: a sequence of monthly rainfall intensity and geographic coordinates (longitude, latitude). The model features a dual-path design, splitting the processed input into a "Deep Network" branch and a "Wide Network" branch before merging them for a final output. The diagram uses color-coded blocks and directional arrows to illustrate the data flow and transformation stages.

### Components/Axes

The diagram is structured as a vertical flowchart with inputs at the bottom and the output at the top. All text is in English.

**Input Layer (Bottom):**

* **Left Input Block:** "Sequence of Monthly Rainfall Intensity" (Light blue rectangle).

* **Right Input Block:** "Longitude, Latitude" (Light blue rectangle).

**Preprocessing & Initial Merge:**

* **Normalization Block:** A green rectangle labeled "Normalization" receives the rainfall sequence input.

* **First Concatenation Block:** A wide green rectangle labeled "Concatenate" receives arrows from the "Normalization" block and the "Longitude, Latitude" block. This merges the two input streams.
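The diagram does not say which normalization is applied or how many months the sequence covers. As a minimal sketch, assuming z-score normalization and a 12-month sequence (both are assumptions, as are the helper names `normalize` and `concatenate` and the sample values), the preprocessing and first merge might look like this in plain Python:

```python
from statistics import mean, pstdev


def normalize(sequence):
    """Z-score a sequence (assumed scheme; the diagram only says "Normalization")."""
    mu = mean(sequence)
    sigma = pstdev(sequence)
    if sigma == 0:
        # A constant sequence carries no variation; map it to zeros.
        return [0.0 for _ in sequence]
    return [(x - mu) / sigma for x in sequence]


def concatenate(*vectors):
    """Join several feature vectors end to end into one flat list."""
    merged = []
    for vector in vectors:
        merged.extend(vector)
    return merged


# Hypothetical inputs: 12 monthly rainfall values and one (longitude, latitude) pair.
rainfall = [12.0, 30.5, 48.2, 75.0, 110.3, 95.1, 60.4, 42.0, 25.7, 18.3, 10.9, 8.6]
coords = [101.7, 3.1]

# Only the rainfall passes through normalization; the coordinates are merged as-is,
# matching the arrows in the diagram.
features = concatenate(normalize(rainfall), coords)
print(len(features))  # 14
```

Under these assumptions the merged vector has 14 entries: 12 normalized rainfall values followed by the two raw coordinates.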

**Dual Network Paths (Middle):**

The data from the first concatenation splits into two parallel paths, enclosed in dashed-line boxes.

1. **Left Path - "Deep Network" (Dashed box on the left):**

   * Contains three orange rectangles stacked vertically, connected by upward arrows.

   * **Bottom:** "ReLU (300)"

   * **Middle:** "ReLU (200)"

   * **Top:** "ReLU (100)"

   * The numbers in parentheses likely indicate the number of neurons or units in each fully connected layer with a ReLU activation function.

2. **Right Path - "Wide Network" (Dashed box on the right):**

   * Contains two yellow rectangles stacked vertically, connected by an upward arrow.

   * **Bottom:** "Convolutional Layer"

   * **Top:** "Global Average Pooling Layer"
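To make the size of each branch concrete, the following sketch does the shape and parameter bookkeeping for both paths. Only the widths 300, 200, and 100 come from the diagram. The input width of 14, the kernel size of 3, the 32 filters, and the unpadded convolution are assumptions chosen for illustration, and the function names are hypothetical.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def deep_branch_params(n_in, widths=(300, 200, 100)):
    """Total parameters of the stacked ReLU layers shown in the diagram."""
    total = 0
    for width in widths:
        total += dense_params(n_in, width)
        n_in = width  # the next layer consumes this layer's output
    return total


def conv1d_params(kernel_size, in_channels, n_filters):
    """Weights plus biases of a 1D convolutional layer."""
    return kernel_size * in_channels * n_filters + n_filters


def conv1d_output_length(length, kernel_size, stride=1):
    """Output length of an unpadded ("valid") 1D convolution."""
    return (length - kernel_size) // stride + 1


n_features = 14   # assumed: 12 monthly values + longitude + latitude
n_filters = 32    # assumed; the diagram gives no filter count
kernel_size = 3   # assumed; the diagram gives no kernel size

print(deep_branch_params(n_features))                 # 84800
print(conv1d_params(kernel_size, 1, n_filters))       # 128
print(conv1d_output_length(n_features, kernel_size))  # 12

# Global average pooling leaves one value per filter, so the second
# "Concatenate" block would see 100 deep features plus n_filters wide features.
print(100 + n_filters)                                # 132
```

With these assumed settings the deep branch holds nearly all of the parameters, while the convolutional branch contributes a small number of shared filters.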

**Final Merge and Output (Top):**

* **Second Concatenation Block:** A green rectangle labeled "Concatenate" receives arrows from the top of the "Deep Network" (ReLU (100)) and the top of the "Wide Network" (Global Average Pooling Layer).

* **Output Layer:** A final green rectangle labeled "Output Layer" receives an arrow from the second "Concatenate" block.

### Detailed Analysis

**Data Flow and Connections:**

1. The "Sequence of Monthly Rainfall Intensity" is first normalized.

2. The normalized rainfall data is concatenated with the raw "Longitude, Latitude" data.

3. This combined feature vector is fed simultaneously into two separate processing streams:

   * **Deep Network Stream:** Processes the combined features through three successive, decreasingly sized fully connected layers (300 -> 200 -> 100 units) with ReLU activation.

   * **Wide Network Stream:** Processes the combined features through a convolutional layer followed by a global average pooling layer, which averages each feature map over its length and so reduces it to a single value per filter.

4. The final, refined feature representations from both the Deep Network (output of ReLU (100)) and the Wide Network (output of Global Average Pooling) are concatenated.

5. This final concatenated vector is passed to the "Output Layer" for the model's prediction or classification task.
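The five steps above can be sketched end to end in plain Python. This is an illustration under stated assumptions, not the authors' implementation: weights are random rather than trained, normalization is assumed to be z-score, the convolution is assumed to be unpadded, single-channel, and without bias or activation, and the filter count, kernel size, and single output unit are hypothetical. Only the layer widths 300, 200, and 100 come from the diagram.

```python
import random
from statistics import mean, pstdev

random.seed(0)  # reproducible random weights; a real model would learn these


def normalize(seq):
    """Z-score normalization (assumed; the diagram does not name a scheme)."""
    mu, sigma = mean(seq), pstdev(seq)
    return [(x - mu) / sigma if sigma else 0.0 for x in seq]


def init_dense(n_in, n_out):
    """Random weight matrix (n_out rows of n_in values) and zero biases."""
    weights = [[random.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x, layer, relu=True):
    """Fully connected layer, optionally followed by ReLU."""
    weights, biases = layer
    out = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
    return [max(0.0, o) for o in out] if relu else out


def conv1d(x, kernels):
    """Unpadded 1D convolution over a single-channel input, one map per kernel."""
    k = len(kernels[0])
    return [
        [sum(w * x[i + j] for j, w in enumerate(kernel)) for i in range(len(x) - k + 1)]
        for kernel in kernels
    ]


def global_average_pool(feature_maps):
    """Average each feature map over its length: one value per filter."""
    return [sum(fm) / len(fm) for fm in feature_maps]


def init_params(n_in, n_filters=8, kernel_size=3, n_out=1):
    widths = [n_in, 300, 200, 100]  # widths from the diagram
    deep = [init_dense(a, b) for a, b in zip(widths, widths[1:])]
    kernels = [[random.gauss(0.0, 0.1) for _ in range(kernel_size)] for _ in range(n_filters)]
    return {"deep": deep, "kernels": kernels, "output": init_dense(100 + n_filters, n_out)}


def forward(rainfall, coords, params):
    x = normalize(rainfall) + list(coords)                    # steps 1 and 2
    deep = x
    for layer in params["deep"]:                              # step 3, deep stream
        deep = dense(deep, layer)
    wide = global_average_pool(conv1d(x, params["kernels"]))  # step 3, wide stream
    merged = deep + wide                                      # step 4
    return dense(merged, params["output"], relu=False)        # step 5


# Hypothetical inputs: 12 monthly rainfall values and a (longitude, latitude) pair.
rainfall = [12.0, 30.5, 48.2, 75.0, 110.3, 95.1, 60.4, 42.0, 25.7, 18.3, 10.9, 8.6]
coords = [101.7, 3.1]
params = init_params(n_in=len(rainfall) + len(coords))
prediction = forward(rainfall, coords, params)
print(len(prediction))  # 1
```

Both streams read the same 14-element vector `x`, which mirrors the single arrow leaving the first "Concatenate" block and splitting into the two dashed boxes.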

**Spatial Layout:**

* The diagram is vertically oriented, emphasizing a bottom-up data flow.

* The two input blocks are positioned side-by-side at the bottom.

* The "Deep Network" and "Wide Network" are presented as parallel, equally prominent branches in the center.

* The two "Concatenate" blocks act as critical junction points, visually represented as wide green bars that span the width of the flow they are merging.

### Key Observations

* **Hybrid Architecture:** The model explicitly combines a deep, multi-layer perceptron-style network ("Deep Network") with a convolutional network component ("Wide Network"). This suggests an intent to capture both high-level abstract features (via deep layers) and potentially local or spatial patterns within the input data (via convolution).

* **Input Heterogeneity:** The model is designed for multi-modal input, handling sequential/temporal data (rainfall intensity) and static spatial coordinates (longitude, latitude) simultaneously.

* **Feature Fusion Strategy:** Fusion happens at two points. The first concatenation merges the two input sources (normalized rainfall and coordinates) early, so both branches receive the same combined vector. The second concatenation merges the outputs of the deep and wide pathways late, just before the output layer.

* **Dimensionality Reduction:** The "Global Average Pooling Layer" in the Wide Network is a key component for reducing the spatial dimensions of the feature maps from the convolutional layer, creating a fixed-size output for concatenation.
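The fixed-size property of global average pooling can be shown with a small, framework-free sketch. The function name and the toy feature maps are hypothetical. The point is that the output length equals the number of channels, whatever the length of each feature map.

```python
def global_average_pool(feature_maps):
    """Collapse each channel (a list of positions) to its mean."""
    return [sum(channel) / len(channel) for channel in feature_maps]


# Two toy inputs with the same number of channels but different lengths.
short_maps = [[1.0, 3.0], [2.0, 4.0], [0.0, 6.0]]  # 3 channels x 2 positions
long_maps = [[1.0] * 10, [2.0] * 10, [3.0] * 10]   # 3 channels x 10 positions

print(global_average_pool(short_maps))  # [2.0, 3.0, 3.0]
print(global_average_pool(long_maps))   # [1.0, 2.0, 3.0]
```

Both calls return three values, which is why the pooled output can be concatenated with the 100-unit deep output regardless of how long the convolutional feature maps are.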

### Interpretation

This architecture diagram represents a specialized neural network model likely designed for a spatio-temporal prediction or regression task, such as forecasting rainfall impacts, predicting agricultural yield, or modeling other environmental variables from geographic and climatic data.

The **dual-path design** is the architecture's defining feature. The "Deep Network" path, with its stack of ReLU layers, is suited to learning non-linear, hierarchical relationships in the combined input. The "Wide Network" path, using convolution and pooling, is suited to detecting local patterns, which would help if the input has a sequential or spatial structure that convolutional filters can exploit (e.g., treating the rainfall sequence and coordinates as a 1D "image").

The **two-stage concatenation** strategy is also notable. The first concatenation attaches geographic context to the normalized rainfall data before any learned layer sees it. The second merges the high-level abstractions from the deep path with the pooled features from the wide path, so the output layer receives a composite representation of the input. The design implies a working hypothesis that deep feature extraction and localized pattern recognition both contribute to performance on the target task. How well the model works would depend on how well these two processing paradigms complement each other for the given problem.