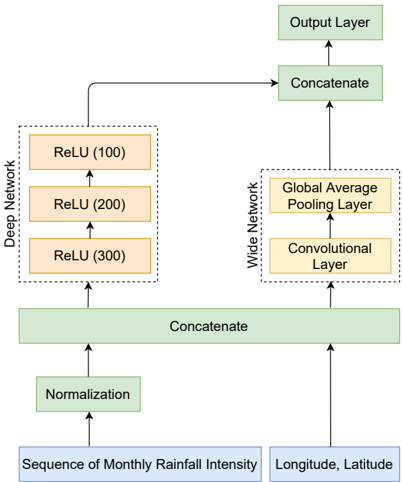

## Diagram: Neural Network Architecture for Rainfall Prediction

### Overview

The image depicts a neural network architecture designed for rainfall prediction. The network consists of two main branches: a "Deep Network" and a "Wide Network". These branches process different input data types – a sequence of monthly rainfall intensity and longitude/latitude coordinates, respectively – and are then combined to produce an output. The diagram illustrates the flow of data through various layers, including normalization, ReLU activations, convolutional layers, and pooling layers.

### Components

The diagram is structured vertically, with inputs at the bottom and the output at the top. Key components include:

* **Inputs:**

  * "Sequence of Monthly Rainfall Intensity"

  * "Longitude, Latitude"

* **Processing Layers:**

  * "Normalization"

  * "ReLU (100)", "ReLU (200)", "ReLU (300)" – within the Deep Network

  * "Convolutional Layer" – within the Wide Network

  * "Global Average Pooling Layer" – within the Wide Network

  * "Concatenate" (appears twice)

* **Output:**

  * "Output Layer"

The diagram uses arrows to indicate the direction of data flow. The "Deep Network" is positioned on the left, and the "Wide Network" is on the right.

### Detailed Analysis

1. **Input Layer:**

   * The "Sequence of Monthly Rainfall Intensity" input feeds into a "Normalization" layer.

   * The "Longitude, Latitude" input directly feeds into the "Wide Network".

2. **Deep Network:**

   * The output of the "Normalization" layer is fed into a series of three "ReLU" layers.

   * The ReLU layers have 100, 200, and 300 units respectively, indicated by the numbers in parentheses.

   * Data flows sequentially from ReLU(100) to ReLU(200) to ReLU(300).

3. **Wide Network:**

   * The "Longitude, Latitude" input is fed into a "Convolutional Layer".

   * The output of the "Convolutional Layer" is then passed through a "Global Average Pooling Layer".

4. **Concatenation and Output:**

   * The output of the "Deep Network" (ReLU(300)) and the output of the "Wide Network" (Global Average Pooling Layer) are concatenated using a "Concatenate" layer.

   * The output of this "Concatenate" layer is then fed into another "Concatenate" layer.

   * Finally, the output of the second "Concatenate" layer is passed to the "Output Layer".
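
The data flow described above can be traced with a minimal forward-pass sketch in plain Python. Only the layer order and the ReLU widths (100, 200, 300) come from the diagram. Everything else is an assumption made for illustration: z-score normalization, a kernel size of 1, eight convolutional filters, random untrained weights, a single scalar output, and the example input values. The second "Concatenate" layer is omitted because the diagram description does not say what else it merges.

```python
import math
import random

def normalize(seq):
    """Z-score normalization (assumed; the diagram only says "Normalization")."""
    mean = sum(seq) / len(seq)
    var = sum((x - mean) ** 2 for x in seq) / len(seq)
    std = math.sqrt(var) or 1.0  # fall back to 1.0 for a constant sequence
    return [(x - mean) / std for x in seq]

def dense_relu(x, weights, biases):
    """Fully connected layer followed by a ReLU activation."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]

def conv1d(x, kernels):
    """Valid 1-D cross-correlation; returns one feature map per kernel."""
    k = len(kernels[0])
    return [[sum(kern[j] * x[i + j] for j in range(k))
             for i in range(len(x) - k + 1)]
            for kern in kernels]

def global_average_pool(feature_maps):
    """Average each feature map down to a single value."""
    return [sum(fm) / len(fm) for fm in feature_maps]

def build_params(seq_len, n_filters=8, seed=0):
    """Random placeholder weights; n_filters and seed are hypothetical choices."""
    rng = random.Random(seed)
    sizes = [seq_len, 100, 200, 300]  # ReLU widths taken from the diagram
    deep = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)]
                   for _ in range(n_out)]
        deep.append((weights, [0.0] * n_out))
    conv = [[rng.uniform(-0.1, 0.1)] for _ in range(n_filters)]  # kernel size 1
    out_w = [rng.uniform(-0.1, 0.1) for _ in range(300 + n_filters)]
    return {"deep": deep, "conv": conv, "out": (out_w, 0.0)}

def forward(rainfall, lon_lat, params):
    # Deep branch: Normalization -> ReLU(100) -> ReLU(200) -> ReLU(300)
    h = normalize(rainfall)
    for weights, biases in params["deep"]:
        h = dense_relu(h, weights, biases)
    # Wide branch: Convolutional Layer -> Global Average Pooling Layer
    pooled = global_average_pool(conv1d(lon_lat, params["conv"]))
    # Concatenate the two branches, then apply a linear output layer
    merged = h + pooled
    out_w, out_b = params["out"]
    prediction = sum(w * m for w, m in zip(out_w, merged)) + out_b
    return prediction, len(merged)

# Illustrative inputs only: twelve made-up monthly values and one coordinate pair.
rainfall = [12.0, 30.5, 48.2, 80.1, 120.4, 200.3,
            260.7, 240.9, 150.2, 70.6, 25.3, 10.8]
params = build_params(seq_len=len(rainfall))
prediction, merged_width = forward(rainfall, [77.6, 12.9], params)
print(merged_width)  # 308 = 300 deep features + 8 pooled features
```

The printed width shows how the concatenation simply places the 300 deep features next to the pooled wide features. The prediction itself is meaningless here because the weights are untrained.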

### Key Observations

* The architecture combines a deep, fully connected network with a wide, convolutional network.

* The deep network appears to process temporal data (monthly rainfall intensity), while the wide network processes spatial data (longitude and latitude).

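* The ReLU activations apply a non-linear transformation after each layer of the deep network; without them, the three stacked layers would collapse into a single linear map.

* The "Global Average Pooling Layer" in the wide network averages each feature map produced by the convolutional layer into a single value, so the wide branch contributes one feature per convolutional filter.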

* The two "Concatenate" layers suggest a hierarchical combination of features extracted from both networks.
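
Two of the observations above, the non-linearity of ReLU and the dimensionality reduction of global average pooling, can be checked on small hand-made numbers. The values below are illustrative and do not come from the diagram.

```python
def relu(values):
    """Element-wise ReLU: negative values become 0, the rest pass through."""
    return [max(0.0, v) for v in values]

def global_average_pool(feature_maps):
    """Collapse each feature map to its mean, one value per map."""
    return [sum(fm) / len(fm) for fm in feature_maps]

# ReLU zeroes out negative pre-activations.
print(relu([-2.0, -0.5, 0.0, 1.5]))  # [0.0, 0.0, 0.0, 1.5]

# ReLU is not additive, which is what makes it non-linear:
a, b = -1.0, 2.0
print(relu([a + b])[0])              # 1.0
print(relu([a])[0] + relu([b])[0])   # 2.0

# Three feature maps of length 4 are reduced to three scalars.
feature_maps = [
    [1.0, 2.0, 3.0, 4.0],
    [0.0, 0.0, 2.0, 2.0],
    [5.0, 5.0, 5.0, 5.0],
]
print(global_average_pool(feature_maps))  # [2.5, 1.0, 5.0]
```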

### Interpretation

This diagram illustrates a neural network architecture designed to leverage both temporal and spatial information for rainfall prediction. The "Deep Network" likely learns complex patterns in the sequence of monthly rainfall, while the "Wide Network" captures spatial information from the longitude and latitude coordinates. By concatenating the outputs of the two branches, the model can potentially make more accurate predictions by considering both the rainfall history at a location and its geographical context.

The normalization layer likely improves training by scaling the input data, and the ReLU activations introduce the non-linearity the deep branch needs to learn complex relationships. The convolutional layer in the wide network appears intended to extract relevant spatial features. The two concatenate layers suggest a hierarchical feature combination, in which features from both networks are merged and then processed further.

The diagram does not provide specific details about the output layer, such as the type of prediction (e.g., rainfall amount, probability of rain) or the loss function used for training.
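
Because the diagram leaves the output layer unspecified, the sketch below contrasts the two prediction types mentioned above. Both heads, their names, and the example numbers are hypothetical; nothing here indicates which one the depicted model uses. A linear head would typically be trained with a regression loss such as mean squared error, and a sigmoid head with binary cross-entropy.

```python
import math

def linear_head(features, weights, bias):
    """Regression-style output: an unbounded real value, e.g. a rainfall amount."""
    return sum(w * f for w, f in zip(weights, features)) + bias

def sigmoid_head(features, weights, bias):
    """Classification-style output: a value in (0, 1), e.g. a probability of rain."""
    return 1.0 / (1.0 + math.exp(-linear_head(features, weights, bias)))

# Made-up concatenated features and weights, chosen so the arithmetic is easy to follow.
features = [0.5, 1.0, 0.0]
weights = [2.0, -1.0, 3.0]

amount = linear_head(features, weights, bias=0.0)
probability = sigmoid_head(features, weights, bias=0.0)
print(amount)       # 0.0  (2.0*0.5 - 1.0*1.0 + 3.0*0.0)
print(probability)  # 0.5  (sigmoid of 0.0)
```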