## Diagram: Neural Network Architecture for Rainfall Intensity Prediction

### Overview

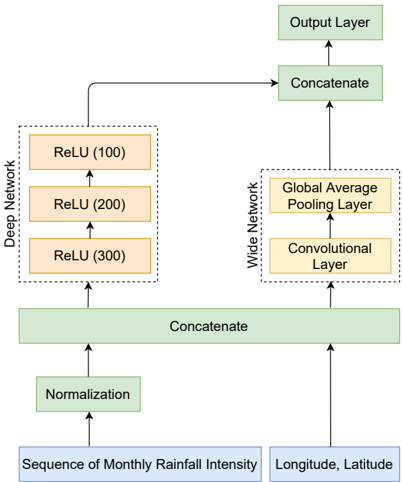

The diagram illustrates a hybrid neural network architecture combining deep and wide networks for processing geospatial and temporal rainfall data. The model integrates monthly rainfall intensity sequences with geographic coordinates (longitude/latitude) through normalization, feature extraction, and concatenation before producing predictions via an output layer.

### Components/Axes

1. **Input Data**:

   - **Sequence of Monthly Rainfall Intensity** (blue box)

   - **Longitude, Latitude** (blue box)

2. **Processing Pipeline**:

   - **Normalization** (green box)

   - **Deep Network** (orange boxes):

     - ReLU (100 units)

     - ReLU (200 units)

     - ReLU (300 units)

   - **Wide Network** (orange boxes):

     - Convolutional Layer

     - Global Average Pooling Layer

   - **Concatenate** (green boxes, two instances)

3. **Output**:

   - **Output Layer** (green box)
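
The diagram labels a Normalization block but does not say which scheme it uses. The following is a minimal sketch assuming z-score standardization (an assumption; min-max scaling would fit the diagram equally well). The function names `fit_zscore` and `apply_zscore` and the sample rainfall values are hypothetical.

```python
from statistics import mean, pstdev

def fit_zscore(values):
    """Estimate the mean and standard deviation from training values."""
    mu = mean(values)
    sigma = pstdev(values)
    # Guard against constant features, which would cause division by zero.
    return mu, (sigma if sigma > 0 else 1.0)

def apply_zscore(values, mu, sigma):
    """Rescale values using previously fitted statistics."""
    return [(v - mu) / sigma for v in values]

# Hypothetical monthly rainfall intensities (mm) for one location.
train_rain = [12.0, 30.5, 88.2, 140.0, 210.3, 95.1, 40.0, 22.4, 15.0, 9.8, 5.2, 7.5]
mu, sigma = fit_zscore(train_rain)
normalized = apply_zscore(train_rain, mu, sigma)
```

Fitting the statistics once and reusing them for new inputs keeps training and inference on the same scale. Longitude and latitude would be normalized the same way with their own statistics.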

### Detailed Analysis

- **Data Flow**:

  1. Input data (rainfall sequences + coordinates) undergoes normalization.

  2. Normalized data splits into two parallel pathways:

     - **Deep Network**: Three stacked ReLU layers of increasing width (100 → 200 → 300 units).

     - **Wide Network**: A convolutional layer extracts spatial features; global average pooling then summarizes them.

  3. Outputs from both networks pass through the two Concatenate blocks before reaching the final output layer.

- **Key Architectural Choices**:

  - The hybrid design merges the deep network's hierarchical feature learning with the wide network's ability to capture spatial relationships (convolution + pooling).

  - Normalization puts the inputs on a common scale, which helps keep training stable.

  - Concatenation combines low-level features (wide network) with high-level abstractions (deep network).
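
The data flow above can be sketched as a single forward pass. This is an illustrative reading of the diagram, not the original implementation. It makes several assumptions: the deep branch receives the rainfall sequence plus coordinates, the convolution is one-dimensional over the rainfall sequence, the two Concatenate blocks are collapsed into one merge, and the output is a single linear unit. All function names, sizes (12 months, 4 kernels of width 3), and the random weights are hypothetical.

```python
import random

def dense_relu(x, weights, biases):
    """Fully connected layer with ReLU activation: max(0, W x + b)."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]

def init_dense(n_in, n_out, rng):
    """Hypothetical initializer: small Gaussian weights, zero biases."""
    scale = (2.0 / n_in) ** 0.5
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def conv1d(seq, kernels):
    """Unpadded, stride-1 convolution; returns one feature map per kernel."""
    width = len(kernels[0])
    return [[sum(w * seq[t + j] for j, w in enumerate(kernel))
             for t in range(len(seq) - width + 1)]
            for kernel in kernels]

def global_average_pool(feature_maps):
    """Collapse each feature map to its mean value."""
    return [sum(fm) / len(fm) for fm in feature_maps]

def forward(rain_seq, lon_lat, params):
    # Assumption: the deep branch sees the sequence and the coordinates together.
    h = list(rain_seq) + list(lon_lat)
    # Deep branch: ReLU layers of 100, 200, and 300 units.
    for weights, biases in params["deep"]:
        h = dense_relu(h, weights, biases)
    # Wide branch: convolution over the rainfall sequence, then pooling.
    pooled = global_average_pool(conv1d(rain_seq, params["kernels"]))
    # Assumption: the diagram's two Concatenate blocks are collapsed into one merge.
    merged = h + pooled
    w_out, b_out = params["out"]
    prediction = sum(w * m for w, m in zip(w_out, merged)) + b_out
    return prediction, merged

rng = random.Random(0)
n_months, n_kernels, kernel_width = 12, 4, 3  # assumed sizes
sizes = [n_months + 2, 100, 200, 300]
params = {
    "deep": [init_dense(a, b, rng) for a, b in zip(sizes, sizes[1:])],
    "kernels": [[rng.gauss(0.0, 0.5) for _ in range(kernel_width)]
                for _ in range(n_kernels)],
    "out": ([rng.gauss(0.0, 0.1) for _ in range(300 + n_kernels)], 0.0),
}
rain_seq = [rng.gauss(0.0, 1.0) for _ in range(n_months)]  # stands in for normalized rainfall
lon_lat = [0.3, -1.1]                                      # stands in for normalized coordinates
prediction, merged = forward(rain_seq, lon_lat, params)
```

Under these assumptions the merged vector has 300 values from the deep branch plus one value per convolution kernel. A practical model would use a tensor library and trained weights; plain lists are used here only to keep the sketch dependency-free.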

### Key Observations

1. **Feature Integration**: The dual-pathway design suggests the model aims to capture both temporal patterns (rainfall sequences) and spatial correlations (geographic coordinates).

2. **Scaling Strategy**: ReLU layer widths increase progressively through the deep network (100 → 200 → 300 units), suggesting that later layers are given more capacity to represent combinations of earlier features.

3. **Spatial Processing**: The wide network's convolutional layer likely handles 2D spatial relationships between geographic coordinates, and global pooling reduces dimensionality while preserving location-invariant features.
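
To make the scaling of the deep network concrete, the parameter count of the three ReLU layers can be worked out directly. The sketch below assumes fully connected layers with biases and an input of 14 values (12 monthly intensities plus longitude and latitude); the input size is an assumption, since the diagram does not state the sequence length.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out

def deep_branch_params(n_in, widths=(100, 200, 300)):
    """Total trainable parameters across the stacked dense layers."""
    total = 0
    for n_out in widths:
        total += dense_params(n_in, n_out)
        n_in = n_out  # the next layer's input is this layer's output
    return total

# Assumed input: 12 monthly values plus longitude and latitude.
print(deep_branch_params(12 + 2))  # 1500 + 20200 + 60300 = 82000
```

Most of the parameters sit in the last layer (200 × 300 weights), which is typical when widths grow with depth.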

### Interpretation

This architecture demonstrates a **spatiotemporal fusion approach** for rainfall prediction:

- The deep network processes temporal dependencies in rainfall sequences through its progressively wider ReLU layers.

- The wide network's convolutional layer captures geographic patterns (e.g., regional rainfall correlations), while global pooling summarizes these spatially.

- Concatenation merges these complementary features, enabling the output layer to make predictions that account for both time-series dynamics and spatial context.
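
The summarizing role of global average pooling can be illustrated with a small example. The feature-map values below are hypothetical stand-ins for convolution outputs. Because each map is reduced to its mean, the result does not depend on where an activation occurs or on how long the map is.

```python
def global_average_pool(feature_maps):
    """Reduce each feature map (a list of activations) to its mean."""
    return [sum(fm) / len(fm) for fm in feature_maps]

# Hypothetical convolution outputs: two feature maps over four positions.
maps = [[1.0, 3.0, 0.0, 4.0], [2.0, 2.0, 6.0, 2.0]]
# The same activations shifted circularly by one position.
shifted = [fm[1:] + fm[:1] for fm in maps]
# Longer maps (here, each map repeated) still pool to one value per map.
longer = [fm + fm for fm in maps]

pooled = global_average_pool(maps)
pooled_shifted = global_average_pool(shifted)
pooled_longer = global_average_pool(longer)
```

All three calls return one value per feature map, which gives the concatenation step a fixed-length vector regardless of input length.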

The design resembles hybrid models such as Wide & Deep, which pair a deep component that generalizes through learned representations with a wide component that memorizes feature interactions. The diagram shows no activation function on the output layer, so the same architecture could serve regression or classification depending on how that layer is configured.
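
As a sketch of how the output layer's configuration would determine the task, the example below contrasts a linear head for regression with a softmax head for classification. Neither is confirmed by the diagram. The feature vector, the weights, and the three-class setup (for example low, moderate, and heavy intensity) are hypothetical.

```python
import math

def linear_head(features, weights, bias):
    """Regression: unbounded real-valued output, no activation."""
    return sum(w * f for w, f in zip(weights, features)) + bias

def softmax(logits):
    """Classification: convert logits to probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

features = [0.5, 1.0, 2.0]  # stands in for the concatenated feature vector

# Regression: one output unit predicting a rainfall intensity value.
rain_value = linear_head(features, [2.0, -1.0, 0.5], 0.25)

# Classification: one logit per hypothetical intensity class.
class_weights = ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0])
class_probs = softmax([linear_head(features, w, 0.0) for w in class_weights])
```

The regression head would typically be trained with a squared-error loss and the classification head with cross-entropy.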