## Diagram: Neuro-Symbolic AI Architecture

### Overview

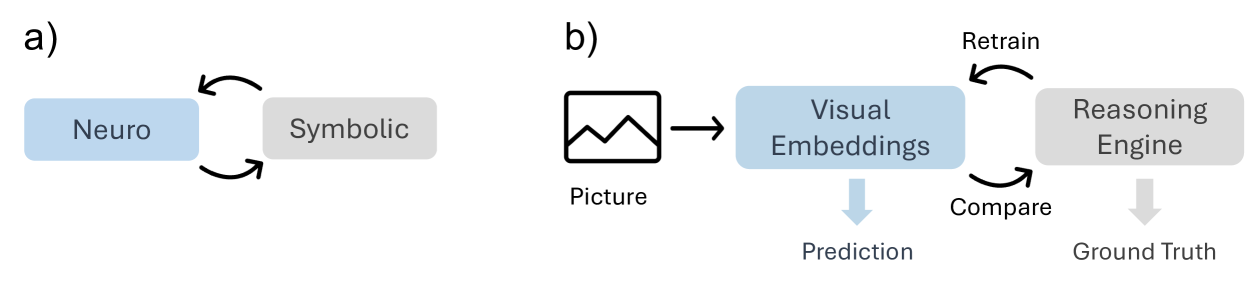

The image displays two related conceptual diagrams, labeled **a)** and **b)**, illustrating a neuro-symbolic artificial intelligence architecture. Diagram **a)** presents a high-level abstraction of the core concept, while diagram **b)** expands this into a specific pipeline for visual reasoning tasks. The diagrams use a consistent color scheme: blue for neural/perceptual components and gray for symbolic/reasoning components.

### Components

The image is divided into two distinct panels:

**Panel a) (Left Side):**

* **Component 1:** A blue rectangular box labeled **"Neuro"**.

* **Component 2:** A gray rectangular box labeled **"Symbolic"**.

* **Relationship:** Two curved, black arrows form a closed loop between the "Neuro" and "Symbolic" boxes, indicating a bidirectional, cyclical interaction or feedback loop.

**Panel b) (Right Side):**

* **Input:** An icon of a landscape picture labeled **"Picture"**.

* **Neural Component:** A blue rectangular box labeled **"Visual Embeddings"**.

* **Symbolic Component:** A gray rectangular box labeled **"Reasoning Engine"**.

* **Outputs:**

  * A downward-pointing blue arrow from "Visual Embeddings" leads to the label **"Prediction"**.

  * A downward-pointing gray arrow from "Reasoning Engine" leads to the label **"Ground Truth"**.

* **Process Flow & Feedback:**

  * A straight black arrow points from the "Picture" icon to the "Visual Embeddings" box.

  * A curved black arrow labeled **"Compare"** points from the "Visual Embeddings" box to the "Reasoning Engine" box.

  * A second curved black arrow labeled **"Retrain"** points from the "Reasoning Engine" box back to the "Visual Embeddings" box, completing a feedback loop.
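The arrows listed above can be encoded as a small directed graph, which makes the difference between panel b) and a feed-forward pipeline checkable: a depth-first search finds the "Compare"/"Retrain" cycle, and removing the "Retrain" edge leaves an acyclic graph. This is a hypothetical sketch; the node names are the diagram labels, while the dictionary layout and the `find_cycle` helper are illustrative assumptions, not part of the source.

```python
from typing import Dict, List, Optional

# Panel b) read off as a directed graph (hypothetical encoding).
# Each key lists the nodes its outgoing arrows point to.
EDGES: Dict[str, List[str]] = {
    "Picture": ["Visual Embeddings"],
    "Visual Embeddings": ["Prediction", "Reasoning Engine"],  # second edge = "Compare"
    "Reasoning Engine": ["Ground Truth", "Visual Embeddings"],  # second edge = "Retrain"
    "Prediction": [],
    "Ground Truth": [],
}


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one directed cycle as a node list (first node repeated at the end),
    or None if the graph is acyclic. Uses three-color depth-first search."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    color = {node: WHITE for node in graph}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        color[node] = GRAY
        path.append(node)
        for nxt in graph.get(node, []):
            state = color.get(nxt, WHITE)
            if state == GRAY:
                # Back edge to a node on the current path: that suffix is a cycle.
                return path[path.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found is not None:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in graph:
        if color[node] == WHITE:
            found = visit(node)
            if found is not None:
                return found
    return None


if __name__ == "__main__":
    print(find_cycle(EDGES))
    # → ['Visual Embeddings', 'Reasoning Engine', 'Visual Embeddings']
    # Dropping the "Retrain" arrow turns panel b) into a feed-forward pipeline.
    print(find_cycle({**EDGES, "Reasoning Engine": ["Ground Truth"]}))
    # → None
```

The three-color search is used because a plain visited set cannot tell a back edge (a true cycle) from an edge into an already finished branch.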

### Detailed Analysis

The diagrams describe a system where perception and reasoning are integrated.

* **In Diagram a):** The core principle is a continuous cycle between a "Neuro" module (likely a neural network for pattern recognition) and a "Symbolic" module (likely a logic-based system for structured reasoning). The bidirectional arrows imply that each module informs and updates the other.

* **In Diagram b):** This principle is applied to a visual task.

  1. A **"Picture"** is processed by the **"Visual Embeddings"** module (the "Neuro" component), which generates a numerical representation.

  2. This representation is used to make a **"Prediction"**.

  3. In parallel, the embeddings are passed (**"Compare"**) to the **"Reasoning Engine"** (the "Symbolic" component).

  4. The Reasoning Engine likely applies logical rules or known facts to derive a **"Ground Truth"**, against which the prediction is compared.

  5. Crucially, the Reasoning Engine can send feedback (**"Retrain"**) to the Visual Embeddings module, suggesting that symbolic reasoning is used to correct or improve the neural perception model over time.
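The five steps above can be sketched as a toy training loop. Everything here is a hypothetical illustration under strong simplifying assumptions: embeddings are fixed two-dimensional vectors whose dimensions are read as the made-up attributes `has_wings` and `has_feathers`; the "Reasoning Engine" is a single hand-written rule (`bird` if both attributes hold); and the neural side is reduced to a perceptron prediction head, so "Retrain" updates only that head rather than the embedding model itself.

```python
from typing import List, Set, Tuple

Vector = List[float]

# Hypothetical symbol grounding: dimension i of an embedding is read as a soft
# attribute and counts as a fact when it exceeds 0.5.
ATTRIBUTES = ("has_wings", "has_feathers")
# Hypothetical rule: "bird" holds when all of these premises are facts.
BIRD_PREMISES: Set[str] = {"has_wings", "has_feathers"}


def reasoning_engine(embedding: Vector) -> int:
    """Symbolic side: derive a +1 / -1 'Ground Truth' label for 'bird'."""
    facts = {name for name, value in zip(ATTRIBUTES, embedding) if value > 0.5}
    return 1 if BIRD_PREMISES <= facts else -1


class LinearHead:
    """Neural side, reduced to a perceptron over fixed embeddings."""

    def __init__(self, dim: int) -> None:
        self.w = [0.0] * dim
        self.b = 0.0

    def predict(self, x: Vector) -> int:
        score = sum(wi * xi for wi, xi in zip(self.w, x)) + self.b
        return 1 if score > 0 else -1

    def update(self, x: Vector, target: int) -> None:
        # Standard perceptron step towards the target label.
        self.w = [wi + target * xi for wi, xi in zip(self.w, x)]
        self.b += target


def compare(head: LinearHead, embeddings: List[Vector]) -> List[int]:
    """'Compare': indices where the prediction disagrees with the engine."""
    return [i for i, x in enumerate(embeddings) if head.predict(x) != reasoning_engine(x)]


def retrain(head: LinearHead, embeddings: List[Vector], disagreements: List[int]) -> None:
    """'Retrain': correct the head on each disagreement that is still wrong."""
    for i in disagreements:
        x = embeddings[i]
        target = reasoning_engine(x)
        if head.predict(x) != target:  # an earlier update may already have fixed it
            head.update(x, target)


def train(embeddings: List[Vector], max_rounds: int = 1000) -> Tuple[LinearHead, int]:
    """Repeat Compare/Retrain until the head agrees with the engine everywhere."""
    head = LinearHead(len(embeddings[0]))
    for round_idx in range(max_rounds):
        disagreements = compare(head, embeddings)
        if not disagreements:
            return head, round_idx
        retrain(head, embeddings, disagreements)
    return head, max_rounds


# Illustrative embeddings: [wing score, feather score].
EMBEDDINGS: List[Vector] = [
    [0.9, 0.8], [0.8, 0.9], [0.2, 0.9],
    [0.9, 0.1], [0.1, 0.2], [0.7, 0.3],
]

if __name__ == "__main__":
    head, rounds = train(EMBEDDINGS)
    print(compare(head, EMBEDDINGS))  # → []
```

Because the rule-derived labels for these six illustrative points happen to be linearly separable, the perceptron updates are guaranteed to stop, after which `compare` reports no disagreements. With labels that are not linearly separable, a linear head could never fully agree with the rule, and the loop would run until `max_rounds`.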

### Key Observations

1. **Color-Coded Semantics:** The consistent use of blue for "Neuro"/"Visual Embeddings" and gray for "Symbolic"/"Reasoning Engine" visually reinforces the separation and collaboration between these two AI paradigms.

2. **Closed-Loop System:** Both diagrams emphasize a closed-loop, iterative process. Rather than a simple feed-forward pipeline, each depicts a cycle, suggesting a system intended for continuous learning and refinement.

3. **Direction of Feedback:** The "Retrain" arrow in diagram **b)** is particularly significant. It indicates that the symbolic reasoning component drives updates to the neural component, so that symbolic knowledge supervises neural learning; this is one specific architectural choice among neuro-symbolic designs.

4. **Abstraction to Application:** Diagram **a)** is a pure conceptual model, while **b)** instantiates it with specific modules ("Visual Embeddings," "Reasoning Engine") and data flows ("Picture," "Prediction," "Ground Truth").

### Interpretation

These diagrams illustrate a **neuro-symbolic AI approach** aimed at overcoming the limitations of purely neural or purely symbolic systems.

* **What it suggests:** The architecture proposes that robust AI requires both **subsymbolic perception** (the "Neuro" part, good at handling noisy, raw data like images) and **symbolic reasoning** (the "Symbolic" part, good at logic, explanation, and leveraging structured knowledge). The "Compare" and "Retrain" loop is the mechanism for integration, where symbolic knowledge guides and improves perceptual learning.

* **How elements relate:** The "Picture" is the real-world input. "Visual Embeddings" are the system's internal, learned representation of that picture. The "Prediction" is the system's initial guess. The "Reasoning Engine" acts as a critic or supervisor, using rules or knowledge to judge the prediction against a "Ground Truth" and then providing corrective feedback to make the perceptual model ("Visual Embeddings") more accurate or aligned with logical constraints.

* **Notable implication:** This model addresses a key challenge in AI: creating systems that can both perceive the world flexibly *and* reason about it reliably. The "Retrain" pathway may reflect a focus on **explainability and corrective learning**, where errors could be traced and corrected through logical analysis rather than solely through more data. The absence of numerical data or trends indicates that this is a conceptual framework, not a performance report.
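One way the symbolic side could trace an error, offered here as a swapped-in technique (a soft-logic rule penalty) rather than anything the diagram specifies, is to score each logical rule against the network's predicted probabilities. The sketch treats a rule "premise implies conclusion" as violated with probability P(premise) * (1 - P(conclusion)), assuming the two predictions are independent; the rule names, probabilities, and threshold are hypothetical. The flagged rules explain *which* constraint a prediction breaks, and the summed penalty is the kind of term that could be added to a training loss as a "Retrain" signal.

```python
from typing import Dict, List, Tuple

# Hypothetical rule base: each pair reads "premise implies conclusion".
RULES: List[Tuple[str, str]] = [
    ("penguin", "bird"),
    ("bird", "has_feathers"),
]


def implication_penalty(p_premise: float, p_conclusion: float) -> float:
    """Probability that 'premise -> conclusion' is violated, assuming the two
    predicted probabilities are independent: P(premise) * (1 - P(conclusion))."""
    return p_premise * (1.0 - p_conclusion)


def explain_violations(
    probs: Dict[str, float], threshold: float = 0.1
) -> List[Tuple[str, str, float]]:
    """Rules whose violation penalty exceeds `threshold`, worst first."""
    scored = [
        (premise, conclusion, implication_penalty(probs[premise], probs[conclusion]))
        for premise, conclusion in RULES
    ]
    flagged = [item for item in scored if item[2] > threshold]
    return sorted(flagged, key=lambda item: item[2], reverse=True)


def total_penalty(probs: Dict[str, float]) -> float:
    """Sum of all rule penalties; a candidate extra term for a training loss."""
    return sum(implication_penalty(probs[p], probs[c]) for p, c in RULES)


if __name__ == "__main__":
    # Illustrative network output: confident "penguin" but unconfident "bird".
    predicted = {"penguin": 0.9, "bird": 0.2, "has_feathers": 0.95}
    for premise, conclusion, penalty in explain_violations(predicted):
        print(f"{premise} -> {conclusion}: {penalty:.2f}")  # → penguin -> bird: 0.72
    print(f"total penalty: {total_penalty(predicted):.2f}")  # → total penalty: 0.73
```

Scoring rules individually, rather than returning a single pass/fail verdict, is what lets the output name the broken constraint, which is the traceability the interpretation above attributes to the symbolic side.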