## Diagram: Neuro-Symbolic System Architecture and Workflow

### Overview

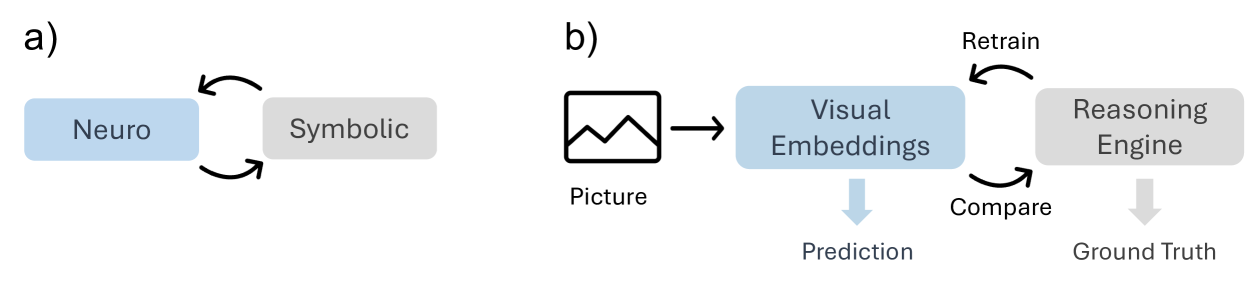

The image contains two related diagrams of a neuro-symbolic system. Diagram **a)** shows a bidirectional relationship between the "Neuro" and "Symbolic" components, while diagram **b)** outlines a workflow involving visual embeddings, prediction, reasoning, and retraining.

### Components

#### Diagram a)

- **Labels**:

  - Left box: "Neuro" (blue background)

  - Right box: "Symbolic" (gray background)

- **Arrows**:

  - Bidirectional arrows between "Neuro" and "Symbolic", indicating mutual interaction.

#### Diagram b)

- **Components**:

  1. **Input**: "Picture" (icon of a mountain range).

  2. **Processing**:

     - "Visual Embeddings" (blue box)

     - "Reasoning Engine" (gray box)

  3. **Output**:

     - "Prediction" (arrow pointing downward from "Visual Embeddings")

     - "Ground Truth" (arrow pointing downward from "Reasoning Engine")

  4. **Feedback Loop**:

     - "Retrain" (arrow looping from "Reasoning Engine" back to "Visual Embeddings")

     - "Compare" (arrow connecting "Prediction" and "Ground Truth" to the "Reasoning Engine")

### Detailed Analysis

- **Diagram a)**:

  - The bidirectional arrows suggest a hybrid system in which neural networks ("Neuro") and symbolic reasoning ("Symbolic") influence each other.

- **Diagram b)**:

  - **Flow**:

    1. A "Picture" is processed into "Visual Embeddings".

    2. The embeddings generate a "Prediction".

    3. The "Reasoning Engine" supplies the "Ground Truth" and compares it with the "Prediction".

    4. Discrepancies trigger retraining of the "Visual Embeddings" via the "Retrain" loop.
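
The flow in diagram **b)** can be sketched as a small loop. This is a toy illustration only, not the system shown in the figure: the class names `VisualEmbedder` and `ReasoningEngine`, the mean-intensity embedding, the threshold classifier, and the "majority of pixels are bright" rule are all assumptions made so the example can run on its own.

```python
class VisualEmbedder:
    """Hypothetical 'Neuro' side: a one-parameter threshold classifier."""

    def __init__(self, threshold: float = 1.0, step: float = 0.25):
        self.threshold = threshold
        self.step = step

    def embed(self, picture: list[float]) -> float:
        # Toy embedding (assumption): the mean pixel intensity.
        return sum(picture) / len(picture)

    def predict(self, embedding: float) -> int:
        return int(embedding >= self.threshold)

    def retrain(self, prediction: int, ground_truth: int) -> None:
        # Predicted 0 but truth is 1: threshold too high, so lower it (and vice versa).
        self.threshold += self.step * (prediction - ground_truth)


class ReasoningEngine:
    """Hypothetical 'Symbolic' side: derives a label from an explicit rule."""

    def ground_truth(self, picture: list[float]) -> int:
        # Rule (assumption): a picture is "bright" if most pixels exceed 0.5.
        bright = sum(1 for p in picture if p > 0.5)
        return int(2 * bright > len(picture))

    def compare(self, prediction: int, ground_truth: int) -> bool:
        # True when the prediction disagrees with the rule-derived label.
        return prediction != ground_truth


def run_workflow(pictures, embedder, engine, epochs=3):
    """Return the number of discrepancies found in each pass over the pictures."""
    history = []
    for _ in range(epochs):
        discrepancies = 0
        for picture in pictures:
            prediction = embedder.predict(embedder.embed(picture))  # steps 1-2
            truth = engine.ground_truth(picture)                    # step 3
            if engine.compare(prediction, truth):
                embedder.retrain(prediction, truth)                 # step 4
                discrepancies += 1
        history.append(discrepancies)
    return history


pictures = [[0.9, 0.8, 0.7], [0.1, 0.2, 0.3], [0.6, 0.9, 0.3]]
print(run_workflow(pictures, VisualEmbedder(), ReasoningEngine()))  # [2, 0, 0]
```

In this sketch the discrepancy count drops to zero once the threshold has been adjusted, which mirrors the iterative improvement the "Retrain" loop is meant to convey.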

### Key Observations

- The system integrates neural and symbolic approaches, emphasizing iterative improvement through feedback.

- The "Reasoning Engine" acts as a central hub for validation and retraining.

- No numerical data or quantitative trends are present; the focus is on architectural relationships.

### Interpretation

Together, the diagrams depict a neuro-symbolic AI framework in which neural networks handle perceptual tasks (e.g., producing visual embeddings), while symbolic reasoning checks logical consistency. The feedback loop between prediction and ground truth enables iterative retraining, which is intended to address limitations of purely data-driven or purely rule-based systems. The bidirectional interaction in diagram **a)** expresses the same idea at a higher level: the sub-symbolic (neural) and symbolic components inform each other.
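
To make the idea of a symbolic consistency check concrete, here is a minimal sketch. The labels, scores, cutoff, and implication rules are invented for illustration and do not come from the figure; `to_facts` and `violated_rules` are hypothetical helper names.

```python
def to_facts(scores: dict[str, float], cutoff: float = 0.5) -> set[str]:
    """Turn neural confidence scores into a set of asserted labels."""
    return {label for label, score in scores.items() if score >= cutoff}


def violated_rules(facts: set[str], rules: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return every rule 'premise implies conclusion' that the facts break."""
    return [
        (premise, conclusion)
        for premise, conclusion in rules
        if premise in facts and conclusion not in facts
    ]


# Hypothetical neural output and hand-written rules.
scores = {"mountain": 0.9, "outdoor": 0.3, "snow": 0.2}
rules = [("mountain", "outdoor"), ("snow", "mountain")]

print(violated_rules(to_facts(scores), rules))  # [('mountain', 'outdoor')]
```

A violation such as this one is the kind of discrepancy that could feed a retraining signal back to the neural component.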